Stop Reinventing the Solver Stack

How engineering teams in simulation and ML waste years rebuilding what already exists, and what to do instead

In engineering, there is a certain pride attached to building everything yourself. It feels serious, rigorous, and disciplined. In solver development, this instinct often appears as the desire to create an internal stack for nearly every layer: the numerical core, the optimization tooling, the model format, the training framework, the deployment runtime, and the surrounding workflow.

At first glance, that can look like technical leadership. In practice, it often becomes strategic drift. A company may spend years building a private version of infrastructure that already exists outside the company in a more mature, better-supported, and faster-evolving form. The result is not always advantage. Quite often, it is delay.

The real mistake: confusing control with differentiation

The deeper problem is that companies often confuse control with differentiation. Owning every layer does not automatically make a product stronger. It may simply make the organization responsible for every missing feature, every integration problem, every export path, every onboarding burden, and every future maintenance headache.

In solver development, this tradeoff matters a great deal. A team's limited engineering effort should usually go toward the parts the company uniquely understands: the physics, the numerics that matter for the target problem, the workflow fit for the user, the calibration logic, the real-time constraints, and the trustworthiness of the final product. Rebuilding the commodity tooling around those things often consumes energy without increasing the true moat of the product.

Discovery versus delivery

The distinction that matters is not simply internal versus external. It is discovery versus delivery. In research, the code may itself be the invention. If a team is developing a genuinely new optimizer, numerical method, or physical formulation, then building internally is not a distraction. It is the work.

But in applied engineering, where the goal is to solve a defined domain problem, the value usually lies elsewhere: in the data, the physics, the workflow, the validation strategy, and the deployment constraints. In that setting, rebuilding the training or deployment stack is often not innovation. It is diversion.

The same problem, two paths

To make the tradeoff more concrete, consider a simplified fault detection task for manufacturing equipment. The point is not that real systems are this small. It is that even in simplified form, the two paths create very different maintenance burdens.

Path A — External foundation

```python
import joblib
import pandas as pd
from sklearn.ensemble import IsolationForest

FEATURES = ["vibration", "temperature", "pressure"]

# Train
data = pd.read_csv("sensor_readings.csv")
model = IsolationForest(contamination=0.05)
model.fit(data[FEATURES])

# Save + deploy
joblib.dump(model, "fault.pkl")
model = joblib.load("fault.pkl")

# Pass a DataFrame so feature names match training; -1 = anomaly, 1 = normal
reading = pd.DataFrame([[0.82, 74.3, 1.4]], columns=FEATURES)
prediction = model.predict(reading)
```

A new hardware target, a different deployment environment, or an evolving data pipeline typically requires configuration rather than reengineering. The engineering effort stays focused on what matters: feature selection, thresholds, validation, and how the model reflects the physical system.
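One way to see what "configuration rather than reengineering" can mean is to move deployment-specific choices into data. The sketch below is illustrative rather than a prescribed design: `CONFIG`, `train`, and `detect` are hypothetical names, and the synthetic readings stand in for `sensor_readings.csv`. Only `IsolationForest` and the pandas calls are real library APIs.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import IsolationForest

# Hypothetical deployment config: adding a sensor channel or changing the
# expected anomaly rate is an edit here, not a change to the code below.
CONFIG = {
    "features": ["vibration", "temperature", "pressure"],
    "contamination": 0.05,
    "random_state": 0,
}


def train(config, data):
    """Fit an IsolationForest using only the settings in `config`."""
    model = IsolationForest(
        contamination=config["contamination"],
        random_state=config["random_state"],
    )
    # Selecting columns by name keeps training and inference aligned.
    model.fit(data[config["features"]])
    return model


def detect(model, config, readings):
    """Score raw readings; IsolationForest returns -1 for anomalies, 1 otherwise."""
    frame = pd.DataFrame(readings, columns=config["features"])
    return model.predict(frame)


# Synthetic stand-in for sensor_readings.csv (hypothetical operating point).
rng = np.random.default_rng(0)
data = pd.DataFrame(
    rng.normal([0.5, 70.0, 1.2], [0.05, 1.0, 0.05], size=(500, 3)),
    columns=CONFIG["features"],
)
model = train(CONFIG, data)
print(detect(model, CONFIG, [[0.5, 70.0, 1.2], [3.0, 120.0, 6.0]]))
```

Changing `features` or `contamination` leaves `train` and `detect` untouched; the library absorbs the rest.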

Path B — Full internal control

```python
import numpy as np
import json


class InternalFaultDetector:
    def __init__(self, threshold=2.5):
        self.threshold = threshold
        self.mean_ = None
        self.std_ = None

    def fit(self, X):
        self.mean_ = np.mean(X, axis=0)
        self.std_ = np.std(X, axis=0)

    def predict(self, X):
        z = np.abs((X - self.mean_) / (self.std_ + 1e-8))
        return (z.max(axis=1) > self.threshold).astype(int)


def save(model, path):
    payload = {
        "threshold": model.threshold,
        "mean": model.mean_.tolist(),
        "std": model.std_.tolist(),
    }
    with open(path, "w") as f:
        json.dump(payload, f)


def load(path):
    with open(path) as f:
        payload = json.load(f)
    model = InternalFaultDetector(payload["threshold"])
    model.mean_ = np.array(payload["mean"])
    model.std_ = np.array(payload["std"])
    # Backward compatibility gets harder as the format evolves
    return model


def run(model, readings, hardware="x86", sampling_rate=100):
    # Hardware support expanded over time; other targets still require work
    if hardware not in ["x86", "x86_64"]:
        raise NotImplementedError("Hardware target not supported")
    # Non-default sampling rates require extra validation across the pipeline
    if sampling_rate != 100:
        raise NotImplementedError("Non-standard sampling requires pipeline changes")
    # New sensor channels must be wired through manually
    X = np.array(readings)
    # Assumes training and deployment feature order remain perfectly aligned
    return model.predict(X)
```

A new hardware target, a change in sampling rate, or a modification in sensor inputs now requires coordinated changes across multiple parts of the system. What would have been a configuration change becomes an engineering task.

By the third deployment, this infrastructure often becomes the responsibility of a small number of engineers. The domain logic, the part competitors cannot easily replicate, receives less attention than the machinery built around it.

The point is not that this code is wrong. It is not. The point is that it is expensive to change, expensive to hand off, and competes for attention with the actual problem the team was trying to solve.
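To make the cost of Path B concrete, here is a sketch of just two pieces an internal format tends to acquire once a sensor channel is added or reordered: a version migration for previously saved files and a channel-alignment step. The version numbers, the `channels` field, and the assumed v1 channel order are all hypothetical; none of it comes from a real system.

```python
import numpy as np

CURRENT_VERSION = 2
# Hypothetical: v1 files stored no channel names, so their order is assumed.
V1_CHANNELS = ["vibration", "temperature", "pressure"]


def migrate_payload(payload):
    """Upgrade a saved detector payload (a dict) to the current format."""
    version = payload.get("format_version", 1)
    if version == 1:
        # Every past format needs a branch like this, kept alive indefinitely.
        payload = {**payload, "format_version": 2, "channels": list(V1_CHANNELS)}
        version = 2
    if version != CURRENT_VERSION:
        raise ValueError(f"Unsupported format version: {version}")
    return payload


def align_readings(payload, readings):
    """Order named readings to match the channel order used in training."""
    channels = payload["channels"]
    missing = [name for name in channels if name not in readings]
    if missing:
        raise KeyError(f"Missing sensor channels: {missing}")
    # One row per call; the detector's predict() expects a 2-D array.
    return np.array([[readings[name] for name in channels]], dtype=float)


old_file = {"threshold": 2.5, "mean": [0.5, 70.0, 1.2], "std": [0.05, 1.0, 0.05]}
payload = migrate_payload(old_file)
X = align_readings(payload, {"pressure": 1.4, "vibration": 0.82, "temperature": 74.3})
```

None of it is difficult. It is ordinary plumbing that someone on the team must now own, test, and document.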

When reinvention becomes a strategic trap

This is how many technical organizations get trapped. They may begin with a reasonable motivation: “our use case is special.” Sometimes that is true. But then the scope quietly expands. Instead of building the differentiated solver layer, the organization starts rebuilding generic training loops, basic optimization workflows, common export paths, standard deployment mechanics, and infrastructure that the outside world already provides.

At that point, the company is no longer protecting its edge. It is paying to recreate the commodity layer underneath its edge. Technical ambition then turns into long-term maintenance burden. The team begins maintaining plumbing instead of strengthening the actual product.

The trap is rarely incompetence. More often, it is technical discomfort. Engineers see the limitations of existing tools very clearly, and the instinct to replace them can feel rational, even rigorous. But a tool does not need to be perfect to be strategically useful. In many cases, an imperfect external foundation is still better than an ideal internal one that consumes the team’s attention for years.

AI democratization has changed the default

What makes this more important now is not just the existence of outside tools. It is the speed at which AI and ML infrastructure has become democratized.

Training frameworks, model ecosystems, deployment runtimes, and interoperability standards are no longer scarce capabilities. They are part of the shared technical environment. A team choosing to build its own stack is not simply filling a gap. It is often competing against an ecosystem that improves continuously, supported by a much larger community and resource base.

The shift is also cultural. Engineers encounter common tools in education, research, and prior work. Customers and stakeholders are often familiar with them as well. Familiarity creates trust. A system built on recognizable foundations is easier to understand, evaluate, and adopt than one built entirely behind internal abstractions.

External tools now begin with a credibility advantage that internal stacks must work much harder to earn.

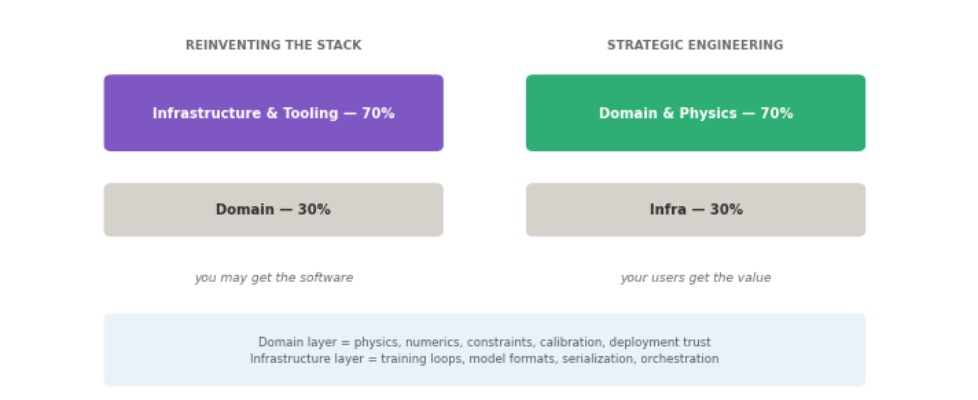

problem-specific logic, while training, deployment, and tooling layers are increasingly standardized.

Source: Author’s illustration

Ecosystem gravity and organizational cost

A team that isolates itself from widely adopted ecosystems is not merely choosing control. It is also accepting a set of organizational costs that are often underestimated.

Standard tools do more than reduce implementation time. They reduce organizational fragility. They come with a labor market, documentation, examples, and shared understanding. An internal stack comes with none of those by default. Its knowledge is local, and its continuity depends on the people who built it.

If the continued operation of a critical internal system depends on the uninterrupted availability of one or two engineers, the organization does not own the infrastructure in any robust sense. It is borrowing continuity from specific individuals.

This is the hidden cost of reinvention. It is not just technical. It is structural.

Trust still matters, but it changes the answer less than people think

A reasonable counterargument is that internal tools can be easier to trust inside an organization, especially in regulated or high-consequence domains. That concern is real. But it does not require rebuilding the entire stack.

In many cases, the stronger approach is to build a thin internal trust layer on top of mature external infrastructure. The external layer provides speed, familiarity, and leverage. The internal layer provides validation, traceability, domain constraints, and safeguards. In practice, that internal trust layer may include domain-specific validation, conservation checks, safety guardrails, regression tests, and workflow-specific acceptance criteria. The foundation does not need to be built internally for the system to be trusted internally.
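A minimal sketch of such a trust layer, assuming the external foundation is scikit-learn's `IsolationForest` (with the same `contamination=0.05` as Path A) and the internal layer adds only input guardrails. The `LIMITS` values and the `TrustedDetector` wrapper are hypothetical; real limits would come from the equipment specification and the team's validation strategy.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Hypothetical physical operating limits (min, max) per sensor channel.
LIMITS = {
    "vibration": (0.0, 5.0),
    "temperature": (-20.0, 150.0),
    "pressure": (0.0, 10.0),
}


class TrustedDetector:
    """Thin internal trust layer wrapped around an external model."""

    def __init__(self, model, limits):
        self.model = model    # external foundation: any object with predict()
        self.limits = limits  # internal knowledge: physically valid ranges

    def violations(self, X):
        """List (row, channel) pairs that are non-finite or out of range."""
        found = []
        for j, (name, (lo, hi)) in enumerate(self.limits.items()):
            col = X[:, j]
            bad = ~np.isfinite(col) | (col < lo) | (col > hi)
            found += [(int(i), name) for i in np.flatnonzero(bad)]
        return found

    def predict(self, X):
        X = np.asarray(X, dtype=float)
        problems = self.violations(X)
        if problems:
            # Refuse to score impossible readings rather than return a guess.
            raise ValueError(f"Readings failed guardrails: {problems}")
        return self.model.predict(X)


rng = np.random.default_rng(1)
history = rng.normal([0.5, 70.0, 1.2], [0.05, 1.0, 0.05], size=(500, 3))
forest = IsolationForest(contamination=0.05, random_state=0).fit(history)
detector = TrustedDetector(forest, LIMITS)
print(detector.predict([[0.5, 70.0, 1.2]]))
```

The wrapper never reimplements detection; it only encodes what the team knows about the physical system. Regression tests and acceptance criteria would sit alongside it in the same spirit.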

The modern answer is not full external dependence or full internal reinvention. It is external foundation with internal trust.

Why some teams moved faster

Some teams move faster not because they do more engineering, but because they choose where engineering effort actually compounds.

Stripe focused its effort on the layer users experience, rather than rebuilding underlying infrastructure. That choice allowed it to concentrate engineering energy on developer experience, reliability, and the payment workflows customers actually valued, rather than on commodity layers that would not differentiate the product.

A similar pattern appeared at larger scale in companies like Netflix. Rather than treating data-center ownership itself as the source of advantage, the company focused on the service, the platform, and the user experience. The leverage came not from owning every layer underneath, but from investing deeply in the layer users actually felt.

The same pattern appears in modern ML and simulation. The teams that move fastest are often those that use existing ecosystems effectively, then concentrate their effort on domain-specific value.

What this means in solver development

In solver development, the valuable layer is rarely the surrounding infrastructure. It is the domain logic: the equations, the constraints, the numerical behavior, and the integration into real workflows.

That is where expertise compounds and differentiation emerges. Rebuilding the general-purpose tooling underneath it rarely strengthens that advantage.
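A small illustration of that split, assuming a hypothetical undamped mass-spring system: SciPy's `solve_ivp` supplies the mature, general-purpose integrator, while the equations and the energy-conservation acceptance check are the domain-specific parts a team would own. The constants and the `1e-6` tolerance are illustrative choices.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Domain layer: the governing equations (hypothetical stiffness and mass).
K, M = 4.0, 1.0


def rhs(t, y):
    """Undamped oscillator: dx/dt = v, dv/dt = -(K/M) x."""
    x, v = y
    return [v, -K / M * x]


def energy(y):
    """Total mechanical energy; works on a single state or a (2, n) trajectory."""
    x, v = y
    return 0.5 * M * v**2 + 0.5 * K * x**2


# Commodity layer: a well-tested, general-purpose ODE integrator.
sol = solve_ivp(rhs, (0.0, 20.0), [1.0, 0.0], method="DOP853", rtol=1e-10, atol=1e-12)

# Domain layer again: an acceptance check on the physics, not on the code.
e = energy(sol.y)
relative_drift = np.max(np.abs(e - e[0])) / e[0]
if not sol.success or relative_drift > 1e-6:
    raise RuntimeError(f"Energy drift {relative_drift:.2e} exceeds tolerance")
print(f"max relative energy drift: {relative_drift:.2e}")
```

Trying another integrator is a change to the `method` argument; the acceptance check, which encodes what the system must physically satisfy, stays the same.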

Three questions before you build internally

- Does this layer create domain-specific value, or is it commodity infrastructure?

- Can an existing tool solve most of the problem with reasonable adaptation?

- Can this system be maintained and understood beyond the individuals who created it?

If the answer to the first question is no, and the answers to the other two point toward avoidable cost (an existing tool would do) or fragility (only its authors could maintain it), reuse is usually the better path.

Final thought

Reinventing infrastructure can feel like technical rigor. But more often, it is a misallocation of engineering effort. The strongest teams are not the ones that build the most. They are the ones that understand where building creates leverage and where it only creates maintenance.

Further reading

- Choose Boring Technology — Dan McKinley. A widely read case for using well-understood tools when novelty does not create user-facing value.

- The Bitter Lesson — Rich Sutton. A short essay on why general, scalable methods often outperform more hand-crafted approaches over time.

- Research Debt — Chris Olah and Shan Carter. A thoughtful essay on the long-term cost of complexity, poor reuse, and weak knowledge transfer in technical work.

Stop Reinventing the Solver Stack was originally published in Towards AI on Medium.