When are Neural Networks more powerful than Neural Tangent Kernels?

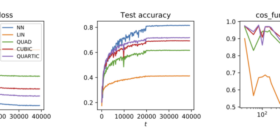

The empirical success of deep learning has posed significant challenges to machine learning theory: Why can gradient descent efficiently train neural networks despite the highly non-convex optimization landscape? Why do over-parametrized networks generalize well? The recently proposed Neural Tangent Kernel (NTK) theory offers a powerful framework for answering these questions, but it still has its limitations. In this blog post, we explore how to analyze wide neural networks beyond the NTK theory, based on our recent […]