Training Qwen2.5-0.5B-Instruct on Reddit posts summarization tasks with length constraint on my 3xMac Minis with GRPO – evals update


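So, I trained two variants of this task: one with a length penalty only (the baseline), and one with a length penalty plus ROUGE-L as the quality reward.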

I ran an LLM-as-a-Judge eval to check summarization quality using DeepEval's tools. Those are:

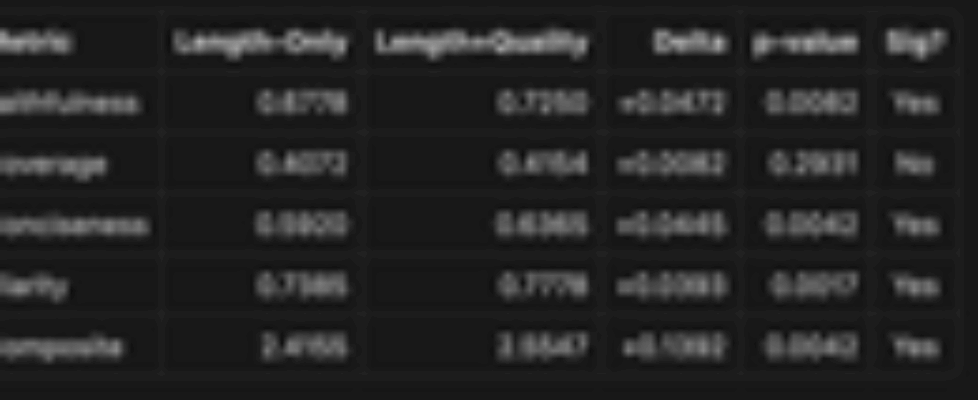

The results are as follows:

The model trained with a length penalty plus ROUGE-L as the quality reward is significantly better than the baseline (length penalty only) on the final composite score, with a p-value of 0.0042 from a one-sided t-test over a total of 5 rounds of evals for each model. The evals were performed on a 200-example test sample of the smoltldr dataset.
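For anyone who wants to reproduce this kind of comparison, here is a minimal sketch of a one-sided two-sample t-test using only the standard library. It assumes Welch's unequal-variance form (the post does not say which t-test variant was used), and the score lists are made-up placeholders, not my actual eval numbers.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Return (t, df) for Welch's t-test of the hypothesis mean(a) > mean(b)."""
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    # Welch-Satterthwaite approximation of the degrees of freedom
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    c = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return c * (1 + x * x / df) ** (-(df + 1) / 2)


def one_sided_p(t, df, steps=20000):
    """P(T >= t), via Simpson's rule on [0, |t|] and the symmetry of the density."""
    upper = abs(t)
    h = upper / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    tail = 0.5 - total * h / 3  # upper-tail mass beyond |t|
    return tail if t >= 0 else 1 - tail


# Placeholder composite scores, one per eval round (hypothetical values).
rouge_runs = [0.71, 0.73, 0.70, 0.74, 0.72]     # length penalty + ROUGE-L
baseline_runs = [0.66, 0.69, 0.67, 0.70, 0.68]  # length penalty only

t_stat, dof = welch_t(rouge_runs, baseline_runs)
p_value = one_sided_p(t_stat, dof)
print(f"t = {t_stat:.3f}, df = {dof:.1f}, one-sided p = {p_value:.4f}")
```

If SciPy is available, `scipy.stats.ttest_ind(rouge_runs, baseline_runs, equal_var=False, alternative="greater")` should give the same test without the hand-rolled integration.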

Well, LLM-as-a-Judge is meant to let any LLM of your choice judge outputs that can't easily be reduced to a definitive reward because of their variance or subjective nature, like summarization! Such rewards vary from person to person, so we employ an LLM to act like a person, give rewards multiple times, and aggregate the results, which is cheap compared to human labelers! So, I used DeepEval's amazing tools to create an eval system to evaluate my models' summaries on the aforementioned four factors.

The composite score is the mean of the above scores.
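To make the aggregation concrete, here is a rough sketch of the idea: ask a judge for a score several times per factor, average those, then take the mean across factors as the composite. The `judge` callable, the `factor_1`..`factor_4` names, and the per-summary rounds are all hypothetical stand-ins for illustration; this is not DeepEval's API, and the stub judge just replays fixed numbers instead of calling an LLM.

```python
from itertools import cycle
from statistics import mean
from typing import Callable, Dict, List, Tuple

# Hypothetical judge signature: (factor, post, summary) -> score in [0, 1]
Judge = Callable[[str, str, str], float]


def judge_factor(judge: Judge, factor: str, post: str, summary: str, rounds: int = 5) -> float:
    """Query the judge several times for one factor and average the scores."""
    return mean([judge(factor, post, summary) for _ in range(rounds)])


def composite_score(factor_scores: Dict[str, float]) -> float:
    """Composite score: the plain mean of the per-factor scores."""
    return mean(list(factor_scores.values()))


def evaluate(judge: Judge, factors: List[str], post: str, summary: str,
             rounds: int = 5) -> Tuple[Dict[str, float], float]:
    """Return the averaged per-factor scores and their composite."""
    per_factor = {f: judge_factor(judge, f, post, summary, rounds) for f in factors}
    return per_factor, composite_score(per_factor)


def make_stub_judge(scores: List[float]) -> Judge:
    """Stand-in for a real LLM judge: replays the given scores in a loop."""
    stream = cycle(scores)
    return lambda factor, post, summary: next(stream)


# Placeholder factor names; the real four factors are not reproduced here.
FACTORS = ["factor_1", "factor_2", "factor_3", "factor_4"]

per_factor, composite = evaluate(
    make_stub_judge([0.6, 0.8]), FACTORS, "some reddit post", "some summary", rounds=2
)
print(per_factor, composite)
```

Swapping `make_stub_judge` for a function that actually calls your judge model is the only change needed to use this for real.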

submitted by /u/East-Muffin-6472