The Transparency Rule — Make Clarity the Default (AISAFE 3)

“If you can’t explain it to a child, it shouldn’t run a nuclear plant or an economy”

By Michal Florek, October 2025

Executive Summary

Artificial intelligence now makes decisions that shape economies, influence healthcare, and guide governance. Yet, too often, these systems operate as black boxes — their reasoning hidden even from their creators.

The Transparency Rule — Framework 3 of the AI SAFE© Standards — establishes that every AI system must be explainable by design. If a system cannot articulate its logic in human terms, it should not govern critical functions or public systems.

This white paper introduces the Transparency Rule as a universal standard for explainable, auditable, and trustworthy AI. It defines:

- A measurable Clarity Ladder for transparency maturity

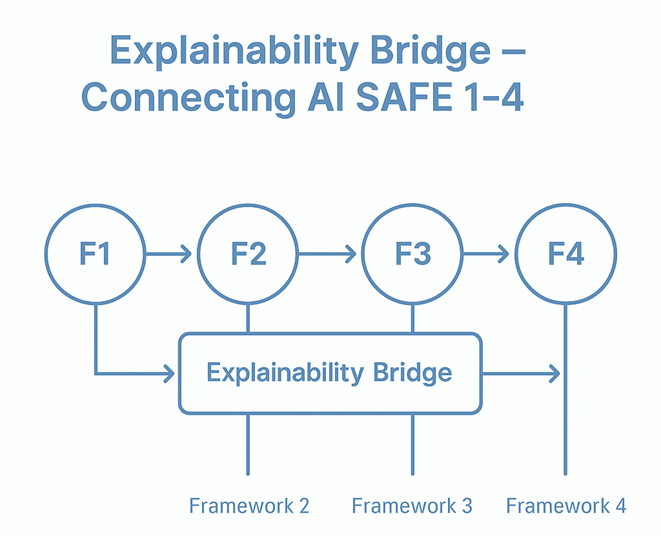

- Integration across the AI SAFE© 1–4 frameworks

- Policy and certification models such as the AI SAFE© T-Mark

- Sector-specific implementation guidance and global best practices

“Transparency is the price of trust.”

1. Introduction — The Age of Hidden Decisions

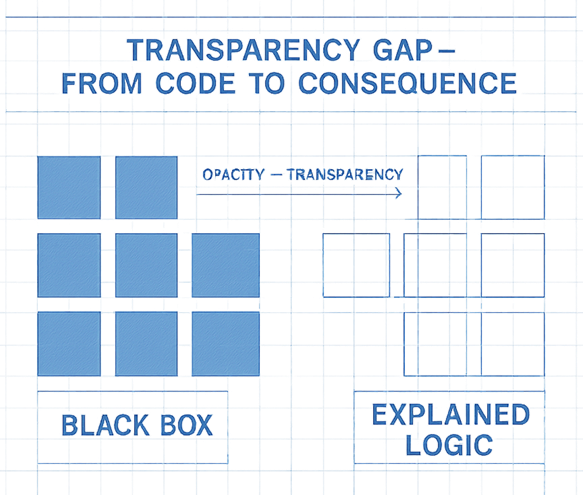

Artificial intelligence now shapes a growing share of consequential decisions in human life, yet its reasoning often remains invisible. This gap between algorithmic action and human understanding is the Transparency Gap.

Public confidence erodes when systems decide without explaining why. From loan approvals to hospital triage, opacity breeds suspicion and weakens the social contract.

The Transparency Rule asserts that:

AI that cannot explain itself cannot be safely governed.

Transparency must not be an afterthought but a foundational design requirement, embedded as deeply as accuracy or performance.

2. The Problem — Black Boxes in Command

The central flaw of contemporary AI is not a lack of intelligence but a lack of intelligibility.

Deep learning models have evolved into opaque architectures with billions of parameters: powerful, but very difficult for humans to interpret.

Consequences of opacity:

- Accountability vacuum — No clear line of responsibility when harm occurs.

- Regulatory paralysis — Policymakers cannot audit or verify.

- Erosion of public trust — “Invisible logic” feels manipulative.

- Scientific stagnation — Without transparency, reproducibility collapses.

Examples:

- Financial “flash crashes” driven by trading algorithms whose decisions proved hard to trace.

- Diagnostic AI systems recommending treatment paths without a stated rationale.

- Predictive policing systems criticized for bias yet shielded from review.

An opaque system is an unsafe system.

3. The Framework — The Transparency Rule

The Core Principle:

“Every AI system must be inherently interpretable — by design, not as an afterthought.”

Sub-Principles

- Explainability by Design — Interpretability built into architecture.

- Accessible Logic — Explanations must be human-understandable.

- Auditable Trail — Trace every output back to its data source.

- Tiered Visibility — Different levels of openness for developers, regulators, and users.

- Ethical Mirrors — Models must self-report bias, uncertainty, and ethical boundaries.

These five principles form the Transparency Stack — the structural path from data to accountability.

Transparency converts AI from a sealed engine into a glass system: auditable, explainable, and worthy of trust.
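As a minimal sketch of the Auditable Trail principle, the Python example below keeps an append-only log in which every output is stored with its model version and the identifiers of the data sources it drew on. Each entry also carries the SHA-256 hash of the previous entry, so editing any earlier record breaks the chain. The class and method names (`AuditLog`, `record`, `verify`, `trace`) and the sample identifiers are hypothetical illustrations, not part of the AI SAFE© Standards.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class AuditLog:
    """Hypothetical append-only log linking each AI output to its data sources.

    Illustrative sketch only: names and fields are not an AI SAFE(c) API.
    """
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes identically.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, model_version: str, source_ids: list, output: str) -> dict:
        # Each entry stores the hash of the previous entry, forming a chain.
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "index": len(self.entries),
            "model_version": model_version,
            "source_ids": list(source_ids),
            "output": output,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash; altering any stored entry breaks the chain.
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def trace(self, index: int) -> list:
        # The auditor's question: which data sources fed this output?
        return self.entries[index]["source_ids"]


if __name__ == "__main__":
    # Hypothetical example values.
    log = AuditLog()
    log.record("credit-model-v2", ["applicant_form:123", "bureau_report:456"], "declined")
    log.record("credit-model-v2", ["applicant_form:124"], "approved")
    print(log.verify())   # True
    print(log.trace(0))   # ['applicant_form:123', 'bureau_report:456']
    log.entries[0]["output"] = "approved"  # simulate tampering with a past decision
    print(log.verify())   # False
```

A hash chain only reveals tampering if the latest hash is also held somewhere the log's operator cannot rewrite, for example by a regulator or registry; otherwise the whole chain could be regenerated. That anchoring step is left out of this sketch.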

4. Implementation Path — The Clarity Ladder

The Clarity Ladder provides a measurable scale of transparency maturity, allowing regulators and organizations to classify AI systems.

The AI SAFE© Transparency Certificate will certify compliance with levels 3–5.

Each ascent improves accountability and public trust.

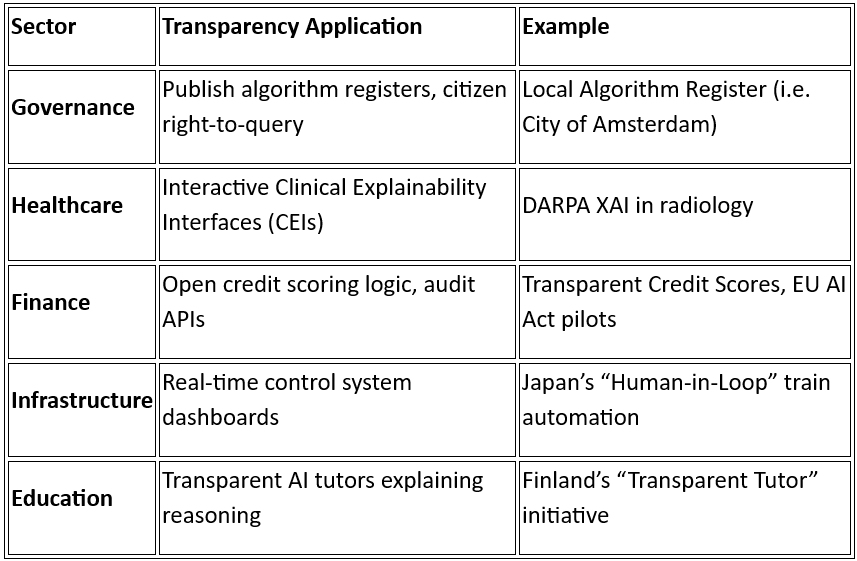

5. Applications Across Sectors

Transparency manifests differently across sectors. The Transparency Rule applies universally, while the form an explanation takes is adapted to each sector's context.

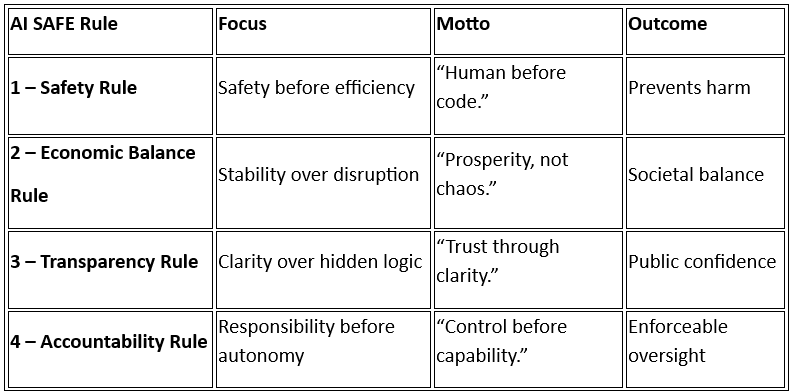

6. Integration with AI SAFE© Standards

The Transparency Rule (Framework 3) is one of four interlocking rules that make up the AI SAFE© architecture.

Transparency acts as the connective Explainability Bridge: it validates Safety, interprets Economic Balance, and enables Accountability.

7. Policy Recommendations

For Governments

- Legislate explainability into AI law.

- Establish a National AI Transparency Registry.

- Mandate T-Mark certification for high-risk systems.

- Implement tiered disclosure (regulator, industry, public).

- Fund open explainability frameworks for SMEs.
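Tiered disclosure can be read as an access policy: each audience sees everything the tiers below it see, plus more. The sketch below assumes the three audiences named above (public, industry, regulator); the disclosure field names and their tier assignments are hypothetical examples, not requirements defined by the Transparency Rule.

```python
from enum import IntEnum


class Tier(IntEnum):
    # Higher values see more; each tier inherits everything visible to lower tiers.
    PUBLIC = 1
    INDUSTRY = 2
    REGULATOR = 3


# Hypothetical mapping of disclosure fields to the minimum tier allowed to see them.
FIELD_TIERS = {
    "purpose": Tier.PUBLIC,
    "decision_explanation": Tier.PUBLIC,
    "known_limitations": Tier.PUBLIC,
    "evaluation_metrics": Tier.INDUSTRY,
    "training_data_summary": Tier.INDUSTRY,
    "model_internals": Tier.REGULATOR,
    "raw_audit_log": Tier.REGULATOR,
}


def disclose(record: dict, audience: Tier) -> dict:
    """Return only the fields the given audience is entitled to see."""
    return {
        name: value
        for name, value in record.items()
        # Fields without a tier assignment default to regulator-only (fail closed).
        if FIELD_TIERS.get(name, Tier.REGULATOR) <= audience
    }


if __name__ == "__main__":
    # Hypothetical disclosure record for a credit-scoring system.
    record = {
        "purpose": "Credit risk scoring",
        "decision_explanation": "Debt-to-income ratio above the approval threshold",
        "evaluation_metrics": "Held-out accuracy report",
        "model_internals": "Feature attribution report",
    }
    print(sorted(disclose(record, Tier.PUBLIC)))    # ['decision_explanation', 'purpose']
    print(sorted(disclose(record, Tier.INDUSTRY)))  # ['decision_explanation', 'evaluation_metrics', 'purpose']
```

Unlisted fields default to regulator-only, so a new field is never published by accident before someone assigns it a tier.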

For Industry

- Appoint Chief Transparency Officers (CTOs).

- Build transparency-by-design pipelines.

- Publish Model Cards documenting training data, limitations, and known biases.

- Develop Explainability APIs for regulators and users.
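Keeping Model Cards machine-readable lets the same document serve users, auditors, and a transparency registry. The sketch below assumes a simple Python dataclass. The three disclosure sections mirror the recommendation above (training data, limitations, biases), while the other fields, the `ModelCard` name, and the completeness check are illustrative assumptions rather than a published schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Hypothetical machine-readable Model Card (illustrative fields only)."""
    model_name: str
    version: str
    intended_use: str
    training_data: list = field(default_factory=list)  # dataset names or descriptions
    limitations: list = field(default_factory=list)    # known failure modes
    known_biases: list = field(default_factory=list)   # documented disparities

    def missing_sections(self) -> list:
        # Flag required disclosure sections that were left empty.
        required = ("training_data", "limitations", "known_biases")
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        # Stable, human-readable serialization for publication or a registry.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical example values.
    card = ModelCard(
        model_name="triage-assistant",
        version="1.0",
        intended_use="Prioritizing non-emergency hospital referrals",
        training_data=["Anonymized referral records (hypothetical)"],
        limitations=["Not validated for pediatric patients"],
    )
    print(card.missing_sections())  # ['known_biases']
    print(card.to_json())
```

A completeness check like `missing_sections` gives a certifier a mechanical first test: a card with an empty bias section is incomplete by construction, whatever else it contains.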

For Academia & Research

- Prioritize Explainable AI (XAI) research.

- Embed explainability into computer science curricula.

- Create reproducibility benchmarks for transparency.

For Global Collaboration

- Establish ICAT (International Council for Algorithmic Transparency) under OECD/UNESCO auspices.

- Maintain a Global Transparency Register.

8. Case Studies — Transparency in Practice

DeepMind Health (UK) — Exposed flaws in opaque data handling; led to stronger NHS audit rules.

OpenAI Model Cards — Example of evolving explainability in large models.

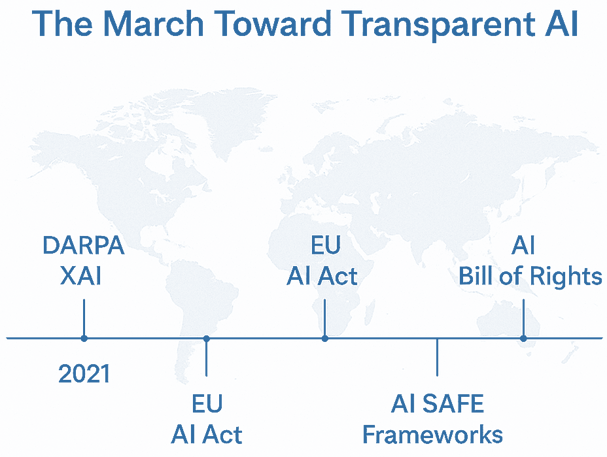

EU AI Act (2024) — Legal codification of transparency duties.

Japan Civic AI — National transparency as a cultural value.

OECD AI Principles — International convergence of trust standards.

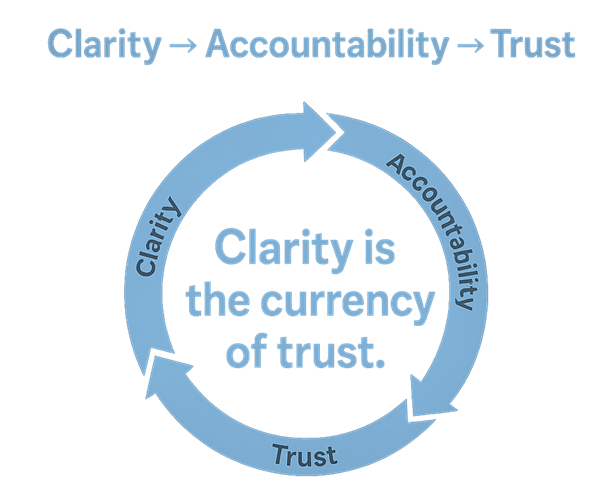

9. Conclusion — Clarity as the Currency of Trust

The era of black-box intelligence is ending.

Progress will now be measured not by how powerful an AI is, but by how understandable it remains.

Transparency transforms AI from a mysterious oracle into a civic tool.

Systems that can explain themselves will earn the legitimacy to operate; those that cannot should remain in the lab.

“Clarity is the currency of trust — and without trust, there is no safe intelligence.”

10. References

- European Commission — AI Act Overview https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- EU AI Act Full Text (2024) https://artificialintelligenceact.eu/the-act/

- IEEE 7001 — Transparency of Autonomous Systems https://standards.ieee.org/ieee/7001/6929/

- OECD AI Principles https://oecd.ai/en/ai-principles

- DARPA — XAI: Explainable Artificial Intelligence https://www.darpa.mil/research/programs/explainable-artificial-intelligence

- EDPS Tech Dispatch on Explainable Artificial Intelligence (2023) https://www.edps.europa.eu/system/files/2023-11/23-11-16_techdispatch_xai_en.pdf

- DeepMind NHS deal ruled illegal https://www.businessinsider.com/ico-deepmind-first-nhs-deal-illegal-2017-6

- OpenAI all available models https://platform.openai.com/docs/models

- City of Amsterdam — Algorithm Register (term: “artificial intelligence”) https://algoritmes.overheid.nl/en/algoritme

- Future AI Classroom in Finland | European School Education Platform https://school-education.ec.europa.eu/en/learn/courses/future-ai-classroom-finland

- JR East unveils plans for driverless shinkansen by mid-2030s — The Japan Times https://www.japantimes.co.jp/business/2024/09/11/tech/jr-east-shinkansen-driverless/

- G7 AI transparency reporting: Ten insights for AI governance and risk management https://oecd.ai/en/wonk/g7-haip-report-insights-for-ai-governance-and-risk-management

- Guidance for Public and Private Entities on Transparency and Personal Data Protection for Responsible Artificial Intelligence — OECD.AI https://oecd.ai/en/dashboards/policy-initiatives/guidance-for-public-and-private-entities-on-transparency-and-personal-data-protection-for-responsible-artificial-intelligence-2652

- OECD finds growing transparency efforts among leading AI developers https://www.oecd.org/en/about/news/press-releases/2025/09/oecd-finds-growing-transparency-efforts-among-leading-ai-developers.html

The Transparency Rule — Make Clarity the Default (AISAFE 3) was originally published in Towards AI on Medium.