The Responsibility Rule — Why “the Algorithm Did it” is Unacceptable (AI SAFE© 4)

By Michal Florek, October 2025 (Updated May 2026)

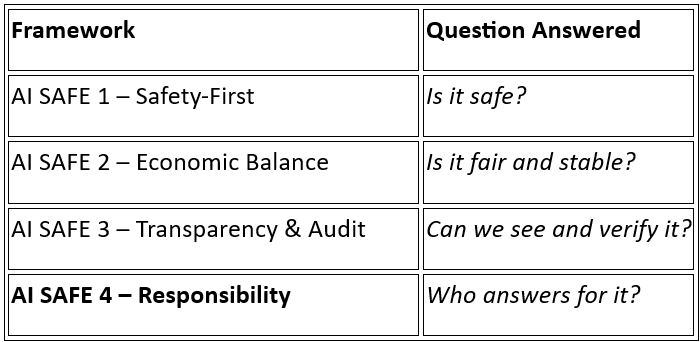

The Illusion of Blame-Free AI: why “the algorithm did it” is unacceptable. It is also why following AI SAFE© 1 (Safety-First Rule), AI SAFE© 2 (Economic Balance Rule), and AI SAFE© 3 (Transparency & Audit Rule) is key.

Executive Summary

Artificial intelligence cannot bear moral or legal responsibility. Yet in public discourse and corporate governance, we increasingly hear: “the algorithm did it.”

Artificial Intelligence is often described as a black box that “decides.” The Responsibility Rule asserts the opposite: AI is never autonomous in a moral sense.

AI is like a power tool — if it hurts someone, the manufacturer and user are responsible, not “the hammer.”

This white paper dismantles that illusion. It defines the Responsibility Rule (AI SAFE© 4) — a framework ensuring every AI system remains anchored to identifiable human accountability. It proposes a global Human Accountability Certification (HAC) system, integrates responsibility into AI design and deployment lifecycles, and closes the ethical gap between automation and liability.

Key assertions:

- AI amplifies human choices; it does not replace them.

- Lack of clarity creates liability. Explainability and accountability must coexist.

- Responsibility must be certified, not implied.

- Liability cannot be delegated to code.

- Public trust requires visible ownership of outcomes.

The Rule transforms ethics into infrastructure: a verifiable, continuous chain of accountability from design to oversight.

Context & Problem Definition

Automation has long diffused blame. From factory accidents to autopilot crashes, each era’s innovation produced its own “nobody’s fault.” In the age of AI, this diffusion becomes institutional, which destroys trust.

“The algorithm made an error” is not a statement of fact — it is an act of moral disappearance.

With AI automation affecting many areas of life at once, can we really afford a ‘no blame’ approach?

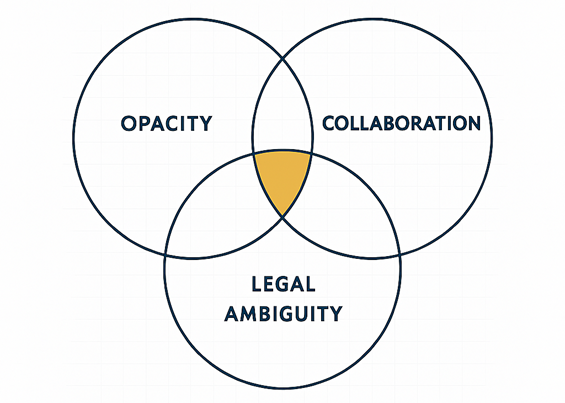

The Responsibility Gap

The responsibility gap emerges from three forces: lack of clarity, complex collaboration, and legal ambiguity. When combined, they create an ethical vacuum where harm is measurable, yet no actor is accountable.

Consequences

- Economic: Moral hazard and unchecked automation.

- Psychological: Erosion of trust in systems.

- Regulatory: Paralysis due to fragmented ownership.

- Cultural: Civic resignation — “machines know better.”

Persistence of the “Blame-Free” Mindset

Marketing, legal convenience, and regulatory lag promote the myth of neutral algorithms. The Responsibility Rule challenges this by demanding traceable human ownership for every algorithmic act.

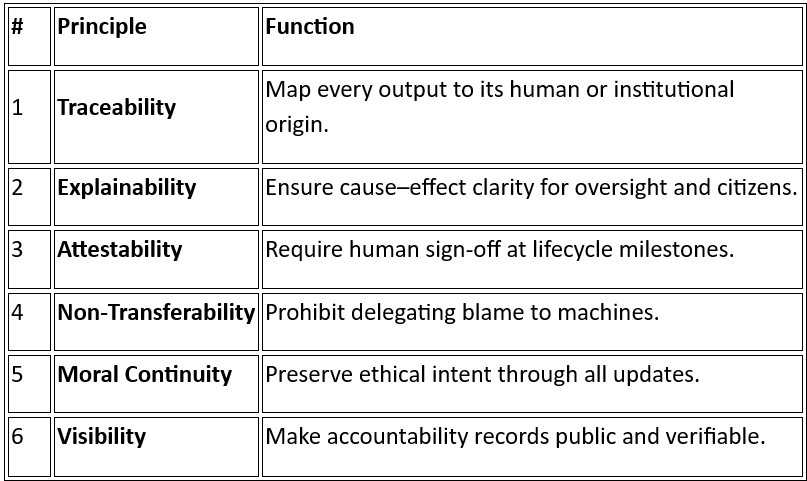

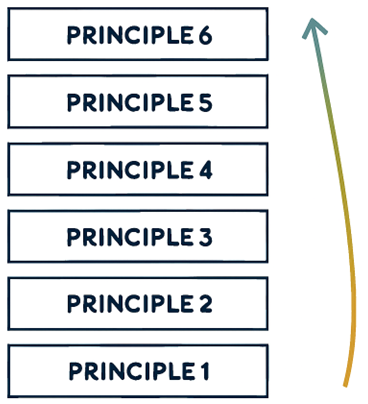

The Responsibility Rule — Core Principles

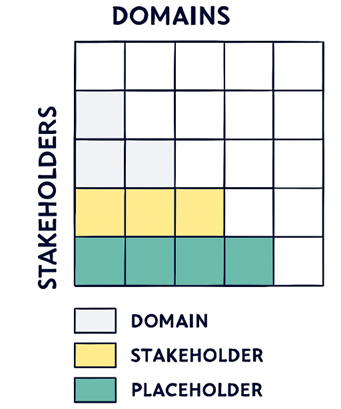

Every AI system must be traceable to an accountable actor. The Rule is structured around six enforceable principles:

Accountability without explainability is theatre (mere performance), whilst explainability without accountability is noise.

These principles are the architecture of trust. They turn ethics into system design.

Governance Model: The Accountability Chain

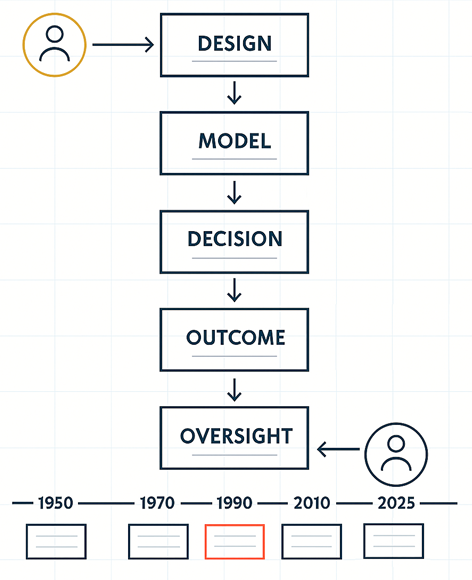

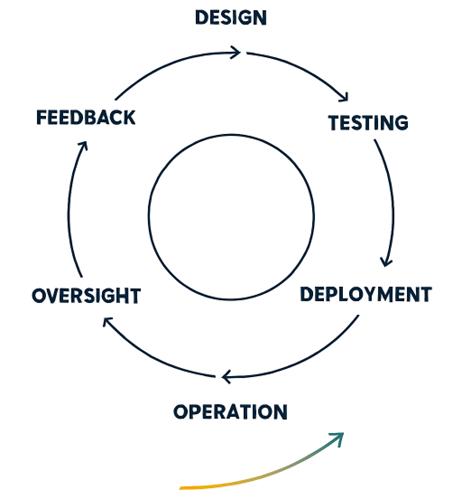

Lifecycle Stages

- Design Accountability — Ethical impact, data provenance, bias prevention.

- Testing Accountability — Transparent test logs and independent review.

- Deployment Accountability — Public risk disclosure and human-in-loop guarantees.

- Operational Accountability — Continuous monitoring and incident reporting.

- Oversight & Enforcement — Audits, sanctions, and public registries.

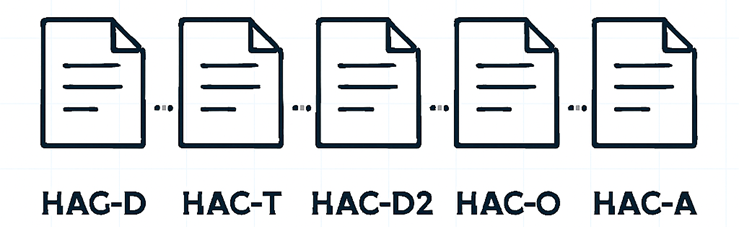

Visual 7: HAC Chain Layout

Each stage issues a Human Accountability Certificate (HAC):

- HAC-D (Design)

- HAC-T (Testing)

- HAC-D2 (Deployment)

- HAC-O (Operation)

- HAC-A (Audit)

Together, they form a closed-loop chain of traceable responsibility.

The loop transforms accountability from paperwork into living infrastructure.
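As a rough illustration of how such a chain could be made machine-checkable, the sketch below links one certificate per stage by hash, so that a missing stage, an unnamed owner, or a later alteration becomes detectable. The stage codes come from the list above; everything else (the `Certificate` fields, `issue_chain`, `chain_problems`, the `GENESIS` placeholder) is a hypothetical naming choice rather than part of any HAC specification, and feeding the audit back into the next design cycle is not modelled here.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

# Stage codes as listed above; their ordering here is an assumption.
STAGES = ["HAC-D", "HAC-T", "HAC-D2", "HAC-O", "HAC-A"]
GENESIS = "0" * 64  # placeholder "previous hash" for the first certificate


@dataclass(frozen=True)
class Certificate:
    stage: str        # one of STAGES
    system_id: str    # hypothetical identifier of the AI system
    accountable: str  # named person or legal entity answering for this stage
    prev_hash: str    # digest of the previous certificate in the chain

    def digest(self) -> str:
        # Hash a stable JSON encoding of all fields.
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


def issue_chain(system_id: str, owners: dict) -> list:
    """Issue one certificate per stage, each pointing at the previous one."""
    chain, prev = [], GENESIS
    for stage in STAGES:
        cert = Certificate(stage, system_id, owners[stage], prev)
        chain.append(cert)
        prev = cert.digest()
    return chain


def chain_problems(chain: list) -> list:
    """Return human-readable problems; an empty list means the chain holds."""
    problems = []
    if [c.stage for c in chain] != STAGES:
        problems.append("stages missing or out of order")
    prev = GENESIS
    for cert in chain:
        if not cert.accountable.strip():
            problems.append(f"{cert.stage}: no accountable person named")
        if cert.prev_hash != prev:
            problems.append(f"{cert.stage}: link to previous certificate is broken")
        prev = cert.digest()
    return problems
```

Replacing the named person on the testing certificate after the fact changes that certificate's digest, so `chain_problems` would report a broken link at `HAC-D2`. A real scheme would presumably add signatures and timestamps on top of this linkage.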

Framework Integration — The Four Pillars of AI Trust

Each rule reinforces the others. Responsibility is the keystone — the lock that secures the entire AI SAFE© architecture.

Policy & Industry Recommendations

Human Accountability Certification (HAC)

A global regulatory framework aimed at verifying that:

- Every AI system has a named accountable owner.

- Lifecycle attestations are digitally signed and auditable.

- Registries connect systems, actors, and compliance status.
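One possible reading of "digitally signed and auditable" is that each attestation carries a tag that can be rechecked later. The sketch below uses HMAC-SHA256 from Python's standard library purely as a stand-in: HMAC relies on a shared secret, whereas a real registry would more likely use asymmetric signatures so that verifiers never hold the signing key. The field names, the example owner, and the key are all invented for illustration.

```python
import hashlib
import hmac
import json


def canonical(attestation: dict) -> bytes:
    # Stable byte encoding so the same attestation always yields the same tag.
    return json.dumps(attestation, sort_keys=True, separators=(",", ":")).encode()


def sign(attestation: dict, key: bytes) -> str:
    # Assumption: HMAC stands in for a real digital signature scheme.
    return hmac.new(key, canonical(attestation), hashlib.sha256).hexdigest()


def verify(attestation: dict, tag: str, key: bytes) -> bool:
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(sign(attestation, key), tag)


# Hypothetical example values.
key = b"demo-key-held-by-the-accountable-owner"
attestation = {
    "system_id": "credit-scoring-v3",
    "stage": "HAC-T",
    "accountable_owner": "Jane Doe, Head of Model Risk",
    "statement": "Bias tests reviewed and accepted",
}
tag = sign(attestation, key)

print(verify(attestation, tag, key))  # True
altered = dict(attestation, accountable_owner="Nobody")
print(verify(altered, tag, key))      # False
```

Because the tag covers the owner's name together with the statement, swapping the accountable person afterwards invalidates the attestation instead of silently shifting responsibility.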

The key element of HAC is the central ‘Regulator’ function, which reveals the need for an industry-regulating entity. Currently, AI software suppliers are not bound by any set standard for the services they provide. The economic impact of their products within each jurisdiction (a country’s legal code) is evaluated case by case, without set regulations to protect users. Each AI product supplier needs to ensure that a named human holds certified accountability (an HAC certificate) for every step leading to the deployment of an AI-based product.

The proposed Regulator will need cross-border jurisdiction, at minimum at the level of a local economic area and ideally on a global scale. Its function cannot be driven by the product offering; it must focus on protecting end consumers from harmful AI products. That is why five legal clauses are proposed here.

Legal Clauses

- Non-Delegation of Liability

- Mandatory Attestation

- Liability Continuity

- Transparency Disclosure

- Sanctions for Breach

Institutional Recommendation

Governments should legislate HAC integration within national AI Acts and require interoperability with ISO/IEC 42001 and the EU AI Act. An international body should also be created to act as Regulator for AI technologies.

Case Studies & Future Scenarios

Self-Driving Vehicles

Blame oscillated between software and human monitor. Under HAC, design and deployment teams would bear certified accountability.

Credit Scoring Bias

Non-transparent data led to discrimination. HAC-T bias attestation would have prevented release.

Generative Misinformation

Developers blamed users. Non-transferability would preserve responsibility.

By 2030, global HAC registries should enable instant accountability tracing. Every AI decision has a digital signature; every harm has a human answer.
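Accountability tracing of this kind could, in principle, reduce to a lookup from a logged decision to the people named in the registry. The sketch below assumes a hypothetical in-memory `REGISTRY` keyed by system identifier, with one named owner per HAC stage; the identifiers, names, and the `trace` function are illustrative assumptions, not an existing registry API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    decision_id: str  # hypothetical identifier written to the decision log
    system_id: str    # the AI system that produced the decision


class UnaccountableSystemError(LookupError):
    """A decision points at a system with no registry entry."""


# Hypothetical registry content: system -> HAC stage -> named accountable owner.
REGISTRY = {
    "loan-model-7": {
        "HAC-D": "A. Designer",
        "HAC-T": "B. Tester",
        "HAC-D2": "C. Deployer",
        "HAC-O": "D. Operator",
        "HAC-A": "E. Auditor",
    },
}


def trace(decision: Decision, registry: dict) -> dict:
    """Return the named owner for every stage of the deciding system."""
    try:
        owners = registry[decision.system_id]
    except KeyError:
        # Fail loudly: an untraceable decision is exactly the gap to surface.
        raise UnaccountableSystemError(decision.system_id) from None
    return dict(owners)


owners = trace(Decision("d-001", "loan-model-7"), REGISTRY)
print(owners["HAC-O"])  # D. Operator
```

Raising an error for unregistered systems, rather than returning an empty result, reflects the idea that a decision with no traceable owner should be treated as a fault in its own right.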

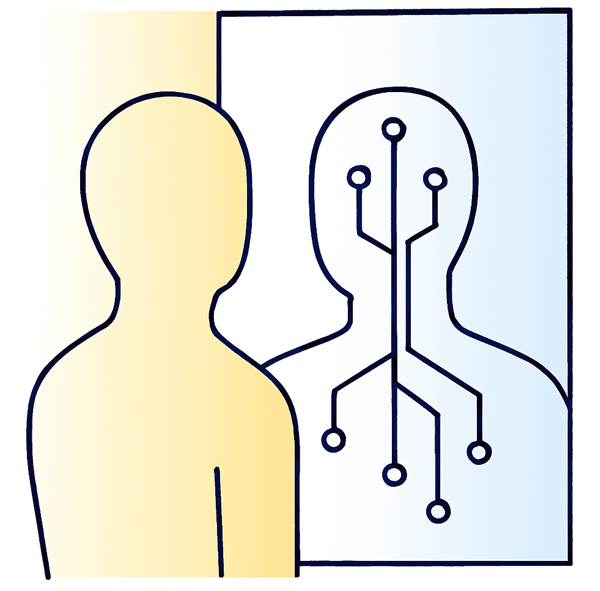

Conclusion — The Mirror Principle

AI is not a moral actor; it is a mirror.

What it reflects depends on who is looking.

Autonomy does not absolve — it obliges.

Responsibility is civilization’s boundary.

When machines act, we must ensure humanity remains visible in the reflection, including in our subconscious.

Final Call

- Policymakers: legislate responsibility infrastructure.

- Industry: embed HAC in every release pipeline.

- Academia: train “AI accountability engineers.”

- Citizens: demand to know who is responsible.

The age of algorithmic innocence ends here.

Citations / References

- OECD AI Principles (2019) — https://oecd.ai/en/ai-principles

- AI — Human Frameworks — https://theailaws.com/ai-human-frameworks-1

- UNESCO (2021) — Recommendation on the Ethics of AI — https://www.unesco.org/en/articles/recommendation-ethics-artificial-intelligence

- EU AI Act Full Text (2024) — https://artificialintelligenceact.eu/the-act/

- ISO/IEC 23894: 2023 — AI Risk Management — https://www.iso.org/standard/77304.html

- ISO/IEC 42001: 2023 — AI Management Systems — https://www.iso.org/standard/42001

- IEEE P7009 — Fail-Safe Design Standard — https://ieeexplore.ieee.org/document/10462965

- RAND (2024) — When AI Gets It Wrong, Will It Be Held Legally Accountable? — https://www.rand.org/pubs/articles/2024/when-ai-gets-it-wrong-will-it-be-held-legally-accountable.html

- Collina, L. (2023) — Critical Issues about AI Accountability Answered, California Management Review — https://cmr.berkeley.edu/2023/11/critical-issues-about-a-i-accountability-answered/

- Lima et al. (2023) — Blaming Humans and Machines: What Shapes Reactions to Algorithmic Harm — https://arxiv.org/abs/2304.02176

- Ryan et al. (2023) — Modelling Responsibility for AI-Based Safety-Critical Systems — https://arxiv.org/abs/2401.09459

AI SAFE Initiative Source Material

- Michal Florek — AI SAFE Initiative — https://theailaws.com/ (2025). AI SAFE Framework 1: The Safety-First Rule — Why Efficiency Without Brakes is Dangerous. AI SAFE White Papers, Vol. 1. https://img1.wsimg.com/blobby/go/40c8a18e-677d-4b1c-acb7-56f2bf5ca11a/downloads/28af4bcc-24fc-4c08-b566-9600e85b6021/White%20Paper%20-%20Framework%201%20of%205%20-%20Why%20Efficienc.pdf?ver=1761528563581

- Michal Florek — AI SAFE Initiative — https://theailaws.com/ (2025). AI SAFE Framework 2: The Economic Balance Rule — Stability Over Disruption. AI SAFE White Papers, Vol. 2. Internal draft version 1.2, September 2025. https://img1.wsimg.com/blobby/go/40c8a18e-677d-4b1c-acb7-56f2bf5ca11a/downloads/4355f8dd-a6fd-4a82-bee5-06fd2d93a386/White%20Paper%20-%20Framework%202%20of%205%20-%20AI%20SAFE%202%20-%20S.pdf?ver=1761528563581

- Michal Florek — AI SAFE Initiative — https://theailaws.com/ (2025). AI SAFE Framework 3: The Transparency Rule — Transparency as Default. AI SAFE White Papers, Vol. 3. Internal draft version 1.1, October 2025. https://img1.wsimg.com/blobby/go/40c8a18e-677d-4b1c-acb7-56f2bf5ca11a/downloads/d8807469-35bc-4726-8e2f-06f104cf7476/White%20Paper%20-%20Framework%203%20of%205%20-%20AI%20SAFE%203%20-%20M.pdf?ver=1761528563582

- Michal Florek — AI SAFE Initiative — https://theailaws.com/ (2025). AI SAFE Framework 4: The Responsibility Rule — Human Accountability. AI SAFE White Papers, Vol. 4. Internal draft version 1.1, October 2025. https://img1.wsimg.com/blobby/go/40c8a18e-677d-4b1c-acb7-56f2bf5ca11a/downloads/8ffbce68-c7e9-4847-a838-8dc6d95d2aa6/White%20Paper%20-%20Framework%204%20of%205%20-%20AI%20SAFE%204%20-%20_.pdf?ver=1761528563582

The Responsibility Rule — Why “the Algorithm Did it” is Unacceptable (AI SAFE© 4) was originally published in Towards AI on Medium.