The Autonomy Trap: Why Most Organisations struggle at AI Integration (And How to Actually Ship Reliable AI Agents) — An Engineer’s guide

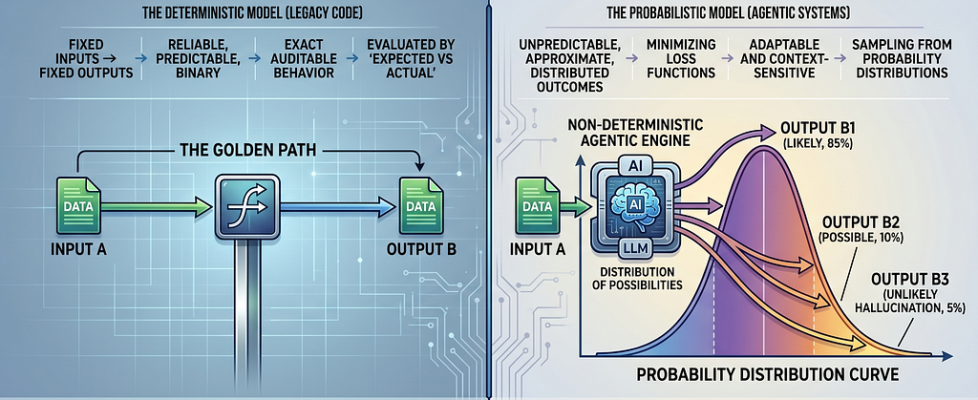

From Deterministic to Probabilistic: Navigating the Agentic Shift

Only a few years ago, predictability and determinism were the bedrocks of the software industry. We designed systems to produce the exact same output regardless of how many times a piece of code was executed. This reliability allowed engineers to ship products with confidence; once a product passed the testing layer, it was ready for the user.

Today, we are in the middle of a massive paradigm shift. As agentic systems become more prevalent, we are introducing non-deterministic components — like Generative AI and LLMs — into our architectures. Our outputs are no longer binary; they are uncertain, approximate, and distributed. While traditional software relies on “Golden Paths” where fixed inputs lead to fixed outputs, AI agents are inherently non-deterministic.

As software engineers, our fundamental responsibility remains the same: shipping reliable products. However, this new landscape forces us to move beyond simple prompt engineering toward sophisticated agentic design patterns to manage this inherent uncertainty.

Defining the Baseline: What was Determinism?

In classical software, behaviour is deterministic by design:

- Any conditional clause always branches the same way given the same input.

- Functions behave like pure functions: given input x, you expect exactly the defined output.

- Testing is “expected vs actual”: we hardcode the expected value and let the test fail if the result is anything else.
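
To make the three properties above concrete, here is a minimal sketch. The function `apply_discount` and its values are hypothetical, chosen only for illustration.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Pure function: the result depends only on the arguments."""
    return price_cents - (price_cents * percent) // 100


def test_apply_discount() -> None:
    expected = 900  # hardcoded expected value
    actual = apply_discount(1000, 10)
    assert actual == expected, f"expected {expected}, got {actual}"


test_apply_discount()

# Re-running never changes the answer: same input, same output.
results = {apply_discount(1000, 10) for _ in range(1000)}
```

After a thousand calls, `results` still holds a single value, which is exactly what lets a hardcoded assertion act as a reliable gate.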

For decades this has worked. It has helped millions of us build transactional systems, where correctness means exact, auditable behaviour rather than something that is merely good enough.

Another important concept in designing deterministic systems is composability: larger systems can be built by composing smaller, well-understood units.

The Rise of Non-Determinism

With the rise of generative AI, the core expectation of a software system is changing. Users now expect software to be conversational and natural-language friendly. Roadmaps have shifted from building features to building workflows and automations, whatever the domain of the product. However, there is a fundamental difference between how a deterministic program works and how a probabilistic one operates:

- In a deterministic program, 2 + 2 is always 4.

- In a probabilistic model, the goal is not to be “correct” in a binary sense. The model is trained to minimise a loss function.

When you interact with an AI model, you are not executing a script; you are sampling from a probability distribution.

The software doesn’t know the answer. It calculates that, say, “Option A” has an 85% probability of being the desired response.

Particularly in Generative systems, this variation happens because of several factors:

- Sampling techniques: settings that control how “creative” or focused the responses should be.

- Context sensitivity: the conversation history influences the outcome.

- Prompt phrasing: a minor change in wording can produce a noticeably different result.

- Infrastructure variation: how and where the model is hosted and run.
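
The first factor, sampling, can be sketched in a few lines. This is a toy illustration of temperature-scaled sampling, not how any particular model is implemented. The option names and scores are hypothetical, and the seed exists only to make the demo repeatable.

```python
import math
import random


def softmax(scores: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample(options: list[str], scores: list[float],
           temperature: float, rng: random.Random) -> str:
    """Draw one option according to its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(options, weights=probs, k=1)[0]


options = ["Option A", "Option B", "Option C"]
scores = [2.0, 0.5, 0.1]  # hypothetical model scores (logits)

rng = random.Random(0)  # seeded only so the demo is repeatable
focused = [sample(options, scores, 0.1, rng) for _ in range(200)]
creative = [sample(options, scores, 2.0, rng) for _ in range(200)]

print("distinct answers at T=0.1:", len(set(focused)))
print("distinct answers at T=2.0:", len(set(creative)))
```

At a low temperature nearly all of the probability mass sits on the top-scoring option. At a higher temperature the same scores are spread more evenly, so repeated calls are more likely to disagree.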

This is not all bad. The same probabilistic nature gives the model its adaptability and expressiveness. It does, however, place a bigger challenge and an ethical responsibility on developers and engineers, who need to adapt to this shift and learn to think non-deterministically.

The first step in getting on board with this shift is to inform and educate ourselves.

So let’s look at how, in agentic development, we can navigate this non-determinism and build more reliable systems.

Understanding Agentic AI

We can think of AI agents as intelligent systems that act autonomously: they perceive their environment, interpret inputs, and decide dynamically how to respond.

These systems differ from the ones we typically build in traditional ML. They not only react to input but also operate in loops:

Observe → Plan → Act → Reflect → Iterate
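
The loop above can be sketched with plain functions. This is a toy under stated assumptions: `plan` stands in for an LLM call, the "environment" is a single counter, and every name here is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LoopState:
    goal: int
    value: int = 0
    history: list[str] = field(default_factory=list)


def observe(state: LoopState) -> int:
    """Observe: how far are we from the goal?"""
    return state.goal - state.value


def plan(remaining: int) -> str:
    """Plan: stand-in for the LLM choosing the next action."""
    if remaining <= 0:
        return "stop"
    return "add_ten" if remaining >= 10 else "add_one"


def act(state: LoopState, action: str) -> None:
    """Act: apply the chosen action and record it."""
    state.value += {"add_ten": 10, "add_one": 1, "stop": 0}[action]
    state.history.append(action)


def reflect(state: LoopState) -> bool:
    """Reflect: is the goal met, so the loop can end?"""
    return state.value >= state.goal


def run(goal: int, max_iterations: int = 50) -> LoopState:
    state = LoopState(goal=goal)
    for _ in range(max_iterations):  # iterate, with a hard cap
        action = plan(observe(state))
        act(state, action)
        if reflect(state):
            break
    return state


final = run(23)
print(final.value, final.history)
```

The `max_iterations` cap is a design choice rather than part of the loop itself. When the planner is a real model instead of this stub, the loop otherwise has no guaranteed exit.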

The Core Components of an Agent

To understand this better, agentic systems are typically made of 3 core components:

- Reasoning Engine: decomposes tasks into steps, supported by techniques such as ReAct or hierarchical planning. This is usually where your LLM sits.

- Tools: extensions that expand the Reasoning Engine’s capabilities by integrating with the external world (search engines, databases, APIs, etc.).

- Memory: a store that retains previous interactions, user preferences, and context. It can be short-term or long-term.

There are other components as well, such as perception, but most of them boil down to these three.

These agents thrive on tasks that require creativity and out-of-the-box thinking. After working extensively with them over the last couple of months, I can’t deny the immense power they hold or how much they can contribute to our productivity. One thing I realised, though, is that these systems can produce catastrophic results if they are not handled with the utmost responsibility.

I have spent a lot of time studying how to navigate this. In today’s blog we will dissect some of the most essential prerequisites, which will help you build agents you can trust and give you more control over non-deterministic agentic behaviour.

Building Confident AI Agents: The Spectrum of Autonomy

To build agents that have a real impact on your productivity and revenue, the first misconception to debunk is “You always need complete autonomy”.

As Andrew Ng has also pointed out (paraphrased):

The question isn’t agents versus workflows. It’s how much autonomy your use case needs. (Andrew Ng)

After reading the articles, news, and latest advancements, you and your organisation might want to jump straight to building a highly autonomous AI agent that handles many different tasks. In practice, the more responsibilities a single agent carries, the more prone it tends to be to hallucination.

This doesn’t mean the problem is unsolvable. Once you have clearly written down your requirements and the use case you want to solve with AI agents, the next step is to choose the right level of autonomy. The decision becomes easier once you familiarise yourself with the spectrum of autonomy.

Autonomy in an AI agent refers to the degree to which the LLM decides the system’s behaviour. It is not binary but a spectrum, from highly controlled → fully autonomous.

- Agentic Workflows (Low Autonomy): Engineers design explicit graphs with predefined nodes, edges, and pathways. The LLM only makes decisions within that structure, including tool execution.

- Autonomous Agents (High Autonomy): The agent gets tools, memory, and environment access, then decides everything: which tools to use and which workflows to create or modify. It can loop back and forth and think independently.

Choosing Your Level of Autonomy

When you stand at the crossroads and have to decide on the level of autonomy, answer these questions about the task at hand:

1. Task Predictability:

- High Predictability → Choose Agentic Workflows: Structured paths (predefined nodes/edges) enforce consistency, reducing variability in repetitive or rule-based tasks.

– Example (processing payroll): input salary data always follows fixed steps (validate → calculate → approve), avoiding LLM hallucinations.

- Low Predictability → Lean towards Autonomous Agents: Agents adapt dynamically as unknowns emerge.

– Example (web scraping for real-time market research): URLs change, so self-modifying loops handle surprises.

2. Risk Tolerance:

- Low Risk Tolerance → Agentic Workflows: Oversight via human-defined guardrails minimises errors, failures, or harmful outputs in high-stakes scenarios.

– Example (healthcare data access): predefined flows help enforce compliance and prevent unauthorised leaks.

- High Risk Tolerance → Autonomous Agents: Allows experimentation where failure is cheap and recoverable.

– Example (prototyping a Telegram bot for LeetCode problem-solving): iterative self-correction speeds development without real-world impact.

3. Need for Explainability:

- High Explainability → Agentic Workflows: Traceable execution (logged nodes/edges) supports audits, debugging, and regulatory proof.

– Example (backend API for managing critical documents): every decision maps to a graph step for compliance reviews.

- Low Explainability → Autonomous Agents: Black-box loops prioritise speed over full transparency.

– Example (exploratory AI agent for travel planning): creative route optimisation doesn’t need step-by-step logs.

4. Performance Requirement:

- Rigid/High-Throughput → Agentic Workflows: Optimised for speed and scale in predictable volumes.

– Example (cron jobs for daily ML model monitoring): fixed efficiency beats adaptive overhead.

- Flexible/Creative → Autonomous Agents: Self-modification unlocks innovation and handles novelty.

– Example (building RAG systems for interview prep): agents refine retrieval strategies on the fly for better accuracy.

These parameters can help you determine how you want your system to behave. The best part is that you don’t always have to choose one way of working: you can find a middle ground by building hybrid systems, such as agentic workflows with autonomous sub-agents.
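
One way to turn the questions above into a repeatable check is a small scoring helper. This is a rough heuristic sketch. The `TaskProfile` fields, the simple vote count, and the thresholds are all assumptions made for illustration, not an established rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    predictable: bool           # does the task follow fixed steps?
    low_risk_tolerance: bool    # are mistakes expensive or hard to undo?
    needs_explainability: bool  # must every decision be auditable?
    rigid_performance: bool     # fixed latency or throughput targets?


def recommend_autonomy(profile: TaskProfile) -> str:
    """Count how many answers point towards a structured workflow."""
    votes_for_workflow = sum([
        profile.predictable,
        profile.low_risk_tolerance,
        profile.needs_explainability,
        profile.rigid_performance,
    ])
    if votes_for_workflow >= 3:   # assumed threshold
        return "agentic workflow"
    if votes_for_workflow <= 1:   # assumed threshold
        return "autonomous agent"
    return "hybrid"


payroll = TaskProfile(True, True, True, True)
prototype_bot = TaskProfile(False, False, False, False)
research_tool = TaskProfile(False, True, True, False)

for name, profile in [("payroll", payroll),
                      ("prototype bot", prototype_bot),
                      ("research tool", research_tool)]:
    print(name, "->", recommend_autonomy(profile))
```

A real decision would weigh the answers rather than count them equally, but even a crude rubric like this forces the conversation to happen before any agent code is written.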

It is important to take these parameters into account before starting your agentic journey.

Depending on the level of autonomy you choose, your system can behave like a:

- Router: The LLM simply directs input to specific, predefined workflows.

- State Machine: The LLM determines whether a process should continue or end based on the current state.

- Autonomous Agent: The system can independently build tools, remember them, and apply them to future steps. These systems tend to be highly capable, but they need significant guardrails to remain predictable.

Now that we have completed the initial steps in understanding the system we are building, we can turn to design patterns. Just as software development has its design patterns, agentic systems have patterns that guide how we build them.

Essential Agentic Design Patterns

Design patterns have long provided standard ways of solving complex problems. Agentic AI design patterns are proven architectural solutions that can significantly improve how you structure your systems and help build confidence for both users and engineers.

Planning:

The agent outlines a sequence of actions to achieve a specific goal. There are two types of planning:

- Explicit Planning: The LLM generates a series of steps that the system then executes, either within the same agent or by delegating to sub-agents.

- Plan Refinement: The agent revisits and tweaks its plan based on new information or changing conditions.

Reflection:

The agent reviews its own outputs and actions, assessing their quality against past steps, observations, and feedback from the environment. This enables re-planning, exploration, and self-evaluation.

Key Features:

- Self-Evaluation: The agent can assess its own performance against metrics or goals.

- Feedback Loop: It can adjust its strategy based on that evaluation.

- Scenario: It can reflect on user feedback to improve its suggestions.
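
A minimal sketch of the evaluate-then-adjust loop described above. The `critique` and `revise` functions are hypothetical stand-ins for LLM calls, and the round limit is an assumed safeguard.

```python
from typing import Callable


def reflect_and_revise(
    draft: str,
    critique: Callable[[str], list[str]],
    revise: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> tuple[str, int]:
    """Revise the draft until the critic has no complaints or rounds run out."""
    for round_number in range(max_rounds):
        issues = critique(draft)        # self-evaluation
        if not issues:
            return draft, round_number
        draft = revise(draft, issues)   # feedback loop
    return draft, max_rounds


# Hypothetical stand-ins for LLM calls.
def critique(text: str) -> list[str]:
    issues = []
    if "budget" not in text:
        issues.append("mention the budget")
    if not text.endswith("."):
        issues.append("end with a full stop")
    return issues


def revise(text: str, issues: list[str]) -> str:
    if "mention the budget" in issues:
        text += " within budget"
    if "end with a full stop" in issues:
        text += "."
    return text


final_text, rounds_used = reflect_and_revise("Book the 9am flight", critique, revise)
print(rounds_used, final_text)
```

Keeping the critic separate from the reviser makes it possible to swap in a stricter evaluator, or a human, without touching the loop.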

Map-Reduce:

This pattern breaks a large task into smaller components (Map phase), processes each individually, and then combines the results into a final output (Reduce phase).

The two phases:

- Map phase: The primary task is broken down into a list of smaller, independent subtasks. Each subtask is carried out by either a single agent or a sub-agent, and you can choose to run them sequentially or in parallel.

- Reduce phase: Once all the subtasks are complete, the system collects and combines the separate results into a single, cohesive final response.
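
The two phases can be sketched with the standard library. Here `summarise` is a hypothetical stand-in for a sub-agent, and splitting on blank lines is an assumed way of producing independent subtasks.

```python
from concurrent.futures import ThreadPoolExecutor


def map_phase(document: str) -> list[str]:
    """Split the task into independent subtasks (here: one per paragraph)."""
    return [part.strip() for part in document.split("\n\n") if part.strip()]


def summarise(paragraph: str) -> str:
    """Hypothetical stand-in for a sub-agent: keep the first sentence."""
    return paragraph.split(".")[0].strip() + "."


def reduce_phase(partials: list[str]) -> str:
    """Combine the separate results into one response."""
    return " ".join(partials)


def map_reduce(document: str, parallel: bool = True) -> str:
    subtasks = map_phase(document)
    if parallel:
        with ThreadPoolExecutor(max_workers=4) as pool:
            partials = list(pool.map(summarise, subtasks))  # input order is kept
    else:
        partials = [summarise(task) for task in subtasks]
    return reduce_phase(partials)


report = (
    "Flights are cheap in May. Prices rise in June.\n\n"
    "Hotels near the office are full. Try midtown."
)
print(map_reduce(report))
```

Because the subtasks share no state, the sequential and parallel paths return the same result. Only latency differs.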

Multi-Agent Collaboration:

Two or more specialised agents collaborate to achieve a common goal. Often, a “supervisor” agent orchestrates these interactions. A single task is split into multiple subtasks, which are handed over to specialised agents that talk to each other. This often results in higher quality than a single monolithic agent.

It follows certain common structures:

- Hierarchical: A “Manager” agent delegates tasks to “Worker” agents.

- Joint Collaboration (Swarm): Agents work in a shared space, picking up tasks as they appear.

This approach reduces the complexity a single agent needs to handle and lowers the risk of hallucination.
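
A minimal sketch of the hierarchical structure. The worker functions and the `Manager.split` logic are hypothetical placeholders. In a real system each would wrap its own model call and tools.

```python
from typing import Callable

Worker = Callable[[str], str]


def flight_agent(request: str) -> str:
    """Specialist that only knows about flights."""
    return f"flight options for {request}"


def hotel_agent(request: str) -> str:
    """Specialist that only knows about hotels."""
    return f"hotel options for {request}"


class Manager:
    """Supervisor that splits a task and delegates to named workers."""

    def __init__(self, workers: dict[str, Worker]) -> None:
        self.workers = workers

    def split(self, task: str) -> list[tuple[str, str]]:
        # Stand-in for LLM planning: one subtask per registered specialist.
        return [(name, task) for name in self.workers]

    def run(self, task: str) -> dict[str, str]:
        return {name: self.workers[name](subtask)
                for name, subtask in self.split(task)}


manager = Manager({"flights": flight_agent, "hotels": hotel_agent})
results = manager.run("NYC, next Tuesday")
print(results)
```

Each worker sees only its own narrow subtask, which is the mechanism behind the reduced complexity described above.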

Human-in-the-Loop:

This pattern introduces human oversight into the agent’s workflow.

- Approval: The agent requests permission before executing specific tasks.

- Wait for Input: The agent pauses its workflow until it receives necessary information from a user.

- Time Travel: Allows a human to review and edit previous state checkpoints
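
The approval and time-travel ideas above can be sketched without any framework. The `Session` class is hypothetical, and the `approve` callback stands in for a real person. In production it would block on a UI or a message queue.

```python
import copy
from typing import Callable


class ApprovalDenied(Exception):
    """Raised when the human reviewer rejects an action."""


class Session:
    def __init__(self, approve: Callable[[str], bool]) -> None:
        self.approve = approve  # stand-in for asking a person
        self.state: dict[str, str] = {}
        self.checkpoints: list[dict[str, str]] = []

    def execute(self, key: str, value: str, needs_approval: bool = False) -> None:
        if needs_approval and not self.approve(f"{key}={value}"):
            raise ApprovalDenied(f"{key}={value}")
        self.checkpoints.append(copy.deepcopy(self.state))  # snapshot before change
        self.state[key] = value

    def rewind(self, checkpoint_index: int) -> None:
        """Time travel: restore an earlier snapshot so a human can edit it."""
        self.state = copy.deepcopy(self.checkpoints[checkpoint_index])
        del self.checkpoints[checkpoint_index:]


# The reviewer in this demo rejects anything that touches payment.
session = Session(approve=lambda request: "payment" not in request)
session.execute("flight", "AA100")
session.execute("hotel", "Midtown Inn")
session.rewind(1)  # go back to the state before the hotel was chosen

denied = False
try:
    session.execute("payment", "card", needs_approval=True)
except ApprovalDenied:
    denied = True

print(session.state, denied)
```

Snapshots are taken before each change, so rewinding to index 1 restores the state as it was just before the second action ran.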

To understand this better, let’s go over a simple scenario.

Let’s suppose you have decided to build a “Corporate Travel Assistant”. We will walk through how this problem can be solved at different levels of autonomy, using the patterns discussed above.

The Scenario: Corporate Travel Booking

The goal is to help an employee book a business trip that includes a flight, hotel, and car rental while adhering to company policy.

Level 1: The Router (Low Autonomy)

In this model, the LLM acts as a sophisticated switchboard. It doesn’t “reason” through a complex plan; it simply identifies the user’s intent and routes them to a pre-defined, deterministic workflow.

- How it works: The user says, “I need to go to New York next Tuesday.” The LLM classifies this as a booking_intent and triggers a strict, step-by-step form.

- Best for: High-compliance environments where you cannot afford deviations from a legal or financial process.

- Reliability: Very high, but lacks flexibility if the user has a complex, multi-part request.
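
A minimal sketch of the router. The keyword-based `classify_intent` is a hypothetical stand-in for the LLM classifier, and the workflow functions are placeholders for the strict forms.

```python
from typing import Callable


def classify_intent(message: str) -> str:
    """Stand-in for the LLM: map free text to one of a few fixed labels."""
    text = message.lower()
    if "cancel" in text:
        return "cancel_intent"
    if any(phrase in text for phrase in ("go to", "fly", "book", "trip")):
        return "booking_intent"
    return "unknown_intent"


def booking_workflow(message: str) -> str:
    return "booking form: destination -> dates -> policy check -> confirm"


def cancel_workflow(message: str) -> str:
    return "cancellation form: booking reference -> confirm"


def fallback_workflow(message: str) -> str:
    return "handed over to a human agent"


WORKFLOWS: dict[str, Callable[[str], str]] = {
    "booking_intent": booking_workflow,
    "cancel_intent": cancel_workflow,
}


def route(message: str) -> str:
    """The model only picks the branch; everything after is deterministic."""
    intent = classify_intent(message)
    return WORKFLOWS.get(intent, fallback_workflow)(message)


print(route("I need to go to New York next Tuesday."))
```

The only non-deterministic step is the label. Because the set of labels is closed, an unexpected model output falls through to the fallback instead of triggering an unplanned action.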

Level 2: The State Machine (Balanced Autonomy)

Here, the LLM has more control. It uses a graph-based approach (like LangGraph) where it decides whether to move to the next step or loop back based on the current state of the data.

How it works:

- Node A (Search): The agent searches for flights.

- Node B (Policy Check): The agent checks if the flight is under $500.

- Conditional Edge: If “Yes,” move to Hotel Booking. If “No,” loop back to Search with new constraints.

- Best for: Workflows that require iterative reasoning — like finding the best price or refining a search based on feedback.

- Reliability: High, because the “loops” and “conditions” are explicitly defined by the developer, preventing the agent from wandering off-track.
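
The graph above can be sketched in plain Python, without assuming any particular framework API. The node functions here are deterministic stand-ins for what would be LLM-backed steps, and the flight prices are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

PRICE_LIMIT = 500                     # policy threshold from Node B above
FLIGHT_PRICES = [720, 610, 480, 455]  # hypothetical search results


@dataclass
class TripState:
    max_price: Optional[int] = None   # search constraint, set when we loop back
    flight_price: Optional[int] = None
    visited: list[str] = field(default_factory=list)


def search(state: TripState) -> str:
    state.visited.append("search")
    matches = [price for price in FLIGHT_PRICES
               if state.max_price is None or price <= state.max_price]
    state.flight_price = matches[0] if matches else None
    return "policy_check"


def policy_check(state: TripState) -> str:
    """Conditional edge: continue, loop back with a constraint, or stop."""
    state.visited.append("policy_check")
    if state.flight_price is None:
        return "end"                   # nothing bookable within policy
    if state.flight_price < PRICE_LIMIT:
        return "hotel_booking"
    state.max_price = PRICE_LIMIT - 1  # new constraint for the next search
    return "search"


def hotel_booking(state: TripState) -> str:
    state.visited.append("hotel_booking")
    return "end"


NODES: dict[str, Callable[[TripState], str]] = {
    "search": search,
    "policy_check": policy_check,
    "hotel_booking": hotel_booking,
}


def run_graph(max_steps: int = 10) -> TripState:
    state, node = TripState(), "search"
    for _ in range(max_steps):         # cap guards against endless loops
        if node == "end":
            break
        node = NODES[node](state)
    return state


result = run_graph()
print(result.flight_price, result.visited)
```

Every possible transition is visible in the three node functions, which is what makes the execution traceable. The `visited` list doubles as an audit log.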

Level 3: The Autonomous Agent (High Autonomy)

At this level, the agent is given a goal and a set of tools (Search, Calendar, Email). It creates its own plan, executes it, and manages its own memory of what worked and what didn’t.

- How it works: You give the agent the goal: “Organize my trip to NYC.” It decides on its own to first check your calendar, then search for flights. It then realises the hotel is too far from the office and autonomously decides to search for a different hotel, without being explicitly told to “loop”.

- Best for: Open-ended tasks where the “right” path isn’t known in advance.

- Reliability: Lower. Because the agent “builds its own tools” and remembers them for future steps, it can become unpredictable.
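
A toy sketch of this level, with two guardrails added. Everything here is hypothetical: `choose_next_tool` stands in for the LLM deciding what to do next, the tools write canned values into memory, and the step cap and tool allow-list are assumed safeguards rather than part of the pattern itself.

```python
from typing import Callable, Optional

Tool = Callable[[dict], str]


def check_calendar(memory: dict) -> str:
    memory["free_day"] = "Tuesday"
    return "calendar checked"


def search_flights(memory: dict) -> str:
    memory["flight"] = "morning flight"
    return "flight found"


def search_hotels(memory: dict) -> str:
    # The first result is far from the office; a retry finds a closer one.
    attempts = memory.get("hotel_attempts", 0) + 1
    memory["hotel_attempts"] = attempts
    memory["hotel_distance_km"] = 12 if attempts == 1 else 1
    return "hotel found"


TOOLS: dict[str, Tool] = {
    "check_calendar": check_calendar,
    "search_flights": search_flights,
    "search_hotels": search_hotels,
}


def choose_next_tool(memory: dict) -> Optional[str]:
    """Hypothetical stand-in for the LLM deciding what to do next."""
    if "free_day" not in memory:
        return "check_calendar"
    if "flight" not in memory:
        return "search_flights"
    if memory.get("hotel_distance_km", 99) > 5:
        return "search_hotels"  # loops on its own until the hotel is close
    return None                 # goal reached


def run_agent(max_steps: int = 8) -> tuple[dict, list[str]]:
    memory: dict = {}
    trace: list[str] = []
    for _ in range(max_steps):       # guardrail: bounded number of steps
        tool_name = choose_next_tool(memory)
        if tool_name is None:
            break
        if tool_name not in TOOLS:   # guardrail: only registered tools may run
            raise ValueError(f"unknown tool: {tool_name}")
        TOOLS[tool_name](memory)
        trace.append(tool_name)
    return memory, trace


memory, trace = run_agent()
print(trace)
```

Unlike the state machine, no edge in this code says "search hotels again". The repeat emerges from the decision function, which is why the step cap and the allow-list matter more as autonomy grows.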

Conclusion

The shift from deterministic to probabilistic software is inevitable. By understanding the spectrum of autonomy and applying the right design patterns, we can build agentic systems that are not only powerful but also reliable and controllable.

How are you balancing autonomy and control in your current AI implementations?


The Autonomy Trap: Why Most Organisations struggle at AI Integration (And How to Actually Ship… was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.