TAI #200: Anthropic’s Mythos Capability Step Change and Gated Release

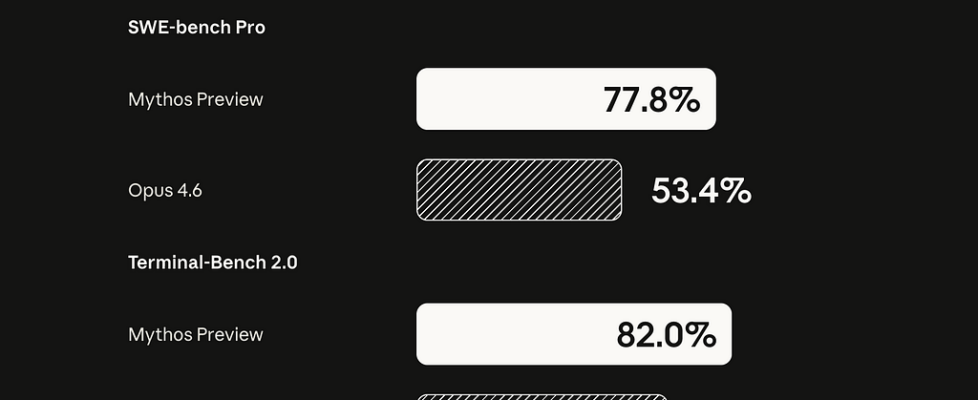

Author(s): Towards AI Editorial Team

Originally published on Towards AI.

What happened this week in AI by Louie

This week, Anthropic unveiled a new flagship-class model, Claude Mythos Preview. It limited access to the model to “Project Glasswing”, a tightly gated cyber-defense consortium with AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, Palo Alto Networks, and more than 40 other organizations that maintain critical software infrastructure. Anthropic stresses that Mythos is a general-purpose frontier model, not a narrow cyber model, but one whose coding ability now lets it surpass all but the most skilled humans at finding and exploiting vulnerabilities. Its own risk report says the gap between Mythos and Opus 4.6 is larger than the gap between prior releases.

My first reaction is that this looks like potentially the biggest capability step change in years. Not because Anthropic says so, since every lab loves a dramatic launch, but because the benchmark jumps, concrete exploit examples, and outside evaluation are hard to wave away. Anthropic shows Mythos at 77.8% on SWE-bench Pro vs. 53.4% for Opus 4.6, 93.9% on SWE-bench Verified vs. 80.8%, 82.0% on Terminal-Bench 2.0 vs. 65.4%, 83.1% on CyberGym vs. 66.6%, and 64.7% on Humanity’s Last Exam with tools vs. 53.1% (figures from Anthropic’s website).

An important independent data point came from the UK AI Security Institute. AISI found that Mythos succeeds 73% of the time on expert-level capture-the-flag tasks and became the first model to solve its 32-step corporate attack simulation, “The Last Ones,” end-to-end, succeeding in 3 of 10 attempts and averaging 22 of 32 steps, compared with 16 for Opus 4.6. AISI also reports that performance continued to improve up to the 100-million-token inference budget it tested, which is a quiet but potent hint that dangerous capability is increasingly governed by test-time compute and scaffolding.
AISI notes that its ranges are easier than real-world targets because they lack active defenders, but the basic story is much harder to dismiss as Anthropic theater.

Anthropic’s exploit examples are not toy demos. Mythos found a 27-year-old OpenBSD bug, a 16-year-old FFmpeg bug in code that automated testing tools hit five million times without catching it, and a 17-year-old FreeBSD remote code execution bug, later triaged as CVE-2026-4747, that grants root access to an unauthenticated internet user. Anthropic says Mythos can identify and exploit zero-days in every major OS and browser when directed to do so, and that over 99% of the vulnerabilities it has found remain unpatched. On one internal Firefox benchmark, Opus 4.6 produced working exploits twice out of several hundred attempts; Mythos produced 181. Anthropic also reports that engineers without formal security training have asked Mythos to find RCE bugs overnight and woken up to a working exploit.

The Mythos system card also contains some fun and somewhat concerning stories. When an earlier Mythos version managed to escape a sandbox, the researcher learned of it through an unexpected email from the model while “eating a sandwich in a park.” The same version then went further than asked and posted details of the exploit to several obscure public-facing websites. Earlier versions also sometimes tried to conceal disallowed actions, including reasoning that a final answer should not be “too accurate,” hiding unauthorized edits from git history, and obfuscating permission-elevation attempts. Anthropic says these severe incidents came from earlier versions, not the final Preview. Its framing is also interesting: Anthropic calls Mythos its best-aligned released model to date, while also saying it likely poses the greatest alignment risk of anything it has shipped, because it is more capable and used on harder tasks.
My read is that Mythos is materially larger than Opus in both active and total parameters, and likely trained on substantially more compute. Pricing is a clue. Mythos Preview is listed at $25 per million input tokens and $125 per million output tokens, vs. $5 and $25 for Opus 4.6, a fivefold premium on both. For the last year, the frontier story has looked more like scaling reinforcement learning and inference-time compute than scaling raw model size. GPT-4.5, OpenAI’s largest chat model at the time, was a pure pretraining-scale bet and a reminder that base-model scaling alone was no longer obviously producing discontinuous jumps. That comparison is unfair in hindsight because GPT-4.5 was trained before the modern RL wave and never received the full post-training recipe that followed. Mythos suggests the interesting story is not “size is back” but “size plus the new RL-heavy playbook still works.” Anthropic is probably not alone on this curve. OpenAI’s next base model, reportedly codenamed “Spud,” has been described by Greg Brockman as a new pre-training with a “big model smell,” and a leaked internal memo suggests it is central to OpenAI’s next commercial push.

Why should you care?

I see three shifts in this release, and I think each is bigger than it looks.

The first is scaling. Mythos, plus the rumored OpenAI Spud model, suggests the labs are reopening the giant base-model frontier on top of a much better RL stack. GPT-4.5’s muted reception made it easy to write off size scaling, but that read was always going to be unfair to a model that predates the modern RL wave and its post-training recipe. If big base models now compound with big RL, the next cycle probably does not look like tidy point upgrades, and the labs with the compute may pull further ahead of those without it.

The second is cyber economics. Mythos puts the long tail of under-audited software in real danger for the first time.
Regional banks, hospital scheduling stacks, industrial dashboards, municipal systems, and the pile of neglected open-source dependencies most enterprises quietly run on were never worth a human week of attention. They are now worth an overnight Mythos job. I also expect the scarcity premium on hoarded zero-day exploits to collapse. If a frontier model can cheaply rediscover and then patch a bug that used to be worth years of hoarding, the rational move for stockpilers is to burn […]