TAI #198: Real-Time Speech AI Gets Serious: Google and OpenAI Race to Own the Voice Layer

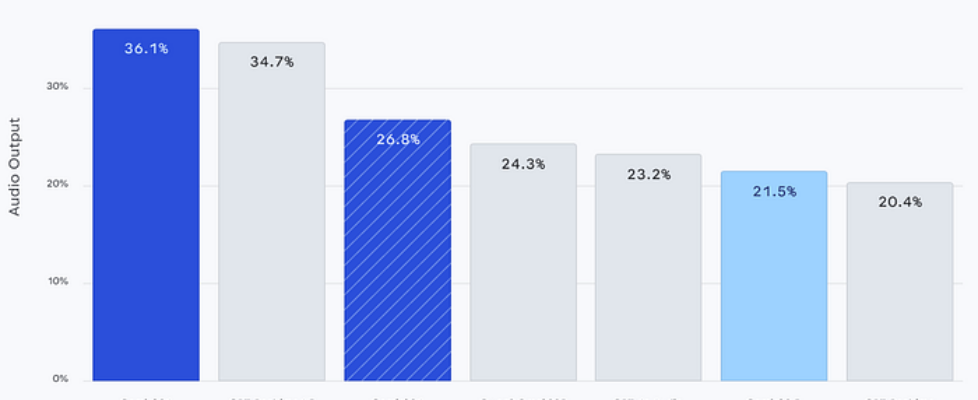

Author(s): Towards AI Editorial Team

Originally published on Towards AI.

What happened this week in AI by Louie

Real-time speech AI has been progressing quietly for the past year, but the past few weeks have delivered enough to warrant a dedicated look. Google released Gemini 3.1 Flash Live on March 26, OpenAI shipped GPT-Realtime-1.5 on February 23, and Cohere launched its Apache 2.0-licensed Transcribe model the same day as Google. We are now past the point where real-time voice AI feels like a demo-stage curiosity. It is starting to look like deployable infrastructure, and headline audio pricing has fallen sharply since OpenAI’s original Realtime API launch in October 2024.

Google’s Gemini 3.1 Flash Live is the headline release. It is Google’s highest-quality real-time audio model, designed for voice-first agents that can reason, call tools, and hold natural conversations across 70 languages. It accepts audio, video, text, and image input, supports function calling with Google Search grounding and extended thinking, and is available in developer preview via the Gemini Live API.

The benchmarks are strong. On ComplexFuncBench Audio, which tests multi-step function calling, Gemini 3.1 Flash Live leads with 90.8%, a big step up from 71.5% on the prior Flash 2.5 model. On Scale AI’s AudioMultiChallenge, which tests instruction-following amid real-world interruptions and hesitations, Gemini scores 36.1% with thinking enabled, compared to GPT-Realtime-1.5 at 34.7%. On BigBenchAudio for reasoning, Gemini reaches 95.9% with high thinking, compared to GPT-Realtime-1.5 at 81.1%. The catch is that these top Gemini scores require extended thinking, which adds latency. With minimal thinking, Gemini drops to 70.5% on BigBenchAudio and 26.8% on AudioMultiChallenge, both below GPT-Realtime-1.5. The reasoning-versus-latency trade-off is now a live engineering decision, not a footnote.

Google has also improved tonal understanding, with the model recognizing pitch, pace, frustration, and confusion and adjusting its responses accordingly. Enterprise customers, including Verizon, LiveKit, and The Home Depot, have tested 3.1 Flash Live. The Home Depot highlighted the model’s ability to capture alphanumeric product codes in noisy environments and handle customers switching languages mid-conversation.

OpenAI’s GPT-Realtime-1.5 looks strongest on conversational dynamics and transport options rather than on raw reasoning benchmarks. Artificial Analysis currently gives it a 95.7% Conversational Dynamics score and a 0.82-second time-to-first-audio. The same benchmark page lists Gemini 3.1 Flash Live at 2.98 seconds with high thinking and 0.96 seconds with minimal thinking. In practice, GPT-Realtime-1.5 should feel snappier in live conversation, while Gemini scores higher on published reasoning benchmarks.

A key operational improvement in GPT-Realtime-1.5 is OpenAI’s reported 10.23% gain in alphanumeric transcription accuracy. That matters because phone numbers, order IDs, and product codes are where voice systems often fail. OpenAI also supports WebRTC, WebSocket, and SIP for Realtime, which gives developers a direct path into browser, server, and telephony stacks. Perplexity says it already uses Realtime-1.5 in production for millions of voice sessions each month.

Google and OpenAI are not the only players, either. Step Audio R1.1 out of China is a notable contender in the speech-to-speech space, winning on several benchmarks at very competitive pricing. Grok’s Voice Agent also remains in the running. The field is getting crowded fast.

The pricing tells an important story, but it is worth being precise about what is being compared: raw audio model cost, not total application cost. OpenAI documents audio tokenization at 1 token per 100 milliseconds for user audio and 1 token per 50 milliseconds for assistant audio.
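To make the per-minute arithmetic concrete, here is a minimal Python sketch that converts those tokenization rates into a cost per minute of two-way audio. It uses the list prices quoted in this issue ($32 and $64 per million audio tokens for GPT-Realtime-1.5; $0.005 and $0.018 per minute for Gemini 3.1 Flash Live Preview) and assumes a full minute of audio in each direction. The function names are illustrative, not part of any SDK, and text tokens, grounding, and telephony are left out.

```python
# Figures are the ones quoted in this article; treat them as a snapshot,
# not as current list prices.

MS_PER_MINUTE = 60_000


def tokens_per_minute(ms_per_token: float) -> float:
    """Audio tokens produced by one minute of speech."""
    return MS_PER_MINUTE / ms_per_token


def token_cost_per_minute(price_per_million: float, ms_per_token: float) -> float:
    """Dollar cost of one minute of audio that is billed per token."""
    return tokens_per_minute(ms_per_token) * price_per_million / 1_000_000


# OpenAI: 1 token per 100 ms of user audio, 1 token per 50 ms of assistant audio.
openai_input = token_cost_per_minute(32.0, 100)   # user audio, 600 tokens/min
openai_output = token_cost_per_minute(64.0, 50)   # assistant audio, 1200 tokens/min
openai_total = openai_input + openai_output

# Google publishes per-minute rates directly, so no token conversion is needed.
gemini_total = 0.005 + 0.018

print(f"OpenAI: ${openai_total:.3f} per minute")
print(f"Gemini: ${gemini_total:.3f} per minute")
print(f"Ratio:  {openai_total / gemini_total:.1f}x")
```

Real conversations rarely fill a full minute in both directions, so actual per-minute spend will depend on the talk-time split between user and assistant.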
At $32 per million audio input tokens and $64 per million audio output tokens, that works out to roughly $0.096 per minute of two-way audio before text tokens, grounding, or telephony. Google publishes direct per-minute equivalents for Gemini 3.1 Flash Live Preview: $0.005 per minute of audio input and $0.018 per minute of audio output, or a total of $0.023 per minute. That makes Google about 4.2x cheaper on headline audio rates, although the model remains in preview and Google notes that preview models may change and may have tighter rate limits.

Another development that shows what this all unlocks is Google Live Translate. On March 26, Google expanded real-time headphone translation to iOS and additional countries, including France, Germany, Italy, Japan, Spain, Thailand, and the UK. The feature works with any headphones, supports 70+ languages, and preserves the original speaker’s tone and cadence. This is the closest thing to a universal translator that exists today. Five years ago, it was science fiction. Now it runs on a phone with any pair of earbuds.

Google Meet’s speech translation beta extends this into professional settings, translating your speech in real time “in a voice like yours.” Search Live expanded to over 200 countries this week. The direction is clear: multilingual voice interaction is becoming a default capability, not a premium feature.

The cost trajectory reinforces this. In late 2024, OpenAI’s original Realtime API priced audio input at $100 per million tokens. GPT-Realtime brought that to $32. Gemini 3.1 Flash Live enters at $3 (albeit with different tokenisation), with a free tier. That’s a huge cost reduction in under two years.

Cohere also contributed this week from a different angle. Cohere Transcribe is not a conversational model but a dedicated automatic speech recognition (ASR) system: 2 billion parameters, conformer-based, 14 languages, Apache 2.0. It ranks first on the Hugging Face Open ASR Leaderboard with an average word error rate (WER) of 5.42%, ahead of Zoom Scribe v1 at 5.47% and OpenAI Whisper Large v3 at 7.44%, and processes audio at 525x real-time. For enterprises in healthcare, legal, finance, or government that cannot send audio to third-party cloud APIs, this is the most important release of the week. Open weights, consumer-GPU-sized, and zero licensing cost.

On a personal note, one of my favourite audio-based AI tools right now is Granola. It captures high-quality transcripts of your computer audio and calls with minimal setup, and then lets you run top models over those transcripts to produce call summaries or fully cleaned-up notes. It’s the kind of product that shows where this whole space is heading: speech capture and understanding becoming […]