PAC Learning Model in Machine Learning: All You Need To Know 2026

The PAC learning model is a foundation for reasoning about how reliably a machine learning algorithm can learn from limited data. It specifies when a model trained on a finite sample can be expected to perform well on fresh examples drawn from the same distribution.

This blog examines the PAC learning model in machine learning, starting with its fundamental concept and moving on to its key principles, conceptual foundations, and applications. It also covers what PAC is in machine learning, probably approximately correct (PAC) learning, the role of the PAC hypothesis, and PAC learnability in machine learning.

What is PAC in Machine Learning?

PAC stands for Probably Approximately Correct. It is a framework that defines the conditions under which a machine learning algorithm can learn effectively from data. Leslie Valiant introduced it in 1984, and it answers a fundamental question: how many examples does an algorithm need to make accurate predictions on unseen data with high reliability?

Simply put, a PAC learner produces a hypothesis that is “approximately correct” (its error is at most a small value ε) with high probability, hence “probably” (with probability at least 1-δ, where δ is small). The guarantee must hold for every data distribution, which makes the framework distribution-free and therefore robust.

Consider this: you train a model on patient data from Indian hospitals to detect diseases. PAC analysis tells you how many samples are needed so that the model works well on new patients drawn from the same population (distribution) as the training patients.

Key parameters include:

- ε (epsilon): the maximum allowable error.

- δ (delta): the failure probability, so the guarantee holds with confidence 1-δ.

Together, these specify how well the model must generalize beyond its training data.

Valiant's original work formalized the conditions under which efficient learning is possible from finite samples.


Components of Probably Approximately Correct (PAC) Learning

PAC learning is defined in terms of an instance space, concepts, hypotheses, and a data distribution. The instance space X contains all possible data points, such as images or numbers. A concept c is a subset of X that defines the true pattern, for example, “all spam emails.” A distribution D over X generates the examples, each labeled according to c.

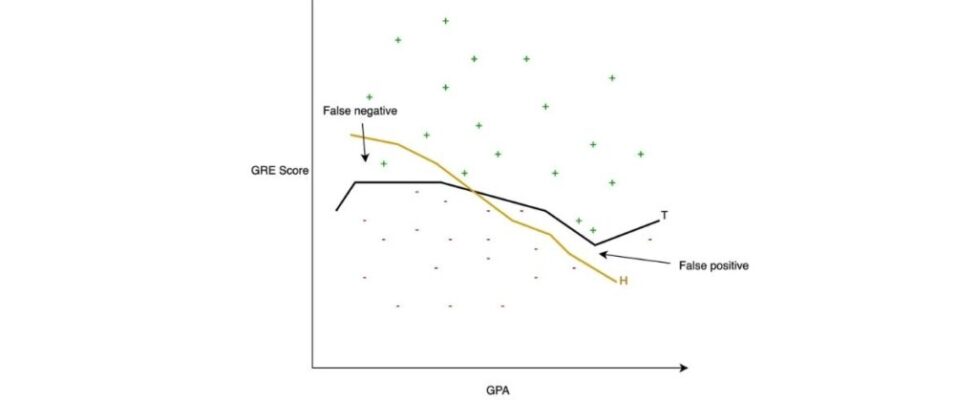

In machine learning, a PAC hypothesis is the model’s guess h from a hypothesis class H. The goal is to find an h close to c, such that error(h, c) ≤ ε with probability ≥ 1-δ, where error(h, c) is the probability that h(x) ≠ c(x) for an x drawn from D.
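To make error(h, c) concrete, here is a minimal sketch under assumed settings that are not from the original: X = [0, 1], D uniform on [0, 1], and both c and h are closed intervals. It computes the error exactly, as the length of the region where h and c disagree, and also estimates it by sampling from D. The helper names are hypothetical.

```python
import random

# Assumed illustrative setup (not from the original): X = [0, 1],
# D = uniform on [0, 1], and c, h are closed intervals (lo, hi) inside [0, 1].


def in_interval(x, interval):
    """Return True if x lies in the closed interval (lo, hi)."""
    lo, hi = interval
    return lo <= x <= hi


def exact_uniform_error(h, c):
    """error(h, c) under uniform D on [0, 1]: the length of the symmetric
    difference |h| + |c| - 2|h ∩ c| of the two intervals."""
    (a1, b1), (a2, b2) = h, c
    overlap = max(0.0, min(b1, b2) - max(a1, a2))
    return (b1 - a1) + (b2 - a2) - 2 * overlap


def estimated_error(h, c, n_samples=100_000, seed=0):
    """Monte Carlo estimate of Pr[h(x) != c(x)] for x drawn from uniform D."""
    rng = random.Random(seed)
    mistakes = sum(
        in_interval(x, h) != in_interval(x, c)
        for x in (rng.random() for _ in range(n_samples))
    )
    return mistakes / n_samples


c = (0.2, 0.6)   # hypothetical target concept
h = (0.25, 0.6)  # hypothetical learned hypothesis
print(exact_uniform_error(h, c))  # close to 0.05, up to floating-point rounding
print(estimated_error(h, c))      # close to 0.05, up to sampling noise
```

Both values should come out close to 0.05, since h and c disagree only on [0.2, 0.25).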

Why does this matter? In India’s growing AI sector, where data protection rules such as the Digital Personal Data Protection (DPDP) Act can limit the data available, PAC analysis helps estimate how much data a reliable model actually needs rather than assuming vast datasets are available.

PAC Learnability in Machine Learning Explained

PAC learnability in machine learning determines whether a concept class C can be learned by some algorithm A. C is PAC-learnable if there is an algorithm A such that, for every target c in C, every distribution D over X, and every 0 < ε, δ < 1, A uses a number of samples polynomial in 1/ε, 1/δ, and the relevant size parameters, and outputs a hypothesis h with error ≤ ε with probability ≥ 1-δ.

If A must also run in polynomial time, C is called efficiently PAC-learnable. For binary classification, a class is PAC-learnable (in terms of sample complexity) if and only if its Vapnik-Chervonenkis (VC) dimension is finite, under mild measurability conditions.

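- If H is finite, with |H| hypotheses, a sample size of m ≥ (1/ε)(ln|H| + ln(1/δ)) suffices for any consistent learner (realizable case).

- This bound shows that a larger H needs more data, although the requirement grows only logarithmically in |H|.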

Understanding PAC learnability guides us in choosing hypothesis spaces that balance expressiveness and data needs.
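The finite-|H| bound is easy to evaluate directly. This is a minimal sketch; the function name `finite_h_sample_complexity` is hypothetical, and it assumes the realizable setting with a learner that outputs any consistent hypothesis.

```python
import math


def finite_h_sample_complexity(h_size, epsilon, delta):
    """Smallest integer m with m >= (1/epsilon) * (ln|H| + ln(1/delta)).

    Assumes a finite class H of size h_size, the realizable case, and a learner
    that outputs any hypothesis consistent with the training sample."""
    if h_size < 1:
        raise ValueError("h_size must be at least 1")
    if not (0 < epsilon < 1 and 0 < delta < 1):
        raise ValueError("epsilon and delta must lie strictly between 0 and 1")
    return math.ceil((math.log(h_size) + math.log(1 / delta)) / epsilon)


print(finite_h_sample_complexity(1_000, epsilon=0.05, delta=0.01))      # 231
print(finite_h_sample_complexity(1_000_000, epsilon=0.05, delta=0.01))  # 369
```

With ε = 0.05 and δ = 0.01, a class of 1,000 hypotheses gives m = 231, while a class of 1,000,000 hypotheses gives m = 369: a thousand-fold larger class costs well under twice the data.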

Formal PAC Learning Theorem and Sample Complexity

The PAC learning theorem states: for accuracy ε and confidence 1-δ, a sample size m exists so any consistent hypothesis (matches all training examples) has true error < ε with probability ≥ 1-δ.

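Mathematically, for finite H:

$$
m \ge \frac{1}{\epsilon}\left(\ln|H| + \ln\frac{1}{\delta}\right)
$$

This follows from a union bound over H, assuming independent samples: a fixed hypothesis with true error greater than ε is consistent with all m samples with probability at most (1-ε)^m ≤ e^(-εm), so the probability that any such hypothesis survives is at most |H|·e^(-εm), which is at most δ when m satisfies the bound.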

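Sample complexity grows with hypothesis complexity. For VC dimension d, a consistent learner needs:

$$
m = O\left(\frac{1}{\epsilon}\left(d\ln\frac{1}{\epsilon} + \ln\frac{1}{\delta}\right)\right)
$$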
Example: learning intervals on a line (VC dimension 2) needs far fewer samples than learning with a high-capacity class such as a large neural network.

This theorem explains why consistent learners over a suitably restricted class, such as decision trees of bounded size, generalize well once they see enough data.

Role of PAC Hypothesis in Machine Learning

In machine learning, the PAC hypothesis is any h from H that closely approximates the true concept. Success depends on H containing good approximations of the concepts in C (in the realizable case, H ⊇ C, so some h has zero error). In agnostic PAC learning, there may be no perfect h; instead, we seek an h whose error is close to that of the best hypothesis h’ in H. The corresponding bounds rely on the VC dimension or Rademacher complexity.
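The agnostic case can be illustrated with empirical risk minimization (ERM), which picks the hypothesis with the lowest training error. The sketch below assumes a hypothetical setup: X = [0, 1], D uniform, H = thresholds on a grid of step 0.01, and labels from the rule x ≥ 0.37 flipped with probability 0.1, so even the best h’ in H has true error about 0.1. The function names are illustrative.

```python
import random

# Hypothetical agnostic setup (an assumption for illustration):
# X = [0, 1], D = uniform, H = thresholds h_k(x) = [x >= k/N] for k = 0..N,
# and labels follow x >= 0.37 but are flipped with probability NOISE.
N = 100
NOISE = 0.1


def noisy_sample(n, seed=0):
    """Draw n labeled examples whose labels are corrupted by random flips."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.random()
        y = x >= 0.37
        if rng.random() < NOISE:
            y = not y  # label noise: no hypothesis can fit every example
        data.append((x, y))
    return data


def empirical_error(k, data):
    """Fraction of examples misclassified by the threshold h_k."""
    return sum((x >= k / N) != y for x, y in data) / len(data)


def erm(data):
    """Empirical risk minimization: the k with the smallest training error."""
    return min(range(N + 1), key=lambda k: empirical_error(k, data))


data = noisy_sample(2000)
k_hat = erm(data)
print(f"learned threshold: {k_hat / N}, training error: {empirical_error(k_hat, data):.3f}")
```

The learned threshold should land near 0.37 with a training error near the 0.1 noise rate. No hypothesis can push the true error below that noise rate, so the agnostic goal is to come close to the best h’ in H rather than to reach zero error.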

The learner must select, from among many candidate functions, a hypothesis that has low generalization error with high probability. In an Indian context, such as predicting crop yields from satellite data, a good PAC hypothesis helps avoid overfitting to noisy, irregular monsoon patterns.

Steps to find a PAC hypothesis:

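- Draw m labeled samples from the example oracle EX(c, D), which returns an x drawn from D together with its label c(x).

- Run the learner A(ε, δ, samples).

- Output a hypothesis h that is consistent with the samples.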
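The three steps can be sketched for a hypothetical realizable problem: X = [0, 1], D uniform, H = the 101 thresholds h_k(x) = [x ≥ k/100], and a target c that is itself in H (k = 37). Here `example_oracle` plays the role of EX(c, D), m comes from the finite-|H| bound, and repeating the experiment many times estimates how often the output has error above ε. All names and settings are assumptions for illustration.

```python
import math
import random

# Hypothetical realizable setup (an assumption for illustration):
# X = [0, 1], D = uniform, H = thresholds h_k(x) = [x >= k/N] for k = 0..N,
# and the target concept c is the member of H with k = TARGET_K.
N = 100
TARGET_K = 37


def target(x):
    """The true concept c."""
    return x >= TARGET_K / N


def example_oracle(rng):
    """EX(c, D): draw x from D and return it with its label c(x)."""
    x = rng.random()
    return x, target(x)


def consistent_learner(samples):
    """Return the smallest k whose threshold agrees with every sample.

    The PAC theorem covers any consistent hypothesis; picking the smallest
    consistent k is one arbitrary but deterministic choice."""
    for k in range(N + 1):
        if all((x >= k / N) == y for x, y in samples):
            return k
    raise RuntimeError("no consistent hypothesis: the data are not realizable")


def pac_run(epsilon, delta, rng):
    """One PAC experiment: draw m samples, learn h, and return its true error."""
    m = math.ceil((math.log(N + 1) + math.log(1 / delta)) / epsilon)  # |H| = N + 1
    samples = [example_oracle(rng) for _ in range(m)]
    k = consistent_learner(samples)
    # Under uniform D, h_k and c disagree on an interval of length |k - TARGET_K| / N.
    return abs(k - TARGET_K) / N


rng = random.Random(42)
epsilon, delta = 0.1, 0.05
errors = [pac_run(epsilon, delta, rng) for _ in range(1000)]
failure_rate = sum(e > epsilon for e in errors) / len(errors)
print(f"fraction of runs with error > {epsilon}: {failure_rate}")
```

The observed failure rate should be well below δ = 0.05, because the union bound behind m is conservative.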

Generalization Bounds and VC Dimension

Generalization in PAC learning concerns how well training error predicts true error. Uniform convergence holds when, with high probability, sup over h in H of |empirical error(h) - true error(h)| < ε. H shatters a set of points if it can realize every possible labeling of them, and the VC dimension d(H) is the maximum number of points that H can shatter. If d(H) is finite, then H is PAC-learnable.
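For the interval class used as an example in this article, shattering can be checked by brute force over all labelings. This minimal sketch assumes distinct real-valued points and closed intervals; the function names are hypothetical.

```python
from itertools import product


def interval_can_label(points, labels):
    """True if some closed interval [a, b] contains exactly the points labeled 1.

    The tightest candidate is [min positive, max positive]; if it still
    contains a negative point, no interval works. The all-zero labeling is
    realized by an interval that lies away from every point."""
    positives = [p for p, lab in zip(points, labels) if lab]
    if not positives:
        return True
    a, b = min(positives), max(positives)
    return all(not (a <= p <= b) for p, lab in zip(points, labels) if not lab)


def shattered_by_intervals(points):
    """The class shatters the points if it realizes all 2^n labelings."""
    return all(
        interval_can_label(points, labels)
        for labels in product([0, 1], repeat=len(points))
    )


print(shattered_by_intervals([1.0, 2.0]))       # True: two points are shattered
print(shattered_by_intervals([1.0, 2.0, 3.0]))  # False: labeling (1, 0, 1) fails
```

Two points can be shattered, but three cannot: with the points in sorted order, the labeling (1, 0, 1) would need an interval that contains the outer points but skips the middle one. Since every set of three distinct points on a line has a middle point, the VC dimension of intervals is 2.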

Half-spaces (linear classifiers with a bias term) in R^d have VC dimension d+1, so they are PAC-learnable. In practice, training scikit-learn decision trees on increasing amounts of data typically shows test accuracy rising with sample size, which matches PAC expectations.

Practical Applications and Examples

The PAC learning model can inform the design of spam filters, medical diagnosis systems, and recommendation systems.

Examples:

- Interval learning. Target: points in [a,b]. Hypothesis space: all intervals. VC dimension 2, so the class is PAC-learnable. Algorithm: output the smallest interval that covers all positive examples; in the realizable case it excludes every negative example.

- In India, Zomato reportedly uses similar models for delivery-time prediction, where the models need to generalize across cities.

- Python snippet insight: training decision trees on synthetic data showed accuracy stabilizing around 90% once 50% or more of the samples were used, which is consistent with PAC sample-size reasoning.

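- Challenges: infinite hypothesis classes (e.g., deep networks) fall outside the finite-|H| bound, and their capacity-based bounds are often loose, so regularization or other capacity control is needed for PAC-like guarantees.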
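The interval-learning example can be run end to end. This minimal sketch assumes a hypothetical target c = [0.3, 0.7] with D uniform on [0, 1]; it implements the tightest-interval learner and averages its true error over repeated draws for several sample sizes m. It uses intervals rather than decision trees, and all names are illustrative.

```python
import random

# Hypothetical setup (an assumption for illustration): X = [0, 1],
# D = uniform, and the target concept c is the interval [0.3, 0.7].
TARGET = (0.3, 0.7)


def label(x):
    """c(x): True inside the target interval, False outside."""
    return TARGET[0] <= x <= TARGET[1]


def tightest_interval(samples):
    """Smallest interval covering every positive example, or None if there are none."""
    positives = [x for x, y in samples if y]
    if not positives:
        return None  # no positives seen: predict negative everywhere
    return min(positives), max(positives)


def true_error(h):
    """error(h, c) under uniform D: length of the region where h and c disagree."""
    a2, b2 = TARGET
    if h is None:
        return b2 - a2
    a1, b1 = h
    overlap = max(0.0, min(b1, b2) - max(a1, a2))
    return (b1 - a1) + (b2 - a2) - 2 * overlap


def average_error(m, trials=200, seed=0):
    """Average true error of the tightest-interval learner over repeated samples of size m."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        xs = [rng.random() for _ in range(m)]
        total += true_error(tightest_interval([(x, label(x)) for x in xs]))
    return total / trials


for m in (10, 100, 1000):
    print(m, round(average_error(m), 4))
```

Because the tightest interval always sits inside the target, its error is the two uncovered gaps at the ends of c, and the average error should shrink roughly in proportion to 1/m.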

On A Final Note…

The PAC learning paradigm provides lasting guarantees about when algorithms generalize reliably. Knowing what PAC is in machine learning, and then grasping PAC learnability, helps in building robust AI systems. As India progresses in data science, applying PAC principles can lead to more accurate models built with fewer resources.

What is the PAC learning model in machine learning?

The PAC learning model is a framework in which an algorithm uses finite samples to learn a hypothesis that is approximately correct (error ≤ ε) with high probability (≥ 1-δ), regardless of the data distribution.

What is PAC in machine learning?

PAC in machine learning means Probably Approximately Correct, defining learnability via accuracy ε and confidence 1-δ parameters.

What is probably approximately correct (PAC) learning?

Probably approximately correct (PAC) learning guarantees that, given enough samples, a learner finds a hypothesis with small error on new data from the same distribution, and it bounds the number of samples needed.

What is a PAC hypothesis in machine learning?

A PAC hypothesis is the model output by the learner; it approximates the true concept, with its error controlled by the PAC parameters ε and δ.