Failure-Aware Medical AI: A System Architecture for Uncertainty-Driven Clinical Decision Making

Integrating OOD detection, calibrated confidence, and human-in-the-loop escalation

In 2019, a deep learning system reported in Nature Medicine [1] achieved performance comparable to expert radiologists in detecting breast cancer from mammograms. The result was widely interpreted as a milestone for medical AI. However, subsequent evaluations across different hospitals revealed a sharp decline in performance when the same models were deployed in environments with different patient distributions, imaging devices, and clinical protocols.

This pattern has become increasingly common in healthcare AI. A large-scale review published in The BMJ [2] found that more than 90 percent of medical AI systems lack proper external validation. Many models demonstrate strong performance on curated datasets but degrade significantly under real-world conditions where data distributions shift unpredictably.

What makes this problem particularly serious is not simply that models fail, but that they fail silently while remaining confident. This points to an issue deeper than model accuracy: a fundamental limitation in how current medical AI systems are designed. Most systems are built to produce predictions. Very few are built to determine whether those predictions should be trusted.

Where Current Medical AI Architectures Break Down

To understand the limitation, it is useful to examine the standard architecture used in most medical AI pipelines. Despite differences in model complexity, the system design is typically linear: patient data is processed by a trained model, which outputs a prediction that is directly or indirectly used for clinical decision making.

The linear structure in fig. 1 implicitly assumes that the model’s output is always meaningful and safe to act upon. It treats prediction as the final step in the decision chain rather than as one component of a broader reasoning system.

However, real-world medical environments violate this assumption in several important ways. First, model confidence is often miscalibrated: neural networks can assign high probability scores to incorrect predictions, creating a false perception of reliability. Second, medical data is highly non-stationary. Changes in population demographics, imaging equipment, hospital workflows, or even disease prevalence can lead to significant distribution shifts that models are not prepared for. Third, and most critically, there is no built-in mechanism for abstention. The system is forced to produce an output even when it lacks sufficient information.
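Miscalibration is measurable. A common summary is expected calibration error (ECE): group predictions into confidence bins and compare each bin's average confidence with its observed accuracy. The pure-Python sketch below assumes equal-width bins and binary correctness labels; the function name and toy inputs are illustrative, not taken from any particular library or dataset.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Weighted average gap between confidence and accuracy over equal-width bins."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")
    bins: list[list[int]] = [[] for _ in range(n_bins)]
    for i, conf in enumerate(confidences):
        # Map a confidence in [0, 1] to a bin; 1.0 falls into the last bin.
        bins[min(int(conf * n_bins), n_bins - 1)].append(i)
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(confidences[i] for i in members) / len(members)
        accuracy = sum(1 for i in members if correct[i]) / len(members)
        # Each bin's gap is weighted by the fraction of samples it holds.
        ece += len(members) / total * abs(accuracy - avg_conf)
    return ece


# Toy example: roughly 95% stated confidence, but only half of the predictions are correct.
print(expected_calibration_error([0.95, 0.96, 0.94, 0.95], [True, False, True, False]))
# prints approximately 0.45
```

A well-calibrated model would score close to 0; here the model states about 95% confidence while being right half the time. Binning choices vary between papers and libraries, so ECE values are only comparable under the same binning scheme.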

These issues are not hypothetical. They manifest directly in deployed systems, often with clinically significant consequences.

A Real-World Example: Failure in Sepsis Prediction Systems

Sepsis prediction models are among the most widely deployed AI systems in clinical practice. Designed to identify early signs of a life-threatening condition, these systems are intended to support timely intervention in critical care settings.

However, independent evaluations of a widely deployed sepsis prediction system revealed a concerning outcome. The model identified only a small fraction of true sepsis cases while simultaneously generating a large number of false alerts. Over time, clinicians began to disregard the system entirely due to alert fatigue.

The failure here was not merely statistical. It was systemic. The model did not differentiate between high-confidence and low-confidence predictions. It did not account for contextual uncertainty. It did not adapt its behavior based on data quality or distribution shift. Instead, it produced uniform outputs regardless of reliability.

In practice, this transformed a potentially useful clinical support tool into a noisy signal that reduced trust in automated decision systems. This highlights a critical limitation in current architectures: they optimize for prediction accuracy but ignore prediction reliability.

From Prediction Systems to Decision Systems

Addressing this limitation requires a fundamental shift in design philosophy. Instead of building systems that always generate predictions, we must design systems that explicitly decide when to predict, when to defer, and when to escalate.

This shift leads to a class of systems we will call failure-aware medical AI architectures. These systems do not treat prediction as an isolated task. Instead, they embed prediction within a broader decision-making framework that evaluates uncertainty, validates input reliability, and determines whether automated output is appropriate. This transforms the role of AI from a deterministic predictor into a probabilistic decision agent.
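Validating input reliability can start with something as plain as a rule-based check that runs before the model is called. A minimal sketch, assuming tabular vital-sign inputs; the field names and ranges in `PLAUSIBLE_RANGES` are hypothetical placeholders, not clinical reference values.

```python
from typing import Mapping, Optional

# Hypothetical plausibility ranges: placeholders for illustration, not clinical guidance.
PLAUSIBLE_RANGES: dict[str, tuple[float, float]] = {
    "heart_rate": (20.0, 250.0),
    "temperature_c": (30.0, 45.0),
    "resp_rate": (4.0, 60.0),
}


def validate_input(record: Mapping[str, Optional[float]]) -> list[str]:
    """Return a list of input-reliability problems; an empty list means the record passed."""
    problems: list[str] = []
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = record.get(field)
        if value is None:
            problems.append(f"missing:{field}")
        elif not (low <= value <= high):
            # NaN fails both comparisons, so it is flagged here as well.
            problems.append(f"out_of_range:{field}={value}")
    return problems


print(validate_input({"heart_rate": 88.0, "temperature_c": 52.0, "resp_rate": None}))
# ['out_of_range:temperature_c=52.0', 'missing:resp_rate']
```

In a failure-aware pipeline, a non-empty list would send the case down a rejection or data-quality pathway instead of letting the model guess on incomplete data.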

A Failure-Aware Architecture for Medical AI

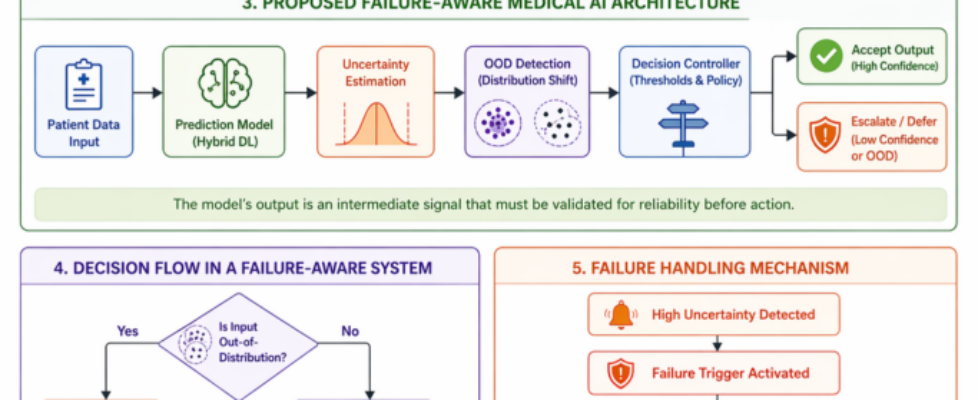

A failure-aware system introduces multiple layers of reasoning beyond the predictive model. Each layer evaluates a different aspect of reliability before a final decision is made.

The architecture in fig. 2 introduces a key conceptual change. The predictive model is no longer the final decision maker. Instead, its output is treated as an intermediate signal that must be evaluated for reliability before being acted upon.
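Treating the output as an intermediate signal requires a reliability score attached to each prediction. One common approach, assumed here rather than specified by the article, is to run several models on the same case (a deep ensemble, or repeated forward passes with Monte Carlo dropout) and measure how much their predicted distributions spread. The sketch computes the entropy of the averaged distribution and the mutual information between the prediction and the ensemble member, which is often used as a proxy for model (epistemic) uncertainty. Function names and toy inputs are illustrative.

```python
import math
from typing import Sequence


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability classes contribute nothing."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def ensemble_uncertainty(member_probs: Sequence[Sequence[float]]) -> dict[str, float]:
    """Summarize how uncertain an ensemble is about one case.

    member_probs holds one class-probability vector per ensemble member.
    """
    m = len(member_probs)
    k = len(member_probs[0])
    mean = [sum(p[c] for p in member_probs) / m for c in range(k)]
    total = entropy(mean)  # predictive entropy of the averaged distribution
    expected = sum(entropy(p) for p in member_probs) / m  # average per-member entropy
    return {
        "confidence": max(mean),
        "predictive_entropy": total,
        # Mutual information: the part of total entropy caused by member disagreement.
        # Clamped at 0 to absorb tiny negative values from floating-point rounding.
        "mutual_information": max(0.0, total - expected),
    }


agree = [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]]
disagree = [[0.99, 0.01], [0.01, 0.99], [0.99, 0.01]]
print(ensemble_uncertainty(agree)["mutual_information"])     # close to 0
print(ensemble_uncertainty(disagree)["mutual_information"])  # clearly above 0
```

Each member in the disagreement example is individually very confident; only the spread across members reveals that the case is unreliable.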

Uncertainty estimation and out-of-distribution detection operate as parallel validation mechanisms. Their outputs are then integrated by a decision controller that determines whether the system should proceed autonomously or defer to human expertise.
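For the out-of-distribution branch, one simple family of detectors scores how far a case's feature vector lies from the training data. The sketch below is an illustration under stated assumptions, not the article's method: it uses a Mahalanobis distance with a diagonal covariance over some hypothetical feature embedding (for example, a model's penultimate-layer activations) and flags a case when its distance exceeds a high percentile of the distances observed on training data. The class name, the 99th-percentile default, and the toy data are all illustrative.

```python
import math
from typing import Sequence


class DiagonalMahalanobisOOD:
    """Flags inputs whose features lie far from the training feature distribution."""

    def __init__(self, train_features: Sequence[Sequence[float]], percentile: float = 0.99):
        n = len(train_features)
        d = len(train_features[0])
        self.mean = [sum(x[j] for x in train_features) / n for j in range(d)]
        # Per-feature variance, floored so a constant feature cannot cause division by zero.
        self.var = [
            max(sum((x[j] - self.mean[j]) ** 2 for x in train_features) / n, 1e-8)
            for j in range(d)
        ]
        # Threshold = the chosen percentile of distances observed on the training data.
        train_scores = sorted(self.score(x) for x in train_features)
        self.threshold = train_scores[min(int(percentile * n), n - 1)]

    def score(self, x: Sequence[float]) -> float:
        """Mahalanobis distance under a diagonal covariance (per-feature standardization)."""
        return math.sqrt(sum((xj - mj) ** 2 / vj for xj, mj, vj in zip(x, self.mean, self.var)))

    def is_ood(self, x: Sequence[float]) -> bool:
        return self.score(x) > self.threshold


train = [[0.0, 1.0], [0.2, 0.8], [-0.1, 1.1], [0.1, 0.9], [-0.2, 1.2]]
detector = DiagonalMahalanobisOOD(train)
print(detector.is_ood([0.05, 1.0]))  # False: close to the training data
print(detector.is_ood([5.0, -3.0]))  # True: far outside it
```

With so few training points the percentile threshold is simply the largest training distance; in practice it would be estimated on a held-out set of in-distribution cases. A diagonal covariance ignores correlations between features, which a full-covariance estimate would capture.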

Operational Behavior Under Uncertainty

The behavior of this system can be better understood through its decision flow. Unlike traditional pipelines, this structure explicitly encodes escalation and rejection pathways.

The decision flow in the flowchart above ensures that uncertainty is neither ignored nor hidden. Instead, it directly influences the decision pathway, allowing the system to adapt its behavior based on reliability signals.
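The decision flow can be written as a small controller over the reliability signals. The sketch below is one possible ordering, assumed here rather than read off the flowchart: an invalid or out-of-distribution input is rejected from automated handling, a prediction with low confidence or high ensemble disagreement is escalated to a clinician, and only the remaining cases proceed automatically. The `Signals` fields and both threshold defaults are hypothetical and would need tuning on validation data.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"    # automated output may be used
    ESCALATE = "escalate"  # route to a clinician for review
    REJECT = "reject"      # input unsuitable for this model; do not predict


@dataclass(frozen=True)
class Signals:
    input_ok: bool       # result of input validation
    is_ood: bool         # result of the out-of-distribution detector
    confidence: float    # e.g. max class probability of the averaged prediction
    disagreement: float  # e.g. ensemble mutual information


def decide(s: Signals, min_confidence: float = 0.9, max_disagreement: float = 0.1) -> Action:
    """Map reliability signals to an action; thresholds are illustrative placeholders."""
    # Reject first: if the input itself is untrustworthy, the model's output is not meaningful.
    if not s.input_ok or s.is_ood:
        return Action.REJECT
    # Escalate when the model is unsure or ensemble members disagree.
    if s.confidence < min_confidence or s.disagreement > max_disagreement:
        return Action.ESCALATE
    return Action.PROCEED


print(decide(Signals(input_ok=True, is_ood=False, confidence=0.97, disagreement=0.02)))  # Action.PROCEED
print(decide(Signals(input_ok=True, is_ood=False, confidence=0.70, disagreement=0.02)))  # Action.ESCALATE
print(decide(Signals(input_ok=True, is_ood=True, confidence=0.99, disagreement=0.01)))   # Action.REJECT
```

The third call shows the silent-but-confident failure mode being caught: a 99%-confident prediction on an out-of-distribution input is rejected rather than trusted.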

Explicit Failure Handling as a Design Feature

A key principle of this approach is that failure is not treated as an exception. It is treated as an expected outcome that must be handled systematically.

The structure in fig. 4 ensures that uncertain cases do not result in uncontrolled or misleading predictions. Instead, they are redirected into safer computational or clinical pathways.
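Handling failure systematically can be expressed as a fallback chain in which a deferral is an ordinary return value rather than an exception. In the sketch below, each automated stage either returns a label with a confidence or declines the case; when no stage is confident enough, the case ends in a clinician queue together with a record of why each stage passed on it. The stage functions, labels, and the 0.9 threshold are hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple

# A stage returns (label, confidence), or None when it cannot process the case at all.
Stage = Callable[[dict], Optional[Tuple[str, float]]]


@dataclass(frozen=True)
class Outcome:
    handled_by: str       # stage name, or "clinician_review" if every stage deferred
    label: Optional[str]  # None when no automated stage produced an accepted label
    reason: str           # audit trail explaining the route taken


def run_with_fallback(case: dict, stages: Sequence[Tuple[str, Stage]],
                      min_confidence: float = 0.9) -> Outcome:
    """Try each stage in order; deferral is a normal outcome, never an exception."""
    reasons = []
    for name, stage in stages:
        result = stage(case)
        if result is None:
            reasons.append(f"{name}:cannot_process")
            continue
        label, confidence = result
        if confidence >= min_confidence:
            return Outcome(name, label, "accepted")
        reasons.append(f"{name}:low_confidence({confidence:.2f})")
    return Outcome("clinician_review", None, "; ".join(reasons))


def primary_model(case: dict) -> Optional[Tuple[str, float]]:
    return ("positive", 0.62)  # hypothetical model that is unsure about this case


def secondary_model(case: dict) -> Optional[Tuple[str, float]]:
    # Hypothetical fallback that only works when a prior exam is available.
    if not case.get("has_prior_exam"):
        return None
    return ("positive", 0.95)


stages = [("primary", primary_model), ("secondary", secondary_model)]
print(run_with_fallback({"has_prior_exam": True}, stages))
print(run_with_fallback({"has_prior_exam": False}, stages))
```

Keeping the `reason` trail makes every deferral auditable, which matters when clinicians need to understand why a case landed in their queue.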

Why Failure-Aware Design Improves Clinical Reliability

Incorporating uncertainty into decision making has been reported to improve decision quality in multiple domains. Studies in medical imaging suggest that deferring uncertain cases to human experts can improve overall system accuracy beyond either standalone AI or human performance. Other research reports that calibrated uncertainty estimation substantially reduces high-confidence errors, which are often the most dangerous in clinical settings.

The key insight is that improvement does not come from increasing model accuracy alone. It comes from improving decision allocation. The system becomes more reliable not by answering more questions, but by knowing which questions it should not answer.
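The allocation argument can be checked with simple arithmetic. The sketch below computes the accuracy of a human-AI team in which the AI keeps cases at or above a confidence threshold and defers the rest. The eight cases are invented solely to show the mechanics; they are arranged so that the AI's errors sit at low confidence and the human's errors sit elsewhere, which is exactly the condition under which deferral helps. With real data, the gain depends on how well confidence separates the AI's errors and on how accurate clinicians are on the deferred cases.

```python
from typing import Sequence


def team_accuracy(
    ai_confidence: Sequence[float],
    ai_correct: Sequence[bool],
    human_correct: Sequence[bool],
    threshold: float,
) -> tuple[float, float]:
    """Return (team accuracy, deferral rate) when the AI defers cases below threshold."""
    n = len(ai_confidence)
    correct = 0
    deferred = 0
    for conf, ai_ok, human_ok in zip(ai_confidence, ai_correct, human_correct):
        if conf >= threshold:
            correct += int(ai_ok)     # AI decides this case
        else:
            deferred += 1
            correct += int(human_ok)  # clinician decides this case
    return correct / n, deferred / n


# Invented toy cases, for illustration only.
ai_conf = [0.99, 0.97, 0.95, 0.93, 0.60, 0.55, 0.52, 0.51]
ai_ok = [True, True, True, True, False, True, False, False]
human_ok = [False, True, True, False, True, True, True, True]

print(team_accuracy(ai_conf, ai_ok, human_ok, threshold=0.0))   # AI alone: (0.625, 0.0)
print(team_accuracy(ai_conf, ai_ok, human_ok, threshold=1.01))  # human alone: (0.75, 1.0)
print(team_accuracy(ai_conf, ai_ok, human_ok, threshold=0.9))   # team: (1.0, 0.5)
```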

The Trade-Off Between Automation and Safety

Failure-aware systems introduce an inherent trade-off. As uncertainty thresholds become more conservative, the number of automated decisions decreases. However, this reduction in automation is not necessarily a drawback in clinical contexts.
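The trade-off can be made visible by sweeping the confidence threshold and recording two numbers at each setting: coverage (the fraction of cases the system decides automatically) and selective error (the error rate among those automated cases). Plotted against each other, these form a risk-coverage curve. The function name and data below are toy choices meant only to show the mechanics.

```python
from typing import Sequence


def risk_coverage(
    confidences: Sequence[float], correct: Sequence[bool], thresholds: Sequence[float]
) -> list[tuple[float, float, float]]:
    """For each threshold, return (threshold, coverage, selective error rate)."""
    n = len(confidences)
    rows = []
    for t in thresholds:
        kept = [ok for c, ok in zip(confidences, correct) if c >= t]
        coverage = len(kept) / n
        # Error rate among automated (non-deferred) cases; 0.0 when nothing is automated.
        risk = (len(kept) - sum(kept)) / len(kept) if kept else 0.0
        rows.append((t, coverage, risk))
    return rows


confs = [0.99, 0.97, 0.95, 0.93, 0.60, 0.55, 0.52, 0.51]
hits = [True, True, True, False, False, True, False, False]
for t, cov, risk in risk_coverage(confs, hits, [0.5, 0.7, 0.95]):
    print(f"threshold={t:.2f} coverage={cov:.3f} selective_error={risk:.2f}")
```

On this toy data, raising the threshold from 0.5 to 0.95 lowers coverage from 1.0 to 0.375 while the selective error falls from 0.5 to 0. Choosing an operating point on that curve is a clinical decision, not a purely technical one.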

In healthcare, the cost of an incorrect decision often outweighs the cost of delayed decision making. Therefore, systems must be evaluated not only on throughput but also on risk-adjusted reliability. A system that defers appropriately is not less capable. It is more aligned with clinical safety requirements.
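One way to make risk-adjusted reliability concrete is to attach costs to outcomes, as in the classical Chow reject rule. If an automated error costs C_error and a deferral costs C_defer, acting on a prediction whose calibrated probability of being correct is p has expected cost (1 - p) × C_error, so deferring is cheaper whenever p < 1 - C_defer / C_error. The sketch below assumes calibrated probabilities and uses made-up cost values; real costs would have to come from clinical stakeholders, and the function names are illustrative.

```python
def defer_threshold(cost_error: float, cost_defer: float) -> float:
    """Confidence below which deferring has lower expected cost than acting.

    Acting on a prediction with calibrated probability p of being correct costs
    (1 - p) * cost_error in expectation; deferring costs cost_defer. Deferring is
    cheaper when p < 1 - cost_defer / cost_error (a Chow-style reject rule).
    """
    if cost_error <= 0 or cost_defer < 0:
        raise ValueError("cost_error must be positive and cost_defer non-negative")
    return max(0.0, 1.0 - cost_defer / cost_error)


def choose_action(p: float, cost_error: float, cost_defer: float) -> tuple[str, float]:
    """Return the cheaper action for calibrated confidence p and its expected cost."""
    act_cost = (1.0 - p) * cost_error
    if act_cost > cost_defer:
        return "defer", cost_defer
    return "act", act_cost


# Hypothetical costs: an automated error is treated as 20x as costly as a clinician review.
print(defer_threshold(cost_error=20.0, cost_defer=1.0))      # approximately 0.95
print(choose_action(0.90, cost_error=20.0, cost_defer=1.0))  # ('defer', 1.0)
print(choose_action(0.99, cost_error=20.0, cost_defer=1.0))  # act, expected cost about 0.2
```

As review becomes more expensive relative to an error, the threshold falls and the system automates more. The rule is only as trustworthy as the calibration of p, which ties it directly to the miscalibration problem described earlier.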

Toward Next-Generation Medical AI Systems

The current generation of medical AI systems is optimized for predictive performance on static datasets. The next generation must be optimized for robust decision making under uncertainty.

This requires a shift in evaluation philosophy. Instead of asking how accurate a model is under ideal conditions, we must ask how it behaves when data is incomplete, when distributions shift, and when its internal confidence is low.
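This evaluation shift can be sketched as reporting metrics per operating condition instead of one pooled score. The helper below assumes each evaluation record carries a hypothetical condition tag (for example "in_distribution" or "new_scanner") and reports, for each slice, how often the system automated a decision and how accurate those automated decisions were. The record fields and toy records are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class EvalRecord:
    condition: str   # hypothetical slice tag, e.g. "in_distribution" or "new_scanner"
    automated: bool  # True if the system acted without deferring
    correct: bool    # whether the automated decision was right (ignored if deferred)


@dataclass(frozen=True)
class SliceReport:
    n: int
    automation_rate: float
    automated_accuracy: float  # NaN when no case in the slice was automated


def report_by_condition(records: Iterable[EvalRecord]) -> dict[str, SliceReport]:
    """Group evaluation records by condition and summarize each slice."""
    groups: dict[str, list[EvalRecord]] = defaultdict(list)
    for r in records:
        groups[r.condition].append(r)
    report: dict[str, SliceReport] = {}
    for condition, rs in sorted(groups.items()):
        auto = [r for r in rs if r.automated]
        accuracy = sum(r.correct for r in auto) / len(auto) if auto else float("nan")
        report[condition] = SliceReport(len(rs), len(auto) / len(rs), accuracy)
    return report


records = [
    EvalRecord("in_distribution", True, True),
    EvalRecord("in_distribution", True, True),
    EvalRecord("in_distribution", True, False),
    EvalRecord("in_distribution", False, False),
    EvalRecord("new_scanner", True, False),
    EvalRecord("new_scanner", False, False),
    EvalRecord("new_scanner", False, False),
    EvalRecord("new_scanner", False, False),
]
for condition, summary in report_by_condition(records).items():
    print(condition, summary)
```

A single pooled accuracy would hide that the system behaves very differently on the shifted slice. Reporting automation rate alongside accuracy also shows whether the system defers more when conditions change, which is the behavior a failure-aware design is meant to produce.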

In medicine, uncertainty is not an edge case. It is a constant operational condition. Clinicians are trained to recognize and manage it continuously. AI systems must be designed with the same principle. The most reliable system is not the one that always produces a prediction. It is the one that understands when it should not.

References

- [1] McKinney et al., Nature Medicine, 2019

- [2] Wynants et al., BMJ, 2020

Failure-Aware Medical AI: A System Architecture for Uncertainty-Driven Clinical Decision Making was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.