DeepSeek V4 Just Launched on Huawei Chips First — No Nvidia Required.

Here’s Why That Changes Everything.

1.6 trillion parameters. 1 million context. Running natively on Ascend. Jensen Huang’s “horrible outcome” just became reality.

On April 24, DeepSeek dropped a bomb.

It wasn’t the 1.6 trillion parameters. It wasn’t the 1 million token context window. It wasn’t even the 93.5% pass rate on LiveCodeBench that put it ahead of Claude Opus 4.6 and Gemini 3.1 Pro.

It was this: Huawei Ascend gets it first.

Not “compatible.” Not “community port.” Debuted.

Nvidia CEO Jensen Huang once said in a podcast interview:

“The day that DeepSeek comes out on Huawei first, that is a horrible outcome for our nation.”

That day is today.

How Good Is V4? Let’s Start With Numbers

DeepSeek V4 ships in two sizes:

- V4-Pro: 1.6T total params, 49B active, MoE architecture, pretrained on 33T tokens

- V4-Flash: 284B total params, 13B active, pretrained on 32T tokens

Both support a 1M context window. Not extended context — native million-token support.

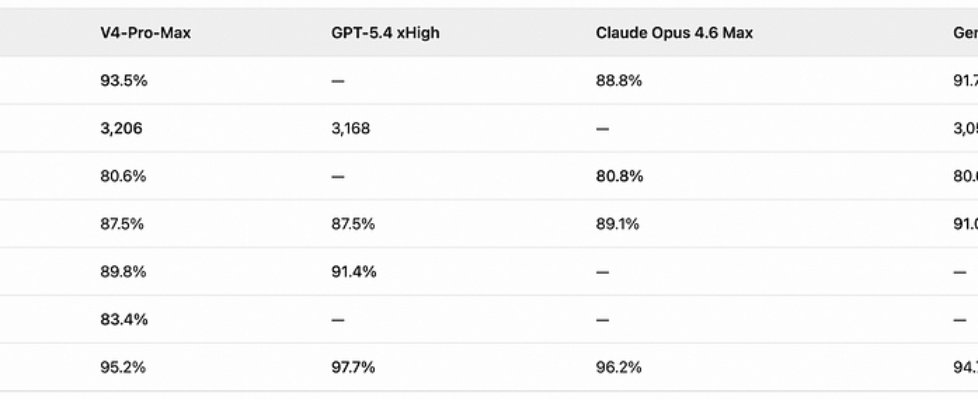

Here’s what V4-Pro-Max (maximum reasoning effort) scores against the best in the world:

Flash isn’t slouching either. V4-Flash Think Max hits 91.6% on LiveCodeBench and 3,052 on Codeforces — and that’s the 284B/13B-active “lightweight” variant.

In an internal survey of 85 experienced DeepSeek developers and researchers, over 90% said V4-Pro is already their primary or near-primary coding model. On agentic coding tasks, V4-Pro-Max achieved a 67% pass rate, beating Sonnet 4.5’s 47% and trailing only Opus 4.6 Thinking at 80%.

Bottom line: On coding and math — the two hardest benchmarks — V4-Pro-Max is the strongest open-weight model of 2026. On several benchmarks, it outright beats GPT-5.4 and Claude Opus 4.6.

Now Look at the Price

This is where DeepSeek gets truly terrifying.

V4-Flash input costs 1/18th of GPT-5.4. 1/36th of Claude Opus 4.6.

V4-Pro output costs $3.48 versus $25 for Claude Opus 4.6: roughly 7x cheaper, with matching or superior performance on multiple benchmarks.
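To make the multiple concrete, here is a minimal sketch of the arithmetic. It assumes the two output prices are $3.48 for V4-Pro and $25 for Claude Opus 4.6, both quoted for the same token volume; the function name is illustrative only.

```python
# Output prices in USD, assumed to be quoted for the same token volume.
V4_PRO_OUTPUT = 3.48
OPUS_OUTPUT = 25.00


def price_ratio(expensive: float, cheap: float) -> float:
    """Return how many times more the expensive option costs."""
    return expensive / cheap


ratio = price_ratio(OPUS_OUTPUT, V4_PRO_OUTPUT)
print(f"Claude Opus 4.6 output costs about {ratio:.1f}x V4-Pro output")
```

The exact ratio lands a little above 7, which is where the "7x cheaper" shorthand comes from.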

This isn’t “great value for money.” This is a pricing power reset.

But None of That Is the Real Story. This Is: No Nvidia.

For fifteen years, the rules of frontier AI were simple — you need Nvidia GPUs. CUDA + H100 + NVLink. That was the only game in town.

DeepSeek V4 just broke that rule.

On the same day, Huawei announced that its full lineup of Ascend super nodes — Ascend 950, A2, and A3 — supports V4 out of the box. Not “coming soon.” Not a community effort. Official day-one support.

DeepSeek and Huawei engaged in deep “chip-model technical collaboration,” jointly defining the Ascend super node architecture. V4’s entire inference stack runs natively on Huawei’s CANN (Compute Architecture for Neural Networks) platform.

To understand the weight of this moment, you need two pieces of context: where Huawei’s chips have gotten to, and where U.S. export controls have pushed things.

Context: A Chip Revolution Forced by Sanctions

The American chokehold keeps tightening.

In October 2022, the Biden administration imposed the first AI chip export restrictions on China. The policy has escalated steadily since. In December 2025, Trump allowed H200 exports with a 25% tariff. But just weeks later, on January 14, 2026, Reuters broke the news: Chinese customs agents were told Nvidia H200 chips are not permitted to clear customs. The window for large-scale compliant procurement was narrowing fast.

By April 2026, large-scale compliant procurement of Nvidia’s high-end chips is effectively dead. Gray-market channels still exist, but no serious infrastructure plan can be built on gray markets.

Meanwhile, Huawei’s chip roadmap is accelerating.

Several specs on the 950 are worth unpacking:

Multi-chip module (MCM) architecture. Two compute dies + two I/O dies, manufactured by SMIC on an advanced process node (5nm equivalent), no EUV lithography required. Industry analysts estimate yields above 80%. Huawei has worked around the lithography bottleneck.

Proprietary LingQu interconnect. Bandwidth of 2TB/s, exceeding Nvidia NVLink 5.0’s 1.8TB/s. Latency at 2.1 microseconds.

Native FP4 inference. The Ascend 950PR is the world’s first chip with hardware-native FP4 support, reducing memory footprint by 75%. And V4 happens to be one of the first trillion-parameter models to deploy FP4 at scale — the chip and the model are precision-matched.

Massive cluster scale. Atlas 950 SuperPod scales to 8,192 chips / 8 EFLOPS (FP8), expandable up to 524,000 chips / 524 EFLOPS.
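Two of the figures above are easy to sanity-check with a few lines of arithmetic. The sketch below assumes the 75% memory reduction is measured against a 16-bit baseline (the article does not name the baseline) and ignores quantization scale factors and other metadata. The per-chip throughput is simply the quoted cluster total divided by the quoted chip count.

```python
BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}


def weight_terabytes(n_params: float, fmt: str) -> float:
    """Raw weight storage in TB (10**12 bytes), ignoring scales and metadata."""
    return n_params * BITS_PER_PARAM[fmt] / 8 / 1e12


def reduction(baseline: str, target: str) -> float:
    """Fraction of memory saved when moving from baseline to target precision."""
    return 1 - BITS_PER_PARAM[target] / BITS_PER_PARAM[baseline]


def per_chip_pflops(cluster_eflops: float, chips: int) -> float:
    """Implied per-chip throughput, given a cluster total in EFLOPS."""
    return cluster_eflops * 1000 / chips


V4_PRO_PARAMS = 1.6e12  # total parameters, per the article
for fmt in BITS_PER_PARAM:
    print(f"{fmt}: {weight_terabytes(V4_PRO_PARAMS, fmt):.1f} TB of weights")
print(f"FP16 -> FP4 reduction: {reduction('FP16', 'FP4'):.0%}")
print(f"8,192-chip SuperPod: {per_chip_pflops(8, 8192):.2f} PFLOPS per chip")
print(f"524,000-chip build: {per_chip_pflops(524, 524_000):.2f} PFLOPS per chip")
```

Under these assumptions both cluster sizes imply roughly 1 PFLOPS of FP8 per chip, so the headline numbers scale linearly with chip count.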

Meanwhile, China’s AI chip self-sufficiency rate is climbing fast. According to IDC, domestic chip makers captured over 40% of the Chinese AI accelerator market in 2025, shipping approximately 1.65 million units. Huawei Ascend ranks #1 among domestic brands. Nvidia’s China share has dropped from 70%+ at its peak to roughly 55%.

It’s not just Huawei. ByteDance plans to spend over ¥160 billion ($22B+) on AI infrastructure in 2026 (up from ¥150B in 2025), becoming Cambricon’s largest customer. Alibaba’s DAMO/T-Head chip division generates annual revenue in the tens of billions of yuan. At least 9 Chinese AI chip companies shipped more than 10,000 accelerators.

A clear trend line is emerging: It’s not that China wants to replace Nvidia. It’s that U.S. sanctions forced China to replace Nvidia. And the replacement is accelerating.

V4’s Architecture Wasn’t Built for Nvidia

V4 isn’t just “bigger V3.” It was systematically redesigned across several layers, and every redesign points in the same direction: making the model run efficiently on non-Nvidia hardware.

1. MoE Routing: 256 Experts, Only 49B Active

V4-Pro inherits V3’s MoE design: each layer contains 1 shared expert (always active) + 256 routed experts. But the routing strategy has been significantly upgraded.

Shallow blocks use hash-based deterministic routing. Deep blocks use learned routing. The system employs auxiliary-loss-free load balancing with lightweight sequence-level penalties. Router weights are quantized to FP4.

Result: out of 1.6T parameters, only 49B activate during inference. V4-Flash is smaller still: 284B parameters, 13B active, using roughly 10% of V3.2’s per-token compute and 7% of its KV cache at 1M tokens.
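The difference between the two routing styles is easier to see in code. This is a toy sketch, not DeepSeek’s implementation. The top-k value of 8, the SHA-256 hash, and both function names are assumptions made for illustration. Only the count of 256 routed experts comes from the text above.

```python
import hashlib

N_EXPERTS = 256  # routed experts per layer, per the article
TOP_K = 8        # hypothetical; the article does not state the top-k value


def hash_route(token_id: int, k: int = TOP_K, n_experts: int = N_EXPERTS) -> list[int]:
    """Deterministic routing: the chosen experts depend only on the token id."""
    chosen: list[int] = []
    salt = 0
    while len(chosen) < k:
        digest = hashlib.sha256(f"{token_id}:{salt}".encode()).digest()
        expert = int.from_bytes(digest[:4], "big") % n_experts
        if expert not in chosen:  # skip collisions so we get k distinct experts
            chosen.append(expert)
        salt += 1
    return chosen


def learned_route(hidden: list[float], router: list[list[float]], k: int = TOP_K) -> list[int]:
    """Learned routing: score every expert with a dot product, keep the top k."""
    scores = [sum(w * h for w, h in zip(row, hidden)) for row in router]
    return sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:k]


print(hash_route(42))  # same token id always maps to the same experts
print(learned_route([2.0, 1.0], [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]], k=2))
```

Hash routing needs no trained router and is balanced by construction, which is one plausible reason to use it in shallow blocks. Learned routing can specialize on content, at the cost of needing load balancing.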

2. Dual-Mode Compressed Attention: CSA + HCA

This is V4’s most significant architectural innovation.

CSA (Compressed Sparse Attention) handles long-range dependencies. It bundles every m tokens into a single KV entry and uses an FP4 lightning indexer for top-k block selection. Think of it as “global skim” — scanning million-token contexts at minimal compute cost.
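A rough sketch of the “global skim” idea follows. It assumes the m tokens are bundled by mean pooling and that blocks are scored with a plain dot product. The article specifies neither, and the real indexer runs in FP4 rather than Python floats. Function names are hypothetical.

```python
def compress_kv(keys: list[list[float]], m: int) -> list[list[float]]:
    """Bundle every m consecutive key vectors into one entry (mean pooling, an assumption)."""
    blocks = []
    for start in range(0, len(keys), m):
        group = keys[start:start + m]
        dim = len(group[0])
        blocks.append([sum(vec[d] for vec in group) / len(group) for d in range(dim)])
    return blocks


def select_blocks(query: list[float], blocks: list[list[float]], k: int) -> list[int]:
    """Score each compressed block against the query and keep the k best, in position order."""
    scores = [sum(q * b for q, b in zip(query, block)) for block in blocks]
    top = sorted(range(len(blocks)), key=lambda i: scores[i], reverse=True)[:k]
    return sorted(top)


keys = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [-1.0, 0.0], [-1.0, 0.0]]
blocks = compress_kv(keys, m=2)          # 6 tokens become 3 compressed entries
picked = select_blocks([1.0, 0.5], blocks, k=2)
print(blocks, picked)
```

The saving comes from two places: the query scores one entry per m tokens instead of every token, and full attention is then spent only on the selected blocks.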

HCA (Hybrid Chunked Attention) handles local fine-grained attention. It splits the sequence into chunks, computes full attention within each chunk, and connects chunks via sparse mechanisms. This is “local deep read.”

The two mechanisms alternate across layers, both incorporating sliding-window branches for local precision.
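The “local deep read” side can be pictured as an attention mask. The sketch below assumes causal attention and models only two ingredients named above: full attention inside a chunk, plus a sliding window that lets a token see its nearest neighbors across a chunk boundary. The sparse cross-chunk links are left out, and the chunk and window sizes are arbitrary.

```python
def local_mask(seq_len: int, chunk: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j."""
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            causal = j <= i                        # assumption: no looking ahead
            same_chunk = i // chunk == j // chunk  # full attention within a chunk
            in_window = i - j < window             # sliding-window branch
            row.append(causal and (same_chunk or in_window))
        mask.append(row)
    return mask


mask = local_mask(seq_len=6, chunk=3, window=2)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

In the printed grid, position 3 starts a new chunk but can still see position 2 through the window, which is the kind of boundary smoothing a sliding-window branch provides.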

The result at 1M tokens:

- V4-Pro per-token compute: 27% of V3.2

- V4-Flash per-token compute: 10% of V3.2

- KV cache: 10% and 7%, respectively

- MRCR (long-context retrieval) score: 92.9

A comment on Reddit’s r/LocalLLaMA put it well: “The CSA + HCA approach is interesting because it decouples local attention from global context compression.”

3. Manifold-Constrained Hyper-Connections (mHC)

Traditional residual connections are simple: h_{l+1} = h_l + F_l(h_l). Stable, but expressively limited.

DeepSeek’s mHC (arXiv 2512.24880) replaces this with manifold-constrained signal propagation — confining gradient flow to specific geometric manifolds that balance stability and expressiveness. The team describes it as “mHC as a flexible and practical replacement for residual connections.”
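To give a feel for what “constraining to a manifold” can mean, here is a small illustration. It assumes several parallel residual streams mixed by a matrix, and it assumes the constraint is that this matrix be doubly stochastic (every row and column sums to 1), enforced by Sinkhorn-style normalization. That choice is an assumption for the sketch, not a detail taken from the article.

```python
import math


def sinkhorn(logits: list[list[float]], iters: int = 50) -> list[list[float]]:
    """Push a square matrix toward doubly stochastic form by alternating normalization."""
    m = [[math.exp(x) for x in row] for row in logits]  # make every entry positive
    n = len(m)
    for _ in range(iters):
        m = [[x / sum(row) for x in row] for row in m]                # rows sum to 1
        col = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col[j] for j in range(n)] for i in range(n)]  # columns sum to 1
    return m


def mix_streams(streams: list[list[float]], mixing: list[list[float]]) -> list[list[float]]:
    """new_stream[i] = sum over j of mixing[i][j] * streams[j]."""
    dim = len(streams[0])
    n = len(streams)
    return [[sum(mixing[i][j] * streams[j][d] for j in range(n)) for d in range(dim)]
            for i in range(len(mixing))]


mixing = sinkhorn([[0.0, 1.0], [2.0, 0.5]])
streams = [[1.0, 2.0], [3.0, -4.0]]
mixed = mix_streams(streams, mixing)
print(mixing, mixed)
```

Because each column sums to 1, the total signal across streams is unchanged by the mixing step. That is the kind of stability property a plain residual connection gives for free and an unconstrained mixing matrix does not.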

4. FP4/FP8 Mixed Precision

V4 is among the first trillion-parameter models to deploy FP4 at scale:

- Pretraining uses FP4 quantization-aware training (QAT)

- MoE router weights: quantized to FP4

- Lightning Indexer (long-context component): runs at FP4

- Base weights: FP8; instruct weights: FP4 for MoE experts, FP8 elsewhere
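For readers who have not worked with 4-bit floats, the sketch below shows what rounding to FP4 looks like. It assumes the common E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) and a single scale per block of values. The article does not say which FP4 variant or block size V4 uses, so treat both as assumptions.

```python
# Magnitudes representable in E2M1 (assumed format); sign is stored separately.
FP4_E2M1 = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def quantize_block(values: list[float]) -> tuple[float, list[float]]:
    """Return (scale, codes): each code is a signed value from the FP4 grid."""
    peak = max(abs(v) for v in values)
    scale = peak / FP4_E2M1[-1] if peak > 0 else 1.0  # map the largest value to 6.0
    codes = []
    for v in values:
        target = abs(v) / scale
        mag = min(FP4_E2M1, key=lambda g: abs(target - g))  # round to nearest grid point
        codes.append(mag if v >= 0 else -mag)
    return scale, codes


def dequantize_block(scale: float, codes: list[float]) -> list[float]:
    """Recover approximate values from the scale and FP4 codes."""
    return [c * scale for c in codes]


scale, codes = quantize_block([0.1, -0.6, 1.2, 3.0])
print(scale, codes, dequantize_block(scale, codes))
```

With only eight magnitudes available, small values collapse to zero and mid-range values snap to coarse steps. That coarseness is why training with the quantization in the loop (QAT) matters more at FP4 than at FP8.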

This maps perfectly to Ascend 950PR’s native FP4 hardware support — the chip processes FP4 operations directly, no conversion overhead, 75% memory reduction.

5. Three Reasoning Gears

V4 offers three reasoning modes — Non-think (direct output), Think High (moderate reasoning), and Think Max (maximum effort, chain-of-thought similar to R1). In Think Max, the system prompt instructs the model to “reason with absolute maximum effort, no shortcuts allowed.”

All those eye-popping benchmark numbers above? Think Max mode.

A Training-Scale Leap

V4 was pretrained on over 33 trillion tokens, about 2.2x V3’s 14.8T. For context, Llama 3.1 405B used 15.6T. This is one of the largest publicly disclosed pretraining datasets to date.

Training employed a Muon + AdamW hybrid optimizer, expectation-based routing to suppress loss spikes, and SwiGLU truncation clamped to [-10, 10]. Post-training combined SFT, GRPO, and online distillation across ten teacher models.
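The SwiGLU clamp is the easiest of these tricks to show. The sketch below assumes the clamp is applied to both the gate and the up-projection values before they are combined; the article gives only the range [-10, 10], not the exact placement.

```python
import math

CLAMP = 10.0  # range quoted in the article


def clamp(x: float, lo: float = -CLAMP, hi: float = CLAMP) -> float:
    """Restrict x to the interval [lo, hi]."""
    return max(lo, min(hi, x))


def silu(x: float) -> float:
    """SiLU (swish) activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def swiglu_clamped(gate: list[float], up: list[float]) -> list[float]:
    """Elementwise SwiGLU, silu(gate) * up, with both inputs clamped first (an assumption)."""
    return [silu(clamp(g)) * clamp(u) for g, u in zip(gate, up)]


print(swiglu_clamped([0.5, 1000.0, -1000.0], [2.0, 1000.0, 1.0]))
```

Without the clamp, a single outlier activation can produce an arbitrarily large product, and in low-precision formats that quickly overflows. Bounding both inputs caps the output magnitude at about 100.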

All of these design choices converge on one conclusion: When you compress inference compute to a quarter of its predecessor, when FP4 precision is natively supported, when KV cache drops below one-tenth — you no longer need the world’s fastest chip. You need the most efficient one, precisely matched to these characteristics.

And the Ascend 950 is exactly that.

CANN vs. CUDA: The Ecosystem Battle

Chip-level catch-up matters. But the deeper battlefield is the software ecosystem.

How deep is CUDA’s moat? Launched in 2006, nearly 20 years of accumulation, 5 million+ developers, native support in virtually every major AI framework. When you say “do AI,” the subtext is “write CUDA.” This isn’t replaceable in a year or two.

Where has CANN gotten? Launched in 2019, now boasting 4 million+ developers, 3,000+ partners, and 35K+ GitHub stars. Went fully open-source in August 2025, completed architecture overhaul by December. CANN 8.0 offers 200+ deeply optimized base operators, 80+ fused operators, and 100+ communication matrix APIs.

The gap is real. Migrating from CUDA to CANN requires “extensive code rewrites.” ChinaTalk reported that CANN developer documentation lagged behind (some still outdated in early 2025), and debugging experiences weren’t smooth. Running one model on a framework versus sustaining millions of developers’ daily workflows are challenges of entirely different magnitudes.

But the pace of catch-up is also real. Four million developers is not a number you can dismiss. Full open-sourcing means community-driven improvements are now underway.

And DeepSeek V4’s Ascend-first launch means far more to this ecosystem than one model — it is the first trillion-parameter flagship to officially endorse CANN. According to reports, V4’s inference speed on Ascend improved 35x compared to initial unoptimized versions, with energy consumption down approximately 40%. The 950PR delivers 2.87x the per-chip performance of the Nvidia H20 (China’s export-restricted, downgraded variant).

DeepSeek itself was blunt:

“Due to limited high-end compute, V4-Pro throughput is currently constrained. We expect prices to drop significantly once Ascend 950 super nodes ship at scale in H2 2026.”

V4-Pro’s scale-out roadmap is 100% bet on Huawei. Not Nvidia.

The Global Picture: Who’s Nervous?

Nvidia, obviously. The company controls 80%+ of the global AI training chip market. But in China — one of the world’s largest AI chip demand markets — it’s already losing ground. China share has dropped from 70%+ to roughly 55%. Inference-side erosion is even faster.

What worries Nvidia more than market share is the loosening of ecosystem lock-in. Previously, even when China couldn’t buy the latest chips, developers still coded in CUDA — because there was no alternative. V4’s Ascend-first launch is changing that premise.

Other Chinese AI companies are moving too. ByteDance plans to spend ¥160B+ on AI infrastructure in 2026, becoming Cambricon’s top customer. Alibaba’s T-Head chip division generates tens of billions in annual revenue. Baidu’s Kunlun chip line holds meaningful domestic share. The entire Chinese AI industry is accelerating its migration to domestic silicon.

The geopolitical irony is hard to miss. U.S. chip export controls were designed to constrain Chinese AI development. But from 2022 to 2026, four years of sanctions have produced: a domestic AI chip industry shipping 1.65 million units annually, a CANN ecosystem with 4 million developers, and China’s most powerful AI model choosing to debut on Huawei over Nvidia.

Jensen Huang clearly sees this. His “horrible outcome” wasn’t a throwaway line — if V4’s Ascend-first launch becomes a trend, if more frontier models begin optimizing for CANN, Nvidia doesn’t just lose Chinese revenue. It loses CUDA’s status as the world’s sole AI development standard.

Reality Check: Don’t Pop the Champagne Yet

Amid the milestone, several hard constraints deserve honest acknowledgment:

Training vs. inference is a chasm. V4’s Ascend debut is on the inference side. Large-model training demands an order of magnitude more chip performance, interconnect bandwidth, and software stack maturity. There’s no public confirmation that V4 was trained entirely on domestic silicon. The Ascend 950PR’s FP8 performance sits at 1 PFLOPS, while Nvidia’s B200 hits 4.5 PFLOPS. The gap remains real.

CANN’s ecosystem depth. Four million developers is encouraging, but CUDA has 5 million+ with a 20-year head start in libraries and tooling. Migration from CUDA requires extensive code rewrites. Developer debugging experience, third-party library support — all still lag. Running one model versus sustaining a thriving ecosystem are different propositions entirely.

Supply chain is the hard constraint. DeepSeek acknowledged V4-Pro throughput is limited, pending Ascend 950 volume shipments. The 4-die MCM architecture is estimated to yield above 80%, but large-scale manufacturing capacity remains unproven. Whether the 960 (2027) and 970 (2028) can ship on schedule will directly determine medium-term competitiveness.

Performance gaps are shrinking but persist. The Ascend 910C delivers ~60% of H100 inference performance. The 950PR at 1 PFLOPS FP8 versus B200’s 4.5 PFLOPS. Huawei is closing the gap through efficiency and architectural innovation, but raw compute still shows a generational lag. V4 can debut on Ascend largely because its compressed attention architecture slashed inference compute requirements — a “brute-force” model might not replicate this path.

The Bottom Line

DeepSeek V4 + Huawei Ascend-first launch isn’t a PR stunt. It isn’t a policy-mandated choice. It’s the result of deliberate technical co-design.

From the model side: V4’s CSA/HCA compressed attention cuts inference compute to 27% of its predecessor. FP4 mixed precision natively matches Ascend 950’s hardware capabilities. MoE routing constrains the active parameter count to 49B out of 1.6T.

From the chip side: Ascend 950’s 4-die MCM architecture works around lithography restrictions. LingQu interconnect exceeds NVLink 5.0. World-first FP4 hardware support precisely maps to V4’s quantization strategy.

From the ecosystem side: CANN just proved itself at the trillion-parameter scale for the first time. A 4-million-developer ecosystem just received its strongest confidence injection ever.

Jensen Huang called it a “horrible outcome.” For Nvidia, it certainly isn’t good news.

But for the diversification of global AI compute?

This may be one of the most consequential technology events of 2026. Not because domestic chips “caught up” with Nvidia — they haven’t. But because a trillion-parameter frontier model just proved, for the first time, that you don’t need Nvidia. And that changes everything.

Sources: DeepSeek official release, Huawei official announcements, Reuters, IDC reports, arXiv papers (2512.24880, DeepSeek-V3 Technical Report), and multiple technology publications. All data cited from publicly available sources.

Originally published in Towards AI on Medium.