Agents as Tools vs Handoffs: Understanding the Two Patterns Behind Modern AI Systems

Multi-agent systems are quickly becoming the backbone of modern AI applications, especially in areas like assistants, copilots, and customer support systems. Instead of relying on a single general-purpose model, systems are now composed of multiple specialized agents that collaborate to solve tasks. This shift is not just about capability, but about structure. As complexity increases, the way these agents are orchestrated begins to matter more than the agents themselves.

Among the various orchestration strategies that have emerged, two patterns consistently stand out: agents as tools and handoffs. These are not just implementation details, but fundamentally different ways of thinking about control, responsibility, and flow within an AI system.

While they are often discussed separately, understanding them together reveals a more complete picture of how scalable AI systems are actually built.

The Agents-as-Tools Pattern: Centralized Control with Distributed Capability

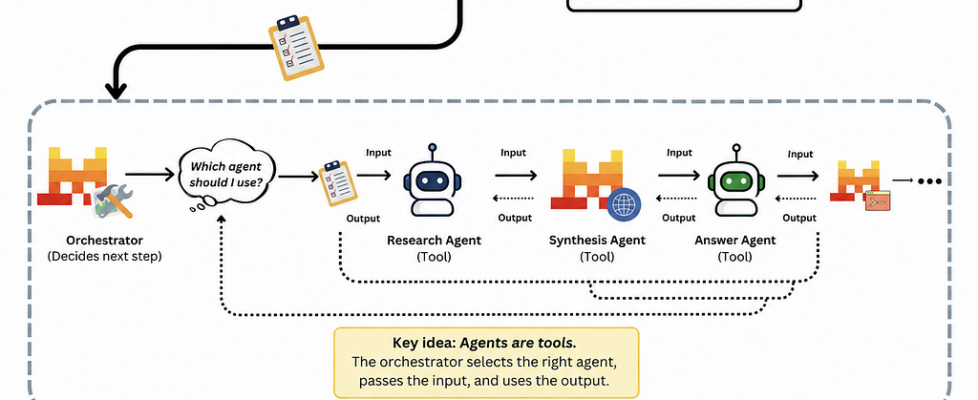

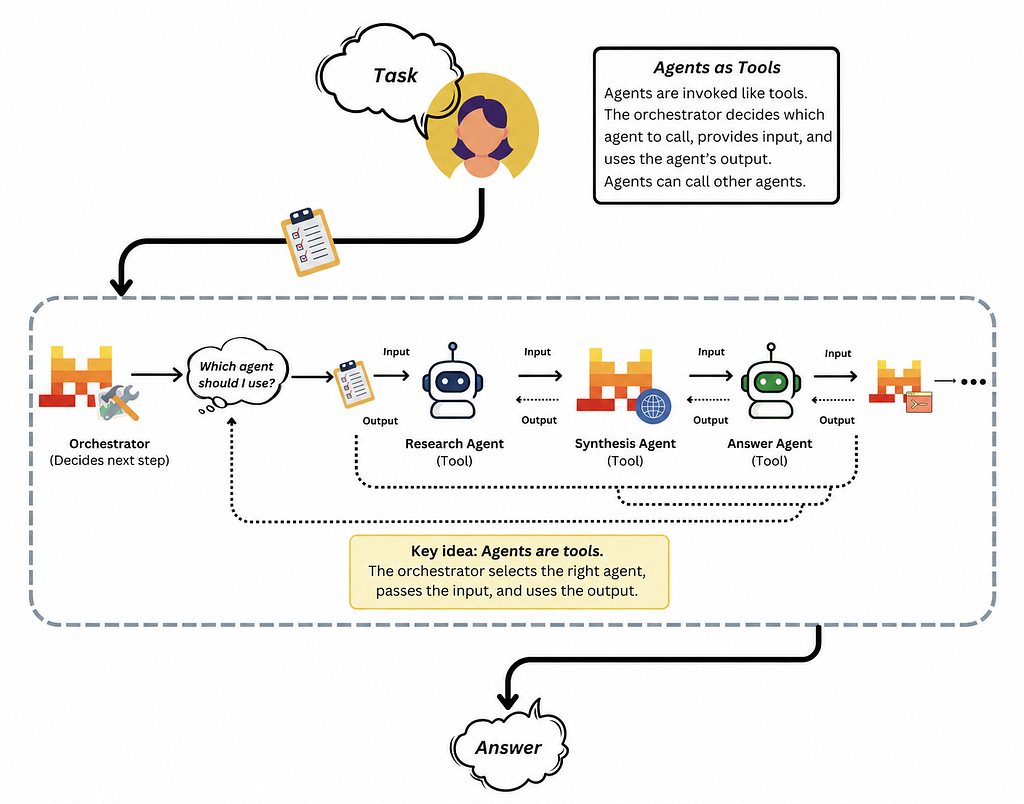

The agents-as-tools pattern, sometimes referred to as the centralized manager model, is built around a simple but powerful idea: one primary agent retains control of the interaction, while other agents are invoked as needed to perform specific subtasks. These secondary agents are not autonomous participants in the conversation; instead, they behave more like callable functions that the primary agent uses to gather information or execute specific operations.

This structure introduces a clear separation between decision-making and execution. The primary agent, often referred to as the orchestrator or manager, is responsible for interpreting the user’s request, deciding which capabilities are required, and integrating the results into a final response. The specialized agents, on the other hand, focus only on their defined roles. They receive input, produce output, and return control.

This approach provides a cohesive user experience because, from the user’s perspective, there is only a single interface. The complexity of multiple agents remains hidden behind the scenes. More importantly, it allows the system to maintain a consistent global context. Since the orchestrator never relinquishes control, it can ensure that all intermediate results are properly combined, filtered, and aligned with the user’s intent.

Consider a customer support scenario where a user asks, “Why was my bill higher this month, and can I change my plan for next month?” In a system built using agents as tools, the primary agent would first interpret the query as containing two distinct intents. It might then invoke a billing analysis agent to examine the invoice and a plan management agent to retrieve available options. Once both results are returned, the primary agent synthesizes them into a single, coherent response.
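The billing scenario above can be sketched in a few lines of plain Python. This is only an illustration under loud assumptions: `billing_agent`, `plan_agent`, `detect_intents`, and `orchestrate` are hypothetical names, the specialists return canned strings instead of calling a model, and intent detection is a toy keyword match where a real orchestrator would let the model decide.

```python
from typing import Callable, Dict, List

# Hypothetical stand-ins for LLM-backed specialists (canned replies, illustrative only).
# Each takes the request, returns a result, and never owns the conversation.
def billing_agent(request: str) -> str:
    return "Bill rose by $12 due to a one-time roaming charge."

def plan_agent(request: str) -> str:
    return "Available plans for next month: Basic, Plus."

# The orchestrator's tool registry: intent name -> callable specialist.
TOOLS: Dict[str, Callable[[str], str]] = {
    "billing": billing_agent,
    "plan": plan_agent,
}

def detect_intents(query: str) -> List[str]:
    """Toy keyword-based intent detection (an assumption, not a real router)."""
    text = query.lower()
    intents = []
    if "bill" in text:
        intents.append("billing")
    if "plan" in text:
        intents.append("plan")
    return intents

def orchestrate(query: str) -> str:
    """Call each required specialist as a tool, then synthesize one reply."""
    results = [TOOLS[intent](query) for intent in detect_intents(query)]
    if not results:
        return "Could you tell me more about what you need?"
    return " ".join(results)

answer = orchestrate(
    "Why was my bill higher this month, and can I change my plan for next month?"
)
print(answer)
```

Note that control returns to `orchestrate` after every call, so the user only ever sees the synthesized answer.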

What makes this pattern particularly effective is its flexibility. The orchestrator is not bound to a predefined sequence of steps; it can dynamically decide which agents to call based on the input. At the same time, this flexibility is grounded in control. Every action passes through a single decision-making layer, making the system easier to reason about, test, and secure.

However, this centralization is also where its limitations begin to appear. The primary agent can become a bottleneck, especially as the number of tools and possible decision paths grows. Its prompt must encode not only task understanding but also routing logic, safety checks, and integration strategies. As complexity increases, this can strain both performance and maintainability. In simpler cases, where a single specialized agent could handle the request directly, routing everything through a central manager may introduce unnecessary overhead.

The Handoff Pattern: Decentralized Flow and Delegated Control

In contrast to the centralized nature of agents as tools, the handoff pattern embraces decentralization. Here, agents are not merely invoked; they can transfer control entirely to another agent. Once a handoff occurs, the receiving agent becomes responsible for continuing the interaction, often with full access to the conversation history and relevant context.

This creates a fundamentally different interaction model. Instead of a single orchestrator coordinating all actions, the system behaves more like a relay, where each agent handles a portion of the task before passing it along. Control flows through the system rather than remaining anchored in one place.

One way to think about this is as a graph of agents, where each node represents a specialist and edges represent possible handoff paths. As the conversation evolves, the system moves from one node to another, guided either by explicit logic or by the agents’ own reasoning.

This pattern is particularly well-suited for scenarios where interactions naturally segment into phases. In a customer support setting, a user might begin with a billing issue, transition into a technical problem, and later inquire about account upgrades. Rather than forcing a single agent to handle all of these domains, the system can hand off the conversation to the most appropriate specialist at each stage. The result is an experience that feels more like interacting with a team than with a single monolithic assistant.
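A minimal sketch of that phased conversation, treating the agents as nodes in a handoff graph. Everything here is an assumption made for illustration: the `HANDOFFS` edges, the `KEYWORDS` used for routing, and the `next_owner` and `run` helpers are hypothetical, and a real system would let each agent's own reasoning decide when to transfer control.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Conversation:
    history: List[str] = field(default_factory=list)  # context carried across handoffs
    trace: List[str] = field(default_factory=list)    # which agent owned each turn

# Hypothetical handoff graph: node -> specialists it may transfer control to.
HANDOFFS: Dict[str, List[str]] = {
    "billing": ["technical", "upgrades"],
    "technical": ["billing", "upgrades"],
    "upgrades": ["billing", "technical"],
}

# Toy routing signal: one keyword per specialist (an assumption).
KEYWORDS: Dict[str, str] = {
    "billing": "invoice",
    "technical": "router",
    "upgrades": "upgrade",
}

def next_owner(current: str, message: str) -> str:
    """Keep control if the message is in scope, else hand off along an edge."""
    text = message.lower()
    if KEYWORDS[current] in text:
        return current
    for candidate in HANDOFFS[current]:
        if KEYWORDS[candidate] in text:
            return candidate
    return current  # no better specialist found: stay put

def run(messages: List[str], start: str = "billing") -> Conversation:
    convo = Conversation()
    owner = start
    for message in messages:
        owner = next_owner(owner, message)
        convo.history.append(message)  # the new owner sees the full history
        convo.trace.append(owner)
    return convo

convo = run([
    "My invoice looks wrong.",
    "Also, my router keeps dropping the connection.",
    "Can I upgrade my account?",
])
print(convo.trace)  # ['billing', 'technical', 'upgrades']
```

Unlike a tool call, nothing returns to a central caller here: whichever agent owns the turn stays in charge until it hands off again.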

The benefits of this approach lie in its modularity and focus. Each agent can be designed with a narrow scope, reducing prompt complexity and improving performance within its domain. Because context is carried forward during handoffs, users do not need to repeat themselves, preserving continuity across interactions. Additionally, the system becomes easier to extend; new capabilities can be added by introducing new agents and defining appropriate handoff paths, without modifying a central controller.

Yet this decentralization introduces its own challenges. Without a single coordinating entity, ensuring consistency across agents becomes more difficult. Information gathered by one agent must be correctly passed along and understood by the next, which is not always guaranteed. Routing decisions can also become a point of failure: if an agent hands off to the wrong specialist, recovering from that mistake may require additional logic or further handoffs.
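One possible shape for that additional logic is a small guard around every transfer. The sketch below is an assumption, not a prescribed design: the `ALLOWED` edges, the `MAX_HOPS` limit, the `triage` fallback agent, and the `apply_handoff` helper are all hypothetical.

```python
from typing import Dict, List

# Hypothetical set of declared handoff paths.
ALLOWED: Dict[str, List[str]] = {
    "triage": ["billing", "technical"],
    "billing": ["triage"],
    "technical": ["triage"],
}
MAX_HOPS = 3  # assumed cap on transfers per conversation

def apply_handoff(current: str, requested: str, hops: int) -> str:
    """Return the agent that should own the next turn."""
    if hops >= MAX_HOPS:
        return "triage"   # too many transfers: fall back to a known entry point
    if requested not in ALLOWED.get(current, []):
        return current    # undeclared edge: reject it and keep control in place
    return requested

print(apply_handoff("triage", "billing", hops=0))     # billing
print(apply_handoff("billing", "technical", hops=1))  # billing (edge not declared)
print(apply_handoff("billing", "triage", hops=3))     # triage (hop limit reached)
```

A guard like this does not prevent a wrong routing decision, but it bounds how far one can propagate.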

There are also performance considerations. Since handoffs typically occur in sequence, each agent must wait for the previous one to complete, which can increase latency. Debugging such systems can be particularly complex, as the logic is distributed across multiple agents, each with its own prompt and behavior. Tracing an issue often requires analyzing the entire chain of interactions rather than a single decision point.

Choosing Between the Two: Control vs Flexibility

At a high level, the distinction between these patterns can be understood as a trade-off between control and flexibility. The agents-as-tools pattern prioritizes centralized decision-making, making it easier to maintain consistency, enforce safety, and produce unified responses. The handoff pattern, on the other hand, prioritizes adaptability, allowing the system to dynamically shift responsibility as the interaction evolves.

In practice, the choice is rarely binary. Most real-world systems benefit from a combination of both approaches. A common design is to use a centralized orchestrator for high-level task management while allowing controlled handoffs within specific subsystems where conversational flow is more dynamic.

For example, an AI assistant might use an orchestrator to determine whether a query relates to billing, technical support, or general information. Once routed to a particular domain, that subsystem could employ handoffs among specialized agents to handle different aspects of the conversation. This hybrid approach leverages the strengths of both patterns while mitigating their weaknesses.
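A rough sketch of such a hybrid, under stated assumptions: the agent functions, the `classify` keyword rules, and the `DOMAINS` table are hypothetical, replies are canned, and a handoff is modeled as one agent returning another agent's reply unchanged.

```python
from typing import Callable, Dict, List, Tuple

Agent = Callable[[str, List[str]], str]

# Inside the billing subsystem, specialists hand off to one another.
def refund_agent(query: str, trace: List[str]) -> str:
    trace.append("refund_agent")
    return "I have opened a refund request."

def invoice_agent(query: str, trace: List[str]) -> str:
    trace.append("invoice_agent")
    if "refund" in query.lower():
        # Handoff: the refund specialist's reply is returned untouched.
        return refund_agent(query, trace)
    return "Here is a breakdown of your invoice."

def tech_agent(query: str, trace: List[str]) -> str:
    trace.append("tech_agent")
    return "Let's restart the device first."

def general_agent(query: str, trace: List[str]) -> str:
    trace.append("general_agent")
    return "Here is some general information."

# Top level: a centralized orchestrator picks the entry agent for each domain.
DOMAINS: Dict[str, Agent] = {
    "billing": invoice_agent,
    "technical": tech_agent,
    "general": general_agent,
}

def classify(query: str) -> str:
    """Toy keyword classifier (an assumption)."""
    text = query.lower()
    if any(word in text for word in ("bill", "invoice", "refund")):
        return "billing"
    if any(word in text for word in ("error", "crash")):
        return "technical"
    return "general"

def orchestrator(query: str) -> Tuple[str, List[str]]:
    trace = ["orchestrator"]
    reply = DOMAINS[classify(query)](query, trace)
    return reply, trace

reply, trace = orchestrator("I want a refund for my last bill")
print(trace)  # ['orchestrator', 'invoice_agent', 'refund_agent']
```

The orchestrator only decides the domain; what happens inside the billing subsystem is left to the specialists and their handoff paths.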

A Practical Perspective on When to Use Each Pattern

Agents as tools are most effective when a task requires coordination across multiple capabilities and the results need to be combined into a single response. They are particularly well-suited for multi-intent queries, structured workflows, and scenarios where reliability and consistency are critical.

Handoffs, in contrast, are better suited for interactions that evolve over time and require different types of expertise at different stages. They shine in conversational systems where maintaining a natural flow and delegating control to specialists enhances the user experience.

A useful rule of thumb is to favor agents as tools when the system needs to “consult specialists while staying in control,” and to use handoffs when it makes sense to “transfer control entirely to a specialist and continue from there.”

Conclusion: Architecture Over Intelligence

As AI systems continue to grow in complexity, the focus is gradually shifting from model capability to system design. The difference between a system that works in a demo and one that scales in production often lies not in how intelligent the agents are, but in how they are structured and coordinated.

Agents as tools and handoffs represent two complementary ways of organizing that coordination. Understanding when and how to use each is less about following a rulebook and more about recognizing the nature of the problem being solved. Some problems require tight control and synthesis; others benefit from fluid delegation and specialization.

The most effective systems are not those that choose one pattern over the other, but those that combine them thoughtfully, using each where it fits best.

Agents as Tools vs Handoffs: Understanding the Two Patterns Behind Modern AI Systems was originally published in Towards AI on Medium.