[Day 1/100] What Is Agentic AI? Beyond Chatbots and Copilots

Open ChatGPT and ask it to book you a flight. It will write you a beautifully formatted itinerary, suggest some airlines, and tell you to head over to Expedia.

Now imagine asking the same thing and watching the assistant actually open a browser, search across three sites, compare prices, check your calendar for conflicts, hold the cheapest option, and email you for confirmation before paying.

That second thing is an agent. The first thing is a chatbot.

This series is about how to build the second thing.

Three Words That Get Mixed Up

The terms chatbot, copilot, and agent get used as if they were the same product with different marketing. They are not. They are three different abstractions, and the gap between them is the entire reason this field exists.

Chatbot

A chatbot takes a message and returns a message. That is the contract. From the world's point of view it has no side effects: it cannot do anything other than produce text.

Useful chatbots include customer service bots, FAQ systems, and most direct uses of the OpenAI Playground. They have one input channel (your message) and one output channel (their reply). Whatever happens after that is up to you, the human.

Copilot

A copilot is a chatbot that lives next to a tool you are already using. GitHub Copilot writes code into your editor. Notion AI fills in your document. Microsoft Copilot rewords your email.

The crucial property of a copilot is that the human is still the one driving. The copilot suggests. You accept, reject, or edit. Nothing happens without your hand on the wheel.

Copilots are useful, sometimes magical. But they are still bounded by the human in the loop. If you stop typing, the copilot stops working.

Agent

An agent makes decisions and takes actions toward a goal, on its own, until the goal is met or it gives up trying.

Read that sentence again, because every word matters.

It makes decisions. Not just predictions about the next word, but choices about what to do next. Search the web, or check the database first? Call the function with these arguments, or ask a clarifying question?

It takes actions. The output is not text for a human to read. The output is a function call, an API request, a SQL query, a write to a file, a message to another system. Real effects on real systems.

On its own. The human is not in the inner loop. You set a goal and the agent runs.

Toward a goal. There is something the agent is trying to accomplish, and it can tell when it has accomplished it.

Until the goal is met or it gives up. There is a stopping condition, which means there is a loop, which means the agent reasons about its own progress.

That is what makes a system agentic. Not the model behind it. Not the framework. The behavior.
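One way to make the three contracts concrete is to write them as function signatures. The sketch below is illustrative only: `fake_model`, `chatbot`, `copilot_suggest`, and `run_agent` are hypothetical names, and the "model" is a stub that returns canned text rather than a real LLM call.

```python
from typing import Callable


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call (hypothetical); returns canned text."""
    return f"suggestion for: {prompt}"


def chatbot(message: str) -> str:
    # Chatbot contract: text in, text out, no side effects.
    return fake_model(message)


def copilot_suggest(document: list[str], accept: Callable[[str], bool]) -> list[str]:
    # Copilot contract: propose a change; the human decides whether it lands.
    suggestion = fake_model(document[-1] if document else "")
    return document + [suggestion] if accept(suggestion) else document


def run_agent(
    goal: str,
    act: Callable[[str], str],
    done: Callable[[str], bool],
    max_steps: int = 5,
) -> list[str]:
    # Agent contract: decide, act, observe, repeat until done or out of steps.
    observations: list[str] = []
    for _ in range(max_steps):
        action = fake_model(goal)      # decide what to do next
        observation = act(action)      # take the action in the world
        observations.append(observation)
        if done(observation):          # check the stopping condition
            break
    return observations
```

The only structural difference between the three is who closes the loop: nobody for the chatbot, the human (`accept`) for the copilot, and the program itself (`done`) for the agent.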

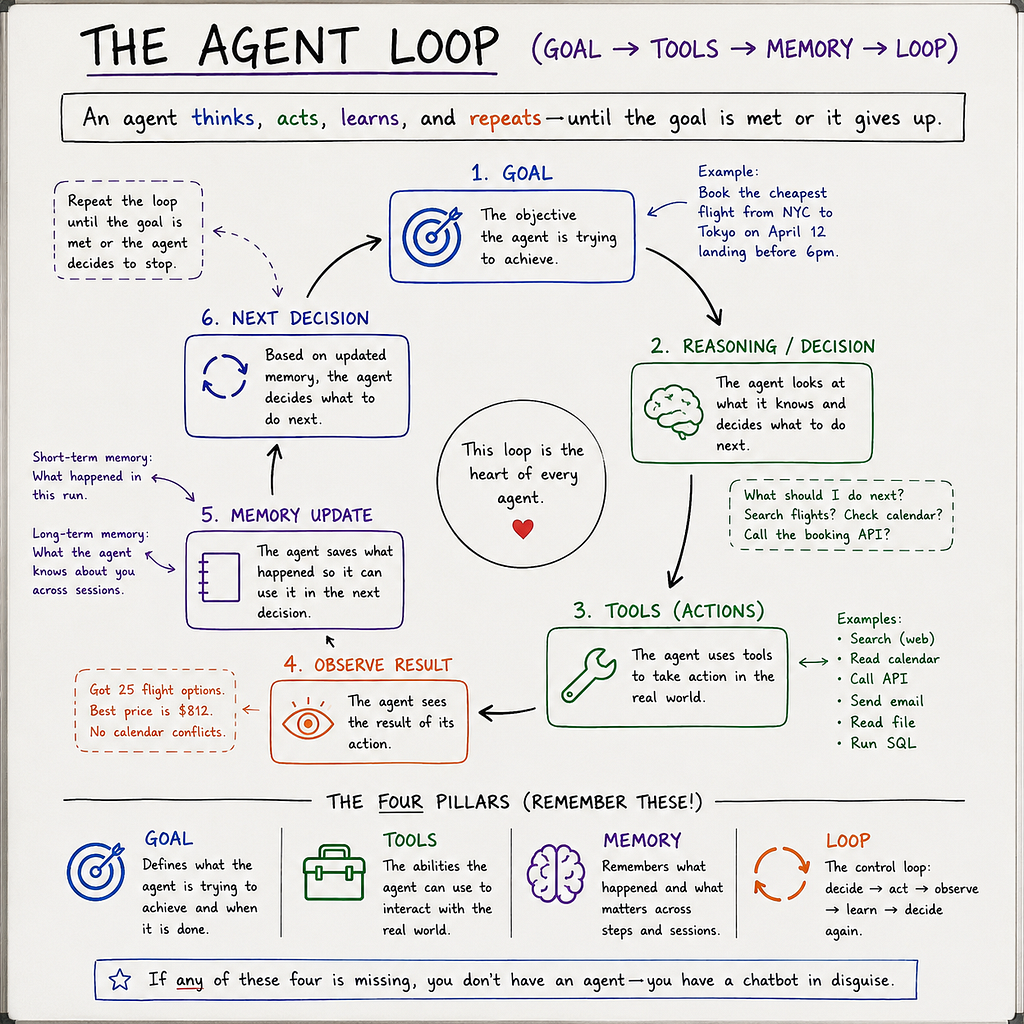

The Four Properties of an Agent

Every agent worth the name has four moving parts. If any of these is missing, you have a chatbot wearing a costume.

1. A goal. Something the agent is trying to do. "Book me the cheapest flight from New York to Tokyo on April 12 that lands before 6pm." Without a goal there is nothing to plan toward and no way to know when to stop.

2. Tools. Concrete things the agent can do in the world. Search the web. Run SQL. Send an email. Call the Stripe API. Read a file. Tools are how the agent’s reasoning turns into real-world effects.

3. Memory. State that survives across steps. Short-term memory holds what just happened in this run. Long-term memory holds what the agent learned about you across many runs. Without memory, the agent loses track of itself the moment it tries anything complex.

4. A control loop. The thing that asks "given what I know now, what should I do next?", then does it, then asks again. This loop is where the magic and the chaos both live. Build it well and the agent gets things done. Build it badly and you get a token-burning machine that runs forever and accomplishes nothing.

Hold these four words in your head for the rest of the series: goal, tools, memory, loop. Almost every problem in agentic AI is a problem with one of those four.
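To see how the four parts fit together, here is a minimal sketch with no LLM at all. `MiniAgent` and its `decide` method are hypothetical: in a real agent, `decide` would be a model call, while here it is a hard-coded rule so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class MiniAgent:
    goal: str                                          # 1. the goal
    tools: dict[str, Callable[[str], str]]             # 2. the tools
    memory: list[str] = field(default_factory=list)    # 3. the memory
    max_steps: int = 5                                 # hard cap on the loop

    def decide(self) -> Optional[tuple[str, str]]:
        """Hypothetical policy; a real agent would ask an LLM here."""
        if any(entry.startswith("lookup:") for entry in self.memory):
            return None                  # a lookup result exists: goal met
        return ("lookup", self.goal)     # otherwise, look it up

    def run(self) -> list[str]:
        # 4. the control loop: decide, act, remember, repeat
        for _ in range(self.max_steps):
            decision = self.decide()
            if decision is None:
                break
            tool_name, argument = decision
            result = self.tools[tool_name](argument)
            self.memory.append(f"{tool_name}: {result}")
        return self.memory


agent = MiniAgent(
    goal="weather in Paris",
    tools={"lookup": lambda query: f"sunny ({query})"},
)
print(agent.run())
```

Swapping the rule in `decide` for a model call is the main step that separates this toy from a working agent.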

Why This Is Suddenly Possible

People have been trying to build software agents for thirty years. Why did it work in the last two?

The short answer is that large language models gave us, for the first time, a general-purpose reasoning component that can read instructions in plain English, decide what to do, and emit a structured call to a tool. That last part, structured tool calling, is the unlock. Before tool calling, getting a model to produce a reliable JSON object describing the next action was a constant battle of prompt engineering and regex. Now it is a built-in feature of most major model APIs.
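To make "structured call" concrete: the model emits a tool name plus a JSON string of arguments, and your code checks that against a schema before acting. The sketch below assumes the same schema shape as the weather example later in this post. `parse_tool_call` is a hypothetical helper, not part of any SDK, and it checks only the tool name and required keys.

```python
import json

# Schema in the same shape as the "function" entry in the weather example.
GET_WEATHER_SCHEMA = {
    "name": "get_weather",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}


def parse_tool_call(name: str, raw_arguments: str, schema: dict) -> dict:
    """Hypothetical helper: check a model-emitted call against its schema."""
    if name != schema["name"]:
        raise ValueError(f"unknown tool: {name}")
    args = json.loads(raw_arguments)  # arguments arrive as a JSON string
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    missing = [key for key in schema["parameters"]["required"] if key not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return args


print(parse_tool_call("get_weather", '{"city": "Paris"}', GET_WEATHER_SCHEMA))
```

A production system would likely use a full JSON Schema validator instead of this hand-rolled check.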

The second unlock is that frontier models can hold enough context to reason about multi-step plans. A model with a 200,000-token context window can keep a small codebase, a project history, and a long conversation in view at once. Plans that used to require complex external state can now sit inside the model’s prompt.

These two changes, reliable tool calling and large context, are what turned agentic AI from a research idea into a thing you can put in production this quarter.

A Tiny Example

Here is the smallest piece of code that illustrates the difference.

A chatbot call:

    from openai import OpenAI

    client = OpenAI()

    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": "What is the weather in Paris?"}],
    )

    print(response.choices[0].message.content)

The model returns text. Maybe it makes up the weather. Maybe it tells you it does not know. Either way, no real-world weather data was ever consulted.

An agentic loop:

    from openai import OpenAI
    import json
    import requests

    client = OpenAI()

    def get_weather(city: str) -> str:
        # Fetch a short plain-text weather summary from wttr.in
        r = requests.get(f"https://wttr.in/{city}?format=3", timeout=10)
        return r.text

    # Describe the tool so the model knows when and how to call it
    tools = [{
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get current weather for a city",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"]
            }
        }
    }]

    messages = [{"role": "user", "content": "What is the weather in Paris?"}]

    while True:
        response = client.chat.completions.create(
            model="gpt-4o", messages=messages, tools=tools
        )
        msg = response.choices[0].message
        messages.append(msg)  # keep the assistant turn in the history

        # No tool calls means the model has produced its final answer
        if not msg.tool_calls:
            print(msg.content)
            break

        # Run each requested call and feed the result back to the model
        for call in msg.tool_calls:
            args = json.loads(call.function.arguments)
            result = get_weather(args["city"])
            messages.append({
                "role": "tool",
                "tool_call_id": call.id,
                "content": result,
            })

Roughly forty lines, but everything is here.

There is a goal (answer the question). There is a tool (get_weather). There is memory (the messages list). There is a loop (the while True that runs until the model decides it has the answer).

This is an agent. A small one, with one tool and a trivial goal, but the same skeleton scales to a system that books flights, reviews code, or triages support tickets. Every project in this series will live inside that pattern.
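One thing has to change as soon as there is a second tool: the loop above calls get_weather directly for every tool call, regardless of which function the model asked for. A common approach is a registry that maps tool names to functions. The sketch below is an assumption about how that might look, not the series' final design. `get_time`, `TOOL_REGISTRY`, and `dispatch` are hypothetical names, and both tools are stubs so the example runs without network access.

```python
import json
from typing import Callable


def get_weather(city: str) -> str:
    return f"{city}: sunny"      # stub; the real version calls wttr.in


def get_time(city: str) -> str:
    return f"{city}: 12:00"      # hypothetical second tool, also a stub


# Map the tool names the model sees to the functions that implement them.
TOOL_REGISTRY: dict[str, Callable[..., str]] = {
    "get_weather": get_weather,
    "get_time": get_time,
}


def dispatch(name: str, raw_arguments: str) -> str:
    """Route a tool call to the registered function by name."""
    tool = TOOL_REGISTRY.get(name)
    if tool is None:
        # Return the problem as text so the model can see it and recover.
        return f"error: unknown tool {name!r}"
    return tool(**json.loads(raw_arguments))


print(dispatch("get_time", '{"city": "Tokyo"}'))
```

Inside the loop, the hard-coded call would become `result = dispatch(call.function.name, call.function.arguments)`.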

What Agentic AI Is Not

A few things to clear out before we go further.

It is not just “putting an LLM in a loop.” A loop with no tools, no real goal, and no stopping condition is a token bonfire, not an agent.

It is not autonomous in the science-fiction sense. Today’s agents do exactly what their tools allow them to do, no more. They cannot acquire new capabilities at runtime unless you give them a tool that does that.

It is not a magic productivity multiplier. Building a real agent is harder than building a chatbot, not easier. The reward is that the agent can do things the chatbot cannot.

And it is not always the right answer. If a workflow is well understood, deterministic, and high-volume, write a script. Agents earn their cost when the path is uncertain, the inputs vary, and the cost of a wrong step is something the agent can recover from.
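The point about stopping conditions is easy to enforce in code. A minimal sketch, assuming a hypothetical `next_step` callable that stands in for "call the model, run any tools, and return a final answer or None":

```python
from typing import Callable, Optional


def run_with_budget(
    next_step: Callable[[int], Optional[str]],
    max_steps: int = 8,
) -> str:
    """Run the loop until next_step returns an answer or the budget runs out."""
    for step in range(max_steps):
        answer = next_step(step)
        if answer is not None:
            return answer
    # Giving up explicitly beats burning tokens forever.
    return f"stopped after {max_steps} steps without an answer"


# A fake step function that "finds" the answer on its third try.
print(run_with_budget(lambda step: "done" if step == 2 else None))
```

The value of `max_steps` is arbitrary here. The right budget depends on the task and on what each step costs.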

What You Will Build in This Series

Across the next 99 posts, you will go from this small weather agent to systems with real memory that survives across sessions, retrieval pipelines that ground answers in your own documents, multi-agent teams that hand work off between roles, agents running on local models on your own laptop, production observability with Langfuse, and evaluation harnesses you can put in CI.

The final ten days are capstone projects. You will ship a customer support agent, a SQL analytics agent, a code review bot that posts on GitHub PRs, a voice assistant running fully offline, and six others. Each one is built end to end with tests and traces. By Day 100 you will have a portfolio of agents, not a folder full of notebooks.

Your Homework for Day 1

Run the code above. Install the openai and requests packages, get an OpenAI API key and set it in the OPENAI_API_KEY environment variable, paste in the agentic loop example, and watch the model decide to call the weather function on its own. The first time you see that tool call appear in the response, something clicks that no blog post can explain in words.

Then change the question. Ask "What is the weather in Tokyo and London?" and watch how the model handles two cities. Ask something the tool cannot answer and watch what happens. Break it on purpose. Pay attention to what feels solid and what feels brittle.

That brittleness, the gap between "it works on the happy path" and "it works in production", is what the next 99 days are about.
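One brittle spot you are likely to hit while breaking things: if a tool raises an exception, or the model sends malformed arguments, the whole loop crashes. A possible mitigation, sketched here with a hypothetical `safe_tool_call` wrapper, is to turn failures into text and hand that back to the model as the tool result so it can retry or explain.

```python
import json
from typing import Callable


def safe_tool_call(tool: Callable[..., str], raw_arguments: str) -> str:
    """Run a tool and turn failures into text the model can read."""
    try:
        args = json.loads(raw_arguments)
        return tool(**args)
    except json.JSONDecodeError:
        return "error: arguments were not valid JSON"
    except TypeError as exc:
        # Raised when argument names do not match the tool's signature.
        return f"error: bad arguments ({exc})"
    except Exception as exc:
        # Network errors, timeouts, and anything else the tool raises.
        return f"error: tool failed ({exc})"


print(safe_tool_call(lambda city: f"{city}: sunny", '{"city": "Paris"}'))
print(safe_tool_call(lambda city: f"{city}: sunny", "not json"))
```

Whether the model recovers sensibly from these error strings is worth testing for yourself.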

See you tomorrow for [Day 2/100] The Anatomy of an Agent: Perception, Reasoning, Action, Memory.

This is Day 1 of the 100 Days of Learning Agentic AI series. See the 100 Days of Learning Agentic AI: Full Topic Roadmap for everything we will cover. Follow along to build production-grade agents from scratch with LangChain, LangGraph, Langfuse, RAG, local models, and ten end-to-end capstone projects.

[Day 1/100] What Is Agentic AI? Beyond Chatbots and Copilots was originally published in Towards AI on Medium.
