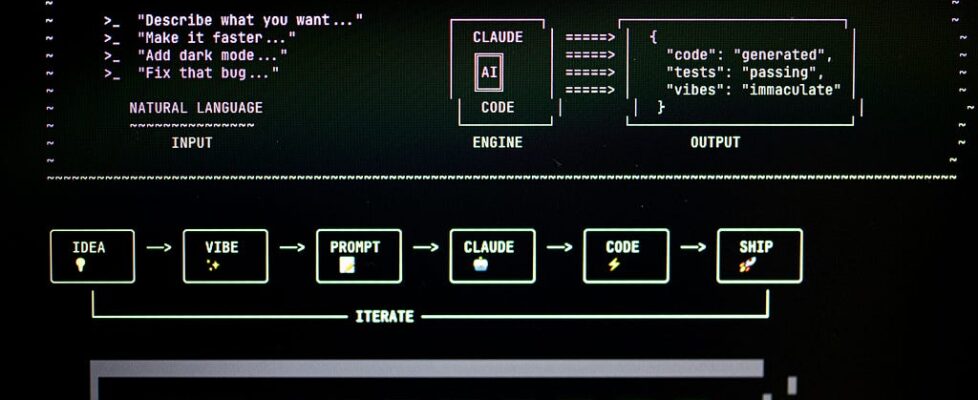

Vibe-Coding Works. That’s Exactly Why It Will Destroy Your Codebase at Scale.

The productivity gains are real. The compound debt is realer. And almost no team is measuring the right thing.

Let me say the quiet part out loud: vibe-coding is not the villain in this story. The critics who call it “reckless” are wrong. The evangelists who call it “the future of engineering” are also wrong. And if your team is using it at scale without a framework, you are quietly building a time bomb inside your own codebase.

I’ve watched this pattern play out across multiple production systems. The first 90 days are miraculous. Features ship in hours. Backlogs shrink. Stakeholders are ecstatic. Then, somewhere around the 4-month mark, something subtle changes. PRs get harder to review. Bugs surface in places nobody thought to check. Onboarding new engineers takes longer. And the team can’t quite explain why.

Nobody tracks the inflection point. That’s the whole problem.

“The AI didn’t make your codebase worse. It made your bad habits move at the speed of light.”

The consensus is measuring the wrong metric

When teams evaluate vibe-coding at scale, they measure output velocity — lines shipped, tickets closed, deployment frequency. These numbers go up. Sometimes dramatically. So the conclusion seems obvious: AI-assisted coding works.

But output velocity is a leading indicator. The lagging indicators — the ones that actually matter for a codebase that needs to survive past your next sprint cycle — are mostly invisible until they aren’t. Coupling density. Contextual coherence across modules. The ratio of code that can be safely changed by an engineer who didn’t write it. These don’t show up in your Jira dashboard.

Vibe-coding is extraordinarily good at generating locally correct code. A function that does what its prompt described. A class that passes its tests. An endpoint that returns the right shape. What it’s structurally blind to — without deliberate scaffolding — is global coherence. And global coherence is exactly what decides whether your codebase scales or suffocates.

The 4 hidden costs nobody’s budgeting for

These aren’t theoretical. They’re what I see in every team that’s been vibe-coding at scale for more than a quarter without a deliberate system around it.

Cost 01 — The coherence gap

AI generates code that fits the prompt, not the system. Over hundreds of PRs, modules develop subtly incompatible assumptions. Nobody catches it because each PR looks fine in isolation. The architecture degrades not through big, obvious mistakes but through the slow accumulation of locally correct, globally incompatible decisions.
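To make this concrete, here is a hypothetical illustration in Python. The functions, the prompts quoted in the comments, and the seconds-versus-milliseconds mismatch are all invented for the example. Each module does what its prompt asked and passes its own unit test. The incompatibility only appears when the two are composed.

```python
def session_expiry_ms(now_ms: int, ttl_minutes: int) -> int:
    # Hypothetical module A, prompted for "expiry as a Unix timestamp in milliseconds".
    return now_ms + ttl_minutes * 60 * 1000


def is_expired(expiry_s: int, now_s: int) -> bool:
    # Hypothetical module B, prompted for "compare Unix timestamps in seconds".
    return now_s >= expiry_s


# Each module passes its own unit test in isolation.
assert session_expiry_ms(now_ms=0, ttl_minutes=1) == 60_000
assert is_expired(expiry_s=100, now_s=101)

# Composed, the unit mismatch surfaces: a 30-minute session checked one hour
# later is still treated as valid, because milliseconds are compared with seconds.
now_ms = 1_700_000_000_000
expiry = session_expiry_ms(now_ms, ttl_minutes=30)
one_hour_later_s = now_ms // 1000 + 3600
print(is_expired(expiry, one_hour_later_s))  # False, although the session has expired
```

Neither PR would look wrong in review. The bug lives in the assumption the two modules never shared.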

Cost 02 — The ownership void

Engineers stop building mental models of their code. When a bug surfaces in AI-written logic, the team debugs blind. Nobody truly “owns” what they didn’t think through. This isn’t a character flaw — it’s a structural consequence of a workflow that optimizes generation over comprehension.

Cost 03 — The test confidence trap

AI writes tests optimistically: it tests what the code does, not what it should do. Green CI becomes a false floor. Production failures start happening in the gap between “tests pass” and “system works.” The more AI-generated your test suite, the less a green build actually tells you.
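The sketch below shows the difference. It uses a hypothetical `apply_discount` function and an assumed business rule that a price can never go negative. The first check restates current behavior and stays green. The second encodes intent and exposes a bug the first cannot see.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    # Hypothetical generated code: correct for the example in its prompt,
    # but it never validates `percent`.
    return price_cents - price_cents * percent // 100


def optimistic_test() -> bool:
    # Restates what the code already does: green, but it protects very little.
    return apply_discount(1000, 10) == 900


def specification_test() -> bool:
    # Encodes an assumed rule ("prices never go negative") and probes an
    # input the original prompt never mentioned.
    return apply_discount(1000, 150) >= 0


print(optimistic_test())     # True
print(specification_test())  # False, because 1000 - 1500 = -500
```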

Cost 04 — The context window debt

Every future AI-assisted change now requires feeding the model more context to compensate for the incoherence introduced by previous AI-assisted changes. Debt compounds automatically. The very tool you’re using to pay down technical debt is silently issuing new debt instruments faster than you can retire the old ones — unless you architect for it deliberately.

Here’s what the critics get wrong

The anti-vibe-coding camp makes a seductive argument: the AI doesn’t understand your system, therefore it cannot write code that belongs in your system. This sounds rigorous. It is also completely beside the point.

No tool understands your system. Junior engineers don’t understand your system on day one. Contractors don’t understand your system. Copy-pasted Stack Overflow answers definitely don’t understand your system. We have always used code-generation mechanisms that produce locally correct, globally risky output — and we’ve always managed that risk through process: code review, architecture docs, team conventions, onboarding.

“The failure mode isn’t that AI writes bad code. The failure mode is that teams stop doing the process work that made previous bad code safe to ship.”

When vibe-coding goes wrong at scale, the AI isn’t the reason. The reason is that the team scaled their output without scaling their quality infrastructure. They optimized the generation step without investing in the integration step. And because the AI made generation so effortless, the integration step started to feel like bureaucratic overhead, until skipping it stopped being an option.

The inflection point nobody is tracking

In my experience, there’s a reliable pattern: teams hit an invisible inflection point around the 90-day mark. Before it, vibe-coding feels like a superpower. After it, the team is spending an increasing proportion of its time managing the artifacts of its own productivity.

The marker isn’t a metric. It’s a feeling. Engineers start saying things like “I’m not sure how this connects to the rest of the system” in PR reviews. Architectural discussions get longer and more circular. Postmortems trace bugs back not to a bad line of code but to a wrong assumption that was encoded in 30 files simultaneously.

At that point, you haven’t hit a vibe-coding problem. You’ve hit a systems thinking deficit — and the AI accelerated your arrival at a destination you were already heading toward.

What actually works at scale (and why it’s not what you think)

The answer is not to vibe-code less. The answer is to build the infrastructure that makes vibe-coding safe to do at velocity. Here is the framework I’ve seen work in practice:

1. Architect before you prompt.

Every AI-generated module should begin with a human-written architectural decision record — not a full spec, just a paragraph: what this does, what it doesn’t do, and what global assumptions it’s allowed to make. This becomes the ground truth the AI generates against. Without it, the AI invents assumptions and you pay for them later.
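One way to keep that paragraph enforceable is to store it as structured data and prepend it to every generation request for the module. This is a sketch built on assumptions. The `DecisionRecord` fields and the prompt wording are hypothetical, not a standard ADR format.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    # Hypothetical shape for the one-paragraph record: what the module does,
    # what it doesn't do, and which global assumptions it may make.
    module: str
    does: str
    does_not: str
    allowed_assumptions: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        # A record only works as ground truth if every part is filled in.
        missing = [name for name in ("module", "does", "does_not")
                   if not getattr(self, name).strip()]
        if not self.allowed_assumptions:
            missing.append("allowed_assumptions")
        return missing

    def as_prompt_preamble(self) -> str:
        # Refuse to build a prompt from an incomplete record.
        if self.missing_fields():
            raise ValueError(f"incomplete record: {self.missing_fields()}")
        assumptions = "\n".join(f"- {a}" for a in self.allowed_assumptions)
        return (
            f"Module: {self.module}\n"
            f"Responsibility: {self.does}\n"
            f"Out of scope: {self.does_not}\n"
            f"You may assume only:\n{assumptions}\n"
        )


adr = DecisionRecord(
    module="billing/invoices",
    does="Builds invoice line items from confirmed orders.",
    does_not="Does not charge cards or send email.",
    allowed_assumptions=["All amounts are integer cents.", "Timestamps are UTC seconds."],
)
print(adr.as_prompt_preamble())
```

The important design choice is raising on an incomplete record. Skipping the human-written step then becomes a visible error instead of a silent default.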

2. Review for coherence, not correctness.

Most teams review AI-generated PRs the same way they review human-written PRs: does this code do what it claims? The more important question at scale is: does this code belong in this system the way we want this system to evolve? That’s a different review entirely, and almost no team has built the habit.
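Coherence review is mostly human judgment, but part of it can be made mechanical. The sketch below assumes a hypothetical three-layer policy (`domain`, `application`, `infrastructure`). It parses a generated file with Python's standard `ast` module and flags imports that cross layers in a direction the team has ruled out.

```python
import ast

# Hypothetical layering policy: which of our own top-level packages each layer may import.
ALLOWED_IMPORTS = {
    "domain": {"domain"},
    "application": {"domain", "application"},
    "infrastructure": {"domain", "application", "infrastructure"},
}


def imported_packages(source: str) -> set[str]:
    # Collect the top-level package of every absolute import in the source.
    packages = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            packages.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            packages.add(node.module.split(".")[0])
    return packages


def layering_violations(layer: str, source: str) -> set[str]:
    # Only our own layers are checked; stdlib and third-party imports pass.
    known_layers = set(ALLOWED_IMPORTS)
    return (imported_packages(source) & known_layers) - ALLOWED_IMPORTS[layer]


generated = """
from domain.orders import Order
from infrastructure.db import session
import json
"""
print(layering_violations("domain", generated))  # {'infrastructure'}
```

A check like this only catches violations someone has already written down. Whether the code fits where the system is heading still needs a reviewer.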

3. Rotate ownership explicitly.

When a module wasn’t written by hand, assign an engineer a scheduled “understanding session”: read it, trace the dependency graph, and add comments that explain the why. This is not about distrust. It’s about preventing the ownership void that turns a future bug into a week-long archaeology expedition.
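Part of an understanding session can be scripted. The sketch below assumes the module import graph has already been extracted into a plain dictionary, and the module names are hypothetical. A breadth-first walk lists everything a module depends on. A reverse lookup lists which modules depend on it directly.

```python
from collections import deque

# Hypothetical import graph: module -> modules it imports directly.
IMPORTS = {
    "billing.invoices": ["billing.tax", "shared.money"],
    "billing.tax": ["shared.money", "shared.regions"],
    "shared.money": [],
    "shared.regions": [],
    "reports.monthly": ["billing.invoices"],
}


def transitive_dependencies(graph: dict[str, list[str]], start: str) -> list[str]:
    # Breadth-first walk: everything `start` depends on, nearest first.
    seen, order, queue = {start}, [], deque([start])
    while queue:
        for dep in graph.get(queue.popleft(), []):
            if dep not in seen:
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order


def dependents(graph: dict[str, list[str]], target: str) -> list[str]:
    # Direct dependents: the modules that import `target`.
    return sorted(m for m, deps in graph.items() if target in deps)


print(transitive_dependencies(IMPORTS, "billing.invoices"))
# ['billing.tax', 'shared.money', 'shared.regions']
print(dependents(IMPORTS, "billing.invoices"))  # ['reports.monthly']
```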

4. Test the boundary, not the happy path.

Mandate that every AI-generated test suite gets reviewed for what it doesn’t test. AI writes optimistically. Your job is to be a pessimist: what happens when the upstream service returns a 200 with an empty body? What happens with a 2 GB payload? AI will not volunteer these scenarios. Someone has to own the adversarial imagination.
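Here is a sketch of what pessimistic tests can look like. It assumes a hypothetical `parse_upstream` client function that receives the status code and raw body. The size limit is a parameter, so the oversized-payload case can be exercised with a few bytes instead of actually allocating 2 GB.

```python
import json

MAX_BODY_BYTES = 2 * 1024**3  # assumed default limit (2 GiB)


class UpstreamError(Exception):
    pass


def parse_upstream(status: int, body: bytes, max_bytes: int = MAX_BODY_BYTES) -> dict:
    # Hypothetical parser for an upstream JSON response.
    if status != 200:
        raise UpstreamError(f"unexpected status {status}")
    if len(body) > max_bytes:
        raise UpstreamError("payload exceeds limit")
    if not body.strip():
        # The boundary case: a success status with nothing to parse.
        raise UpstreamError("200 with empty body")
    try:
        data = json.loads(body)
    except json.JSONDecodeError as exc:
        raise UpstreamError("malformed JSON") from exc
    if not isinstance(data, dict):
        raise UpstreamError("expected a JSON object")
    return data


def raises(fn, *args, **kwargs) -> bool:
    # Small helper so each boundary check below fits on one line.
    try:
        fn(*args, **kwargs)
    except UpstreamError:
        return True
    return False


# Happy path first, then the pessimist's list.
assert parse_upstream(200, b'{"ok": true}') == {"ok": True}
assert raises(parse_upstream, 200, b"")                        # 200, empty body
assert raises(parse_upstream, 200, b"   ")                     # 200, whitespace only
assert raises(parse_upstream, 200, b"[1, 2]")                  # valid JSON, wrong shape
assert raises(parse_upstream, 200, b"x" * 11, max_bytes=10)    # small limit stands in for 2 GB
```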

5. Track the lagging indicators.

Instrument what matters: time-to-understand for new engineers on existing modules, mean time to debug production incidents that touch AI-generated code, coupling metrics across AI-generated vs. human-written boundaries. You cannot manage what you don’t measure — and right now, most teams are only measuring velocity.
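As one example of a coupling metric, the sketch below computes the share of dependency edges that cross between AI-generated and human-written modules. It assumes each module has already been labeled by origin. The edge list and labels are invented, and how a team records provenance is left open.

```python
# Hypothetical inputs: an import edge list and an origin label per module.
EDGES = [
    ("api.routes", "billing.invoices"),
    ("billing.invoices", "billing.tax"),
    ("billing.tax", "shared.money"),
    ("reports.monthly", "billing.invoices"),
    ("reports.monthly", "shared.money"),
]
ORIGIN = {
    "api.routes": "human",
    "billing.invoices": "ai",
    "billing.tax": "ai",
    "shared.money": "human",
    "reports.monthly": "ai",
}


def cross_boundary_ratio(edges: list[tuple[str, str]], origin: dict[str, str]) -> float:
    # Fraction of dependency edges whose two ends have different origin labels.
    if not edges:
        return 0.0
    crossing = sum(1 for src, dst in edges if origin[src] != origin[dst])
    return crossing / len(edges)


print(cross_boundary_ratio(EDGES, ORIGIN))  # 0.6 (3 of 5 edges cross)
```

A single value means little on its own. Any signal is in the trend across releases.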

The real contrarian take

Here it is: the engineers who will thrive in the next five years are not the ones who use AI the most aggressively. They are the ones who understand when AI-generated code becomes a liability and how to defer that moment as long as possible.

Senior engineers have always done this. We’ve always taken code that worked locally and asked: does this belong here? Does this fit our operational model? Can someone wake up at 3am and understand this? Vibe-coding doesn’t change those questions. It makes them more urgent and more frequent.

The teams that treat vibe-coding as a pure velocity tool will discover its costs on a 6-month delay with compounded interest. The teams that treat it as a powerful new generation mechanism that requires a proportionally upgraded integration discipline will build the fastest, most coherent codebases the industry has ever seen.

The productivity gains are real. They are also a trap — if you only measure the gains and not the price. Vibe-coding works. That’s exactly why it deserves to be taken seriously enough to build a real system around it.

The engineers who will get this right aren’t the loudest advocates or the loudest skeptics. They’re the ones quietly asking: what does this code cost us in six months?

If this changed how you think about AI at scale — share it with your engineering team. The conversation your team hasn’t had yet is the one this article is trying to start.

Tags: AI Engineering, Software Architecture, Vibe Coding, Technical Debt, Engineering Leadership, LLM Tools, Production Systems, Code Quality, Senior Engineers, Developer Productivity

Vibe-Coding Works. That’s Exactly Why It Will Destroy Your Codebase at Scale. was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.