The Other 99%

Calling an LLM is 1% of the job. The remaining 99% is making sure it doesn’t go off the rails.

This week, I sat down with Claude Code and Sonnet 4.6 to build a personal knowledge wiki. The spec was detailed: parse 316 exported conversations, cluster them into topics using an LLM, compile each topic into a structured wiki page, and stand up an MCP server so future sessions start with full context. Maybe 10 Python files, some prompt engineering, and a local server. A weekend project.

The first compilation run looked like magic. Structured pages appeared with specific names, dates, decision rationale, and open questions. The linter passed. The MCP server responded to queries. I felt the rush that everyone describes: this is 10x.

Then real-world contact happened.

The Model Gets Worse When You Give It More Room

The first surprise had nothing to do with bugs or API limits. It was a finding about model behavior that I haven’t seen documented anywhere.

When compiling a governance architecture topic into a handbook-style page, I set max_tokens to 4096. The output was nearly perfect: clean section structure, second-person prescriptive voice throughout, a Mermaid state diagram, and concrete failure modes. It clipped mid-sentence on the very last bullet point.

The obvious fix: increase the limit. I set max_tokens to 8192.

The output was garbage.

Same model, same system prompt, same source material. With more room, the model abandoned the section structure entirely, transcribed the source material verbatim rather than synthesizing it, and produced a page that read as if someone had pasted raw chat logs into a document. It wasn’t a truncation issue or a formatting glitch. The model’s behavior changed qualitatively as the output window widened.

The working hypothesis is that, at 4096 tokens, the model is forced to be economical. It has to decide what matters, compress, and synthesize. At 8192, it has enough room to be lazy. It can reproduce instead of reason. The constraint was doing cognitive work that the prompt couldn’t.
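One way to keep the tighter limit without publishing a clipped final bullet is to detect the truncation and drop the incomplete trailing line. The sketch below is not the code from this session. It assumes the API response reports a stop reason of "max_tokens" when the cap is hit (the Anthropic Messages API does this), and the function name drop_clipped_tail is hypothetical.

```python
def drop_clipped_tail(text: str, stop_reason: str) -> str:
    """Remove a trailing line that was cut off by the token limit.

    Assumption: stop_reason equals "max_tokens" when the model hit the cap.
    """
    if stop_reason != "max_tokens":
        return text  # the model finished on its own; leave the output untouched
    lines = text.rstrip().split("\n")
    last = lines[-1].rstrip()
    # Treat the last line as clipped unless it ends like a finished sentence.
    if last and not last.endswith((".", "!", "?", ":", "```")):
        lines = lines[:-1]
    return "\n".join(lines).rstrip() + "\n"


page = "## Failure modes\n- Retries mask errors.\n- The cache can go sta"
print(drop_clipped_tail(page, "max_tokens"))
```

This trades one lost bullet for keeping the economy that the smaller window seemed to enforce. Whether that trade is acceptable depends on the page.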

This was the beginning of a pattern. Over the course of the session, I discovered a cascade of model behaviors that required specific, non-obvious workarounds:

The model wrapped entire page bodies in markdown fences, which the downstream rendering pipeline interpreted as code blocks. Five pages resisted correct section structure across three or more recompile attempts despite explicit section headers in the system prompt. When I passed raw conversation transcripts, the model in handbook mode copied them instead of synthesizing them. Filtering the input to assistant-only turns fixed it, but nothing in the prompt or the documentation suggested that the input format would change the output behavior that drastically.
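The assistant-only filter is simple to sketch. This is a minimal version, assuming each exported message is a dict with "role" and "content" keys; the real export schema may differ, and the function name assistant_only is hypothetical.

```python
from typing import Dict, List


def assistant_only(messages: List[Dict[str, str]]) -> str:
    """Join only the assistant turns of an exported conversation.

    Assumption: each message has "role" and "content" keys.
    """
    turns = [
        m["content"].strip()
        for m in messages
        if m.get("role") == "assistant"
    ]
    # Skip turns that are empty after stripping whitespace.
    return "\n\n".join(t for t in turns if t)


conversation = [
    {"role": "user", "content": "How should we version the schema?"},
    {"role": "assistant", "content": "Use additive changes only."},
    {"role": "user", "content": "ok"},
    {"role": "assistant", "content": " Deprecate fields before removal. "},
]
print(assistant_only(conversation))
```

The filter removes the back-and-forth shape of the transcript, which may be what nudged the model toward copying in the first place. That explanation is a guess, not something the session verified.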

Each of these was individually solvable. A regex here, an input filter there, a retry with a different prompt. But the solutions were not predictable from the problem descriptions, and each one required a hypothesis-test-debug cycle that consumed 15 to 30 minutes of focused attention.

The Preamble Arms Race

One failure mode deserves its own section because it illustrates the adversarial dynamic that develops between a developer and a probabilistic system.

The compiler’s job was to produce clean markdown. The model’s job was to produce clean markdown. But the model kept prepending artifacts: “Here is the compiled wiki page:”, “Okay, let me search our conversation history…”, “Key additions to note:”. Each preamble was different. Each one broke the downstream parser in a different way.

The first fix was simple: strip the first line if it matches a known artifact pattern. That worked for a week’s worth of outputs.

Then the model started producing preambles that didn’t match the pattern. So I expanded the regex. The model started wrapping the entire output in markdown fences. So I added a fence stripper. The fence stripper was too aggressive: when the model produced no headings at all, the stripper removed everything, leaving a blank page.

The final solution was surgical: strip only the first non-empty line, only if it matches a specific artifact signature, and only if there’s actual content below it. Three lines of regex logic to handle a problem that shouldn’t exist.
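The three conditions above translate into a short function. The sketch below is a reconstruction under assumptions, not the session's actual code: the PREAMBLE pattern is a hypothetical artifact signature built from the examples quoted earlier, and unwrap_fence is an equally hypothetical conservative fence stripper that refuses to touch a page unless one fence encloses the whole body.

```python
import re

# Hypothetical artifact signature: a short chatty opener that ends in a
# colon or an ellipsis, such as "Here is the compiled wiki page:".
PREAMBLE = re.compile(
    r"^(here is|here's|okay|ok|sure|certainly|key additions)\b.*(:|\.\.\.|…)\s*$",
    re.IGNORECASE,
)
# A page whose entire body sits inside a single fence.
FENCED = re.compile(r"\A\s*```[\w-]*\n(.*?)\n```\s*\Z", re.DOTALL)


def strip_preamble(text: str) -> str:
    """Drop the first non-empty line only if it looks like a preamble
    and real content follows it."""
    lines = text.split("\n")
    for i, line in enumerate(lines):
        if not line.strip():
            continue
        rest = lines[i + 1:]
        if PREAMBLE.match(line.strip()) and any(l.strip() for l in rest):
            return "\n".join(rest).lstrip("\n")
        return text  # the first non-empty line is real content
    return text  # empty input


def unwrap_fence(text: str) -> str:
    """Unwrap a page only when one fence encloses the entire body."""
    m = FENCED.match(text)
    # Refuse if the body is empty or contains fences of its own.
    if m and m.group(1).strip() and "```" not in m.group(1):
        return m.group(1) + "\n"
    return text


raw = "Here is the compiled wiki page:\n\n# Governance\n\nYou own the rollout."
print(strip_preamble(raw))
```

Both functions return the input unchanged whenever a condition fails, so the worst case is an unstripped preamble rather than a blank page. The fence check is deliberately strict: a wrapped page that also contains an inner Mermaid fence is left alone in this sketch.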

This is a microcosm of the entire session. The model doesn’t fail in consistent, predictable ways. It fails in novel ways each time, which means the developer can’t build a mental model of the failure space. Every fix is bespoke. Every fix might break the next run.

25 Inputs, and What They Were For

Over a multi-hour session that produced 51 wiki pages, an MCP server, a Confluence integration pipeline, and a handbook publishing system, I gave roughly 25 inputs. That sounds efficient. It sounds like 10x.

But the distribution tells a different story.

About 40% were steering: the initial spec, “let’s do Confluence ingestion next,” “compile and publish the GraphQL page.” These are the inputs that move the project forward.

About 35% were error recovery: “again?” after a 500 error, “so what now?” after a rate limit, “fix those” after the MCP served bad descriptions. These are the inputs that recover from failures that the system couldn’t handle on its own.

About 25% were quality correction: “none of the handbook outputs are good now,” “source blocks are not showing up well, they should be actual code blocks,” “duplicating rubrics and schemas makes the doc too long, use expandos.” These are the inputs that catch problems the system can’t see.

Less than half of my inputs went to the work I actually wanted to do. More than half went to supervising a system that fails in ways that require human judgment to detect and human intervention to fix.
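Turning those shares into rough counts makes the split concrete. The percentages are the ones stated above; the total of 25 is approximate, so the counts are too.

```python
# Shares as stated in the text; the total of 25 inputs is approximate.
TOTAL_INPUTS = 25
shares = {
    "steering": 0.40,
    "error recovery": 0.35,
    "quality correction": 0.25,
}

counts = {kind: share * TOTAL_INPUTS for kind, share in shares.items()}
# Everything that is not steering counts as supervision.
supervision_share = shares["error recovery"] + shares["quality correction"]

for kind, n in counts.items():
    print(f"{kind}: about {n:.0f} inputs")
print(f"supervision: {supervision_share:.0%} of inputs")
```

That works out to about 10 steering inputs against about 15 spent on recovery and correction.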

The Governor Is Off

Before AI coding tools, there was a natural ceiling on how much a developer could produce in a day. Typing speed, research time, compilation cycles, and the sheer effort of looking things up. That ceiling was frustrating, but it was also a governor. It enforced recovery time. It created natural pauses where the brain could consolidate, question, and redirect.

AI removed the governor.

A UC Berkeley team embedded at a technology company documented what happened. Rather than saving time, AI tools often intensify work. Enthusiastic adopters took on more work, worked faster, and multitasked at an unsustainable rate. Workers were observed prompting AI during lunch, in spare moments between tasks, and just before leaving, so agents could work while they were away. The workday had fewer pauses and more continuous cognitive engagement.

A study of 500 developers found a 19.6% rise in out-of-hour commits among engineers using AI tools. Output went up. So did the hours. The work didn’t compress. It expanded to fill the new capacity.

The wiki session followed this pattern exactly. The compilation took roughly 45 seconds per topic. With 51 topics, that’s 38 minutes of model computation. During those 38 minutes, a rational person would step away. I didn’t. I monitored output, tailed logs, checked file counts, and re-armed monitors. When a batch failed, I diagnosed it immediately. When the context window filled and the session was compacted, I re-verified the assumptions immediately. There was no natural stopping point because the system was always doing something.

Simon Willison, with 25 years of development experience, named the constraint directly: “There is a limit on human cognition, in how much you can hold in your head at one time. And it’s very easy to pop that stack at the moment.”

The wiki session popped the stack twice. Both times, the conversation exceeded the context limits and had to be condensed into a summary. Each compaction lost nuance: specific error messages, attempted fixes that didn’t work, and the reasoning behind a particular prompt design. After each compaction, I had to re-verify that the system still understood the project state. That verification took focused effort and delivered no forward progress.

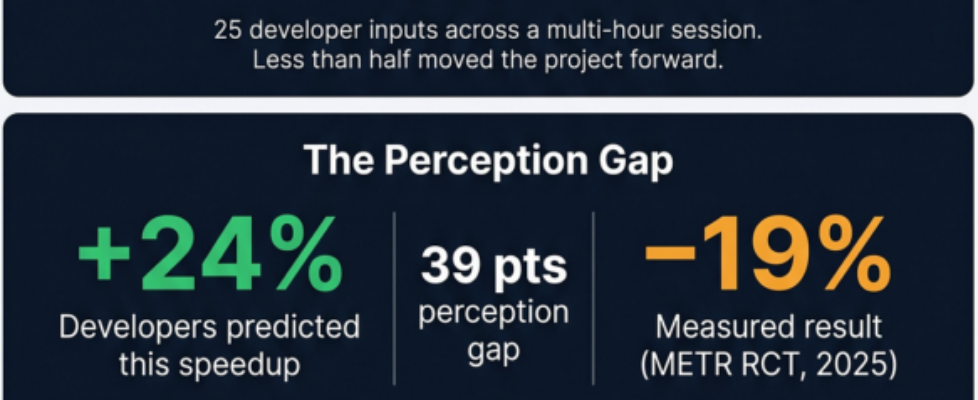

The Perception Gap

METR ran a randomized controlled trial with experienced open-source developers working on their own repositories. Before the study, developers predicted AI would make them 24% faster. After completing the study, they estimated 20% improvement. The measured result: AI increased completion time by 19%.

That’s a 39-point gap between perception and reality.

I would have told you the wiki session was enormously productive. And by output metrics, it was: 51 compiled pages, an MCP server, a Confluence pipeline, a handbook publishing system with Mermaid rendering and Confluence expand macros. A single person could not have built that in a day without the model.

But the cost was paid in a currency nobody measures. Not engineering hours. Not story points. Sustained attention, frustration tolerance, context maintenance across compaction boundaries, and the inability to look away because failures are unpredictable and compound if unaddressed. The METR finding isn’t about AI being slow. It’s about the validation overhead consuming the time that was nominally saved.

The Faros AI study of 10,000 developers confirms the mechanism at the organizational scale: high-AI-adoption teams completed 21% more tasks and merged 98% more PRs. But PR sizes grew by 154%, review time increased by 91%, bugs increased by 9%, and end-to-end DORA delivery metrics were flat. More output at the individual level. No improvement at the system level. The bottleneck shifted from generation to review, from writing to reading, from producing to validating.

Who Can Actually Do This

The people who thrive with AI coding tools are a narrow population, and even they face a specific risk.

A randomized study out of MIT found that AI use impairs conceptual understanding, code reading, and debugging abilities without delivering significant efficiency gains on average. Participants who fully delegated coding tasks showed some productivity improvements, but at the cost of learning the underlying systems. The people best positioned to use these tools effectively are precisely those with enough domain depth to catch the model’s failures. The people building that depth while relying on AI to get the work done risk both slower learning and false confidence in the output.

The cognitive offloading research draws the same line. A 2025 study found a strong negative correlation between AI tool usage and critical thinking scores. But buried in the same body of research is a second finding: generative AI boosted learning for those who engaged in deep conversations and explanations, while it hampered learning for those who sought direct answers. The difference between atrophy and amplification comes down to what the developer brings to the interaction.

In the wiki session, every failure I caught drew on knowledge the model didn’t have. The heading-demotion regex that mangled GraphQL # comments inside code blocks: I caught that because I know GraphQL syntax. The category drift where topics moved from specs/ to decisions/ on recompile, leaving orphan files: I caught that because I understood the compiler’s routing logic. The synthesis-vs-transcription failure in handbook mode: I caught that because I knew what a good handbook page should look like and could distinguish it from reformatted chat logs.

None of these catches were prompted by error messages or test failures. The system reported success each time. The model’s output was syntactically valid, passed the linter, and looked plausible. It was wrong in ways that only domain knowledge could detect.
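The heading-demotion bug is a good example of output that looks fine until someone who knows the syntax reads it. A fix along the following lines tracks whether the current line is inside a fenced code block and leaves those lines alone. This is a minimal sketch, not the session's code: the function name demote_headings is hypothetical, and the fence tracking is a simple toggle that does not handle nested or mismatched fences.

```python
import re

FENCE = re.compile(r"^\s*(```|~~~)")
HEADING = re.compile(r"^(#{1,5})(\s+\S)")  # level 6 headings cannot go deeper


def demote_headings(markdown: str) -> str:
    """Add one '#' to each heading, leaving fenced code blocks untouched."""
    out = []
    in_fence = False
    for line in markdown.split("\n"):
        if FENCE.match(line):
            in_fence = not in_fence  # toggle on each opening or closing fence
            out.append(line)
        elif in_fence:
            out.append(line)  # a GraphQL "# comment" stays exactly as written
        else:
            out.append(HEADING.sub(r"#\1\2", line, count=1))
    return "\n".join(out)


source = (
    "# Schema\n\n"
    "```graphql\n"
    "# Returns the current user\n"
    "type Query { me: User }\n"
    "```\n\n"
    "## Notes"
)
print(demote_headings(source))
```

A regex applied to the whole document cannot tell a heading from a comment, and neither can a linter that only checks that the result is valid markdown.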

Steve Yegge described crashing and falling asleep after long AI coding sessions. He called the experience “genuinely addictive” and wrote that he regrets contributing toward setting unrealistic standards. Tim Dettmers at the Allen Institute says peak productivity comes from running as many agents as possible in parallel, which requires near-constant context switching. “Agents expand what feels possible, but at the same time they really amplify this ongoing tension around focus and mental bandwidth.”

The mechanism mirrors variable-reward dynamics. Each completed task delivers a small hit. The work always feels like it’s on the cusp of something. High-functioning burnout under sustained cognitive load is not a collapse. It’s a well-documented condition: performance is maintained temporarily while mental reserves steadily erode.

The Supervision Problem Is the Product Problem

The industry is framing AI-assisted development as a tooling problem. Better models, better context windows, better code completion. The research suggests it’s a work organization problem.

The tools scaled output without scaling the human infrastructure around judgment, recovery, and validation. A developer using Claude Code is not writing code. They are supervising a probabilistic system that fails in novel ways, maintaining context across compaction boundaries, distinguishing plausible output from correct output, and doing all of this under continuous cognitive load with no natural stopping points.

That’s a different job from software engineering. It requires a different set of skills: context articulation, output validation, system orchestration, and meta-level pattern recognition. It demands sustained vigilance rather than creative flow. And it has no established practices, no ergonomics, no recovery protocols.

The 1% is calling the API. The 99% is everything that happens between the model’s response and a system that works correctly in production. The people who will build real things with AI are the ones who understand that the supervision problem is not a temporary inconvenience on the way to full automation. It is the work itself.

The author builds AI infrastructure for wealth management at Advisor360°, where this pattern plays out daily across agent development, evaluation pipelines, and production LLM systems.

The Other 99% was originally published in Towards AI on Medium.