TAI #195: GPT-5.4 and the Arrival of AI Self-Improvement?

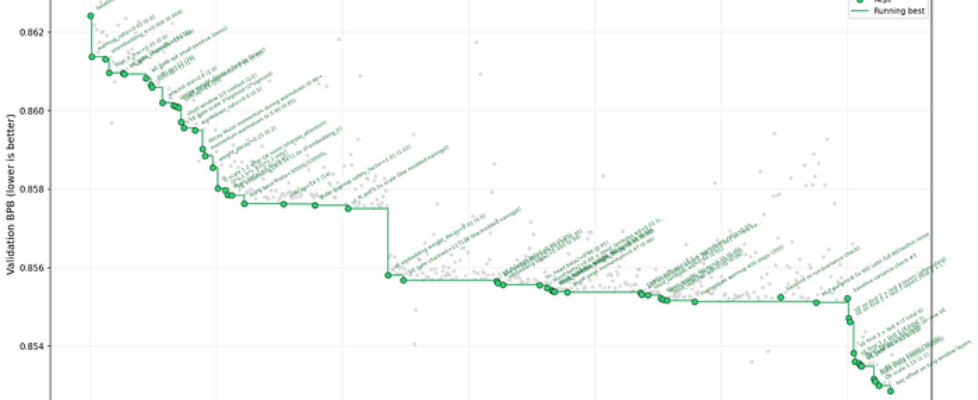

Author(s): Towards AI Editorial Team

Originally published on Towards AI.

What happened this week in AI by Louie

Two stories that look unrelated dominated this week, and they tell the same story. On Wednesday, OpenAI released GPT-5.4, its most work-oriented frontier model to date. On Sunday, Andrej Karpathy posted results from his autoresearch experiment, showing that AI agents can autonomously find real, transferable improvements to neural network training. I think this combination marks a turning point: AI is becoming a closed-loop improver of its own stack.

OpenAI released GPT-5.4 on March 5 as GPT-5.4 Thinking in ChatGPT, gpt-5.4 and gpt-5.4-pro in the API, and GPT-5.4 in Codex. It folds GPT-5.3-Codex’s coding strengths into the mainline model and adds native computer use, tool search, an opt-in 1M-token context window (272K default), native compaction, and a steerable preamble in ChatGPT that lets users redirect the model mid-task. Pricing has stepped up to $2.50/$15 per million input/output tokens for the base model and $30/$180 for Pro, although improved token efficiency largely cancels this out in our tests. Requests exceeding 272K input tokens cost 2x more.

The release cadence is also notable: GPT-5.2 in December, GPT-5.3-Codex on February 5, Codex-Spark on February 12, GPT-5.3 Instant on March 3, and GPT-5.4 on March 5. An OpenAI staff member on the developer forum said it plainly: “monthly releases are here.” Progress now comes from post-training, eval loops, reasoning-time controls, tool selection, memory compaction, and product integration. The base model race still matters, but the surrounding engineering is where gains compound fastest.

GPT-5.4 is another leap in many dimensions, but not a clean knockout. On Artificial Analysis’s Intelligence Index, it ties Gemini 3.1 Pro Preview at 57. On LiveBench, GPT-5.4 Thinking xHigh barely leads Gemini 3.1 Pro Preview, 80.28 vs. 79.93.
On the Vals benchmark grid, the picture is splintered: GPT-5.4 leads ProofBench, IOI, and Vibe Code Bench; Gemini 3.1 Pro leads LegalBench, GPQA, MMLU Pro, LiveCodeBench, and Terminal-Bench 2.0; Claude Opus 4.6 leads SWE-bench; Claude Sonnet 4.6 leads the broad Vals composite and Finance Agent. There is no single best frontier model anymore.

OpenAI’s benchmark story this time is unusually workplace-centric. On GDPval, which tests real knowledge work across 44 occupations, GPT-5.4 achieves 83.0% vs. 70.9% for GPT-5.2. On internal spreadsheet modeling tasks, it scores 87.3% vs. 68.4%. On OSWorld-Verified for desktop navigation, it reaches 75.0%, surpassing the human baseline of 72.4% and a roughly 1.6x jump over GPT-5.2’s 47.3%. On BrowseComp, it scores 82.7%, with Pro reaching 89.3%. OpenAI reports 33% fewer false claims and 18% fewer error-containing responses vs. GPT-5.2. Mainstay reported that across roughly 30,000 HOA and property-tax portals, GPT-5.4 hit 95% first-try success and 100% within three tries, running about 3x faster while using 70% fewer tokens. On Harvey’s BigLaw Bench, it scored 91%.

Despite continued progress on GDPval, I think OpenAI still has an interface gap for white-collar work. GPT-5.4’s preamble and mid-response steering are genuinely useful. ChatGPT for Excel and the new financial-data integrations are a smart wedge into high-value workflows. But OpenAI still does not have a broad non-developer surface as friendly as Claude Cowork for delegating messy cross-file, cross-app, real-world office work. Codex and the API now have serious computer-use capability, but the overall experience still leans more technical than it probably needs to if OpenAI wants to dominate the everyday white-collar desktop.

Microsoft moved quickly on that front this week with Copilot Cowork. The company announced that it is integrating the technology behind Claude Cowork directly into Microsoft 365 Copilot, with enterprise controls, security positioning, and pricing under the existing Microsoft 365 Copilot umbrella.
That gives Microsoft a clear distribution advantage because Word, Excel, PowerPoint, Outlook, and Teams are already where a large share of office work happens. But Microsoft’s execution so far has often felt like that of a company with perfect distribution and only intermittent product urgency. OpenAI and Anthropic, by contrast, have generally been sharper at making people actually want to use the thing. Microsoft still has the installed base. The question is whether it can convert that into genuine product pull before the model labs sell their own work agents more directly into the enterprise.

The other story this week that matters just as much, even if it looks smaller on paper, is Andrej Karpathy’s autoresearch experiment. Karpathy publicly reported that after about two days of autonomous tuning on a small nanochat training loop, his LLM agent found around 20 additive changes that transferred from a depth-12 proxy model to a depth-24 model and reduced “Time to GPT-2” from 2.02 hours to 1.80 hours, roughly an 11 percent improvement. The autoresearch repository describes the setup: give an AI agent a small but real LLM training environment, let it edit the code, run short experiments, check whether validation improves, and repeat overnight.

Source: Andrej Karpathy. Autoresearch progress optimising nanochat over 2 days.

A lot of people immediately reached for the “this is just hyperparameter tuning” line. I think that misses the economic point. If an agent swarm can reliably explore optimizer settings, attention tweaks, regularization choices, data-mixture recipes, initialization schemes, and architecture details on cheap proxy runs, then promote the promising changes to larger scales, that is already an extremely valuable research process even if it does not look like a lone synthetic scientist inventing an entirely new paradigm from scratch. Frontier research is full of bounded search problems with delayed but measurable feedback.
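
The loop the autoresearch repository describes (edit, run a short experiment, check validation, repeat) can be sketched as a greedy accept-if-better search. This is only an illustrative toy, not Karpathy’s code: `toy_val_loss`, `propose_change`, and the `lr` and `warmup` settings are hypothetical stand-ins for a real short training run and for the agent’s code edits.

```python
import random


def toy_val_loss(config):
    """Hypothetical stand-in for a short training run; lower is better."""
    return 3.0 + 1000 * (config["lr"] - 0.02) ** 2 + 10 * (config["warmup"] - 0.1) ** 2


def propose_change(config, rng):
    """Hypothetical stand-in for an agent edit: perturb one setting by up to 30%."""
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] = config[key] * rng.uniform(0.7, 1.3)
    return key, candidate


def autoresearch_loop(baseline, evaluate, steps, seed=0):
    """Keep a proposed change only if the validation score improves."""
    rng = random.Random(seed)
    best = dict(baseline)
    best_loss = evaluate(best)
    accepted = []  # (setting changed, loss before, loss after)
    for _ in range(steps):
        key, candidate = propose_change(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:
            accepted.append((key, best_loss, loss))
            best, best_loss = candidate, loss
    return best, best_loss, accepted


baseline = {"lr": 0.05, "warmup": 0.3}
baseline_loss = toy_val_loss(baseline)
best, best_loss, accepted = autoresearch_loop(baseline, toy_val_loss, steps=200)
print(f"baseline loss {baseline_loss:.4f}, best loss {best_loss:.4f}")
print(f"accepted {len(accepted)} of 200 proposed changes")
```

In a real setup, the evaluation would be an actual proxy training run and the proposals would be code edits, with accepted changes re-tested at a larger scale before anyone trusts them. Nothing in the loop requires the agent to invent a new paradigm; it only needs a bounded space of edits and a score it can measure after each run.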
That is exactly the terrain where agents can start compounding.

This is the trajectory I expect from here. Labs will give swarms of agents meaningful GPU budgets to run thousands of small and medium experiments on proxy models. They will search for better attention mechanisms, better optimizer schedules, better training curricula, better post-training recipes, and better evaluation harnesses. The promising ideas will then get promoted upward through progressively larger training runs. Human experts will stay in the loop at the obvious choke points: deciding which metrics matter, spotting false positives, designing new search spaces, choosing which ideas deserve expensive scale-up, and co-designing the higher-stakes modifications once you are dealing with real parameter counts and serious training-flop […]