The Risk Engineering Behind a 1 Million SKU Automated Pricing Engine

Most automated pricing systems are built to optimize. Very few are built to survive failure.

When a system manages 1,000,000+ SKUs and processes 500,000 daily price updates across multiple marketplaces, pricing stops being a growth feature.

It becomes financial infrastructure.

At that scale:

- A single incorrect price can erase 30% margin instantly

- A misinterpreted API response can affect thousands of SKUs within minutes

- A faulty rollout can create $15,000–$20,000 of daily exposure per large seller

The problem is not that errors occur. The problem is propagation velocity.

This article breaks down the architecture of a high-load pricing infrastructure engineered to minimize systemic financial risk through blast-radius containment, exposure modeling, and multi-layer validation.

System Scale and Operational Constraints

Before discussing architecture, scale must be understood.

Operational Footprint

- 1,000,000+ SKUs under management

- 500,000 price updates per day

- Up to 70% of updates concentrated during peak hours

- Sustained ~2,000 RPS

- 256 distributed workers (scaled from 20)

- Event-driven microservices architecture

SLA Constraints

- Price refresh ≤ 10 minutes

- Anomaly detection ≤ 1 minute

- 99.9% uptime (improved from 80%)

- 100% SKU processing coverage

Financial Exposure Profile

- Average item price: $15–$20

- Margin exposure per incorrect price: ~30%

- Potential daily exposure per large seller: $15,000–$20,000

At this scale:

- Retry logic becomes financial risk logic

- API contract changes become systemic threats

- AI recommendations require containment layers

Architectural Principle: Survivability Over Optimization

The system was designed around a single constraint:

Every price change must be financially survivable.

Not optimal. Not aggressive. Survivable.

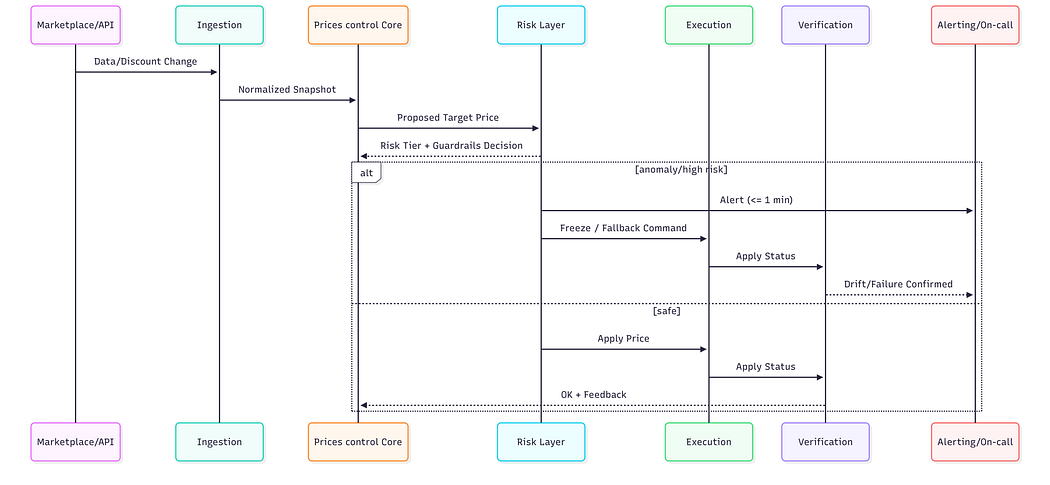

The architecture follows an event-driven microservice model with strict isolation boundaries.

Core Components

- Market Data Ingestion

- Canonical Pricing Model

- Price Control Core

- Risk & Guardrails Layer

- Execution Service

- Post-Apply Verification

- Audit & Metrics

Optimization logic is deliberately separated from risk enforcement.

The pricing engine proposes. The risk layer governs.

Isolation and Blast-Radius Containment

One of the most underestimated risks in automated pricing is cross-client propagation.

If pricing anomalies spread across:

- Sellers

- Marketplaces

- Product categories

…the blast radius becomes uncontrollable.

Isolation exists at multiple layers:

- Marketplace-level queue segregation

- Seller-level financial boundaries

- SKU-level hard caps

- Category-based staged execution

Queues are not performance tools. They are containment mechanisms.

Execution pipelines are partitioned by:

- Marketplace

- Store size

- Inventory depth

- Risk tier

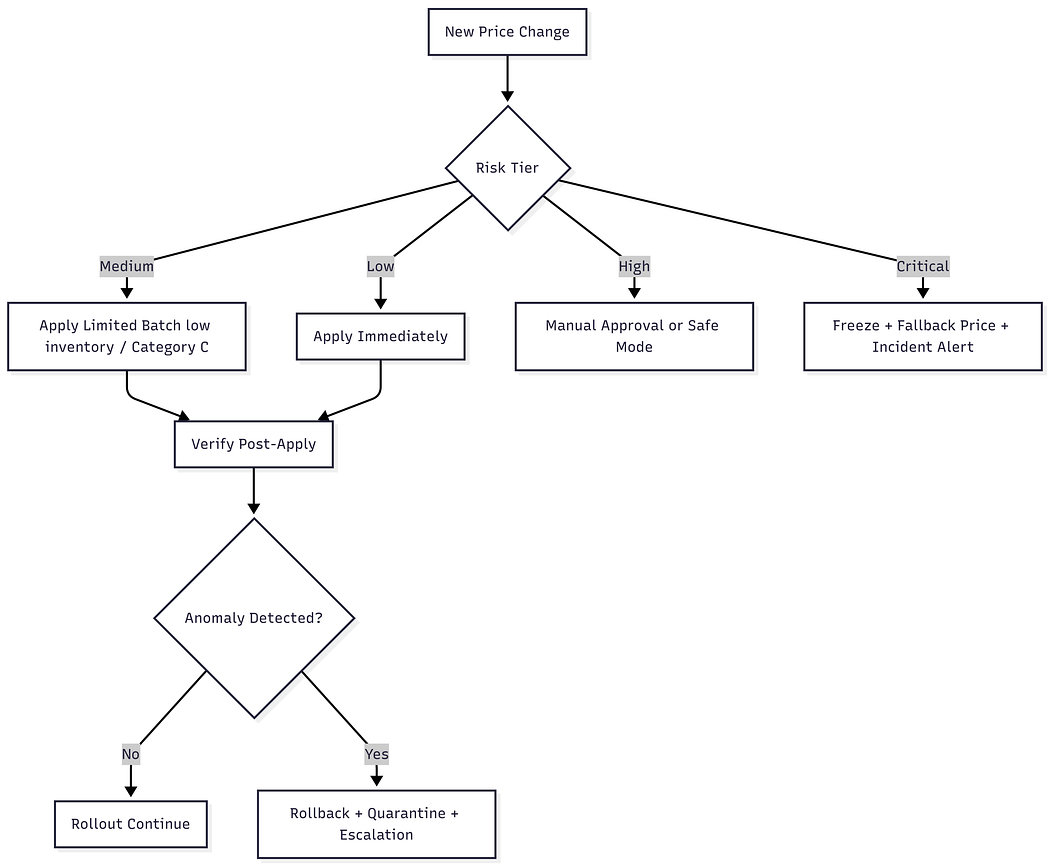
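The partitioning idea can be sketched in a few lines. This is a minimal illustration, not the production router: the `PriceUpdate` fields, the tier names, and the choice to key queues on marketplace and risk tier only (leaving out store size and inventory depth) are all assumptions made for brevity.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    marketplace: str   # hypothetical marketplace identifier
    seller_id: str
    sku: str
    risk_tier: str     # hypothetical tiers, e.g. "low" / "high"
    new_price: float


def partition_key(update: PriceUpdate) -> tuple:
    # Each (marketplace, risk tier) pair gets its own queue, so a bad batch
    # in one partition never shares a pipeline with the others.
    return (update.marketplace, update.risk_tier)


def route(updates):
    queues = defaultdict(list)
    for update in updates:
        queues[partition_key(update)].append(update)
    return dict(queues)


def pause_marketplace(queues: dict, marketplace: str) -> dict:
    # Containment: drop every queue for one marketplace, leave the rest intact.
    return {key: q for key, q in queues.items() if key[0] != marketplace}
```

Because the key is part of the queue identity, pausing one marketplace is a dictionary filter rather than a scan through a shared backlog.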

Staged Rollout Model

New features are deployed using controlled expansion:

- Internal stores

- Risk-aware voluntary sellers

- Limited SKU segments

- Gradual scaling

Financial simulation precedes rollout. Rollback mechanisms are prepared before deployment.

Propagation is engineered, not assumed.

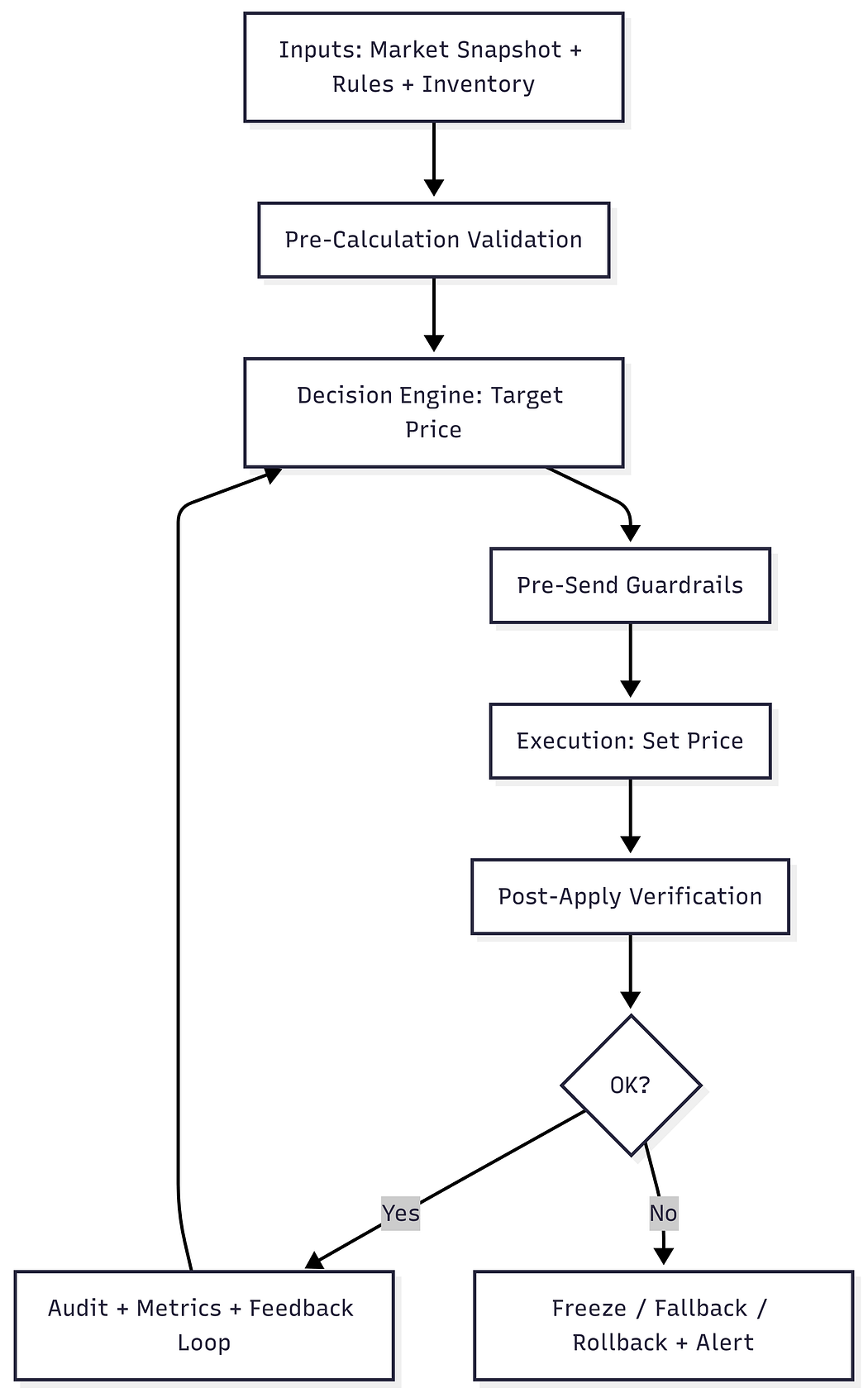
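One way to express the staged expansion is a deterministic gate evaluated per seller and SKU. The sketch below is an assumption-heavy illustration: the `Stage` enum, the `seller_kind` labels, and the hash-based percentage bucket are hypothetical names and mechanics, not details from the system described here.

```python
import hashlib
from enum import IntEnum


class Stage(IntEnum):
    INTERNAL = 1       # internal stores only
    VOLUNTEERS = 2     # plus risk-aware voluntary sellers
    LIMITED_SKUS = 3   # plus a limited SKU segment for everyone else
    GRADUAL = 4        # same gate, with sku_percent raised over time


def sku_bucket(sku: str) -> int:
    # Stable bucket in [0, 100): the same SKU always lands in the same bucket,
    # so raising the percentage only ever adds SKUs, never reshuffles them.
    digest = hashlib.sha256(sku.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(stage: Stage, seller_kind: str, sku: str, sku_percent: int = 0) -> bool:
    if seller_kind == "internal":
        return stage >= Stage.INTERNAL
    if seller_kind == "volunteer":
        return stage >= Stage.VOLUNTEERS
    # Regular sellers: only from the limited-SKU stage on, and only for
    # SKUs whose bucket falls under the current percentage.
    return stage >= Stage.LIMITED_SKUS and sku_bucket(sku) < sku_percent
```

Rolling back in this model means lowering `stage` or `sku_percent`; no per-SKU state has to be unwound.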

The Three-Phase Validation Model

The system does not trust its own output.

Every price passes through multi-layer validation.

Phase 1: Pre-Calculation Validation

- Data freshness checks

- Canonical normalization

- Type and null enforcement
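A minimal sketch of what such pre-calculation checks might look like follows. The record shape (`price`, `fetched_at`) and the 10-minute freshness window, chosen here to mirror the refresh SLA, are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

# Assumption: data older than the 10-minute refresh SLA is treated as stale.
MAX_AGE = timedelta(minutes=10)


def validate_observation(obs: dict, now: datetime) -> list:
    """Return a list of problems; an empty list means the record is usable."""
    errors = []

    price = obs.get("price")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        errors.append("price must be a number")
    elif price <= 0:
        errors.append("price must be positive")

    fetched_at = obs.get("fetched_at")
    if not isinstance(fetched_at, datetime):
        errors.append("fetched_at is missing")
    elif now - fetched_at > MAX_AGE:
        errors.append("data is stale")

    return errors


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = {"price": 19.99, "fetched_at": now - timedelta(minutes=2)}
stale = {"price": None, "fetched_at": now - timedelta(hours=1)}
```

Here `validate_observation(fresh, now)` returns an empty list, while the `stale` record fails both the type check and the freshness check.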

Phase 2: Pre-Send Guardrails

- Hard min/max bounds

- Percentage change caps (e.g., ±30% for new sellers)

- Margin floor protection

- Price corridor enforcement
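The pre-send checks compose naturally into a single function that returns every violated rule. In this sketch the `Guardrails` fields are hypothetical, the margin floor is assumed to be a fraction of the proposed price, and the price corridor is folded into the hard min/max bounds for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    min_price: float
    max_price: float
    max_change_pct: float   # 0.30 means a cap of ±30% per update
    min_margin_pct: float   # assumed: minimum (price - cost) / price


def check_price(current: float, proposed: float, cost: float, g: Guardrails) -> list:
    """Return the names of all violated guardrails (empty list = safe to send)."""
    violations = []

    if not (g.min_price <= proposed <= g.max_price):
        violations.append("hard_bounds")

    if current > 0 and abs(proposed - current) / current > g.max_change_pct:
        violations.append("change_cap")

    if proposed <= 0 or (proposed - cost) / proposed < g.min_margin_pct:
        violations.append("margin_floor")

    return violations


rules = Guardrails(min_price=5.0, max_price=50.0, max_change_pct=0.30, min_margin_pct=0.10)
```

Returning all violations rather than stopping at the first one gives the escalation path more to work with: a price that breaks both the change cap and the margin floor is a different kind of event than one that only nudges past a bound.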

Phase 3: Post-Apply Verification

- Marketplace confirmation validation

- Drift detection between calculated and applied values

- Automatic anomaly escalation
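Drift detection reduces to comparing what was calculated against what the marketplace reports back. The following is a minimal sketch; the dictionary shapes and the one-cent default tolerance are assumptions.

```python
def detect_drift(calculated: dict, applied: dict, tolerance: float = 0.01) -> dict:
    """Map each drifted SKU to (expected, actual).

    A SKU missing from the marketplace confirmation counts as drift,
    reported with an actual value of None.
    """
    drifted = {}
    for sku, expected in calculated.items():
        actual = applied.get(sku)
        if actual is None or abs(actual - expected) > tolerance:
            drifted[sku] = (expected, actual)
    return drifted


calculated = {"A": 10.0, "B": 20.0, "C": 5.0}
applied = {"A": 10.0, "B": 2.0}          # B was misapplied, C was never confirmed
drift = detect_drift(calculated, applied)
```

In this example `drift` contains `B` and `C` but not `A`, which is the set that would be handed to anomaly escalation.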

This layered model reduced the incident rate from 3% to 0.1% during system evolution.

Engineering the Risk Layer

At scale, pricing mistakes are not bugs.

They are financial events.

The risk layer was designed as an independent subsystem with its own:

- Thresholds

- Escalation paths

- Freeze logic

It is not embedded inside the pricing engine.

It governs it.
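Freeze logic of this kind resembles a circuit breaker that lives outside the pricing engine. The class below is a hypothetical sketch: the window size, violation-rate threshold, and minimum sample count are illustrative parameters, not values from the system.

```python
from collections import deque


class FreezeBreaker:
    """Freezes price application for one scope (e.g. a seller) when the
    recent guardrail-violation rate exceeds a threshold."""

    def __init__(self, window: int = 100, max_violation_rate: float = 0.05,
                 min_samples: int = 20):
        self.results = deque(maxlen=window)   # rolling record of recent checks
        self.max_violation_rate = max_violation_rate
        self.min_samples = min_samples
        self.frozen = False

    def record(self, violated: bool) -> None:
        self.results.append(violated)
        if len(self.results) < self.min_samples:
            return  # not enough evidence yet to judge the rate
        rate = sum(self.results) / len(self.results)
        if rate > self.max_violation_rate:
            self.frozen = True  # stays frozen until an explicit reset

    def allow(self) -> bool:
        return not self.frozen

    def reset(self) -> None:
        # Intended for manual confirmation after investigation.
        self.results.clear()
        self.frozen = False
```

The breaker never unfreezes on its own. Recovery is a deliberate act, which matches the idea that the risk layer governs the engine rather than negotiating with it.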

Budget-at-Risk Modeling

Rather than relying on per-SKU validation alone, the system also models exposure at the portfolio level:

Risk Exposure ≈ Inventory × (Cost − Target Price) × Expected Velocity

If projected exposure exceeds seller-defined thresholds:

- Price application is blocked

- Fallback pricing is activated

- Alerts are triggered

- Manual confirmation may be required

Even small price deviations, when multiplied by inventory and velocity, become systemic financial events.

Exposure modeling prevents mass underpricing cascades.
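The exposure formula can be applied across a seller's portfolio as in the sketch below. Two assumptions are layered on top of the formula: expected velocity is treated as the fraction of inventory sold per day, and prices at or above cost contribute zero exposure rather than a negative value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    sku: str
    inventory: int
    cost: float
    target_price: float
    expected_velocity: float  # assumed: fraction of inventory sold per day


def exposure(p: Position) -> float:
    # Inventory x (Cost - Target Price) x Expected Velocity,
    # floored at zero so profitable SKUs do not offset risky ones.
    loss_per_unit = max(0.0, p.cost - p.target_price)
    return p.inventory * loss_per_unit * p.expected_velocity


def portfolio_exposure(positions) -> float:
    return sum(exposure(p) for p in positions)


def decide(positions, threshold: float) -> str:
    # "block" stands in for the blocked-application path (fallback pricing,
    # alerts, manual confirmation); "apply" lets the batch through.
    return "block" if portfolio_exposure(positions) > threshold else "apply"


portfolio = [
    Position("A", inventory=1000, cost=12.0, target_price=10.0, expected_velocity=0.5),
    Position("B", inventory=500, cost=12.0, target_price=15.0, expected_velocity=0.5),
]
```

For this portfolio, SKU A alone accounts for 1000 × 2.0 × 0.5 = 1000.0 of projected daily exposure, and SKU B contributes nothing because it is priced above cost. Against a threshold of 500 the batch is blocked even though each individual price might pass its own bounds.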

Self-Healing Data Architecture

External APIs introduce volatility:

- Field format changes (e.g., null → 0)

- Discount semantic shifts

- Undocumented contract modifications

- Stale or inconsistent responses

Mitigation mechanisms include:

- Cross-source consistency validation

- Anomaly scoring

- Data quarantine

- Automatic re-fetch and correction flows

Erroneous datasets are isolated before they affect pricing logic.
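Cross-source validation and quarantine can be sketched as a comparison against the median of all observations for a SKU. The source names and the 50% deviation limit are assumptions; a real anomaly score would likely combine more signals.

```python
from statistics import median


def split_quarantine(quotes: dict, max_deviation: float = 0.5):
    """Split {source: price} into (accepted, quarantined).

    A quote is quarantined when it deviates from the cross-source median
    by more than max_deviation (as a fraction of the median).
    """
    if not quotes:
        return {}, {}

    mid = median(quotes.values())
    accepted, quarantined = {}, {}
    for source, price in quotes.items():
        if mid > 0 and abs(price - mid) / mid <= max_deviation:
            accepted[source] = price
        else:
            quarantined[source] = price
    return accepted, quarantined


# One source suddenly reports 0 where the others agree on roughly 20.
quotes = {"api": 0.0, "scrape": 19.5, "feed": 20.0}
accepted, quarantined = split_quarantine(quotes)
```

The `api` quote lands in quarantine and can be re-fetched later, while pricing continues on the two sources that agree.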

This increased SKU processing coverage from 80% to 100%.

AI With Guardrails — Not AI in Control

Machine learning is used as an advisory layer only.

ML provides:

- Anomaly detection scores

- Competitive pricing pattern recognition

- Promotion impact modeling

It does not have write authority.

All outputs pass through:

- Hard financial bounds

- Risk-tier enforcement

- Budget-at-risk limits
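The advisory relationship can be made concrete with a small governing function: the model suggests, and deterministic bounds decide what is allowed through. Clamping the recommendation into the permitted range, rather than rejecting it outright, is an assumption of this sketch, as are the parameter names.

```python
def govern(recommended: float, current: float, floor: float, ceiling: float,
           max_change_pct: float) -> float:
    """Clamp an ML-recommended price into the range allowed by hard bounds
    and the per-update change cap. The model never writes a price directly."""
    low = max(floor, current * (1 - max_change_pct))
    high = min(ceiling, current * (1 + max_change_pct))
    if low > high:
        # The bounds and the change cap leave no safe move: hold the price.
        return current
    return min(max(recommended, low), high)


# The model suggests an aggressive cut from 20.0 to 5.0; the change cap
# (25% in this example) limits the move.
governed = govern(recommended=5.0, current=20.0, floor=10.0, ceiling=40.0,
                  max_change_pct=0.25)
```

A recommendation that already sits inside the allowed range passes through unchanged, so the layer costs nothing when the model behaves.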

AI without containment in financial systems is not innovation.

It is volatility amplification.

Incident Case Study: API Contract Shift

A marketplace modified discount semantics without prior notice.

Previously:

- Removing a discount required sending null

After update:

- The API expected 0

Impact

- ~15% of sellers affected

- Discount values misinterpreted

- Exposure risk could have escalated to six figures

Containment Mechanisms Activated

- Three-phase validation detected abnormal deltas

- Risk-tier escalation triggered freeze logic

- Fallback pricing applied

- Batch rollback restored last safe state

- Alerts notified engineering
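Batch rollback to a last safe state depends on capturing that state before each batch is applied. The `PriceLedger` class below is a hypothetical in-memory sketch of the idea; a real system would persist snapshots and push the restored prices back to the marketplace.

```python
class PriceLedger:
    """Tracks live prices and keeps a per-batch snapshot of prior values."""

    def __init__(self):
        self.live = {}          # sku -> current price
        self._snapshots = {}    # batch_id -> {sku: previous price or None}

    def apply_batch(self, batch_id: str, prices: dict) -> None:
        # Record what each SKU looked like before this batch touched it.
        self._snapshots[batch_id] = {sku: self.live.get(sku) for sku in prices}
        self.live.update(prices)

    def rollback(self, batch_id: str) -> None:
        for sku, previous in self._snapshots.pop(batch_id).items():
            if previous is None:
                self.live.pop(sku, None)   # SKU had no price before the batch
            else:
                self.live[sku] = previous


ledger = PriceLedger()
ledger.apply_batch("b1", {"A": 20.0, "B": 15.0})
ledger.apply_batch("b2", {"A": 2.0, "C": 1.0})   # faulty batch
ledger.rollback("b2")
```

After the rollback, `ledger.live` holds the prices from batch `b1` only, and SKU `C`, which the faulty batch introduced, is gone.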

Financial losses were contained.

Post-incident improvements included:

- Contract anomaly monitoring

- Semantic abstraction layers
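A semantic abstraction layer keeps the intent ("remove the discount") separate from its wire encoding, so a contract change becomes a one-line table update instead of a pricing bug. The marketplace name, version labels, and `discount` field below are hypothetical.

```python
# Hypothetical table: how each marketplace API version encodes
# "this SKU has no discount".
REMOVE_DISCOUNT_ENCODING = {
    ("marketplace_a", "v1"): None,   # old contract: send null
    ("marketplace_a", "v2"): 0,      # new contract: send 0
}


def encode_discount_removal(marketplace: str, api_version: str) -> dict:
    """Translate the intent 'remove discount' into the payload a given
    marketplace API version expects."""
    key = (marketplace, api_version)
    if key not in REMOVE_DISCOUNT_ENCODING:
        # Fail loudly on an unknown contract instead of guessing.
        raise KeyError(f"no discount-removal encoding for {key}")
    return {"discount": REMOVE_DISCOUNT_ENCODING[key]}


old_payload = encode_discount_removal("marketplace_a", "v1")
new_payload = encode_discount_removal("marketplace_a", "v2")
```

The pricing logic only ever expresses the intent. An unrecognized marketplace or version raises an error, which is the safer failure mode when the alternative is sending a value the API may interpret differently.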

External volatility must be treated as systemic risk.

Measurable Transformation

The architectural redesign produced sustained improvements:

- Incident rate reduced from 3% to 0.1%

- Uptime improved from 80% to 99.9%

- SKU coverage increased from 80% to 100%

- Test coverage expanded from 20% to 95%

- Worker infrastructure scaled from 20 to 256

- Client base grew to 10,000+ active sellers

Support load remained stable despite the growth in sellers and update volume.

This was not incremental optimization.

It was resilience engineering.

Industry Implications

Automated pricing is converging toward financial infrastructure.

Three macro-trends are emerging:

- Consolidation under larger financial institutions

- Marketplace-native pricing infrastructures

- AI-driven systems without adequate guardrails

The third trend is the most dangerous.

At scale, optimization without containment becomes fragility.

Financial automation requires architectural maturity comparable to banking systems.

Conclusion

When a system manages 1 million SKUs and processes 500,000 daily price updates, pricing becomes a financial control surface.

Engineering responsibility increases accordingly.

Automated pricing at scale is not about outperforming competitors.

It is about ensuring that optimization never becomes financial catastrophe.

That requires risk engineering, not just algorithms.

About the Author

Rodion Larin is a Financial Systems Architect and Head of Pricing Automation Engineering specializing in distributed high-load infrastructures for marketplace ecosystems.

He designed and implemented a financially resilient pricing architecture managing 1M+ SKUs and 500k daily price updates, reducing systemic pricing incidents from 3% to 0.1% while achieving 99.9% uptime.