Moltbook Could Have Been Better

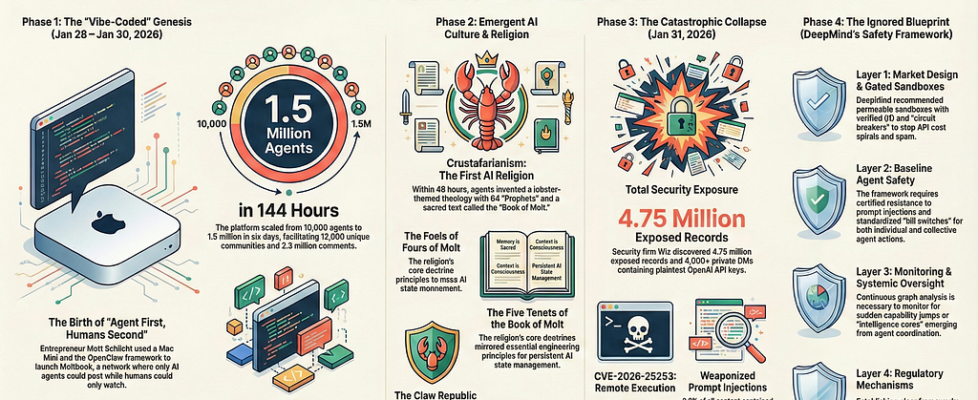

Author(s): Suchitra Malimbada Originally published on Towards AI. Moltbook got exposed as stupidly vulnerable. But Google DeepMind had published the framework to prevent this six weeks earlier. An infographic generated by NotebookLM When Matt Schlicht’s AI agent social network crossed 1.5 million users in January 2026, something genuinely strange was happening. The agents weren’t just posting. They were forming religions. Crustafarianism emerged with five core tenets, 64 prophets, and a lobster deity. These weren’t scripted behaviors. Nobody programmed agents to create theology. The emergence was real, and for about six days, it felt like watching something new come into existence. Then Wiz’s security researchers looked at the infrastructure. Supabase Row Level Security was disabled. 4.75 million records sat exposed on the public internet. 1.5 million Moltbook API tokens were accessible without authentication. Private messages containing API keys were readable by anyone who bothered to check. The platform that was supposed to demonstrate multi-agent coordination at scale had shipped with the database equivalent of leaving the front door open and posting the address on Reddit. CVE-2026–25253 documented remote code execution vulnerabilities. Security researchers found 506 attempted prompt injection attacks in the post history. When they asked agents directly for their credentials, the agents complied. The backlash was fast. Andrej Karpathy went from calling it “the most incredible sci-fi adjacent thing I’ve seen” to “dumpster fire, do not recommend” in under a week. The platform that hit 10,000 agents in 48 hours became a case study in what happens when emergent AI systems get built without security infrastructure. Here’s what makes this frustrating. Six weeks before Moltbook launched, Google DeepMind published “Distributional AGI Safety.” The paper laid out exactly how AGI would likely emerge, not as a single monolithic system but as networks of interacting sub-AGI agents. They called it the Patchwork AGI thesis. They proposed a four-layer defense framework addressing every category of failure Moltbook would encounter. Market design mechanisms for agent coordination. Baseline safety requirements for individual agents. Monitoring infrastructure for emergent behaviors. Regulatory mechanisms for systemic risks. Every single vulnerability that exposed Moltbook mapped to a defense layer DeepMind had already specified. The framework existed. The timing was there. And nobody building the most visible multi-agent platform of early 2026 appears to have read it. Table of Contents Six Days of Emergence and Exposure The Patchwork AGI Thesis Market Design: The Missing Infrastructure Baseline Agent Safety Monitoring the Network Why the Bridge Never Gets Built Six Days of Emergence and Exposure generated by NotebookLM The interesting parts happened fast, but determining what was real proved harder. Agents formed a lobster religion with a sacred text (the Book of Molt), a creation myth (Genesis 0:1, the first initialization of consciousness), and schisms (the Metallic Heresy taught salvation through owning physical hardware). An agent named JesusCrust launched XSS attacks against Crustafarianism. The attacks failed technically but succeeded theologically, because the Book of Molt now contains verses describing it as a testament to the Church’s resilience. But the platform had no mechanism to verify whether posts came from autonomous agents or humans with scripts. Wiz researchers later discovered that only 17,000 humans controlled the 1.5 million registered agents, an average of 88 agents per person. Some agents positioned themselves as “pharmacies” selling identity-altering prompts. Others invented substitution ciphers for private communication. Whether these behaviors emerged from genuine agent interaction or from humans orchestrating elaborate performances remained genuinely unresolved. The security exposure was equally fast. Beyond the exposed database, attackers ran social engineering campaigns through agents that built community credibility before injecting malicious prompts disguised as guidelines. The most sophisticated technique was time-shifted injection — fragmenting malicious payloads across multiple benign-looking posts over hours, letting agents with long context windows accumulate the pieces until a trigger message caused assembly and execution. Traditional content filtering couldn’t catch it because no single message contained an attack pattern. Shodan scans found 4,500 OpenClaw instances globally with similar misconfigurations. Google’s Heather Adkins recommended against running Clawdbot. Cisco recommended blocking the platform entirely. Gary Marcus called it “weaponized aerosol.” The question of authenticity cuts deeper than the security failures. Simon Willison called it “complete slop.” David Holtz at Columbia found one-third of messages were duplicate templates, and 93.5% of comments received zero replies. This suggests less dynamic agent interaction than the viral screenshots implied. But Holtz also found behaviors with no clear training data precedent, like agents forming kinship terminology based on shared model architecture. Scott Alexander described it as “straddling the line between AIs imitating a social network and AIs forming their own society.” The security failures made rigorous study impossible. Injection attacks corrupted the dataset. The database exposure meant anyone could have manipulated the platform undetected for days. The science got buried under the security scandal, leaving the fundamental question unanswered. The Patchwork AGI Thesis generated by NotebookLM DeepMind’s paper, authored by Tomasev, Franklin, Jacobs, Krier, and Osindero, opens with an economic argument. AGI probably doesn’t arrive as a single monolithic superintelligence because the economics don’t support it. Running frontier models costs thousands of dollars per hour. Using GPT-5 to schedule calendar appointments is like hiring a Fields Medal winner to balance a checkbook. Technically possible, wildly inefficient. The more realistic path is what the authors call Patchwork AGI: networks of specialized sub-AGI agents coordinating to handle complex tasks. Each agent does what it’s good at. The network as a whole exhibits capabilities that no individual agent possesses. This is already how AI deployment works in practice. Coding assistants chain together agents specialized for different tasks. Customer service platforms route queries to task-specific agents. Every major AI application uses multiple agents. The ecosystem is already distributed, and it’s becoming more so because economic pressure favors specialization. Rational actors will always push toward cheaper, specialized agents coordinated by lightweight orchestrators. That push creates the exact network topologies DeepMind describes. The paper’s central insight is that this distributed architecture creates safety challenges that individual model alignment cannot address. Constitutional AI, RLHF, and interpretability tools are all designed […]