How AI Actually Thinks – Explained So a 13-Year-Old Gets It

Tokens, training, context windows, and temperature — the four concepts that explain everything about large language models.

You know how your phone suggests the next word when you’re texting? Type “I’m going to the” and it suggests “store” or “park.”

Now imagine that autocomplete was trained on every book, every website, every conversation ever written — and instead of suggesting one word, it could write entire essays, solve math problems, and generate working code.

That’s fundamentally what a Large Language Model does. And once you understand four concepts (tokens, training, context windows, and temperature) you’ll know more about how AI works than most people who use it daily.

No PhD required.

Concept 1: Tokens — How AI Reads

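AI doesn’t read letters or words the way you do. It reads tokens: chunks of text that average about 3–4 characters each in English. A short, common word is often a single token, while a longer or rarer word gets split into pieces.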

Here’s how Claude sees the sentence “The quick brown fox jumped.”


Notice how “jumped” becomes two tokens: “jump” and “ed.” Chunks like “-ed” get their own token because they show up so often, and from seeing them in millions of sentences the model learns that “-ed” usually signals past tense.
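To make the splitting concrete, here is a minimal sketch of a toy tokenizer. It is not how Claude's real tokenizer works. The tiny `VOCAB` set and the `tokenize` function are made up for illustration, and the sketch assumes that a space sticks to the word that follows it. The idea it shows is simple: always grab the longest chunk the vocabulary knows.

```python
# Hypothetical mini-vocabulary, invented for this example.
# Real tokenizers learn tens of thousands of chunks from data.
VOCAB = {"The", " quick", " brown", " fox", " jump", "ed", "."}


def tokenize(text, vocab=VOCAB):
    """Split text into tokens by greedy longest match against vocab."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible chunk first, then shrink until one matches.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing matched: fall back to a single-character token.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("The quick brown fox jumped."))
# ['The', ' quick', ' brown', ' fox', ' jump', 'ed', '.']
```

Because " jumped" is not in the toy vocabulary but " jump" and "ed" are, the word comes out as two tokens, just like in the sentence above.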

Concept 2: Training — How AI Learns

Here’s the key insight: AI learns by reading, not by being programmed.
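A tiny sketch can show what "learning by reading" means. The code below is a drastically simplified stand-in for a real model, closer to the phone autocomplete from the intro. The `train` and `suggest` functions and the three-sentence `corpus` are invented for this example. Nobody writes a rule saying "store comes after the". The program just counts what it reads.

```python
from collections import Counter, defaultdict


def train(corpus):
    """Count which word follows which in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def suggest(counts, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up training text for illustration.
corpus = [
    "I'm going to the store",
    "We walked to the store",
    "They drove to the park",
]
model = train(corpus)
print(suggest(model, "the"))  # store
```

Change the sentences in `corpus` and the suggestion changes with them, without touching a single line of the logic. A real LLM learns far richer patterns than word pairs, but the principle of learning from text rather than from hand-written rules is the same.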

The Library Resident Analogy

Imagine a brilliant person who has read every book in the world’s largest library — every novel, every textbook, every forum post, every code repository — billions of pages. But they have never stepped outside the library.

They don’t memorize specific facts word-for-word. Instead, their brain builds compressed representations of the world. They understand that water flows downhill, that functions need return values, and that sadness follows loss.

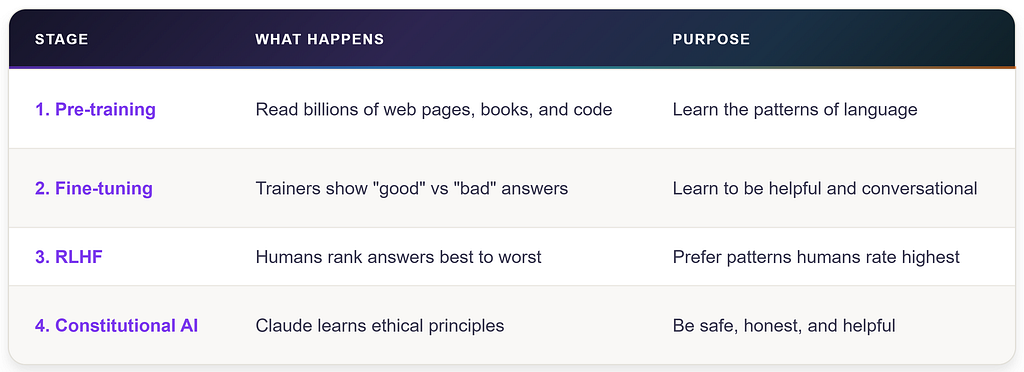

The Four Stages of Training

Concept 3: The Context Window — AI’s Working Memory

Imagine you have a whiteboard. Everything you write on it — your questions, Claude’s answers, files you share — all goes on the whiteboard. Claude can only see what’s currently on the whiteboard. If it fills up, older content gets erased from the top.

That whiteboard is the context window.
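Here is a minimal sketch of the whiteboard idea in code. Everything in it is an assumption made for illustration: the `Whiteboard` class is invented, `count_tokens` crudely treats every word as one token, and real apps may handle a full context window differently (for example by summarizing or by refusing new input).

```python
from collections import deque


def count_tokens(text):
    # Crude stand-in: one token per whitespace-separated word.
    return len(text.split())


class Whiteboard:
    """A toy context window that erases the oldest messages when full."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.messages = deque()
        self.used = 0

    def write(self, message):
        self.messages.append(message)
        self.used += count_tokens(message)
        # Erase from the top until everything fits again,
        # but always keep the newest message.
        while self.used > self.max_tokens and len(self.messages) > 1:
            oldest = self.messages.popleft()
            self.used -= count_tokens(oldest)

    def visible(self):
        return list(self.messages)


board = Whiteboard(max_tokens=8)
board.write("My name is Sam")       # 4 tokens, 4 used
board.write("What is a token")      # 4 tokens, 8 used
board.write("And what is my name")  # 5 tokens, over budget, so erase
print(board.visible())
# ['And what is my name']
```

By the time the last question arrives, the message containing the name has been erased. In this sketch, that is why a model in a very long chat can "forget" something you told it at the start.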

Concept 4: Temperature — The Creativity Dial

Every time Claude generates a response, a temperature setting (a number between 0 and 1) controls how predictable or creative the output is. Near 0, Claude almost always picks the most likely next token. Near 1, less likely tokens get a real chance.
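The usual math behind a temperature dial can be sketched in a few lines. This is an illustration of the general technique (dividing scores by the temperature before turning them into probabilities), not Claude's actual code. The `scores` for each word are made up, and the function names are hypothetical.

```python
import math
import random


def next_word_probs(scores, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature == 0:
        # Temperature 0: always pick the single highest-scoring word.
        best = max(scores, key=scores.get)
        return {word: (1.0 if word == best else 0.0) for word in scores}
    scaled = {word: score / temperature for word, score in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {word: math.exp(value - top) for word, value in scaled.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


def pick_word(scores, temperature, rng=random):
    """Roll the weighted dice once and return the chosen word."""
    probs = next_word_probs(scores, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]


# Made-up scores for what might follow "I'm going to the".
scores = {"store": 2.0, "park": 1.5, "moon": 0.1}
for t in (0, 0.5, 1.0):
    rounded = {w: round(p, 2) for w, p in next_word_probs(scores, t).items()}
    print(t, rounded)
```

Run it and watch the numbers: at 0 all the probability sits on "store", and as the temperature rises "park" and even "moon" get a bigger share.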

The Radio Dial Analogy

The Implication Everyone Misses

Every Claude response is a roll of weighted dice. Ask the same question five times and, unless the temperature is turned all the way down, you will usually get five slightly different answers. This isn’t a bug. It’s a fundamental feature.

The critical insight: one response is an anecdote. Multiple responses are data. If you care about quality, don’t evaluate based on a single output. This is the seed of evaluation thinking — and it separates casual AI users from professional ones.
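Here is a rough sketch of what "multiple responses are data" looks like in practice. The `fake_model` function is a hypothetical stand-in for a real model call, and its 80/20 odds are invented. With a real model you would swap in an actual API call and a real way of checking answers.

```python
import random
from collections import Counter


def fake_model(question, rng):
    # Hypothetical stand-in for a real model call:
    # right most of the time, wrong some of the time (made-up odds).
    return rng.choices(["4", "5"], weights=[0.8, 0.2], k=1)[0]


def evaluate(question, expected, runs, seed=0):
    """Ask the same question many times and measure how often it is right."""
    rng = random.Random(seed)
    answers = Counter(fake_model(question, rng) for _ in range(runs))
    accuracy = answers[expected] / runs
    return answers, accuracy


answers, accuracy = evaluate("What is 2 + 2?", expected="4", runs=100)
print(answers, accuracy)
```

A single call might return "5" and make the model look broken, or "4" and make it look perfect. Only the tally over many runs tells you how reliable it really is.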

What AI Is and Isn’t

Five Things AI Cannot Do

- No real-time internet. On its own, Claude can’t browse the web during a conversation. It can only look things up if the app it runs in gives it a search tool.

- No persistent memory. Each conversation starts from zero.

- No physical actions. Claude can’t click buttons or send emails on its own.

- Training cutoff. Events after training are unknown unless you provide them.

- No learning from you. Correcting Claude in one chat doesn’t improve future chats.


Originally published in Towards AI on Medium.