Generative AI: How Generative AI Tools Generate New Content From User Prompts…

AI tools such as ChatGPT, Google Gemini and Claude are growing rapidly. These tools can generate many types of content, including text, images, computer code and even music, from simple prompts provided by users.

How do these systems generate entirely new content from just a user prompt?

In this article, we will explore how generative AI systems create content from user prompts. We will begin by understanding what GenAI is and then look at key ideas such as tokenization and next-word prediction. By the end, you will have a clear understanding of the mechanisms that allow AI tools to generate meaningful responses from simple user prompts.

What is Generative AI?

Generative AI (GenAI) is a type of AI that can create new content. It is a subset of Deep Learning (DL) in which models are trained to generate output on their own. These models can create a wide range of outputs, including text, images, music, code, video and other types of data. Many modern AI tools use this capability to assist users in writing, designing, programming, and solving problems.

Language Models (LMs)

A language model is a type of artificial intelligence system designed to understand and generate human language. It learns patterns in text by analyzing massive amounts of written data such as books, articles, websites and code repositories.

The main goal of a language model is to predict the next word in a sequence of words. By repeatedly predicting the most likely next word, the model can generate complete sentences and paragraphs that resemble human writing.
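To make the idea of learning patterns and predicting the next word concrete, here is a minimal sketch. It is not how ChatGPT or Claude work internally. It is a toy that simply counts which word follows which in a tiny, made-up text. The corpus and the function names `train_bigram_counts` and `predict_next` are assumptions introduced for illustration only.

```python
from collections import Counter, defaultdict

# Tiny made-up training text; real models learn from vastly more data.
corpus = "the cat sat on the mat . the cat ate the fish ."

def train_bigram_counts(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def predict_next(counts, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = train_bigram_counts(corpus)
print(predict_next(counts, "the"))  # prints "cat" ("cat" follows "the" twice)
```

Real language models replace these simple counts with a neural network that looks at much more context than the single previous word, but the goal of predicting what comes next is the same.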

Role of the Prompt

A prompt is the instruction that a user gives to the AI system. The output generated by the AI system always depends on the prompt, and the AI performs its task according to it. In simple terms, the quality of the output is closely related to the quality of the prompt. If a user provides a clear and well-structured prompt, the AI is more likely to generate a useful and relevant response.

Tokenization

When a prompt is given to an AI system, it does not actually read the words in the same way humans do. Instead, the system breaks the prompt into small units called tokens and converts them into numerical representations.

This process is known as tokenization.

The AI model processes these numbers rather than the original words. By analyzing these numerical tokens, the model can understand patterns in the text and generate a response.

For example, consider the prompt:

“Write a short paragraph about usage of AI in medical sector.”

This sentence may be broken into tokens such as:

['Write', 'a', 'short', 'paragraph', 'about', 'usage', 'of', 'AI', 'in', 'medical', 'sector', '.']

These tokens are then converted into numerical values so the AI model can process them.
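The two steps above, splitting the text and mapping each token to a number, can be sketched as follows. This is a simplified word-level tokenizer written for illustration. Production systems typically use subword tokenizers (for example, byte-pair encoding) with a fixed vocabulary built during training, so their tokens and IDs will differ. The helper names `tokenize` and `build_vocab` are assumptions, not part of any library.

```python
import re

def tokenize(text):
    """Split text into word and punctuation tokens (simplified, word-level)."""
    return re.findall(r"\w+|[^\w\s]", text)

def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for token in tokens:
        if token not in vocab:
            vocab[token] = len(vocab)
    return vocab

prompt = "Write a short paragraph about usage of AI in medical sector."
tokens = tokenize(prompt)
vocab = build_vocab(tokens)
token_ids = [vocab[t] for t in tokens]

print(tokens)     # the 12 tokens listed above
print(token_ids)  # one integer per token
```

Because every token in this prompt is distinct, each one receives its own ID here. In a real vocabulary, the same token always maps to the same ID regardless of which prompt it appears in.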

Next-Word Prediction

This is the core concept behind modern language models such as ChatGPT and Claude. Instead of understanding language in the same way humans do, these models generate new content by predicting the most probable next token in a sequence. Based on the tokens that appear earlier in the text, the model calculates probabilities for possible next tokens and selects one of them. By repeating this prediction process step by step, the model gradually generates complete sentences and paragraphs.
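One common way to turn a model's raw scores into probabilities is the softmax function. The article does not name it, so treat the sketch below as an illustration of the general idea rather than a description of any specific product. The candidate tokens and their scores are invented for the example.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the maximum keeps exp() numerically stable.
    exps = {token: math.exp(s - highest) for token, s in scores.items()}
    total = sum(exps.values())
    return {token: e / total for token, e in exps.items()}

# Hypothetical scores for three candidate tokens (made up for illustration).
scores = {"world": 2.0, "future": 1.0, "banana": -1.0}
probabilities = softmax(scores)

# Pick the candidate with the highest probability.
best_token = max(probabilities, key=probabilities.get)
print(probabilities)
print(best_token)
```

A higher score always yields a higher probability, so the token with the largest score is also the most probable one.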

For example, suppose the model is generating the following text:

“AI is transforming the ……”

The system needs to fill the blank with an appropriate word. To do so, it uses what it learned during training to calculate a probability value for each candidate token.

Based on these probability values, it fills the blank with the token that has the highest probability. Here, it chooses the word “world” because it has the highest probability value. The text then becomes:

“AI is transforming the world ….”

Again, it predicts the probability values of the tokens to fill the next blank.

Now, based on the calculated probability values, it chooses the “End of Sentence” (EOS) token to fill that blank, which ends the sentence.

This is how GenAI language models use next-word prediction to generate new content.
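The walkthrough above can be sketched as a small loop. The probability table below is entirely hypothetical. The numbers are invented to mirror the example and do not come from a real model, and the `<EOS>` string is simply a stand-in for the end-of-sentence token. The loop always picks the most probable token, a strategy often called greedy decoding.

```python
# Hypothetical next-token probabilities keyed by the text so far.
# These numbers are invented for illustration, not taken from a real model.
NEXT_TOKEN_PROBS = {
    "AI is transforming the": {"world": 0.6, "future": 0.3, "<EOS>": 0.1},
    "AI is transforming the world": {"<EOS>": 0.7, "of": 0.2, "today": 0.1},
}

def generate(prompt, max_steps=10):
    """Greedy decoding: repeatedly append the most probable next token."""
    text = prompt
    for _ in range(max_steps):
        probs = NEXT_TOKEN_PROBS.get(text)
        if probs is None:
            break  # the toy table has no entry for this context
        next_token = max(probs, key=probs.get)
        if next_token == "<EOS>":
            break  # the end-of-sentence token stops generation
        text = text + " " + next_token
    return text

print(generate("AI is transforming the"))  # prints "AI is transforming the world"
```

In practice, many systems do not always take the single most probable token. They often sample from the probabilities instead, which is one reason the same prompt can produce different responses.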

Neural Networks behind Generative AI

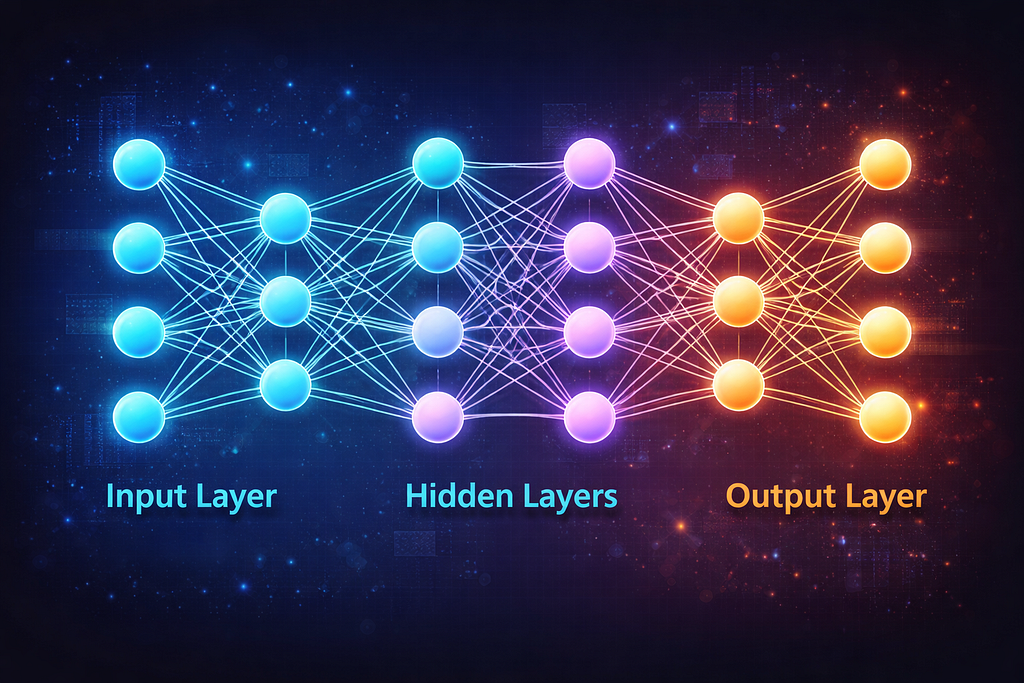

The ability of generative AI systems to produce text, images, code and other formats of content is powered by artificial neural networks. These networks are computational models inspired by the way the human brain processes information.

An artificial neural network consists of many interconnected units called neurons. These neurons are arranged in layers, typically including an input layer, several hidden layers and an output layer. Each neuron receives input values, performs mathematical calculations on them and passes the result to the next layer. Through this process, the network gradually learns patterns within the data.
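As a rough sketch of what "performs mathematical calculations and passes the result to the next layer" means, the example below pushes two input values through one hidden layer and one output layer. The weights, biases and the choice of the sigmoid activation function are assumptions made up for illustration. A trained network would have learned its own values.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each neuron computes a weighted sum of its inputs plus a bias,
    then applies an activation function."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

# Made-up parameters: 2 inputs -> 3 hidden neurons -> 1 output neuron.
hidden_weights = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.5, 0.2]]
output_biases = [0.05]

inputs = [1.0, 2.0]
hidden = layer_forward(inputs, hidden_weights, hidden_biases)
output = layer_forward(hidden, output_weights, output_biases)
print(hidden)
print(output)
```

The output of the hidden layer becomes the input of the output layer, which is the layer-to-layer flow described above.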

Modern generative AI systems use a specific neural network architecture known as the transformer, introduced in the influential research paper “Attention Is All You Need”. This architecture is particularly powerful for processing language because it can analyze relationships between words in a sentence more effectively than earlier neural network models.

A key feature of the transformer model is the attention mechanism. Attention allows the model to focus on the most relevant parts of the input text when generating each new token. Instead of processing words one by one in sequence, the model can examine the entire context of a sentence and determine how different words relate to each other.

For example, consider the sentence:

“Mr. Lee has completed the project and he submitted it before the deadline.”

To generate a meaningful continuation of this sentence, the AI must understand that the word “he” refers to “Mr. Lee” and “it” refers to “the project”. Attention mechanisms help the model capture these relationships within the text.
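The core calculation inside attention, known as scaled dot-product attention in “Attention Is All You Need”, can be sketched for a single word as shown below. The two-dimensional vectors are made up, and the query for “he” was hand-picked so that it points toward “Lee”. In a real transformer these vectors are learned during training and have many more dimensions.

```python
import math

def softmax(values):
    """Convert a list of scores into weights that sum to 1."""
    highest = max(values)
    exps = [math.exp(v - highest) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Similarity between the query and every key, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Made-up vectors for three words; a trained model learns these.
words = ["Lee", "project", "he"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query_for_he = [2.0, 0.0]  # hand-picked so that "he" matches "Lee" best

weights, output = attention(query_for_he, keys, values)
most_attended = words[weights.index(max(weights))]
print(dict(zip(words, weights)))
print(most_attended)  # prints "Lee"
```

The attention weights show how strongly “he” relates to each word. With these hand-picked vectors, the largest weight falls on “Lee”.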

These neural networks are trained using extremely large datasets containing billions of words collected from books, websites, articles and other textual sources. During training, the model repeatedly tries to predict missing or next tokens in sentences. Each time it makes an incorrect prediction, the system adjusts its internal parameters to improve future predictions. Over time, the network learns complex patterns in language such as grammar, style, context and even certain types of reasoning.
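The "adjust internal parameters after a wrong prediction" step can be illustrated with a deliberately tiny example. Here the model's only parameters are three scores, one per candidate token, and the correct next token is assumed to be “world”. The update rule is plain gradient descent on the cross-entropy loss. The vocabulary, learning rate and number of steps are assumptions chosen for illustration, and real training updates billions of parameters across enormous datasets.

```python
import math

def softmax(scores):
    """Convert a list of scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

vocab = ["world", "future", "banana"]
# The model's only parameters here: one score per candidate token.
scores = [0.0, 0.0, 0.0]
target = vocab.index("world")  # the token that actually came next
learning_rate = 0.5

history = []  # probability assigned to the correct token at each step
for step in range(20):
    probs = softmax(scores)
    history.append(probs[target])
    # Gradient of the cross-entropy loss with respect to each score:
    # predicted probability minus 1 for the correct token, minus 0 otherwise.
    for i in range(len(scores)):
        gradient = probs[i] - (1.0 if i == target else 0.0)
        scores[i] -= learning_rate * gradient

print(round(history[0], 3), "->", round(history[-1], 3))
```

At the start, the model considers all three tokens equally likely. After repeated small corrections, it assigns a much higher probability to the correct token.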

As a result, when a user provides a prompt, the trained neural network can analyze the input and generate a response by predicting tokens that best fit the learned patterns in the data.

Conclusion

In summary, Generative AI systems create responses through a series of processes. When a user provides a prompt, the text is first converted into tokens. These tokens are then processed by a language model powered by neural networks. The model analyzes patterns learned during training and predicts the most probable next token repeatedly until a complete response is generated. Through this process, modern AI tools are able to generate meaningful text from simple user inputs.

Generative AI: How Generative AI Tools Generate New Content From User Prompts… was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.