Feature Leakage in Machine Learning: The Silent Killer Destroying Your Model’s Real Performance

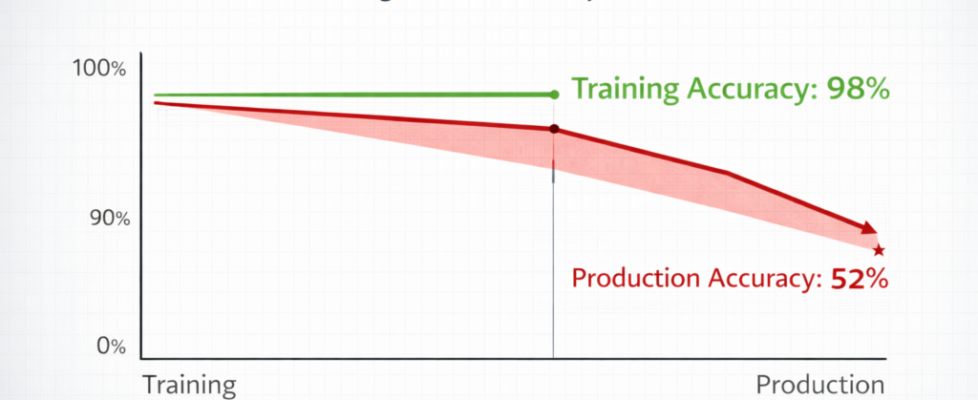

Source: Image by author.

Author(s): Rohan Mistry. Originally published on Towards AI.

Understanding Data Leakage, Target Leakage, and Temporal Leakage, and How to Detect and Prevent Them

Your machine learning model achieves 98% accuracy on validation data. Your team celebrates. You deploy to production.

The article examines data leakage in machine learning and explains how it can make a model look accurate during training and validation yet fail in real-world use. It outlines three main types of leakage (feature leakage, target leakage, and temporal leakage) with examples from fields such as finance and healthcare. It stresses the importance of detecting and preventing these issues and covers strategies such as feature importance analysis and domain knowledge review, which help confirm that a model is truly predictive rather than deceptively accurate.
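To make the detection idea concrete, here is a minimal sketch of a feature-level leakage check. It is not the article's own code. In place of model-based feature importance analysis it uses a simpler stand-in: score each feature on its own with a one-threshold rule and flag any feature that predicts the target almost perfectly by itself. The loan dataset, the feature names (`income`, `collection_calls`), the helper names, and the 0.95 threshold are all hypothetical and chosen only for illustration.

```python
import random


def single_feature_accuracy(values, labels):
    """Best accuracy of a one-threshold rule on a single numeric feature."""
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    total_pos = sum(label for _, label in pairs)
    best = max(total_pos, n - total_pos) / n  # majority-class baseline
    pos_left = 0
    for i in range(1, n):
        pos_left += pairs[i - 1][1]
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot place a threshold between equal values
        neg_left = i - pos_left
        pos_right = total_pos - pos_left
        # Rule A predicts 0 left of the threshold and 1 right of it;
        # rule B is the reverse, so it gets every other row correct.
        correct_a = neg_left + pos_right
        correct_b = n - correct_a
        best = max(best, correct_a / n, correct_b / n)
    return best


def flag_suspect_features(columns, labels, threshold=0.95):
    """Return {name: accuracy} for features that alone reach the threshold."""
    scores = {
        name: single_feature_accuracy(vals, labels)
        for name, vals in columns.items()
    }
    return {name: acc for name, acc in scores.items() if acc >= threshold}


# Hypothetical synthetic loan data (assumption, not from the article).
rng = random.Random(0)
n_rows = 500
income = [rng.gauss(50, 15) for _ in range(n_rows)]
defaulted = [1 if x + rng.gauss(0, 15) < 45 else 0 for x in income]
# Leaky feature: collection calls are only made AFTER a default happens,
# so this value would not be available at prediction time.
collection_calls = [
    rng.randint(3, 10) if y else rng.randint(0, 2) for y in defaulted
]

columns = {"income": income, "collection_calls": collection_calls}
suspects = flag_suspect_features(columns, defaulted)
print(suspects)
```

In this synthetic setup `collection_calls` separates the classes perfectly and is flagged, while the legitimately noisy `income` feature is not. A flag is only a prompt for the domain knowledge review the article mentions: the remaining question is whether the feature's value would actually be known at the moment a prediction is made.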