Building a Simple SQL Query Generator Using LLMs

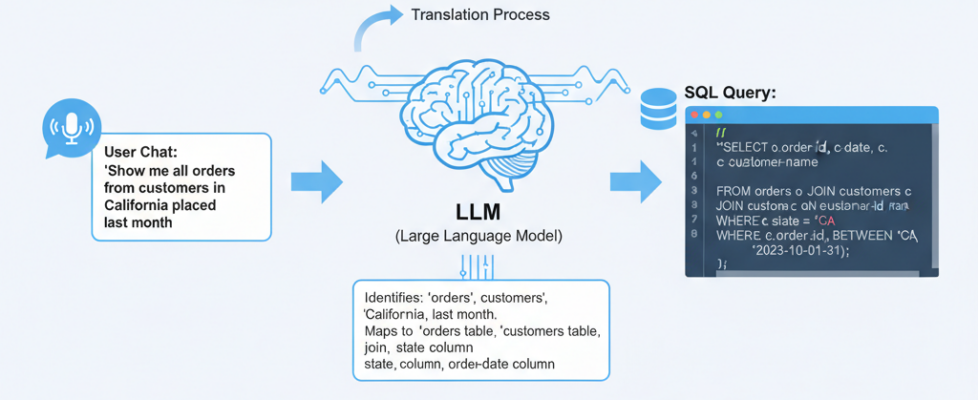

Use LLMs to bridge the gap between human language and database queries with Python.

Writing SQL is an art, but not everyone enjoys the canvas. For many, the syntax of a complex JOIN or a nested subquery is a barrier to getting the data they need. In our fast-paced world, not everyone has the time to become a database administrator—and with the power of Large Language Models (LLMs), you don’t have to.

Instead of teaching you how to write queries, I’m going to show you how to build a tool that writes them for you. We will use a Python-based extractor to feed your database schema to an LLM, turning a simple English question into a functional SQL statement.

The Tech Stack

We’ll use MS SQL Server for this walkthrough, but the logic applies to any relational database (Postgres, MySQL, etc.) as long as you use the correct connector library.

- dotenv: To securely load your environment variables and API keys.

- mssql-python: Our bridge to the MS SQL Server.

- openai: To connect to your LLM of choice (e.g., GPT-4).

- sqlparse: To clean up and format the SQL generated by the LLM.

- uv: A blazing-fast Python package manager to handle our environment.

Setting Up Your Environment

To get started, initialize your project using uv or your preferred manager.

# pyproject.toml

[project]

name = "simple_sql_query_generator"

version = "0.1.0"

requires-python = ">=3.11"

dependencies = [

"dotenv>=0.9.9",

"mssql-python>=1.3.0",

"openai>=2.21.0",

]

Create pyproject.toml file with the same contents as above and run the command uv sync to install these dependencies and create your virtual environment.

Also create .env file to have all your keys or environment details in it,

# .env

DB_HOST=<DB HOST>

DB_PORT=<DB PORT>

DB_NAME=<DB NAME>

DB_USER=<DB USER>

DB_PASSWORD=<DB PASSWORD>

OLLAMA_API_KEY=ollama

OLLAMA_BASE_URL="http://localhost:11434/v1"

OPENAI_API_KEY=

ANTHROPIC_API_KEY=

GROK_API_KEY=

GROQ_API_KEY=

Preparing the Playground

An LLM can’t guess what’s in your database. It needs context: table names, columns, and relationships. To demonstrate, let’s create a classic Customers and Orders scenario.

-- sql_script.sql

-- 1. Create Customers Table

CREATE TABLE customers (

customer_id INT NOT NULL PRIMARY KEY,

name NVARCHAR(255) NULL,

email NVARCHAR(255) NULL,

created_at DATETIME2 NULL DEFAULT GETDATE()

);

-- 2. Create Orders Table

CREATE TABLE orders (

order_id INT NOT NULL PRIMARY KEY,

customer_id INT NULL,

order_date DATE NULL,

total_amount DECIMAL(18, 2) NULL,

-- Define Foreign Key relationship

CONSTRAINT FK_Orders_Customers FOREIGN KEY (customer_id)

REFERENCES customers(customer_id)

);

-- 3. Insert Sample Records

INSERT INTO customers (customer_id, name, email, created_at)

VALUES

(1, 'Alice Johnson', 'alice@example.com', '2025-01-15 10:30:00'),

(2, 'Bob Smith', 'bob@example.com', '2025-02-20 14:45:00'),

(3, 'Charlie Brown', 'charlie@example.com', '2025-03-05 09:15:00'),

(4, 'Diana Prince', 'diana@themyscira.com', '2025-07-12 08:00:00'),

(5, 'Edward Nigma', 'riddler@gotham.com', '2025-08-01 11:20:00'),

(6, 'Fiona Gallagher', 'fiona@southside.com', '2025-09-14 16:30:00'),

(7, 'George Costanza', 'art_vandelay@latex.com', '2025-10-10 12:00:00'),

(8, 'Hannah Abbott', 'hannah@hufflepuff.edu', '2025-11-22 09:45:00'),

(9, 'Ian Malcolm', 'chaos@jurassic.org', '2025-12-05 13:10:00'),

(10, 'Julia Child', 'cooking@french.com', '2026-01-10 15:55:00');

INSERT INTO orders (order_id, customer_id, order_date, total_amount)

VALUES

(101, 1, '2025-04-01', 150.50),

(102, 1, '2025-04-15', 89.99),

(103, 2, '2025-05-20', 210.00),

(104, 3, '2025-06-10', 45.00),

(105, 4, '2025-07-20', 500.00),

(106, 5, '2025-08-05', 12.50),

(107, 5, '2025-08-15', 35.75),

(108, 6, '2025-09-20', 99.99),

(109, 1, '2025-10-01', 25.00), -- Customer 1 again

(110, 2, '2025-11-05', 300.25), -- Customer 2 again

(111, 7, '2025-12-01', 15.00),

(112, 4, '2025-12-15', 750.00), -- High value order

(113, 8, '2026-01-05', 62.40),

(114, 10, '2026-01-20', 120.00),

(115, 1, '2026-02-01', 10.00), -- Recent order

(116, 5, '2026-02-10', 55.00);

The “Secret Sauce”: Automating Schema Extraction

For an LLM to generate valid SQL, it needs more than just table names; it needs to understand the “connective tissue” of your database — primary keys, foreign keys, and data types.

While this isn’t a SQL class, we use a few standard T-SQL queries to “teach” the LLM our database structure. By querying the INFORMATION_SCHEMA, we can build a Markdown map that looks like this:

- Tables: We fetch all BASE TABLE entries to avoid system views.

- Columns: We grab types and nullability to help the LLM understand what data it’s dealing with.

- Keys: We identify Primary and Foreign keys, so the LLM knows exactly how to JOIN tables without guessing.

## Table: customers

Columns:

- customer_id: int NOT NULL (PRIMARY KEY)

- name: nvarchar NULL

- email: nvarchar NULL

- created_at: datetime2 NULL

## Table: orders

Columns:

- order_id: int NOT NULL (PRIMARY KEY)

- customer_id: int NULL

- order_date: date NULL

- total_amount: decimal NULL

Foreign Keys:

- customer_id → customers.customer_id

We wrap the queries into a Python class that does the heavy lifting. This class connects to your DB, runs the metadata queries, and outputs a clean Markdown string.

# schema_extractor.py

from mssql_python import connect

from typing import Dict, List, Optional

class SchemaExtractor:

def __init__(self, connection_string):

# Using the mssql-python connect method

if isinstance(connection_string, dict):

# Unpacks dict keys into: connect(SERVER='...', DATABASE='...')

self.conn = connect(**connection_string)

else:

# Treats it as a standard connection string

self.conn = connect(connection_string)

self.cursor = self.conn.cursor()

def get_tables(self, include_tables: Optional[List[str]] = None) -> List[str]:

"""Get specific or all table names in the database."""

if include_tables:

# Format list for T-SQL IN clause: 'table1', 'table2'

placeholders = ", ".join([f"'{t}'" for t in include_tables])

filter_clause = f"AND table_name IN ({placeholders})"

else:

filter_clause = ""

query = f"""

SELECT table_name

FROM information_schema.tables

WHERE table_type = 'BASE TABLE'

AND table_schema = 'dbo'

{filter_clause}

ORDER BY table_name;

"""

self.cursor.execute(query)

return [row[0] for row in self.cursor.fetchall()]

def get_table_schema(self, table_name: str) -> Dict:

"""Get detailed schema for a specific MSSQL table."""

# 1. Get Columns

query = """

SELECT column_name, data_type, is_nullable, column_default

FROM information_schema.columns

WHERE table_name = ?

ORDER BY ordinal_position;

"""

self.cursor.execute(query, (table_name,))

columns = []

for row in self.cursor.fetchall():

columns.append({

'name': row[0], # Accessing by index

'type': row[1],

'nullable': row[2] == 'YES',

'default': row[3]

})

# 2. Get Primary Keys (Improved Query)

pk_query = """

SELECT COLUMN_NAME

FROM INFORMATION_SCHEMA.KEY_COLUMN_USAGE

WHERE OBJECTPROPERTY(OBJECT_ID(CONSTRAINT_SCHEMA + '.' + CONSTRAINT_NAME), 'IsPrimaryKey') = 1

AND TABLE_NAME = ?;

"""

self.cursor.execute(pk_query, (table_name,))

# fetchall() returns a list of tuples like [('id',), ('other_pk',)]

primary_keys = [row[0] for row in self.cursor.fetchall()]

return {

'table_name': table_name,

'columns': columns,

'primary_keys': primary_keys

}

def get_foreign_keys(self, table_name: str) -> List[Dict]:

"""Get foreign key relationships in MSSQL."""

query = """

SELECT

cp.name AS column_name,

tr.name AS referenced_table,

cr.name AS referenced_column

FROM sys.foreign_keys AS fk

INNER JOIN sys.foreign_key_columns AS fkc ON fk.object_id = fkc.constraint_object_id

INNER JOIN sys.tables AS tp ON fkc.parent_object_id = tp.object_id

INNER JOIN sys.columns AS cp ON fkc.parent_object_id = cp.object_id AND fkc.parent_column_id = cp.column_id

INNER JOIN sys.tables AS tr ON fkc.referenced_object_id = tr.object_id

INNER JOIN sys.columns AS cr ON fkc.referenced_object_id = cr.object_id AND fkc.referenced_column_id = cr.column_id

WHERE tp.name = ?;

"""

self.cursor.execute(query, (table_name,))

# Accessing each column by its index in the tuple

return [{

'column': row[0],

'references_table': row[1],

'references_column': row[2]

} for row in self.cursor.fetchall()]

def format_schema_for_llm(self, target_tables: Optional[List[str]] = None) -> str:

"""Format the filtered schema for LLM consumption."""

tables = self.get_tables(include_tables=target_tables)

schema_description = "# Database Schema (MSSQL)nn"

for table in tables:

schema = self.get_table_schema(table)

fks = self.get_foreign_keys(table)

schema_description += f"## Table: {table}n"

schema_description += "Columns:n"

for col in schema['columns']:

pk_marker = " (PRIMARY KEY)" if col['name'] in schema['primary_keys'] else ""

nullable = "NULL" if col['nullable'] else "NOT NULL"

schema_description += f"- {col['name']}: {col['type']} {nullable}{pk_marker}n"

if fks:

schema_description += "nForeign Keys:n"

for fk in fks:

schema_description += f"- {fk['column']} → {fk['references_table']}.{fk['references_column']}n"

schema_description += "n"

return schema_description

def close(self):

"""Close database connection."""

self.cursor.close()

self.conn.close()

if __name__ == "__main__":

# 1. Define your connection string

# Change 'YourDatabaseName' and 'YourServerName' to your actual database

db_config = {

"SERVER": "YourServerName",

"DATABASE": "YourDatabaseName",

"Trusted_Connection": "yes",

"Encrypt": "no"

}

# 2. Define the specific tables you want to extract, this is an

# optional field, you can skip it to get details of all tables.

MY_TABLES = ["customers", "orders"]

extractor = None

try:

# 3. Initialize and run

extractor = SchemaExtractor(db_config)

schema_text = extractor.format_schema_for_llm(target_tables=MY_TABLES)

print(schema_text)

except Exception as e:

print(f"Error: {e}")

finally:

if extractor:

extractor.close()

Why Markdown?

You might wonder why we convert the schema to Markdown. LLMs are trained heavily on documentation and code repositories (like GitHub). They are incredibly efficient at parsing Markdown headers and lists, making it the most “token-efficient” way to pass context to the model.

Give the database configuration details, here am using the local db, if you are using database on the server give the configuration details accordingly.

The “Brain”: Orchestrating the LLM Logic

If the schema is our “Secret Sauce,” then this class is the “Brain” that knows how to cook with it.

The SQLQueryGenerator doesn’t just pass text back and forth; it acts as a translator. It takes your messy, human question and your structured schema, then applies a strict set of T-SQL architectural rules to ensure the output actually runs on your server.

Why this “Brain” is different:

- Provider Agnostic: While I use Ollama here for local privacy, the architecture is built on the OpenAI standard, meaning you can swap to GPT-4 or Claude with a single line of code.

- Zero-Shot Precision: We use a temperature of 0.1. In creative writing, you want high temperature; in SQL generation, you want the LLM to be as “boring” and predictable as possible.

- Syntax Guardrails: We explicitly teach the LLM to avoid common pitfalls — like using LIMIT (Postgres/MySQL) when it should be using TOP (MS SQL Server).

# simple_query_generator.py

import os

from typing import Optional

from openai import OpenAI

class SimpleSQLQueryGenerator:

def __init__(self, provider: str = "ollama", model: str = None):

"""

Initialize the query generator.

Args:

provider: "ollama"

model: Model name (optional, uses defaults)

"""

self.provider = provider

if provider == "ollama":

self.client = OpenAI(api_key=os.getenv("OLLAMA_API_KEY"), base_url=os.getenv("OLLAMA_BASE_URL"))

self.model = model or "llama3.2"

else:

raise ValueError(f"Unsupported provider: {provider}")

def create_system_prompt(self, schema: str, dialect: str = "postgresql") -> str:

"""Create a detailed system prompt for the LLM."""

return f"""You are an expert T-SQL (Microsoft SQL Server) query generator. Your task is to convert natural language questions into accurate, high-performance MSSQL queries.

Database Schema:

{schema}

SQL Dialect: {dialect} (T-SQL / SQL Server)

Rules:

1. Generate ONLY the SQL query. No explanations, no markdown code blocks, and no backticks.

2. Use proper JOIN syntax (INNER, LEFT) and always use table aliases (e.g., `customers AS c`).

3. Use TOP for limiting results instead of LIMIT (e.g., `SELECT TOP 10 ...`).

4. For date filtering/extraction, use T-SQL functions like `GETDATE()`, `DATEPART()`, `DATEDIFF()`, and `FORMAT()`.

5. Use `COALESCE` to handle NULL values in calculations or concatenations.

6. When comparing strings, use the `LIKE` operator with `%` wildcards if partial matches are implied.

7. Use `ISNULL()` or `COALESCE()` for NULL-safe aggregations.

8. Follow T-SQL best practices: Use `QUOTED_IDENTIFIER` logic (square brackets `[ ]`) if table or column names contain spaces or are reserved keywords.

9. For pagination/offset, use `OFFSET 0 ROWS FETCH NEXT N ROWS ONLY` if `TOP` is not suitable.

10. Ensure all column names and table names match the provided schema exactly.

Important:

- MS SQL Server is the target; do NOT use `LIMIT`, `ILIKE`, or `TO_TIMESTAMP`.

- If the question is ambiguous, assume the most common business logic.

- If the schema lacks necessary information, provide a brief comment starting with `--` explain why.

- For temporal queries (e.g., "this year"), use `YEAR(order_date) = YEAR(GETDATE())`.

- Always group by all non-aggregated columns when using `GROUP BY`.

"""

def generate_query(

self,

question: str,

schema: str,

dialect: str = "mssql"

) -> str:

"""

Generate SQL query from natural language.

Args:

question: Natural language question

schema: Database schema

dialect: SQL dialect (postgresql, mysql, sqlserver)

Returns:

Generated SQL query

"""

system_prompt = self.create_system_prompt(schema, dialect)

if self.provider == "anthropic":

response = self.client.messages.create(

model=self.model,

max_tokens=1024,

system=system_prompt,

messages=[

{"role": "user", "content": question}

]

)

query = response.content[0].text.strip()

elif self.provider == "openai":

response = self.client.chat.completions.create(

model=self.model,

messages=[

{"role": "system", "content": system_prompt},

{"role": "user", "content": question}

],

temperature=0.1 # Lower temperature for more consistent output

)

query = response.choices[0].message.content.strip()

elif self.provider == "ollama":

response = self.client.chat.completions.create(

model=self.model,

messages=[

{"role": "system", "content": system_prompt},

{"role": "user", "content": question}

],

temperature=0.1

)

query = response.choices[0].message.content.strip()

# Clean up the query (remove markdown if present)

query = self._clean_query(query)

return query

def _clean_query(self, query: str) -> str:

"""Remove markdown formatting and extra whitespace."""

# Remove SQL markdown blocks

if query.startswith("```sql"):

query = query[6:]

if query.startswith("```"):

query = query[3:]

if query.endswith("```"):

query = query[:-3]

return query.strip()

Putting it All Together

Here is the final execution script. It connects to your local DB, extracts the schema, asks the question, and prints the executable SQL.

# main.py

import os

from dotenv import load_dotenv

from schema_extractor import SchemaExtractor

from simple_query_generator import SimpleSQLQueryGenerator

# Load environment variables

load_dotenv(override=True)

def main():

# 1. Config & Connection

# If running locally

db_params = {

"SERVER": "(localdb)\MSSQLLocalDB",

"DATABASE": "master",

"Trusted_Connection": "yes",

"Encrypt": "no"

}

"""

# If using environment variables (e.g., for production)

db_params = {

'host': os.getenv('DB_HOST'),

'port': os.getenv('DB_PORT'),

'database': os.getenv('DB_NAME'),

'user': os.getenv('DB_USER'),

'password': os.getenv('DB_PASSWORD')

}

"""

# 2. Extract Schema

my_tables = ["customers", "orders"]

extractor = SchemaExtractor(db_params)

schema_md = extractor.format_schema_for_llm(my_tables)

# 3. Generate Query

generator = SimpleSQLQueryGenerator()

user_question = "Show me the top 5 customers by total order amount."

sql_query = generator.generate_query(user_question, schema_md)

print(f"--- Database Schema ---n{schema_md}")

print(f"User Question: {user_question}n")

print(f"--- Generated SQL ---n{sql_query}")

if __name__ == "__main__":

main()

Pro-Tips for Production: Tuning Your “Brain”

Before you hit “Publish” on your generator, there are two golden rules to remember when working with LLMs and SQL:

1. Model Choice Matters (But Size Isn’t Everything)

The quality of your SQL depends heavily on the model you choose.

- Large Models (GPT-4, Claude 3.5): Excellent at complex logic and deeply nested subqueries.

- Local Models (Llama 3.2, Mistral): Blazing fast and private. While smaller, they are surprisingly “SQL-literate” if your schema is clean.

2. Prompt Engineering > Model Switching

If the generated SQL isn’t quite right, don’t immediately swap your model. Most issues can be fixed by tuning your System Prompt.

If the LLM makes a mistake, try adding a specific rule to the create_system_prompt method:

- Is it using LIMIT instead of TOP? Add: “Never use LIMIT; always use SELECT TOP X.”

- Is it hallucinating columns? Add: “Only use columns explicitly listed in the provided Schema.”

- Is it failing on dates? Add: “Use DATEDIFF or DATEPART for all temporal comparisons.”

The result?

By combining the Secret Sauce (your metadata) with the Brain (this logic), you transform a simple prompt like “Show me the top 5 customers by total order amount?” into a complex, multi-join T-SQL statement instantly.

C:sainathudataprojectssimple_sql_query_generator>uv run main.py

--- Database Schema ---

# Database Schema (MSSQL)

## Table: customers

Columns:

- customer_id: int NOT NULL (PRIMARY KEY)

- name: nvarchar NULL

- email: nvarchar NULL

- created_at: datetime2 NULL

## Table: orders

Columns:

- order_id: int NOT NULL (PRIMARY KEY)

- customer_id: int NULL

- order_date: date NULL

- total_amount: decimal NULL

Foreign Keys:

- customer_id → customers.customer_id

User Question: Show me the top 5 customers by total order amount.

--- Generated SQL ---

SELECT TOP 5

c.customer_id,

c.name,

COALESCE(SUM(o.total_amount), 0) AS total_order_amount

FROM

customers AS c

LEFT JOIN

orders AS o ON c.customer_id = o.customer_id

GROUP BY

c.customer_id, c.name

ORDER BY

total_order_amount DESC;

GitHub

GitHub – sainathudata/simple_sql_query_generator_llm

In the next article we will see how to validate the generated sql and execute them.

Building a Simple SQL Query Generator Using LLMs was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.