AI Is Not Replacing Software Engineers: It Is Redefining Them

The New Reality: AI Can Write Code, But Should It Ship Without You?

There is a lot of talk lately about AI “killing” the software developer role. Headlines claim that junior jobs are vanishing and that manual coding is a dead art. But as someone deeply immersed in the latest AI advancements, my recent experience is that AI isn’t a replacement for the engineer; it’s a powerful, albeit volatile, force multiplier.

In 2026, large language models (LLMs) like Claude Sonnet, Claude Opus, and GPT-5 Codex can generate, refactor, and even debug code at speeds that would have seemed like science fiction just a few years ago. But here’s the uncomfortable truth: while these models are rewriting the rules of software development, they’re also introducing new risks, tradeoffs, and responsibilities that every developer and every team needs to understand.

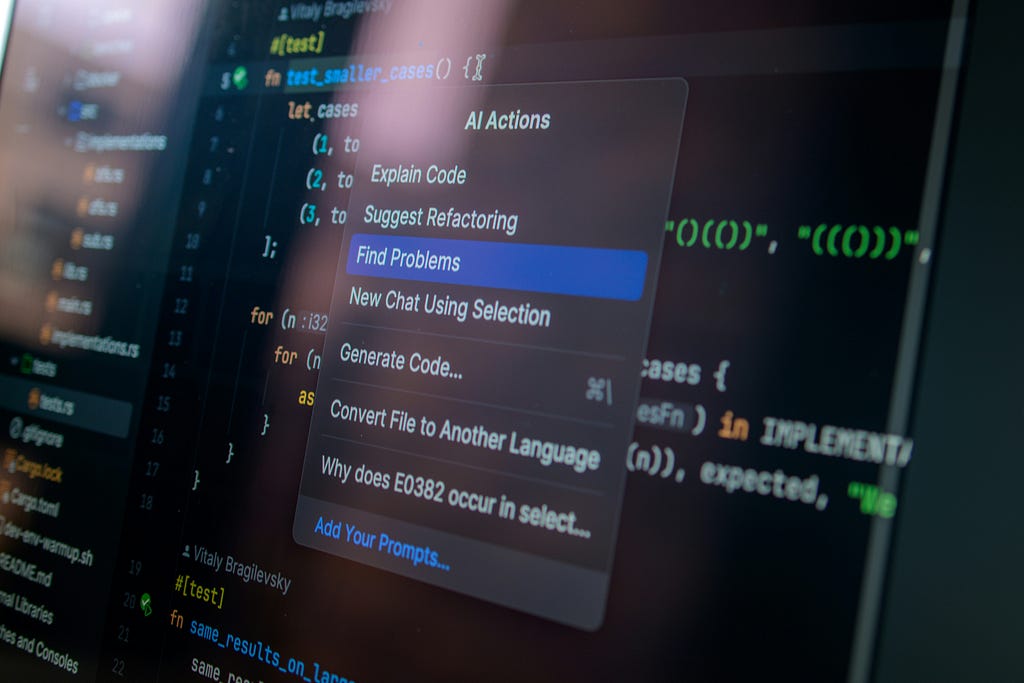

The answer up front: LLMs are transforming developer workflows, but they are not replacing the need for experienced engineers. Instead, they’re shifting our focus from writing code to reviewing, debugging, and validating it, especially when it comes to edge cases, maintainability, and security. If you want to ship reliable software in the age of AI, you need to master not just prompt engineering, but also the art of human-in-the-loop review, functional testing, and continuous code quality assurance.

Let’s break down what’s really changing, what’s at stake, and how you can adapt.

How LLMs Are Reshaping the Developer’s Job

From Code Author to Code Curator

The core promise of LLMs is speed. Need a data pipeline, a REST API, or a test suite? You can get a working draft in seconds. According to recent industry data, AI coding assistants now generate 41% of new code, and 84% of developers use AI tools in their daily workflows. In data science, LLMs have slashed preprocessing time from hours to minutes, automating tasks that used to consume up to 80% of a data scientist’s workload.

But this speed comes with a catch. The more code LLMs generate, the more time developers spend reviewing, debugging, and integrating that code. In fact, teams relying heavily on AI for feature development experience a 35% higher regression rate within six months compared to teams using a human-in-the-loop verification model.

The bottleneck has shifted: it’s no longer about how fast you can write code, but how effectively you can review and validate what the AI produces.

The Productivity–Quality Paradox

We are generating code faster than we can safely ship it. While AI makes us 10x faster at drafting, it creates a “bottleneck at the finish line.” Pull requests are 18% larger, and review times have spiked by 35%. We are effectively shifting our time from the keyboard to the code review.

The Tradeoffs of Using LLMs in Programming

What LLMs Do Well:

- Boilerplate and Routine Tasks: LLMs excel at generating standard code patterns, documentation, and unit tests. They’re like supercharged junior developers who never get tired.

- Multi-File Coordination: Advanced models like Claude Opus 4.6 can handle multi-file refactoring and context-aware changes, making them valuable for large codebases.

- Terminal Automation: GPT-5.3-Codex dominates in terminal-heavy workflows, automating deployment scripts, Docker pipelines, and CI/CD configurations.

Where LLMs Struggle:

- Edge Cases and Contextual Nuance: LLMs are pattern matchers, not reasoners. They handle common cases well but consistently miss edge conditions, boundary values, and domain-specific logic.

- Security Flaws: Approximately 45% of AI-generated code contains security vulnerabilities, including SQL injection, XSS, and hardcoded credentials.

- Maintainability and Readability: AI-generated code introduces 1.64x more maintainability and code quality errors than human-written code, leading to higher technical debt.

- Inconsistent Patterns: LLMs may generate different implementations for similar requirements, creating maintenance headaches and codebase drift.

- Licensing and IP Risks: LLMs can inadvertently reproduce code snippets from copyrighted or GPL-licensed projects, exposing organizations to legal liabilities.

The Cost of Speed:

The speed of LLMs can create a false sense of confidence. Many teams accept AI output because it passes tests or looks clean, but this habit leads to “vibe coding”: trusting AI results without checking structure or future impact. As a result, 67% of developers report spending more time debugging AI-generated code, and 75% of technology leaders are projected to face moderate or severe technical debt by 2026 due to AI-speed practices.

Why Human Oversight Is Essential: Code Review, Debugging, and Edge Cases

The Edge Case Blind Spot

LLMs are like interns: they can write the code for the common case, but they don’t think about the edge cases. They don’t have a mental model of the problem; they’re just generating text that looks like code. This leads to failures in scenarios such as:

- Inconsistent Input Data Formats: For example, a date column that sometimes includes a time and sometimes doesn’t. LLMs may not handle both formats unless explicitly prompted, leading to subtle bugs.

- Boundary Values: Negative prices, zero quantities, or maximum-length strings can cause AI-generated functions to return nonsensical results or crash.

- Internationalization: AI-generated validation functions may fail for emails with Unicode characters or right-to-left text, missing critical edge cases for global users.

- Business Logic: LLMs often miss domain-specific rules, such as special password requirements for enterprise users or exceptions for certain user types.
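To make the first bullet concrete, here is a minimal sketch of handling a date column that sometimes includes a time and sometimes doesn’t. The two formats in `KNOWN_FORMATS` and the function name `parse_mixed_date` are my own assumptions for illustration; a real column may need different or additional formats.

```python
from datetime import datetime

# Assumed formats for illustration; extend this tuple for your own data.
KNOWN_FORMATS = ("%Y-%m-%d %H:%M:%S", "%Y-%m-%d")


def parse_mixed_date(value: str) -> datetime:
    """Parse a date string that may or may not carry a time component."""
    text = value.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt)
        except ValueError:
            continue  # try the next known format
    # Fail loudly instead of silently guessing at an unknown format.
    raise ValueError(f"Unrecognized date format: {value!r}")
```

The point of the design is the final `raise`: an unexpected format becomes a visible error rather than a subtle bug downstream.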

Case in point: In a real-world audit, an AI-generated database migration script passed all syntax tests but failed in production because it didn’t account for the production environment’s shard distribution, causing a 14-minute outage.

The Human-in-the-Loop Imperative

The most effective workflows treat LLMs as collaborators, not replacements. Human-in-the-loop (HITL) review is now essential for:

- Validating Logic: Ensuring that code not only compiles but also meets business requirements and handles edge cases.

- Security Auditing: Catching vulnerabilities that automated tools may miss.

- Maintaining Consistency: Enforcing coding standards, naming conventions, and architectural patterns.

- Accountability: Every line of AI-generated code must have a human “owner” who is responsible for its performance in production.

Industry best practice: Establish a policy where developers spend at least 30% of their time reviewing AI-generated logic. Manual review time should be proportionate to the complexity of the code, not the speed of generation.

The Dark Side: Readability, Maintainability, and Technical Debt

The Hidden Costs of Fast AI Coding

AI-generated code often prioritizes speed over structure, leading to:

- Poor Readability: Inconsistent formatting, unclear variable names, and lack of comments make code harder to understand and maintain.

- Maintainability Issues: Duplicate logic, improper loop handling, and inconsistent patterns increase the cost of future changes and debugging.

- Technical Debt: 75% of technology leaders are projected to face moderate or severe technical debt by 2026 due to AI-speed practices.

Empirical findings: According to the 2026 State of AI vs. Human Code Generation Report by CodeRabbit, AI-assisted pull requests contain 1.7x more issues on average than human-authored ones, with a specific 1.64x increase in maintainability errors.

Prompt Engineering and Debugging: The New Developer Superpower

Why Prompt Engineering Matters

Prompt engineering is now as important as traditional coding. The quality of your prompt determines the quality of the AI’s output. Effective prompt engineering involves:

- Providing Context: Include relevant files, error logs, stack traces, and environment details in your prompt.

- Specifying Constraints: Define language, framework, style, and business rules.

- Iterative Refinement: Use chain-of-thought and few-shot prompting to guide the model through complex reasoning steps.

- Debugging Before Prompting: Identify the root cause of a bug before asking the LLM to fix it. Otherwise, you risk getting a plausible but incorrect solution.
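One way to keep context, constraints, and few-shot examples from being forgotten is to assemble prompts from a small structure instead of typing them freehand. The sketch below is only an illustration: `PromptSpec`, `build_prompt`, and the section headings are hypothetical names I made up, not part of any model vendor’s API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the pieces of a coding prompt."""
    task: str
    context: list[str] = field(default_factory=list)        # files, logs, traces
    constraints: list[str] = field(default_factory=list)    # language, style, rules
    examples: list[tuple[str, str]] = field(default_factory=list)  # few-shot pairs


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the prompt text, skipping sections that are empty."""
    sections = []
    if spec.context:
        sections.append("## Context\n" + "\n\n".join(spec.context))
    if spec.constraints:
        sections.append(
            "## Constraints\n" + "\n".join(f"- {c}" for c in spec.constraints)
        )
    for i, (example_input, example_output) in enumerate(spec.examples, start=1):
        sections.append(
            f"## Example {i}\nInput: {example_input}\nOutput: {example_output}"
        )
    # The task goes last so it is the final thing the model reads.
    sections.append("## Task\n" + spec.task)
    return "\n\n".join(sections)
```

Because the prompt is built from data, it can be reviewed and diffed like any other code change.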

Debugging Workflows with LLMs

Debugging with LLMs is fundamentally different from traditional debugging:

- Observability: Capture the full trace of LLM interactions, including prompts, context, and outputs.

- Isolation and Reproduction: Curate failed production traces into test cases and add them to your evaluation dataset.

- Root Cause Analysis: Use distributed tracing and semantic alerting to pinpoint where failures occur, whether in the prompt, retrieval system, or model reasoning.

- Experimentation: Iterate on prompts, swap models, and test fixes against a “golden dataset” of real-world scenarios.

- Simulation and Regression Testing: Replay production traffic and adversarial scenarios to ensure fixes are robust and don’t introduce new bugs.

Best practice: Treat every LLM failure as a data point for improving your prompts, evaluation datasets, and model selection.
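A “golden dataset” check can start very small. In the sketch below, `candidate` stands in for whatever prompt-plus-model pipeline you are evaluating; it is just a plain function from string to string here, and `GoldenCase` and `run_golden_suite` are hypothetical names chosen for this example.

```python
from typing import Callable, NamedTuple


class GoldenCase(NamedTuple):
    """One curated scenario, e.g. distilled from a failed production trace."""
    name: str
    input_text: str
    expected: str


def run_golden_suite(
    candidate: Callable[[str], str], cases: list[GoldenCase]
) -> list[str]:
    """Run every case and return the names of those that fail."""
    failures = []
    for case in cases:
        try:
            actual = candidate(case.input_text)
        except Exception:
            # A crash counts as a failure for that case, not for the whole run.
            failures.append(case.name)
            continue
        if actual != case.expected:
            failures.append(case.name)
    return failures
```

Exact string equality is the simplest possible comparison; real LLM outputs usually need a looser check, which is a separate design decision.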

Model Tiers, Usage Limits, and Organizational Policies

The Reality of Usage Limits

Premium models like GPT-5 Codex, Claude Sonnet, and Claude Opus come with monthly usage limits that can slow development. When you hit these limits, you may have to fall back to lower-tier models like GPT-4 or GPT-5.1 Mini, which increases error rates and debugging time.

Typical limits (as of February 2026):

- ChatGPT Plus: ~25 tasks/day, 1 concurrent task, 10-minute timeout

- Pro: ~250 tasks/day, 3 concurrent tasks, 30-minute timeout

- Enterprise: Custom limits, higher concurrency, and priority processing

Fallback to “mini” models can extend usage but at the cost of reasoning depth and accuracy. For example, GPT-5.1-Codex-Mini offers up to 4x higher usage limits but is less reliable on complex tasks.
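Planning for fallbacks can be as simple as an ordered list of models with a usage counter each. This is a sketch under stated assumptions: the model names and limits are placeholders, the counters live in memory, and real providers enforce limits on their side in ways this does not model.

```python
from dataclasses import dataclass


@dataclass
class ModelQuota:
    """Hypothetical local bookkeeping for one model's daily allowance."""
    name: str
    daily_limit: int
    used_today: int = 0

    def has_capacity(self) -> bool:
        return self.used_today < self.daily_limit


def pick_model(preferred_order: list[ModelQuota]) -> ModelQuota:
    """Return the first model with quota left and record one use against it."""
    for quota in preferred_order:
        if quota.has_capacity():
            quota.used_today += 1
            return quota
    raise RuntimeError("All configured models have exhausted their quota")


# Placeholder names and limits, for illustration only.
premium = ModelQuota(name="premium-model", daily_limit=25)
mini = ModelQuota(name="mini-model", daily_limit=100)
```

Calling `pick_model([premium, mini])` uses the premium model until its counter reaches the limit, then falls back to the mini model, which is the moment to tighten review of the output.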

Cost and Performance Tradeoffs

- Claude Sonnet 5: $3/1M input tokens, $15/1M output tokens, 82.1% SWE-bench accuracy, $0.04 per fix, 1.2 attempts per fix.

- GPT-5.3-Codex: $1.75/1M input tokens, $14/1M output tokens, 56.8% SWE-bench Pro, 77.3% Terminal-Bench, $0.09 per fix, 1.8 attempts per fix.

- Claude Opus 4.6: $5/1M input tokens, $25/1M output tokens, 80.8% SWE-bench, excels at large codebase analysis and multi-agent workflows.
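The per-token prices above turn into a per-request cost with simple arithmetic. The sketch below uses the listed prices, but the token counts (20,000 input and 2,000 output) are assumptions I picked for illustration, so the results will not reproduce the per-fix figures quoted above.

```python
def cost_per_attempt_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost of one request given token counts and per-1M-token prices."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


def expected_cost_per_fix_usd(cost_per_attempt: float, attempts_per_fix: float) -> float:
    """Scale the per-attempt cost by the average number of attempts per fix."""
    return cost_per_attempt * attempts_per_fix


# Assumed workload, not a measurement.
INPUT_TOKENS = 20_000
OUTPUT_TOKENS = 2_000

# (input price, output price) in USD per 1M tokens, as listed above.
PRICES = {
    "Claude Sonnet 5": (3.00, 15.00),
    "GPT-5.3-Codex": (1.75, 14.00),
    "Claude Opus 4.6": (5.00, 25.00),
}

if __name__ == "__main__":
    for model, (in_price, out_price) in PRICES.items():
        cost = cost_per_attempt_usd(INPUT_TOKENS, OUTPUT_TOKENS, in_price, out_price)
        print(f"{model}: ${cost:.4f} per attempt")
```

A cheaper token price does not automatically mean a cheaper fix: multiplying by attempts per fix can narrow or reverse the gap, which is why both numbers matter.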

Key insight: GPT-5.3-Codex is faster and better for terminal automation, while Claude Sonnet and Opus are more accurate and cost-effective for production bug fixes and large-scale refactoring.

Table: Comparing LLM Model Tiers and Tradeoffs (Feb 2026)

Analysis: Claude Sonnet 5 leads in autonomous bug fixing and cost efficiency, while GPT-5.3-Codex is the go-to for fast, interactive coding and DevOps automation. Claude Opus 4.6 is best for large codebases and multi-agent workflows. Gemini 3 Pro is the budget option but requires more manual review.

Real-World Examples: Where LLMs Fail Without Human Oversight

Example 1: Date Parsing Gone Wrong

A developer used GPT-4 to extract deadlines from email threads, converting phrases like “by next Tuesday” into actual dates. Given a reference date of April 5, 2024 (a Friday), the model interpreted “by next Tuesday” as “by 12 April 2024,” which is also a Friday, not a Tuesday. Even with improved prompts, the model failed to consistently compute correct dates. The solution? Extract the date string with the LLM, then use deterministic code to compute the actual date.
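The deterministic half of that solution is only a few lines. In this sketch I assume “next Tuesday” means the first Tuesday strictly after the reference date; some people mean the Tuesday of the following week, so treat that interpretation, and the function name `next_weekday`, as my assumptions.

```python
from datetime import date, timedelta

WEEKDAYS = {
    "monday": 0, "tuesday": 1, "wednesday": 2, "thursday": 3,
    "friday": 4, "saturday": 5, "sunday": 6,
}


def next_weekday(reference: date, weekday_name: str) -> date:
    """Return the first date strictly after `reference` that falls on the given weekday."""
    target = WEEKDAYS[weekday_name.lower()]
    # Always lands in the range 1..7, so the reference day itself is never returned.
    days_ahead = (target - reference.weekday() - 1) % 7 + 1
    return reference + timedelta(days=days_ahead)


if __name__ == "__main__":
    # Reference date from the example: Friday, April 5, 2024.
    print(next_weekday(date(2024, 4, 5), "Tuesday"))  # 2024-04-09
```

The LLM’s job shrinks to extracting the phrase “Tuesday”; the calendar math is handled by code that gives the same answer every time.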

Example 2: Negative Price Calculation

An AI-generated function for calculating discounts passed basic tests but failed for negative discounts or discounts over 100%, resulting in negative prices or price increases. Property-based testing and sabotage testing caught these edge cases before they reached production.
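Here is a hand-rolled sketch of that idea using only the standard library. A dedicated property-based testing library would generate and shrink inputs more cleverly; this version just throws seeded random values at the function. The `apply_discount` implementation and its validation rules are my assumptions about what a corrected function might look like.

```python
import random


def apply_discount(price: float, discount_percent: float) -> float:
    """Return the discounted price, rejecting inputs outside the valid range."""
    if price < 0:
        raise ValueError("price must be non-negative")
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    return price * (1 - discount_percent / 100)


def check_discount_properties(trials: int = 1000, seed: int = 0) -> None:
    """Check invariants over random inputs, including deliberately invalid discounts."""
    rng = random.Random(seed)  # seeded so a failure is reproducible
    for _ in range(trials):
        price = rng.uniform(0, 10_000)
        discount = rng.uniform(-50, 150)  # deliberately includes invalid values
        try:
            result = apply_discount(price, discount)
        except ValueError:
            # Rejection is only acceptable when the discount really was invalid.
            assert not 0 <= discount <= 100
            continue
        # Property: a valid discount never yields a negative price or a price increase.
        assert 0 <= result <= price


if __name__ == "__main__":
    check_discount_properties()
    print("all properties held")
```

Notice that the test never states an expected price. It states what must always be true, which is exactly what catches the negative-discount and over-100% cases.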

Example 3: Security Vulnerabilities

AI-generated code for user authentication looked flawless but contained SQL injection vulnerabilities and hardcoded credentials. Automated security scans and human review identified and fixed these issues before deployment.
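The SQL injection half of this example is easy to demonstrate with Python’s built-in `sqlite3` module. The table layout and function names below are made up for illustration; the contrast between string interpolation and a parameterized query is the part that carries over.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a single demo user."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT NOT NULL)")
    conn.execute("INSERT INTO users (username) VALUES (?)", ("alice",))
    conn.commit()
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # VULNERABLE: user input is pasted directly into the SQL text.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # The "?" placeholder sends the value separately from the SQL text.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # [(1, 'alice')]
    print(find_user_safe(conn, payload))    # []
```

Both functions look equally clean at a glance, which is why this class of bug slips through a review that only skims.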

Security, IP, and Licensing Risks of LLM-Generated Code

Security Risks

- Hallucinated APIs and Ignored Constraints: LLMs may invent APIs or ignore security constraints, leading to vulnerabilities.

- Prompt Injection Attacks: Malicious inputs can manipulate LLMs to generate insecure code or leak sensitive information.

- Training Data Contamination: LLMs trained on public code repositories may replicate vulnerabilities present in their training data.

IP and Licensing Risks

- License Violations: LLMs may reproduce code snippets from GPL-licensed projects, which can obligate you to release derived code under the same license.

- Attribution Requirements: Organizations must track and attribute code generated by LLMs, especially when using open-source models with specific license terms.

Best practice: Use automated license scanning, maintain clear records of model versions and code origins, and consult legal counsel for commercial deployments.
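As a first line of defense before a real license scanner runs, you can flag generated code that carries explicit license text. This is a deliberately naive sketch: the pattern list is my own assumption, and it cannot detect copied code that arrives without any license header, so it is not a substitute for dedicated tooling or legal review.

```python
import re

# Assumed marker patterns for illustration; not an exhaustive list.
LICENSE_MARKERS = re.compile(
    r"GNU General Public License"
    r"|SPDX-License-Identifier:\s*(?:A?GPL|LGPL)[\w.+-]*"
    r"|Copyright \(c\)",
    re.IGNORECASE,
)


def find_license_markers(source: str) -> list[tuple[int, str]]:
    """Return (line number, stripped line) for each line that mentions a license marker."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        if LICENSE_MARKERS.search(line):
            hits.append((lineno, line.strip()))
    return hits
```

Any hit is a reason to stop and trace where the snippet came from before it is merged.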

Tools and Practices: Automated Tests, Static Analysis, and CI/CD with LLMs

Automated Testing and Static Analysis

- Unit and Functional Tests: Validate that code behaves as expected under various scenarios.

- Property-Based Testing: Catch edge cases that example-based tests miss.

- Security Scanning: Use tools like Snyk and SonarQube to detect vulnerabilities and code quality issues.

- Code Quality Metrics: Track maintainability, readability, and technical debt using SonarQube and CodeQL.

CI/CD Integration

- Continuous Evaluation: Integrate LLM evaluations into CI/CD pipelines to catch issues early and maintain model quality.

- Automated Guardrails: Set up input and output validation, fallback behavior, and rollback mechanisms for failed deployments.

- Prompt Versioning: Treat prompts as code: version them, review changes, and maintain an audit trail.
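For prompt versioning, one lightweight approach is to derive a short fingerprint from everything that affects the output and log it with each request. The function name and the choice of fields here are assumptions for illustration, not an established convention.

```python
import hashlib
import json


def prompt_fingerprint(template: str, model: str, params: dict) -> str:
    """Return a short, stable identifier for a prompt template plus its settings."""
    # sort_keys makes the fingerprint independent of dict insertion order.
    payload = json.dumps(
        {"template": template, "model": model, "params": params},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
```

Storing this fingerprint next to each production trace lets you tell which prompt version produced a given failure, even after the template has changed several times.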

Human-in-the-Loop Review

- Collaborative Reviews: Pair AI-generated code with human review to catch subtle issues and ensure alignment with project goals.

- Feedback Loops: Use human corrections and feedback to refine prompts, evaluation datasets, and model selection.

Guidance for Developers: Practical Recommendations and Checklist

- Treat LLMs as Junior Developers: Never merge AI-generated code without review. Assign a human owner to every line.

- Build a Robust Testing Pipeline: Use property-based and sabotage testing to catch edge cases. Track bug escape rates.

- Master Prompt Engineering: Provide clear context and constraints. Use chain-of-thought and few-shot prompting. Debug before prompting.

- Monitor Usage and Costs: Track model usage limits and plan for fallbacks. Optimize prompt size to maximize limits.

- Enforce Security and Compliance: Scan for vulnerabilities and license violations. Maintain records of model versions and code origins.

- Prioritize Maintainability: Review code for readability and consistency. Refactor AI-generated code to reduce technical debt.

- Integrate Human-in-the-Loop Review: Establish policies for manual review of logic. Use collaborative tools and feedback loops.

- Continuously Improve: Update test datasets as your product evolves. Monitor production incidents and feed failures back into your evaluation pipeline.

The Bottom Line: LLMs Are Transforming Development, But Only If You Adapt

AI coding models like Claude Sonnet, Claude Opus, and GPT-5 Codex are revolutionizing how we build software. They can generate code at unprecedented speed, automate routine tasks, and even assist in debugging and testing. But they are not magic bullets. Without disciplined review, robust testing, and human oversight, they can just as quickly introduce bugs, vulnerabilities, and technical debt.

The winning teams in 2026 are not those who trust AI blindly, but those who build verification systems, enforce review standards, and treat LLMs as powerful collaborators, not infallible experts.

If you want to thrive in this new era, focus on what LLMs can’t do: reason about edge cases, understand business context, and ensure long-term code quality. Master prompt engineering, invest in testing and review, and never ship code you don’t understand.

Your job isn’t going away; it’s evolving. The future belongs to developers who can harness AI’s speed while safeguarding software’s reliability, security, and maintainability.

About the Author

I am an AI Engineer specializing in Generative AI, agents, and Python-driven LLM workflows. My technical background spans from developing deep-learning-based image classification solutions to transforming operational processes with Python and modern agentic architectures.

A fundamentally curious individual, I am fueled by a passion for the rapid evolution of AI technology. Outside the IDE, I am a fitness enthusiast who believes that physical discipline provides the mental endurance needed to stay at the forefront of the AI revolution.


AI Is Not Replacing Software Engineers: It Is Redefining Them was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.