Agent Memory: Explained Simply

Your AI agent just spent an hour helping you plan a product launch. It asked smart questions, remembered your preferences, and gave you a tight output. Today, you open a new chat. It has no idea who you are. Here is why that happens, and what the field is doing about it.

The Amnesia Problem

At its core, a large language model is stateless. You send it a message, it produces a reply, and then it forgets everything. Not some things. Everything. Each new conversation is a blank slate.

This is not a bug. It is a deliberate architectural choice. The model itself is a giant function: input goes in, tokens come out. There is no persistent storage baked into the model weights where your conversation history lives between sessions.

For a simple chatbot, this is fine. You ask it to write a cover letter, it writes one, you move on. No need for continuity.

But agents are different. Agents are supposed to work on long-running tasks, learn your preferences over time, and collaborate with other agents across multiple sessions. Statelessness is a serious problem for them. You cannot have a helpful personal assistant that introduces itself every Monday morning.

The statefulness problem is the core challenge of building useful agents. Memory is the solution layer that sits between the stateless model and the real world that expects continuity.

The Wrong Mental Model

Most people, when they first think about memory in AI agents, reach for the simplest explanation: just stuff more into the context window.

This is understandable. The context window is literally the information the model can “see” right now. So if you want the agent to remember something, paste it into the context. Problem solved, right?

Not quite. Context windows are finite. The longest ones today top out around 1 to 2 million tokens, which sounds enormous until you realize a long-running autonomous agent might accumulate far more state than that. And even before you hit the limit, there is a subtler problem: models get worse at using information that is buried deep in a long context. Attention is not uniformly distributed.

More importantly, context is ephemeral. The moment a session ends, it is gone. You would need to re-inject the entire history at the start of every new session, which is both expensive and fragile.

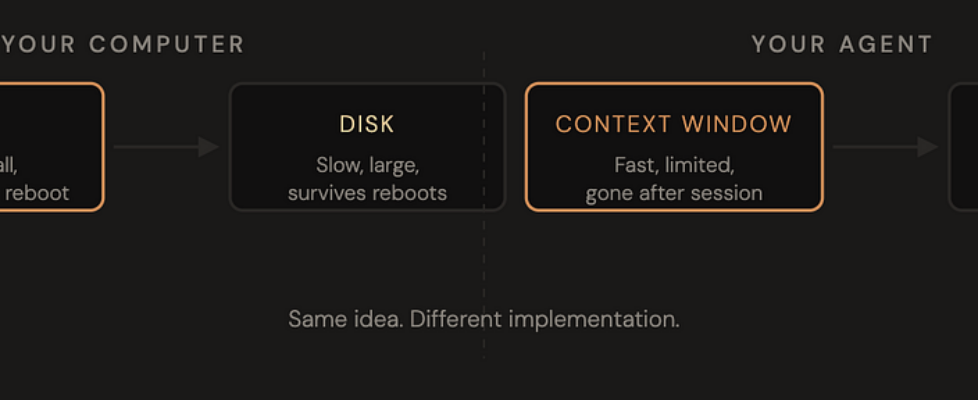

The better mental model is the one your computer has already taught you. Your computer does not store everything in RAM. It uses a hierarchy: fast, small, in-the-moment memory for what you are working on right now, and slower, larger, persistent storage for everything else. It decides what to load, what to keep, and what to let go.

Agent memory works the same way.

The Four Types of Agent Memory

Researchers studying both human cognition and AI systems have landed on a useful taxonomy. Agent memory is not one thing. It is a system with four distinct layers, each serving a different purpose.

Type 01

Working Memory

What the agent is actively thinking about right now. This is the context window: the user’s message, the conversation so far, and any documents or tool results that have been injected. It is fast, immediately accessible, and completely temporary. When the session ends, so does working memory.

Type 02

Episodic Memory

A record of what happened. Past conversations, completed tasks, decisions that were made, and why. Stored externally, retrieved when relevant. Think of it as the agent’s diary. It gives the agent a sense of personal history so it can say, “We talked about this two weeks ago.”

Type 03

Semantic Memory

Factual knowledge about the world and about you. Your name, your preferences, your role, your company’s tech stack. This is not tied to any specific conversation. It is just things the agent has learned and stored as persistent facts. Closest to a user profile or a knowledge base.

Type 04

Procedural Memory

Knowing how to do things. The tools available, the workflows to follow, the system prompt that shapes the agent’s behavior. You could think of model weights themselves as a form of procedural memory: trillions of parameters encoding how to reason, write, and respond. This layer is the least dynamic but the most foundational.

These four types map onto different parts of the technical stack. Working memory lives in the context window. Episodic and semantic memory live in external databases (vector stores, relational DBs, key-value stores). Procedural memory lives in the model weights and the system prompt.

How It Actually Works: Write, Retrieve, Forget

Now that we have the four types, let us walk through what happens mechanically when an agent uses memory.

Writing

At the end of a session, or at key moments during one, the agent (or a separate memory management process) decides what is worth keeping. This is harder than it sounds. You do not want to store everything. That would make retrieval slow and noisy. You want to store the things that are likely to be relevant later: decisions made, preferences expressed, and context that is not easily re-derived.

In practice, this often involves a second LLM call specifically for summarization and extraction. The raw conversation goes in, and structured memory items come out: facts, observations, events. These get written to a vector database or key-value store tagged with metadata like timestamps and topics.

Retrieval

At the start of a new session, or when the agent encounters a new task, it queries its memory stores for relevant context. Vector search is common here: the agent encodes the current query as an embedding and retrieves the stored memories with the highest semantic similarity.

The retrieved memories get injected into the context window alongside the new conversation. The agent now has access to its past without needing to re-read every prior session in full. This is the memory equivalent of reading your notes before a meeting rather than trying to remember everything from scratch.

Forgetting

This one gets less attention, but it matters a lot. Memory systems need to decay. Old, low-relevance memories should fade. Contradictory memories (you said you prefer Python, then switched to Go) need to be resolved. Otherwise, the agent’s knowledge base becomes stale and contradictory over time.

Some systems handle this with explicit time-based expiry. Others use recency and access frequency as signals. A few more sophisticated approaches borrow from spaced repetition: memories that keep getting retrieved stay fresh; ones that never come up fade.

Who Is Building This Today

Memory for AI agents is a young but fast-moving space. A few names worth knowing:

Mem0 is probably the most widely used memory layer right now. It sits between your agent and your database, handling the write/retrieve/forget logic automatically. You drop it into your stack and it manages episodic and semantic memory for you. The API is simple: you save memories, you search memories, and it handles the rest.

Letta (previously MemGPT) takes a more opinionated approach. It gives the agent explicit control over its own memory through tool calls. The agent can decide when to write to memory, what to write, and what to delete. It is more complex but also more transparent: the agent is an active participant in its own memory management rather than a passive recipient.

Beyond these, major framework players like LangChain and LlamaIndex both have memory modules. And increasingly, frontier model providers are building persistence directly into their APIs so that conversation history can carry over across sessions natively.

The direction is clear: memory is moving from a custom engineering problem that every team solved differently, to a first-class layer of the agent stack with standard interfaces and dedicated infrastructure.

Tying It Back to MCP and A2A

If you have been following this series, you have seen two other pieces of the agentic infrastructure puzzle. MCP (Model Context Protocol) is about how agents talk to tools and data sources. A2A (Agent to Agent) is about how agents talk to each other. Memory is the third piece: how agents maintain continuity with themselves over time.

Think of it this way. MCP handles the present: what can this agent access right now? A2A handles collaboration: how do multiple agents coordinate? Memory handles the past: what does this agent know from before?

Together, these three layers are starting to define what it means to build real agent infrastructure: not just a model that responds to prompts, but a system that can act, collaborate, and remember.

The models themselves are getting better every few months. The context windows keep growing. But memory as a system, with its write, retrieve, and decay logic, is not something you get for free from a bigger model. It is an engineering layer that needs to be built deliberately.

That is what makes it interesting to pay attention to right now.

Thanks for reading. If you found this useful, the rest of the series covers MCP and A2A in the same format. Hit follow if you want the next one when it drops.

Agent Memory: Explained Simply was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.