A Practical 6D Pose Estimation Pipeline for High-Mix Manufacturing

The question our robotics team has been struggling with: how do we solve vision-based pick and place for high-mix, low-volume (HMLV) manufacturing with a 100% success rate?

The Problem of Deploying Robots in High-Mix, Low-Volume Manufacturing

Our startup team, based in Frankfurt, Germany, focuses on deploying industrial robots for high-mix, low-volume (HMLV) manufacturing in the automotive, metal, and aerospace industries.

High-mix, low-volume (HMLV) manufacturing refers to factories that produce many different products, each in small batches, rather than mass-producing a single part.

This production model is common among small and medium-sized enterprises (SMEs) and contrasts with traditional mass production.

Deploying a robot in an HMLV production line is exceptionally difficult because the system must adapt to 50–100 product variants. Client requirements are often strict:

- 100% success rate, because failures stop the line.

- Return on investment (ROI) within 12–18 months, which rules out expensive, specialized hardware.

- Sub-millimeter (<1 mm) precision, required by industry regulations.

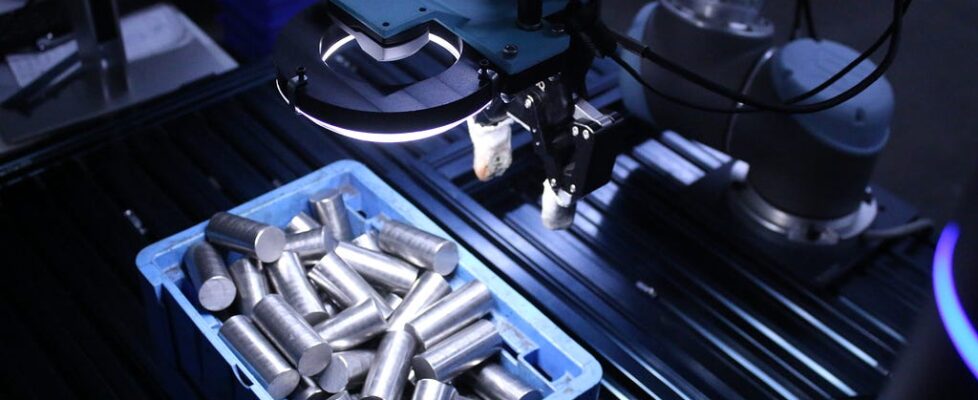

Among all use cases, the one with the highest demand we encountered was vision-based pick and place. Clients often had a large number of metallic products, typically ranging from 50 to 100 variants. The robot was required to precisely pick parts either from a bin or a tray, and place them into a machine or a holder with less than 1 mm accuracy.

Despite all the hype around Physical AI and robotics, this is a task that is trivial for a human and still exceptionally hard for a robot.

From a robotics systems perspective, these constraints place most of the burden on perception.

We needed a solid 6D pose estimation pipeline that could autonomously adapt to 50–100 different objects with sub-millimeter accuracy under changing lighting, clutter, and sensor noise.

Solving Object Pose Estimation for High-Mix Low-Volume Manufacturing with Vitreous

We quickly learned that there is no one-size-fits-all algorithm.

Therefore, we built several Skill groups for perception, each containing a range of algorithms, from classical methods to deep learning and foundation models. In particular, we built:

- Vitreous: 3D point cloud processing skills

- Pupil: Image processing skills

- Retina: Object detection skills

- Cornea: Object segmentation skills

For a quick refresher on the Telekinesis Agentic Library of robotic skills, see:

The Telekinesis Physical AI Stack: A Landscape View of Robotic Skills, Agents, and Architecture

For 6D pose estimation in high-mix industrial environments, the primary dependency is Vitreous, with supporting components from Pupil, Retina, and Cornea.

6D Object Pose Estimation with Classical Point Cloud Algorithms

Classical 6D pose estimation pipelines operate directly on point clouds of the scene. We provide a quick overview of the pipeline:

Input Point Cloud

The pipeline starts with a raw point cloud captured from an RGB-D sensor or a 3D camera. Depending on the sensor and driver, this data is commonly represented in formats such as PointXYZ, PointXYZRGB, or PointXYZI, where each point contains spatial coordinates and optionally color or intensity information. In ROS-based systems, point clouds are usually transmitted as sensor_msgs/PointCloud2, which must be deserialized before processing.

At this stage, the point cloud is dense, noisy, and unstructured. It includes the objects of interest, background geometry, supporting surfaces, and measurement artifacts such as missing depth values, flying pixels, and multi-path interference, particularly common with metallic parts.

Downsampling and Preprocessing

Raw point clouds are typically too dense for real-time processing. Downsampling reduces the number of points while attempting to preserve geometric structure. Several strategies are commonly used:

- Voxel Grid Downsampling: The space is discretized into a 3D voxel grid, and all points within a voxel are replaced by their centroid. This is the most common approach and provides uniform spatial resolution, but it can remove sharp edges and fine features critical for pose estimation.

- Uniform Sampling: Points are sampled at fixed spatial intervals without aggregation. This preserves original point positions but does not reduce noise.

- Viewpoint Visibility: Removes points that are not visible from a specified viewpoint, simulating line-of-sight occlusion.

- Bounding Box: Extracts points that lie within a specified 3D axis-aligned bounding box (AABB), removing all points outside the defined region.

Preprocessing may also include:

- Normal Estimation, required for point-to-plane ICP or feature-based registration

- NaN Removal, to discard invalid depth measurements

To solve 6D pose estimation for HMLV manufacturing, we often rely on Voxel Grid Downsampling, but, depending on the use case, we may need to test the other approaches.
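To make the voxel grid idea concrete, here is a minimal pure-Python sketch. It is not the Vitreous API; the function name `voxel_downsample` and the 5 mm voxel size are assumptions chosen for illustration, and a production pipeline would use an optimized library implementation.

```python
from collections import defaultdict
from math import floor


def voxel_downsample(points, voxel_size):
    """Replace all points that fall into the same voxel by their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets = defaultdict(list)
    for x, y, z in points:
        # Integer voxel index of the point along each axis.
        key = (floor(x / voxel_size), floor(y / voxel_size), floor(z / voxel_size))
        buckets[key].append((x, y, z))
    centroids = []
    for pts in buckets.values():
        n = len(pts)
        centroids.append(tuple(sum(axis) / n for axis in zip(*pts)))
    return centroids


# Three points in metres: the first two share a 5 mm voxel, the third does not.
cloud = [(0.001, 0.001, 0.0), (0.003, 0.003, 0.0), (0.012, 0.001, 0.0)]
downsampled = voxel_downsample(cloud, voxel_size=0.005)
```

The voxel size is the main trade-off: it bounds the spatial resolution of everything downstream, so a voxel larger than the finest feature on the part will average that feature away.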

Filtering

Filtering aims to remove points that are unlikely to belong to valid objects or that degrade registration quality. Common filters include:

- Statistical Outlier Removal (SOR): Removes points whose mean distance to their nearest neighbors deviates significantly from the average over the whole cloud. Effective for sensor noise, but sensitive to point density variations.

- Radius Outlier Removal (ROR): Discards points with fewer than a specified number of neighbors within a given radius. Useful for removing isolated noise but requires careful parameter tuning.

- Pass-Through Filters: Apply axis-aligned constraints (e.g. z-height filtering) to limit the region of interest. Often used to exclude ceiling, floor, or distant background points.
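As an illustration of the statistical filter in the list above, the following is a brute-force sketch in plain Python. The function name and the parameters `k` and `std_ratio` are assumptions for this example, not a specific library's API, and the O(n²) neighbor search would be replaced by a KD-tree on real data.

```python
from math import dist
from statistics import mean, pstdev


def statistical_outlier_removal(points, k=3, std_ratio=1.0):
    """Keep points whose mean distance to their k nearest neighbours is at most
    (global mean + std_ratio * global standard deviation) of that quantity."""
    if len(points) <= k:
        return list(points)
    mean_knn = []
    for i, p in enumerate(points):
        distances = sorted(dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(mean(distances[:k]))
    threshold = mean(mean_knn) + std_ratio * pstdev(mean_knn)
    return [p for p, m in zip(points, mean_knn) if m <= threshold]


# A flat 3x3 patch with 1 mm spacing plus one "flying pixel" 50 mm above it.
patch = [(x * 0.001, y * 0.001, 0.0) for x in range(3) for y in range(3)]
outlier = (0.0, 0.0, 0.05)
filtered = statistical_outlier_removal(patch + [outlier], k=3, std_ratio=1.0)
```

Because the threshold is derived from global statistics, a cloud with strongly varying density can lose valid sparse regions, which is the sensitivity mentioned above.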

For HMLV manufacturing, Pass-Through filters are extremely valuable because they aggressively remove points from the point cloud, enabling faster pipelines. Moreover, Pass-Through filters allow the engineer to encode prior domain knowledge, which is critical for industrial use cases.
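A pass-through filter is simple enough to sketch in a few lines. The example below assumes a hypothetical cell layout in which the camera looks along +z and the tray sits between 0.60 m and 0.80 m from the sensor; both the function name and those limits are illustrative only.

```python
def pass_through(points, axis, lower, upper):
    """Keep points whose coordinate along `axis` lies in [lower, upper]."""
    index = {"x": 0, "y": 1, "z": 2}[axis]
    return [p for p in points if lower <= p[index] <= upper]


# Points in metres: one too close, two on the tray, one far in the background.
cloud = [(0.0, 0.0, 0.35), (0.1, 0.0, 0.65), (0.1, 0.2, 0.78), (0.0, 0.1, 1.40)]
roi = pass_through(cloud, axis="z", lower=0.60, upper=0.80)
```

The limits are exactly the kind of domain knowledge an engineer can read off the cell design, such as the tray height or bin walls.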

Plane Segmentation and Background Removal

In many industrial scenes, dominant planar structures such as tables, trays, or bin floors must be removed. This is commonly done using RANSAC-based plane segmentation, which fits planar models and iteratively removes inliers.
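The following sketch shows one RANSAC plane fit and removal in plain Python. It is a simplified illustration under our own assumptions (function names, a 2 mm distance threshold, 200 iterations, a fixed random seed), not a specific library's implementation.

```python
import random
from math import sqrt


def fit_plane(p1, p2, p3):
    """Return (unit normal n, offset d) with n.x + d = 0, or None if collinear."""
    u = [b - a for a, b in zip(p1, p2)]
    v = [b - a for a, b in zip(p1, p3)]
    n = (u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0])
    norm = sqrt(sum(c * c for c in n))
    if norm < 1e-12:
        return None
    n = tuple(c / norm for c in n)
    return n, -sum(a * b for a, b in zip(n, p1))


def ransac_plane(points, distance_threshold, iterations=200, seed=0):
    """Return the indices of the largest set of points close to a single plane."""
    rng = random.Random(seed)
    best = []
    for _ in range(iterations):
        model = fit_plane(*rng.sample(points, 3))
        if model is None:
            continue
        n, d = model
        inliers = [i for i, p in enumerate(points)
                   if abs(sum(a * b for a, b in zip(n, p)) + d) <= distance_threshold]
        if len(inliers) > len(best):
            best = inliers
    return best


# A 10x10 table patch at z = 0 and a small part sitting 20 mm above it.
table = [(x * 0.01, y * 0.01, 0.0) for x in range(10) for y in range(10)]
part = [(0.04 + i * 0.002, 0.05, 0.02) for i in range(10)]
cloud = table + part
plane_inliers = set(ransac_plane(cloud, distance_threshold=0.002))
remaining = [p for i, p in enumerate(cloud) if i not in plane_inliers]
```

To remove several planes, the same call can be repeated on `remaining` until the best plane no longer has enough inliers to be considered dominant.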

Once planes are removed, additional background subtraction may be applied.

In HMLV setups, frequent changes in fixtures and layouts often invalidate static background assumptions, making this step brittle.

Object Clustering

After background removal, the remaining points are segmented into individual object candidates. The most common approaches include:

- Euclidean Cluster Extraction: Groups points based on spatial proximity. Simple and fast, but fails when objects touch or overlap.

- Density-Based Clustering (e.g. DBSCAN): Can handle varying point densities but is sensitive to parameter selection and noise.
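Euclidean cluster extraction, the first approach in the list above, can be sketched as a flood fill over nearby points. The code below is a brute-force illustration with an assumed 3 mm tolerance and hypothetical part geometry; it also shows the touching-parts failure case.

```python
from collections import deque
from math import dist


def euclidean_clusters(points, tolerance, min_size=1):
    """Group point indices into connected components, where two points are
    connected if they are at most `tolerance` apart."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue, cluster = deque([seed]), [seed]
        while queue:
            i = queue.popleft()
            near = [j for j in unvisited if dist(points[i], points[j]) <= tolerance]
            for j in near:
                unvisited.remove(j)
                queue.append(j)
                cluster.append(j)
        if len(cluster) >= min_size:
            clusters.append(sorted(cluster))
    return clusters


# Two rod-like parts sampled every 2 mm along x.
part_a = [(i * 0.002, 0.0, 0.0) for i in range(5)]
part_b_apart = [(0.030 + i * 0.002, 0.0, 0.0) for i in range(5)]     # 22 mm gap
part_b_touching = [(0.010 + i * 0.002, 0.0, 0.0) for i in range(5)]  # 2 mm gap

separated = euclidean_clusters(part_a + part_b_apart, tolerance=0.003)
touching = euclidean_clusters(part_a + part_b_touching, tolerance=0.003)
```

With a clear gap, the two parts come out as two clusters. Once the gap shrinks to the sampling distance, they merge into a single cluster, and no tolerance value separates them without also splitting each part.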

In practice, for HMLV manufacturing we often skip geometric clustering and switch to an RGB segmentation model, which is faster and more robust to variations.

Coarse Registration

Each cluster is aligned to a known object model, typically derived from CAD data (important assumption: we have access to CAD data for industrial use cases). Coarse registration aims to generate an initial pose estimate close enough for refinement. Common approaches include:

- Centroid Alignment: Translates model and scene centroids to coincide. Extremely fast but provides no orientation estimate.

- Feature-Based Registration: Uses geometric descriptors such as FPFH or SHOT to establish correspondences between model and scene points, followed by RANSAC-based pose estimation. More robust than centroid alignment but computationally expensive and sensitive to noise and symmetry.

Coarse registration is critical, as most refinement algorithms converge only locally.
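As a small illustration of the simplest option, centroid alignment reduces to one vector subtraction. The sketch below uses hypothetical model points and an assumed scene offset; note that the rotation is left as identity, which is why this method alone gives no orientation estimate.

```python
def centroid(points):
    """Arithmetic mean of a list of 3D points."""
    n = len(points)
    return tuple(sum(axis) / n for axis in zip(*points))


def centroid_alignment(model, scene):
    """Translation that moves the model centroid onto the scene centroid."""
    cm, cs = centroid(model), centroid(scene)
    return tuple(s - m for m, s in zip(cm, cs))


# Hypothetical CAD-sampled model and the same points observed 0.6 m from the camera.
model = [(0.0, 0.0, 0.0), (0.02, 0.0, 0.0), (0.0, 0.01, 0.0), (0.0, 0.0, 0.005)]
offset = (0.10, -0.05, 0.60)
scene = [tuple(c + o for c, o in zip(p, offset)) for p in model]

translation = centroid_alignment(model, scene)
```

On partial observations the scene centroid is biased toward the visible side of the part, so even the translation is only approximate and still needs refinement.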

ICP Refinement

Iterative Closest Point (ICP) is used to refine the pose estimate by minimizing distances between corresponding points. Variants include:

- Point-to-Point ICP

- Point-to-Plane ICP, which requires reliable normal estimation
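For reference, the two variants differ only in the error they minimize at each iteration. In the standard formulation below, the notation is ours rather than the article's: p_i are model points, q_i are their current closest scene points, n_i are the scene normals at q_i, and (R, t) is the rigid transform being estimated.

```latex
E_{\text{point-to-point}}(R, t) = \sum_{i=1}^{N} \left\| R\,p_i + t - q_i \right\|^2

E_{\text{point-to-plane}}(R, t) = \sum_{i=1}^{N} \left( (R\,p_i + t - q_i) \cdot n_i \right)^2
```

The point-to-plane error only penalizes displacement along the surface normal, so points can slide along flat surfaces. This usually speeds up convergence on smooth parts, but it makes the result depend directly on normal quality.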

ICP assumes sufficient overlap between model and scene and a good initial pose. It is highly sensitive to:

- Symmetric geometries

- Partial observations

- Depth noise

- Poor initial alignment

All the algorithms described in this pipeline are available in Vitreous:

Vitreous: 3D Point Cloud Processing Skills | Telekinesis Docs

What fails in HMLV manufacturing?

In HMLV manufacturing, the dominant failure occurs in object clustering, and its effects propagate downstream through the entire pipeline.

Traditional clustering methods such as Euclidean Cluster Extraction or DBSCAN rely on geometric separation between objects in the point cloud. They assume that objects are spatially distinct, have consistent point densities, and are not in contact with each other.

In HMLV industrial settings, these assumptions rarely hold:

- Parts often touch, overlap, or interlock in bins and trays

- Thin or reflective metal surfaces collapse in depth measurements

As a result, clustering becomes unstable. Small changes in part geometry, bin fill level, or sensor viewpoint can lead to either over-segmentation (splitting a single object into multiple clusters) or under-segmentation (merging multiple objects into one cluster).

Failures in clustering directly impact coarse registration and ICP refinement. When clusters do not correspond to complete individual objects:

- Coarse registration produces incorrect or ambiguous initial poses

- Feature-based alignment fails due to partial geometry or symmetry

- ICP converges to incorrect local minima or diverges entirely

ICP, in particular, is a local optimization method and assumes a reasonable initial alignment and sufficient geometric overlap. When these assumptions are violated by poor clustering, ICP cannot recover, regardless of parameter tuning or refinement strategy.

In practice, many perceived “ICP failures” in HMLV manufacturing are in fact upstream segmentation failures.

A Robust 6D Pose Estimation Pipeline for High-Mix Manufacturing

To address the limitations of geometric clustering in high-mix environments, we modify the classical 6D pose estimation pipeline at a single but critical point: object separation.

Instead of relying on point cloud–based clustering, we perform object segmentation in the RGB domain using deep learning and foundation models for perception. These models, implemented in Cornea, operate directly on 2D images and are significantly more robust to object contact, overlap, and variations in geometry and surface properties.

RGB-Based Object Segmentation

RGB segmentation models leverage appearance cues such as edges, texture, and semantic context, which are often more stable than depth-based geometric cues in industrial settings. This is particularly important for metallic parts, where depth measurements are frequently noisy or incomplete.

Using deep-learning–based or foundation models, each object instance in the scene is segmented at the pixel level. This produces a set of object masks that are consistent across:

- different product variants

- varying bin fill levels

- changing lighting and sensor noise

Mapping Segmentation Masks to 3D Point Clouds

Once object masks are obtained in the image domain, they are projected into the point cloud using known camera intrinsics and depth information. Each mask is used to filter the point cloud, extracting a clean per-object point cloud without relying on geometric clustering.

This operation effectively replaces the clustering stage in the classical pipeline while preserving all downstream components.
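The mask-guided extraction can be sketched with the pinhole camera model. This is a minimal illustration, not the Cornea or Vitreous API: it assumes the depth image is already registered to the RGB image, and the function name, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the tiny 3x3 image are hypothetical.

```python
def mask_to_points(depth, mask, fx, fy, cx, cy):
    """Back-project masked depth pixels into 3D camera coordinates.

    depth: rows of depth values in metres; 0, None or NaN mean "no measurement".
    mask:  rows of booleans with the same shape, True where the object is.
    """
    points = []
    for v, (depth_row, mask_row) in enumerate(zip(depth, mask)):
        for u, (z, inside) in enumerate(zip(depth_row, mask_row)):
            if not inside or not z or z != z:  # background, hole, or NaN
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


# 3x3 toy example: the part is 0.50 m away, the tray 0.70 m, one pixel has no depth.
depth = [[0.70, 0.70, 0.70],
         [0.70, 0.50, 0.00],
         [0.70, 0.50, 0.70]]
mask = [[False, False, False],
        [False, True,  True],
        [False, True,  False]]

object_points = mask_to_points(depth, mask, fx=500.0, fy=500.0, cx=1.0, cy=1.0)
```

Each segmentation mask yields one such per-object cloud, which can then go straight into coarse registration. Pixels with missing depth are simply skipped, so a good mask on a reflective part may still produce a sparse cloud.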

Final 6D Pose Estimation Pipeline

The resulting 6D pose estimation pipeline consists of:

- Classical preprocessing, filtering, and plane removal

- RGB-based object segmentation for reliable object separation

- Mask-guided point cloud extraction

- Coarse registration against CAD models

- ICP refinement for sub-millimeter accuracy

By isolating the failure-prone clustering step and replacing it with RGB-guided segmentation, the pipeline retains the strengths of classical geometric methods while achieving the robustness and scalability required for HMLV manufacturing.

Join the Telekinesis Robotics Community

To learn more about Telekinesis, here are some resources to get started:

- Documentation for the Telekinesis Developer SDK: https://docs.telekinesis.ai/

- GitHub examples: https://github.com/telekinesis-ai/telekinesis-examples

We’re building a community of developers, researchers, and robotics enthusiasts who want to help grow the Telekinesis Skill Library. If you’re working on robotics, computer vision, or Physical AI and have a Skill you’d like to share or contribute, we’d love to collaborate.

Join the conversation on our Discord community, share ideas, and help shape the future of agentic Physical AI:

Join the Telekinesis Discord Server!

A Practical 6D Pose Estimation Pipeline for High-Mix Manufacturing was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.