What Happens When You Use LoRA On a CNN

Exactly what you would expect 🫠

Introduction

LoRA usually comes up in the context of LLMs, where the idea is pretty simple: freeze a large pretrained model, inject a small low-rank adapter, and fine-tune only that adapter instead of updating the entire parameter set. It is cheap, efficient, and works well enough in language models that it has basically become the default way to do parameter-efficient fine-tuning.

What I wanted to check here was whether that same idea holds up even a little outside transformers. So, this is a small experiment where I take a simple CNN, train it on MNIST first, and then try adapting that same model to EMNIST using LoRA instead of retraining the whole thing from scratch. The idea is not to build the best handwritten classifier possible, but to see whether a lightweight low-rank update can reuse what the model learns from handwritten digits and carry that over into a broader handwritten character classification task.

The full implementation for this experiment is available here.

Setup

The setup is pretty simple. First, I train a small CNN on MNIST so it learns digit structure properly. Once that converges, I freeze most of the backbone, keep only a small part of the network trainable, inject a lightweight LoRA adapter into the projection layer, and then adapt that same model to EMNIST. Instead of touching the full network again, the goal is to make a small low-rank update do most of the work and see how far that gets.

Training A CNN Model On MNIST

MNIST is easy enough and the base CNN gets very high accuracy there without much effort, which is expected because handwritten digit classification is a fairly clean problem and even small CNNs do well on it.

Training That CNN Model To Adapt On EMNIST

The more interesting part starts once the same model is pushed onto EMNIST. Unlike MNIST, EMNIST is not just digits: the 62-class label space used here mixes digits with uppercase and lowercase letters, and the task gets messy pretty quickly because handwritten letters are much less cleanly separated.

Instead of training a second model from scratch, I reuse the MNIST backbone, keep most of it frozen, and only allow a small part of the network to adapt. The idea is to preserve the useful low-level structure the model already learns from handwritten digits and then make a much smaller update to see how much of that transfers to handwritten characters.

That adaptation is done in two parts. First, most of the CNN is frozen so the original feature extractor stays mostly intact. Then a small LoRA adapter is attached as a residual branch on the output of the projection layer, and that low-rank update becomes the main path for adaptation while the classifier head is replaced and retrained for EMNIST.

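    def freeze_backbone(model):
        # conv1, conv3 and proj are frozen so the MNIST features stay intact
        for p in model.conv1.parameters():
            p.requires_grad = False
        # conv2 is left trainable: the small part of the backbone allowed to adapt
        for p in model.conv2.parameters():
            p.requires_grad = True
        for p in model.conv3.parameters():
            p.requires_grad = False
        for p in model.proj.parameters():
            p.requires_grad = False
        return model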

The LoRA block itself is just a lightweight low-rank residual update added on top of the learned projection.

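    import torch
    import torch.nn as nn

    class LoRALayer(nn.Module):
        def __init__(self, dim, rank=8, alpha=1.0):
            super().__init__()
            self.rank = rank
            self.alpha = alpha
            # Two small factors: A is (dim, rank), B is (rank, dim)
            self.A = nn.Parameter(torch.randn(dim, rank) * 0.01)
            self.B = nn.Parameter(torch.randn(rank, dim) * 0.01)

        def forward(self, x):
            # Project down to `rank` dims, back up to `dim`, then scale by alpha / rank
            return (x @ self.B.t() @ self.A.t()) * (self.alpha / self.rank)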
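
To put a number on "lightweight", here is a small sketch of the parameter arithmetic for the layer above. It assumes `dim=256` (the input width of the `nn.Linear(256, 62)` head used later in the post) and the default `rank=8`. The helper names are mine, not part of the original code.

```python
def lora_param_count(dim: int, rank: int) -> int:
    """Parameters in the adapter: A is (dim, rank) and B is (rank, dim)."""
    return dim * rank + rank * dim


def dense_param_count(dim: int) -> int:
    """Parameters in a full dim x dim weight matrix (no bias), for comparison."""
    return dim * dim


dim, rank = 256, 8  # assumed: 256 matches the classifier head's input width
adapter = lora_param_count(dim, rank)
dense = dense_param_count(dim)
print(f"adapter: {adapter}, dense: {dense}, ratio: {adapter / dense:.4f}")
# adapter: 4096, dense: 65536, ratio: 0.0625
```

One thing worth noticing: both `A` and `B` start as small random values here, so the branch is close to, but not exactly, a no-op at initialization. The original LoRA formulation zero-initializes one of the two matrices so that training starts from the unmodified model.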

That update is injected directly into the forward pass as a residual branch.

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.flatten(x)
        x = self.relu(self.proj(x))
        x = x + self.lora(x)
        return self.fc(x)
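
For anyone who wants to run the pieces end to end, here is a minimal sketch of how the surrounding `CNN` class might be assembled. The layer names and the 256-wide projection come from the snippets in this post; the channel counts, kernel sizes, pooling, and the `conv_block` helper are assumptions for illustration, not the architecture actually used in the experiment.

```python
import torch
import torch.nn as nn


class LoRALayer(nn.Module):
    def __init__(self, dim, rank=8, alpha=1.0):
        super().__init__()
        self.rank = rank
        self.alpha = alpha
        self.A = nn.Parameter(torch.randn(dim, rank) * 0.01)
        self.B = nn.Parameter(torch.randn(rank, dim) * 0.01)

    def forward(self, x):
        return (x @ self.B.t() @ self.A.t()) * (self.alpha / self.rank)


def conv_block(in_ch, out_ch):
    # Hypothetical block: 3x3 conv -> ReLU -> 2x2 max-pool (halves H and W)
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1),
        nn.ReLU(),
        nn.MaxPool2d(2),
    )


class CNN(nn.Module):
    def __init__(self, num_classes=10, rank=8):
        super().__init__()
        # Assumed channel sizes; input is a 1 x 28 x 28 grayscale image
        self.conv1 = conv_block(1, 16)    # 28x28 -> 14x14
        self.conv2 = conv_block(16, 32)   # 14x14 -> 7x7
        self.conv3 = conv_block(32, 64)   # 7x7   -> 3x3
        self.flatten = nn.Flatten()
        self.relu = nn.ReLU()
        self.proj = nn.Linear(64 * 3 * 3, 256)
        self.lora = LoRALayer(256, rank=rank)
        self.fc = nn.Linear(256, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.flatten(x)
        x = self.relu(self.proj(x))
        x = x + self.lora(x)  # low-rank residual on the projected features
        return self.fc(x)


logits = CNN(num_classes=62)(torch.randn(4, 1, 28, 28))
print(logits.shape)  # torch.Size([4, 62])
```

In this layout the adapter acts on the activated output of `proj` rather than reparameterizing the `proj` weights themselves, which is a slightly different placement from the usual weight-level LoRA used in transformers.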

Once the MNIST backbone is loaded, the final classifier is replaced with a larger head for EMNIST and only the smaller trainable pieces are updated.

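    # Build the 62-class model and copy over the MNIST-trained backbone weights
    lora_model = CNN(num_classes=62)
    lora_model = load_backbone_only(lora_model, mnist_model)
    # Freeze conv1, conv3 and proj; the LoRA branch and the head stay trainable
    lora_model = freeze_backbone(lora_model)
    # Fresh classifier head for the 62 EMNIST classes
    lora_model.fc = nn.Linear(256, 62).to(device)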
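
A quick way to sanity-check a setup like this is to count what is actually trainable and hand only those parameters to the optimizer. The sketch below uses a tiny stand-in model rather than the CNN from this post; the helper names and the learning rate are assumptions, and the choice of Adam is illustrative rather than what the original experiment necessarily used.

```python
import torch
import torch.nn as nn


def count_parameters(model: nn.Module) -> tuple[int, int]:
    """Return (trainable, total) parameter counts for a model."""
    trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
    total = sum(p.numel() for p in model.parameters())
    return trainable, total


def build_optimizer(model: nn.Module, lr: float = 1e-3) -> torch.optim.Optimizer:
    """Adam over trainable parameters only; lr is an assumed value."""
    params = [p for p in model.parameters() if p.requires_grad]
    return torch.optim.Adam(params, lr=lr)


# Tiny stand-in model (not the article's CNN) to show the behaviour.
toy = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))
for p in toy[0].parameters():
    p.requires_grad = False  # freeze the first layer

trainable, total = count_parameters(toy)
print(trainable, total)  # 8 23
optimizer = build_optimizer(toy)
```

Calling `count_parameters(lora_model)` after the steps above would show how much of the network the adaptation really touches, including the `conv2` weights that `freeze_backbone` leaves trainable.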

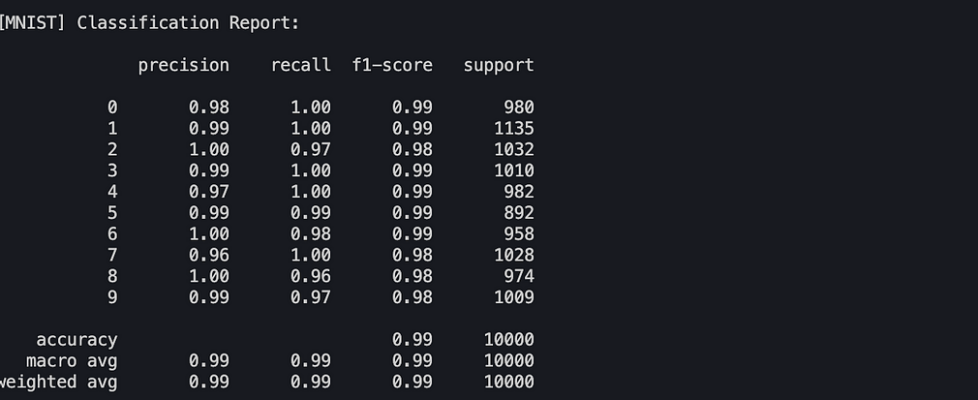

Here’s the classification report for the LoRA model:

Analysis

EMNIST is a much less forgiving dataset than MNIST and that difference shows up pretty quickly. Digits are clean, tightly clustered, and easy to separate. Characters are not. Once uppercase letters, lowercase letters, and digits all start sharing the same label space, visual overlap becomes a much bigger problem and the model starts running into ambiguity almost immediately.

Some classes transfer surprisingly well, especially the ones with distinct shapes, and that is where the LoRA path does manage to carry useful signal forward from the MNIST backbone. At the same time, the limitations show up as soon as the visual overlap between classes increases. Digits get mapped into letters, letters collapse into nearby shapes, and characters that look even slightly ambiguous start confusing the model pretty fast 😭.

Confusion Matrices

The confusion matrix makes this very obvious: the model is not failing randomly, it is failing in consistent and predictable ways where visually similar classes bleed into each other.
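
For reference, a confusion matrix is just a table of counts indexed by (true label, predicted label). Here is a minimal NumPy sketch of that bookkeeping; the function name and the toy labels are mine and are not results from this experiment.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true labels, columns are predicted labels."""
    cm = np.zeros((num_classes, num_classes), dtype=np.int64)
    # np.add.at accumulates correctly even when the same cell repeats
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm


# Toy labels for illustration only, not results from the experiment.
y_true = [0, 1, 1, 2, 2, 2]
y_pred = [0, 1, 2, 2, 2, 1]
cm = confusion_matrix(y_true, y_pred, num_classes=3)
print(cm)
```

Off-diagonal cells are the errors, so a bright cell at row `i`, column `j` means class `i` keeps getting read as class `j`. `sklearn.metrics.confusion_matrix` uses the same rows-true, columns-predicted layout.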

For MNIST

For EMNIST

Misclassification Analysis

That becomes even clearer once you look at the most common misclassifications, because the model is usually not making completely random mistakes and is instead collapsing into visually nearby classes in a fairly repeatable way.
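
A list like that can be pulled straight from the predictions by counting (true, predicted) pairs among the errors. The sketch below uses only the standard library; the function name and the toy labels are hypothetical and are not taken from the experiment.

```python
from collections import Counter


def top_confusions(y_true, y_pred, k=5):
    """Most frequent (true, predicted) pairs among the errors."""
    errors = Counter((t, p) for t, p in zip(y_true, y_pred) if t != p)
    return errors.most_common(k)


# Toy labels for illustration only, not results from the experiment.
y_true = ["O", "O", "l", "l", "l", "l", "S", "7"]
y_pred = ["0", "0", "1", "1", "1", "I", "5", "7"]
print(top_confusions(y_true, y_pred, k=2))
# [(('l', '1'), 3), (('O', '0'), 2)]
```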

For MNIST

For EMNIST

Conclusion 🫠

What this really shows is not that LoRA works especially well outside LLMs, but that the idea does transfer at least a little. The model does adapt beyond digits and picks up enough signal to recognize some characters, so the LoRA branch is clearly doing something useful and the transfer is not completely failing.

That said, the results are still pretty meh overall. The model improves just enough to show the idea has some merit, but not enough to make the adaptation feel strong or reliable. It works, just not well enough here to be convincing.

At least this refreshed my memory on sklearn … it’s been a while 😩 too much GenAI these days. I also tried doing this for a bipedal walker before this … there’s a reason this post is not about that 🫠

Anyhow, if you enjoyed this, feel free to connect with me on LinkedIn or on X (pretty new here) for more such experiments and random ML deep dives. I primarily write on my personal blog so you can read them wherever you prefer. I do have a topic-aware gradient scroll bar there though 🥹

What Happens When You Use LoRA On a CNN was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.