Why a Boeing 737 and an A380 Don’t Feel the Same Turbulence — And What That Means for ML

Predicting the Invisible: An ML Model for the Turbulence That Causes 71% of Weather-Related Flight Accidents

The Problem No One Can See

At 40,000 feet, a plane suddenly drops. Passengers slam into the ceiling. Drinks fly across the cabin. There was no warning; the sky was perfectly clear.

This is the story of how I built a system that predicts this — and why matching meteorology to aircraft aerodynamics was the key insight.

This is Clear Air Turbulence (CAT). Unlike storm-related turbulence, CAT is completely invisible. Pilots can’t see it. Radar can’t detect it. It strikes without warning, and turbulence is responsible for 71% of all weather-related aviation accidents. In the US alone, it costs the industry $100–200 million per year in injuries, diversions, and delays.

And it’s getting worse. Climate change is destabilizing jet streams, making CAT more frequent and more intense every year.

Why I Took This On

In 2024, I was selected for SAYZEK, Turkey’s National Defense AI Program run by the Presidency of Defence Industries. The program pairs university researchers with real defense and aviation challenges. My assignment: environmental situational awareness; specifically, predicting atmospheric hazards that are invisible to conventional systems.

I chose to focus on Clear Air Turbulence because existing approaches had a fundamental gap. Traditional methods treat turbulence prediction as a binary problem: turbulence exists, or it doesn’t. But a Boeing 737 and an Airbus A380 experience the same atmospheric conditions very differently. A pocket of air that barely registers on a wide-body aircraft could throw a regional jet sideways. Nobody was modeling this.

My question was: what if we could predict not just whether turbulence exists, but how severely a specific aircraft type would feel it?

What I Built

I designed a machine learning pipeline that integrates three data sources that had never been combined for this purpose:

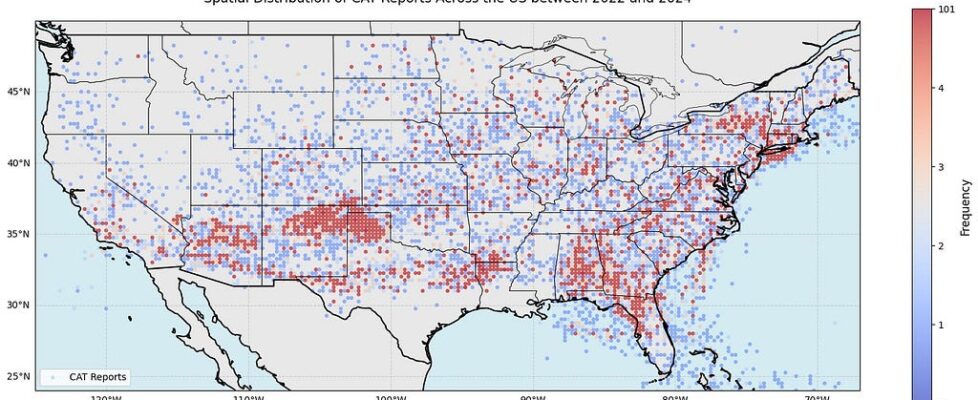

Pilot Reports (PIREPs): 19,213 CAT events reported across US airspace between 2022 and 2024, sourced from the Iowa Environmental Mesonet. Each report includes location, altitude, aircraft type, and perceived turbulence severity.

ERA5 Reanalysis Data: Meteorological variables from ECMWF at 0.25° spatial resolution and 1-hour temporal resolution. I engineered 14 turbulence diagnostic features from this data, including the Richardson number, vertical wind shear, and the TI3 instability index.

BADA Aircraft Database: Aerodynamic parameters from EUROCONTROL for each aircraft type: wing loading, drag coefficients, lift-to-drag ratios, aspect ratios. This was the novel ingredient: matching each pilot report to the specific aerodynamic profile of the aircraft that filed it.
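To make the matching step concrete, here is a minimal sketch, assuming each report carries an ICAO-style aircraft type code. The `AeroProfile` class, the `AERO_TABLE` lookup, the `attach_aero_features` helper, and every number in the table are placeholders for illustration; they are not BADA values and not the project's actual code. Wing loading and aspect ratio follow their standard definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AeroProfile:
    """Hypothetical per-type record; field names are assumptions."""
    mass_kg: float
    wing_area_m2: float
    wingspan_m: float

    @property
    def wing_loading(self) -> float:
        # Mass carried per unit of wing area (kg/m^2).
        return self.mass_kg / self.wing_area_m2

    @property
    def aspect_ratio(self) -> float:
        # Wingspan squared divided by wing area (dimensionless).
        return self.wingspan_m ** 2 / self.wing_area_m2


# Round placeholder numbers for illustration only, NOT taken from BADA.
AERO_TABLE = {
    "B738": AeroProfile(mass_kg=65_000.0, wing_area_m2=125.0, wingspan_m=35.0),
    "A388": AeroProfile(mass_kg=500_000.0, wing_area_m2=845.0, wingspan_m=80.0),
}


def attach_aero_features(report: dict, table: dict = AERO_TABLE) -> Optional[dict]:
    """Return the report extended with aerodynamic features.

    Returns None when the aircraft type is unknown, so the caller can
    decide whether to drop the report or impute values.
    """
    profile = table.get(report["aircraft_type"])
    if profile is None:
        return None
    return {
        **report,
        "wing_loading": profile.wing_loading,
        "aspect_ratio": profile.aspect_ratio,
    }


report = {"aircraft_type": "B738", "lat": 35.1, "lon": -97.4, "severity": "MOD"}
print(attach_aero_features(report))
```

Returning `None` for unknown types keeps the decision about unmatched reports explicit instead of silently filling in defaults.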

The final dataset: 38,426 balanced samples across five pressure levels (200–350 hPa) over US airspace, covering cruise altitudes where commercial aircraft actually fly.

The Hard Parts

Data alignment was the first bottleneck. Pilot reports come with imprecise timestamps and coordinates. ERA5 data sits on a fixed grid. Matching a pilot’s “somewhere over Oklahoma at around 2pm” to exact meteorological conditions at that point in the atmosphere required careful spatiotemporal interpolation across five pressure levels.
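The horizontal and temporal part of that matching can be sketched as follows. This is a simplified illustration rather than the project's pipeline: it assumes the gridded field is available as a callable `field(lat, lon)` on the 0.25° grid, does bilinear interpolation in space and linear interpolation between two hourly slices, and leaves out the vertical interpolation across pressure levels. The function names are hypothetical.

```python
import math

GRID_DEG = 0.25  # ERA5 horizontal spacing quoted in the article


def bilinear(field, lat: float, lon: float, step: float = GRID_DEG) -> float:
    """Interpolate a gridded field to (lat, lon) from its four neighbours.

    `field` is any callable returning the value at a grid node.
    """
    # South-west corner of the grid cell containing the point.
    lat0 = math.floor(lat / step) * step
    lon0 = math.floor(lon / step) * step
    ty = (lat - lat0) / step  # fractional position inside the cell, 0..1
    tx = (lon - lon0) / step
    south = (1 - tx) * field(lat0, lon0) + tx * field(lat0, lon0 + step)
    north = (1 - tx) * field(lat0 + step, lon0) + tx * field(lat0 + step, lon0 + step)
    return (1 - ty) * south + ty * north


def interp_space_time(field_t0, field_t1, lat, lon, minutes_past_hour):
    """Blend the hourly slices before and after the report time."""
    w = minutes_past_hour / 60.0
    return (1 - w) * bilinear(field_t0, lat, lon) + w * bilinear(field_t1, lat, lon)


# Toy fields standing in for two consecutive hourly wind-speed slices.
wind_14z = lambda lat, lon: 40.0 + 0.5 * lat
wind_15z = lambda lat, lon: 44.0 + 0.5 * lat
print(interp_space_time(wind_14z, wind_15z, lat=35.1, lon=-97.4, minutes_past_hour=20))
```

Because pilot-reported times and positions are themselves uncertain, a sketch like this only pins down the interpolation arithmetic; how much smoothing or neighbourhood averaging to add on top is a separate design choice.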

Feature engineering required domain knowledge, not just ML intuition. I couldn’t just throw raw weather variables at a model. I computed 14 physics-based turbulence diagnostics — each grounded in atmospheric science literature — because the relationship between raw variables and turbulence is highly non-linear. A temperature gradient alone means little; the Richardson number (which combines thermal stability with wind shear) tells you whether the atmosphere is about to break apart.
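As an illustration of one such diagnostic, the sketch below computes a bulk Richardson number for the layer between two pressure levels, using the common definition Ri = (g/θ)(∂θ/∂z) / ((∂u/∂z)² + (∂v/∂z)²). The article does not state the exact formulation used in the project, so treat this as one standard variant. The dictionary keys and function names are assumptions, and the sample values are made up.

```python
import math

G = 9.80665      # gravitational acceleration, m/s^2
KAPPA = 0.2857   # R_d / c_p for dry air
P0_HPA = 1000.0  # reference pressure for potential temperature


def potential_temperature(temp_k: float, pressure_hpa: float) -> float:
    """Temperature an air parcel would have if brought to 1000 hPa."""
    return temp_k * (P0_HPA / pressure_hpa) ** KAPPA


def richardson_number(lower: dict, upper: dict) -> float:
    """Bulk Richardson number for the layer between two levels.

    Each level is a dict with p (hPa), t (K), u and v (m/s), z (m).
    """
    dz = upper["z"] - lower["z"]
    theta_lo = potential_temperature(lower["t"], lower["p"])
    theta_hi = potential_temperature(upper["t"], upper["p"])
    theta_mean = 0.5 * (theta_lo + theta_hi)
    # Static stability term (squared buoyancy frequency), s^-2.
    stability = (G / theta_mean) * (theta_hi - theta_lo) / dz
    # Squared vertical wind shear, s^-2.
    shear_sq = ((upper["u"] - lower["u"]) / dz) ** 2 + ((upper["v"] - lower["v"]) / dz) ** 2
    if shear_sq == 0.0:
        # No shear: Ri is unbounded; keep the sign of the stability term.
        return math.copysign(math.inf, stability)
    return stability / shear_sq


# Made-up sample levels, not project data.
level_300 = {"p": 300.0, "t": 228.0, "u": 30.0, "v": 0.0, "z": 9160.0}
level_250 = {"p": 250.0, "t": 220.0, "u": 45.0, "v": 5.0, "z": 10360.0}
print(richardson_number(level_300, level_250))
```

Small values of Ri mean shear is winning over stability; values below about 0.25 are the classical threshold associated with shear instability.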

The aerodynamic integration was an open question. No one had published results on whether aircraft-specific features actually improve CAT prediction. I ran six parallel experiment scenarios — with and without aerodynamic features, across three turbulence severity groupings — to rigorously test whether this approach added real value or just noise.
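The six-scenario design is a small full-factorial grid: two feature sets crossed with three severity groupings. A sketch of how such a grid could be enumerated is below. The grouping labels are hypothetical, since the article does not name them, and `build_scenarios` is an illustrative helper rather than the project's code.

```python
from itertools import product

FEATURE_SETS = ("meteorology_only", "meteorology_plus_aero")
# Hypothetical labels: the article does not name its three severity groupings.
SEVERITY_GROUPINGS = ("grouping_1", "grouping_2", "grouping_3")


def build_scenarios():
    """Enumerate every feature-set / severity-grouping combination."""
    return [
        {"features": features, "severity_grouping": grouping}
        for features, grouping in product(FEATURE_SETS, SEVERITY_GROUPINGS)
    ]


for scenario in build_scenarios():
    print(scenario)
```

Keeping every other setting fixed across the paired scenarios is what lets a difference in scores be attributed to the aerodynamic features.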

Results

I benchmarked five algorithms: XGBoost, LightGBM, Random Forest, AdaBoost, and Logistic Regression.

XGBoost with aerodynamic features achieved the best performance.

The key finding: adding aircraft aerodynamics improved the probability of detection (POD) for dangerous turbulence from 84.5% to 86.6%, a statistically significant gain confirmed by 5-fold cross-validation (σ_POD: 0.013, well below the 2.1-percentage-point improvement). This means fewer missed severe turbulence events, which directly translates to fewer injuries.
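For readers unfamiliar with the metric, probability of detection (POD) is hits divided by hits plus misses, and the false alarm ratio is false alarms divided by hits plus false alarms. The sketch below computes both and summarizes per-fold scores. It is illustrative only: the function names are mine, the labels are toy values, and using the sample standard deviation across folds is an assumption about how a spread like σ_POD could be obtained.

```python
from statistics import mean, stdev


def pod(y_true, y_pred) -> float:
    """Probability of detection: hits / (hits + misses).

    Assumes y_true contains at least one positive (1) label.
    """
    hits = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    misses = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return hits / (hits + misses)


def far(y_true, y_pred) -> float:
    """False alarm ratio: false alarms / (hits + false alarms).

    Assumes at least one positive prediction.
    """
    hits = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    false_alarms = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return false_alarms / (hits + false_alarms)


def summarize(fold_scores):
    """Mean and sample standard deviation of a per-fold metric."""
    return mean(fold_scores), stdev(fold_scores)


# Toy labels for illustration, not project data.
y_true = [1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0]
print(pod(y_true, y_pred), far(y_true, y_pred))
```

Reporting both metrics together matters because a model can raise POD simply by predicting turbulence more often, which shows up as a higher false alarm ratio.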

Geographic coordinates emerged as the most important features (17.5% combined importance), revealing that CAT is deeply tied to regional topography and jet stream pathways. The TI3 atmospheric instability index ranked third, and its spatial distribution strongly overlapped with actual CAT hotspots across the US — validating the physical intuition behind the model.

Winter months showed 3.5× higher CAT frequency than summer, with evening hours (15:00–21:00 UTC) being the most dangerous window.

Recognition

This work won first place in the Environmental Situational Awareness category at the SAYZEK National Defense AI Program. I presented the findings at ASELSAN — one of Turkey’s largest defense technology companies — to an audience of defense industry professionals and researchers.

What This Taught Me

This project shaped how I think about ML engineering. Three lessons stayed with me:

Domain knowledge isn’t optional. The aerodynamic feature idea didn’t come from a hyperparameter search — it came from understanding that turbulence is perceived differently by different aircraft. The best ML engineers are the ones who understand the problem domain deeply enough to ask questions that pure data scientists wouldn’t think to ask.

The gap between “model works” and “system works” is enormous. Aligning three heterogeneous data sources, handling missing values in pilot reports, and computing physics-based features at scale: these unglamorous tasks took more time than model training and had more impact on final performance.

Negative results have value. Aerodynamic features contributed only ~3% feature importance individually. In some scenarios, they slightly increased false alarm rates. Being honest about limitations — rather than overselling — is what makes research credible and systems trustworthy.

Recognition: 1st Place — SAYZEK National Defense AI Program, Environmental Situational Awareness Category

If you’re working on similar problems — aviation ML, weather prediction, or integrating physical systems with data-driven models — I’d love to connect.

📫 kago4022@colorado.edu 💼 LinkedIn 🔗 GitHub Repository

Currently pursuing an MS in AI at CU Boulder. Open to ML engineering roles — remote or relocation to US/EU.

Originally published in Towards AI on Medium.