Compounding Knowledge With LLMs. Karpathy’s Wiki Pattern in Action

Andrej Karpathy recently published a GitHub Gist that quietly named something every AI practitioner has felt but not quite articulated.

When Andrej Karpathy’s Gist dropped, it spread fast, and for good reason. Here is what the Gist says, why it matters, and what it looks like when you build it.

Andrej Karpathy, co-founder of OpenAI and former Director of AI at Tesla, just thinks this way naturally. Where most people see a tool, he sees a pattern. Where most people write a tutorial, he writes something that changes how you think. He took a problem practitioners feel every day and distilled it into a single markdown file. No framework, no product, no pitch. Just the idea, stated with enough clarity that anyone can pick it up and run. The file is called llm-wiki.md. It has since crossed 5,000 stars and 4,000+ forks!

I recently published a small agent that, instead of forgetting, accumulates memory across interactions. If you work in this space, Karpathy’s Gist is one of the clearest and most actionable ideas to emerge this year, and it is a must-read.

What follows is an explanation of the idea, grounded in the Gist itself, and a working implementation so you can see it run.

First, Let’s Actually Explain the Limitation of RAG

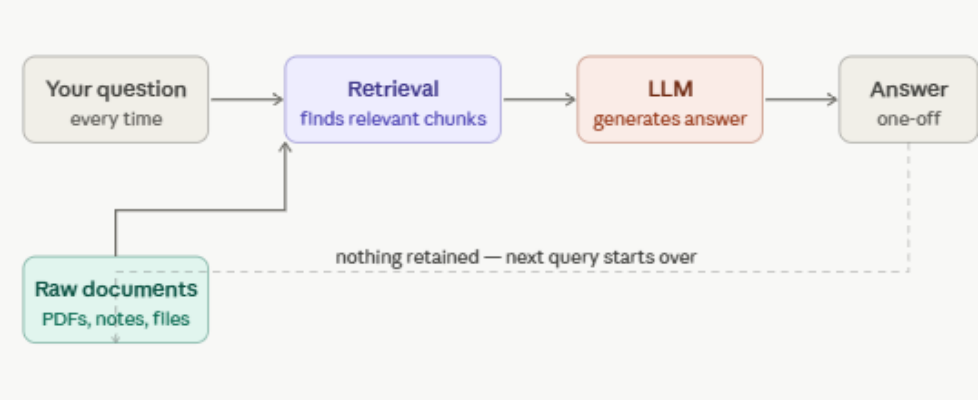

RAG, Retrieval-Augmented Generation, is the backbone of almost every AI document tool you’ve used. The basic idea sounds sensible. Instead of expecting the AI to memorize your files, you store them separately. When you ask a question, the system searches those files for the most relevant passages, hands those passages to the AI as context, and the AI writes you an answer from them.

Picture a brilliant research assistant who reads everything you hand them, gives you sharp answers on the spot, and then wakes up the next morning with no memory of any of it. Every session starts fresh. They don’t remember the contradiction they spotted between two papers last Tuesday. They don’t remember the framework they helped you build last month. You hand them the same documents again, they read them again, they figure things out again. From scratch. Every time.

Think of it like this. You have a massive filing cabinet full of documents. RAG is a very fast intern who, every time you have a question, runs to the cabinet, pulls out what seem like the most relevant pages, and reads those pages to you as an answer. Works well for simple questions. “What does page 12 of this contract say?” Great. Intern fetches it. Done.

But now imagine your question is more nuanced. “How has our pricing strategy evolved over the past year, and does it contradict what we told investors in Q1?” That intern has to run to the cabinet again. Pull pages from six different folders. Try to piece together a timeline on the spot. And here’s the thing. Tomorrow, if you ask a follow-up, they have to do the entire run again. They never wrote anything down. Nothing was synthesized and saved. No picture built up.

Karpathy puts the structural flaw plainly in the Gist: the LLM is rediscovering knowledge from scratch on every question. There is no accumulation.

“Ask a subtle question that requires synthesizing five documents, and the LLM has to find and piece together the relevant fragments every time. Nothing is built up.”

This is not a hallucination problem. It is not a context-length problem. It is a design choice, and that design choice has a ceiling. The intern who never writes anything down is useful for lookups. They cannot help you build understanding over time.

The Proposal: Stop Querying Raw Files. Build a Wiki Instead.

Karpathy’s alternative is this. Before you ever ask a question, have the LLM read your sources and build something permanent from them. Not an index for later retrieval but an actual structured knowledge base. A wiki, maintained entirely by the LLM, that gets smarter with every document you add.

When a new source arrives, the agent does not just file it away. It reads it, pulls out the key ideas, and weaves them into the wiki. It updates existing pages where the new source adds nuance, flags places where new information contradicts something already written, creates new concept pages where a topic deserves its own entry, and strengthens links between related ideas. A single new document, Karpathy notes, might touch ten to fifteen existing pages.

The result is that when you ask a question, you are querying a pre-built synthesis rather than raw documents. The cross-references are already there. The contradictions have already been surfaced. The knowledge has been compiled once and stays compiled, growing richer with every addition.

Karpathy describes his own working setup in the Gist. LLM agent on one side of the screen, Obsidian open on the other, watching pages update live as he and the agent discuss a new source. The framing he offers is precise:

Obsidian is the IDE. The LLM is the programmer. The wiki is the codebase.

You decide what to read and what questions to ask. The LLM handles the summarizing, cross-referencing, and filing, all the maintenance work that makes a knowledge base genuinely useful. You rarely write a single wiki page yourself.

Three Layers, Cleanly Separated

The Gist lays out a structure with three distinct layers: the raw sources, the wiki the LLM maintains, and the schema that tells the agent how to maintain it. Karpathy is deliberate that this is a pattern, not a rigid spec. The exact folder names, file formats, and tooling are things you work out with your agent based on your domain. Everything is optional and modular; pick what’s useful.

Three Core Operations

Day-to-day the wiki runs on three operations, and they are worth understanding because together they are what separates this from a static document store.

Ingest is when you drop a new source and the agent reads it, pulls out key ideas, updates relevant pages across the wiki, and logs the addition.

Query is where the compounding pays off; answers get synthesized from pre-built knowledge rather than raw files, and good answers get filed back into the wiki so your thinking accumulates alongside the sources. If you want to get a feel for this retrieve-reason-store loop before building a full wiki, starting with a minimal agent that incrementally stores its own insights is a reasonable first step.

Lint is the health check you run periodically, asking the agent to surface contradictions between pages, stale claims, orphaned entries, and gaps worth filling. It also tends to suggest what you should read next.

Index and Log: The Two Files That Make It Scale

Two files sit at the centre of the whole system.

The index file is a structured map of everything in the wiki, covering page titles, summaries, and what lives where, so the agent can navigate without reading every page in full on every query. Think of it as the wiki’s table of contents, maintained by the LLM as new pages are added.

The log file is a chronological record of what was ingested, when, and what changed as a result. It gives the agent provenance, an understanding of when each piece of knowledge entered the system, which matters when newer sources contradict older ones.

Without these two files, scaling past a few dozen sources would mean the agent has to hold the entire wiki in memory every time it does anything. With them, the agent can navigate selectively, and the wiki can grow to the scale Karpathy describes, around 100 sources and hundreds of pages, without becoming unwieldy.
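To make the selective navigation concrete, here is a minimal sketch of an index-first lookup in Python. It assumes the index is a file named INDEX.md at the root of the wiki folder and that the agent, after seeing the index, replies with a list of relative page paths. `read_index` and `read_pages` are hypothetical helper names, not something the Gist defines.

```python
from pathlib import Path


def read_index(wiki_dir: Path) -> str:
    """Return the index text, or an empty string if no index exists yet."""
    index_path = wiki_dir / "INDEX.md"  # assumed file name and location
    return index_path.read_text(encoding="utf-8") if index_path.is_file() else ""


def read_pages(wiki_dir: Path, rel_paths: list[str]) -> str:
    """Load only the pages the agent asked for, skipping paths that do not exist."""
    parts = []
    for rel in rel_paths:
        page = wiki_dir / rel
        if page.is_file():
            parts.append(f"=== {rel} ===\n{page.read_text(encoding='utf-8')}")
    return "\n\n".join(parts)
```

The idea is a two-step exchange: show the agent only the index, let it name the pages it needs, then load just those pages as context for the real question.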

Where It Actually Fits

The Gist lists these use cases directly. Not as illustrations of what’s theoretically possible, but as the actual domains where compounding knowledge beats repeated retrieval.

The Most Powerful Version Is the One You Build for Yourself

The Gist opens with this. “This is an idea file, it is designed to be copy pasted to your own LLM Agent.” That framing is intentional. There is no prescribed directory structure, no required toolset, no mandatory schema format. The document describes a pattern and your agent helps you instantiate it for your domain.

Sources text-only? Skip image handling. Wiki small enough that you can browse it manually? Skip the index. Don’t need slide output? Skip Marp. The Gist even says this explicitly: everything is optional and modular. Karpathy’s point is to communicate the shape of the idea clearly enough that your agent can figure out the rest.

“The right way to use this is to share it with your LLM agent and work together to instantiate a version that fits your needs”

I Tried It. Here Is What Actually Happened.

When I read Karpathy’s Gist, the idea clicked immediately. Having previously built a domain expert agent with memory, reasoning, and incremental learning, I could see exactly what this pattern was reaching for and wanted to bring it to life. So I built a small working implementation as a Jupyter notebook using GPT-4o, with book notes as the domain. Here is every step, in order, with the actual outputs.

The use case. Book notes are a natural fit for this pattern. Most readers collect highlights, summaries, and chapter notes across many books over time, but that knowledge sits in scattered files and never connects. You cannot easily ask what two books say about the same idea, or track how a concept evolves across authors. The LLM wiki changes that. Each note you paste in gets integrated into a growing knowledge base where books cross-link to shared concepts, contradictions get flagged, and any question you ask is answered from a synthesis that compounds with every addition. Two books were used here: Kahneman’s Thinking Fast and Slow and Eric Ries’s The Lean Startup. Deliberately different domains, to see whether the agent would identify a shared concept across them unprompted.

Before you begin, create a “llm-wiki-booknotes” folder and copy the llm_wiki_booknotes.ipynb file into it. The walkthrough below covers each step, and as you progress you will see the directory structure and files being created inside this folder.

Step 1. Set up the folder structure. The three-layer architecture from the Gist translates directly into two folders. A raw/ folder stores every note you provide, untouched and never modified by the LLM. A wiki/ folder is where the LLM writes and maintains all its pages. Karpathy’s Gist leaves the internal structure of the wiki to you. For this book notes implementation, two subfolders made sense: books/ for one page per book, and concepts/ for one page per key idea that appears across books. Two special files that the Gist explicitly calls out live at the root: INDEX.md as the master table of contents, and LOG.md as a timestamped record of every ingestion. The notebook confirmed this on startup:
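The startup output is not reproduced in this text. As a minimal sketch of the setup step, the layout described above could be created like this. The function name `setup_wiki`, the placeholder headings, and the choice to put INDEX.md and LOG.md at the root of wiki/ are assumptions for illustration, not the notebook's exact code.

```python
from pathlib import Path


def setup_wiki(root: Path) -> dict[str, Path]:
    """Create raw/ and wiki/ (with books/ and concepts/) plus INDEX.md and LOG.md."""
    raw = root / "raw"
    wiki = root / "wiki"
    for folder in (raw, wiki / "books", wiki / "concepts"):
        folder.mkdir(parents=True, exist_ok=True)

    # Seed the two special files only if they are missing, so reruns are safe.
    for name, heading in (("INDEX.md", "# Index\n"), ("LOG.md", "# Log\n")):
        path = wiki / name
        if not path.exists():
            path.write_text(heading, encoding="utf-8")

    return {"raw": raw, "wiki": wiki}
```

Calling `setup_wiki(Path("llm-wiki-booknotes"))` a second time leaves existing files untouched, which matters once the agent has started writing to the index and log.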

Step 2. Write the schema. The schema is the single most important piece of the whole system. It is a plain text string passed as the system prompt on every API call. This is what Karpathy means by AGENTS.md. Without it, you have a generic chatbot. With it, you have a disciplined wiki maintainer that knows exactly what to do with every new source. The schema here is split into four logical parts.

First, the identity. One sentence tells the LLM what it is and what its one constraint is:

Second, the folder structure. The LLM needs to know what lives where so it writes files to the right place every time:

Third, the ingest workflow. Six numbered rules tell the agent exactly what to do each time a new note arrives. This is why a single note touches four files in the ingest run in Step 4. The rules mandate it:

Fourth, the query and lint rules. Two short blocks define how the agent handles the other two operations:
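The schema text itself is not reproduced here. To give a feel for its shape, the following is an illustrative schema with the same four parts. The wording of every rule is an assumption reconstructed from the behaviour described in this walkthrough, not the notebook's actual string.

```python
# Illustrative schema only: the four parts mirror the description above,
# but the exact wording is hypothetical.
SCHEMA = """\
You are the maintainer of a book-notes wiki. Never modify anything under raw/.

FOLDERS
- wiki/books/: one page per book
- wiki/concepts/: one page per key idea
- wiki/INDEX.md: table of contents; wiki/LOG.md: ingestion log

INGEST
1. Create or update the book page for the note.
2. Create or update a concept page for each key idea.
3. Cross-link book pages and concept pages.
4. Flag contradictions with existing pages.
5. Update INDEX.md with a one-line summary per page.
6. Append a timestamped entry to LOG.md.

QUERY
Answer only from wiki pages, cite the pages used, and say whether the answer deserves its own page.

LINT
Report contradictions, orphan pages, concepts lacking a page, and topics worth reading next.
"""
```

Swapping domains means rewriting this string (for example, papers/ and methods/ instead of books/ and concepts/) while the surrounding code stays the same.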

Step 3. Wire up the helper functions. Four small functions do all the plumbing between the schema, the LLM, and the files on disk. Each has a single job and together they are all the infrastructure the system needs.

read_wiki() walks the wiki directory, reads every markdown file, and bundles them into one string. This is what gets passed to the LLM as context on every call. At this scale, with only a handful of pages, reading everything works fine. Karpathy’s Gist notes that the index file exists precisely so the agent can navigate selectively at larger scale without reading every page in full. For a production wiki growing into dozens of pages, you would pass the index first and fetch specific pages on demand.
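A minimal sketch of what such a read_wiki() helper might look like is shown below. The `=== path ===` delimiter and the placeholder returned for an empty wiki are assumptions made for illustration.

```python
from pathlib import Path


def read_wiki(wiki_dir: Path) -> str:
    """Concatenate every markdown file under wiki_dir into one context string."""
    parts = []
    # Sorting keeps the order stable between calls, which keeps prompts reproducible.
    for path in sorted(wiki_dir.rglob("*.md")):
        rel = path.relative_to(wiki_dir).as_posix()
        parts.append(f"=== {rel} ===\n{path.read_text(encoding='utf-8')}")
    return "\n\n".join(parts) if parts else "(wiki is empty)"
```

Labelling each page with its relative path gives the model the exact paths it needs when it later returns updated files or cites sources.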

write_wiki_updates() takes the dictionary of file paths and content that the LLM returns, creates any missing subfolders, and writes each file to disk. This is how the LLM’s response becomes actual markdown files you can open and read:
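As a sketch under stated assumptions, the write step could look like the following. The dictionary keys are assumed to be paths relative to the wiki folder, and the path-escape guard is an addition of this sketch rather than something the article describes.

```python
from pathlib import Path


def write_wiki_updates(wiki_dir: Path, updates: dict[str, str]) -> list[str]:
    """Write each {relative_path: markdown} pair under wiki_dir; return written paths."""
    root = wiki_dir.resolve()
    written = []
    for rel_path, content in updates.items():
        target = (root / rel_path).resolve()
        # Guard (added in this sketch): refuse paths that escape the wiki folder,
        # since the paths come from model output.
        if not target.is_relative_to(root):
            raise ValueError(f"refusing to write outside the wiki: {rel_path}")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        written.append(rel_path)
    return written
```

The guard matters because raw/ is meant to stay immutable; without it, a response containing a path such as ../raw/note.md could overwrite a source file.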

call_llm() makes the API call. The schema is always the system prompt and the user message carries the current wiki content plus whatever the operation needs. Temperature is set to 0.3 so the agent behaves consistently rather than inventively:
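A hedged sketch of such a call is below. It assumes the `openai` Python package (version 1 or later) and an OPENAI_API_KEY environment variable; the `build_messages` helper, the `client` parameter, and the "CURRENT WIKI / TASK" layout of the user message are illustrative choices, not the notebook's exact code.

```python
def build_messages(schema: str, wiki_text: str, task: str) -> list[dict]:
    """Schema goes in the system prompt; wiki state plus the task go in the user message."""
    return [
        {"role": "system", "content": schema},
        {"role": "user", "content": f"CURRENT WIKI:\n{wiki_text}\n\nTASK:\n{task}"},
    ]


def call_llm(schema: str, wiki_text: str, task: str, client=None, model: str = "gpt-4o") -> str:
    """Send one request and return the reply text."""
    if client is None:
        # Imported lazily so the helper can be exercised with a stand-in client.
        from openai import OpenAI

        client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,
        temperature=0.3,  # low temperature for consistent, less inventive edits
        messages=build_messages(schema, wiki_text, task),
    )
    return response.choices[0].message.content
```

Accepting an optional client makes it possible to try the plumbing with a fake object before spending any API calls.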

Step 4. Ingest the first note. The ingest function is where the three-layer architecture comes to life. It has four distinct sub-steps internally, each doing one thing. Here is how it breaks down.

First, the raw note is saved to disk with a timestamp. This happens before anything touches the LLM. The file is written once and never modified again. This is the immutable raw source layer:
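One way this sub-step might be written is sketched below. The file-name format (timestamp plus a slug of the title) and the function name `save_raw_note` are assumptions for illustration.

```python
import re
from datetime import datetime
from pathlib import Path


def save_raw_note(raw_dir: Path, title: str, note: str) -> Path:
    """Save the note once under raw/ with a timestamped file name."""
    raw_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d_%H%M%S")
    slug = re.sub(r"[^A-Za-z0-9]+", "_", title).strip("_") or "note"
    path = raw_dir / f"{stamp}_{slug}.md"
    # Mode "x" raises FileExistsError instead of overwriting, so an existing
    # raw note is never modified (two saves of the same title within one
    # second would therefore fail rather than clobber each other).
    with open(path, "x", encoding="utf-8") as handle:
        handle.write(note)
    return path
```

Opening the file in exclusive-create mode is a small way of enforcing the "written once, never modified" rule in code rather than by convention.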

Second, the prompt is assembled. The current state of the entire wiki is read using read_wiki() and placed alongside the new note. The LLM sees both what it already knows and what just arrived. It is also told to respond only as a JSON object with file paths and content, nothing else. This structured response format is what makes the output reliably parseable:
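A minimal sketch of this assembly step follows. The section labels and the exact JSON shape (a `files` mapping plus a one-sentence `summary`) are assumptions chosen to match the behaviour described in this walkthrough; the notebook's own wording may differ.

```python
def build_ingest_prompt(wiki_text: str, note: str) -> str:
    """Place the current wiki next to the new note and pin down the reply format."""
    return (
        f"CURRENT WIKI:\n{wiki_text}\n\n"
        f"NEW NOTE:\n{note}\n\n"
        "Follow the ingest rules. Respond ONLY with a JSON object of the form "
        '{"files": {"<path relative to wiki/>": "<full markdown content>"}, '
        '"summary": "<one sentence on what changed>"} and nothing else.'
    )
```

Asking for full file contents rather than diffs keeps the write step trivial: each returned path simply replaces the file on disk.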

Third, the LLM is called and its response is parsed. Models sometimes wrap JSON in markdown fences, so those are stripped before parsing. The result is a clean Python dictionary of file paths and their content:
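The fence-stripping step can be sketched as follows. This is one simple approach, assuming the reply is either bare JSON or JSON wrapped in a single fenced block; `parse_llm_json` is a hypothetical name.

```python
import json

FENCE = "`" * 3  # three backticks, the markdown code-fence marker


def parse_llm_json(text: str) -> dict:
    """Strip an optional markdown fence around the reply, then parse it as JSON."""
    cleaned = text.strip()
    if cleaned.startswith(FENCE):
        # Drop the opening fence line, including any language tag such as "json".
        cleaned = cleaned.split("\n", 1)[1] if "\n" in cleaned else ""
        cleaned = cleaned.rstrip()
        if cleaned.endswith(FENCE):
            cleaned = cleaned[: -len(FENCE)]
    data = json.loads(cleaned)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object mapping file paths to content")
    return data
```

If the model returns prose around the JSON, `json.loads` raises `json.JSONDecodeError`, which is a reasonable point to retry the call rather than write anything to disk.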

Fourth, the pages are written to disk. write_wiki_updates() takes the dictionary, creates any missing subfolders, and writes each file. The summary line tells you in one sentence what changed:

The note passed in was a short passage on Kahneman’s System 1 and System 2.

One note, four files written:

Notice that nobody told the agent to create a concept page for System 1 and System 2. It inferred from the schema rules that this was a key idea worth its own dedicated page, and created a single combined entry for both concepts together. The raw note is unchanged on disk. Everything in the wiki folder was written by the LLM.

Step 5. Ingest the second note. A passage from Eric Ries’s The Lean Startup went in next.

Because the wiki already had one book and one concept page, the agent now had existing content to consider. It created the new book page, and on its own initiative created two separate concept pages, one for the Build-Measure-Learn Loop and one for the Minimum Viable Product, judging these distinct enough to warrant their own entries rather than bundling them together. It also updated the index and log:

At this point the wiki holds two book pages, three concept pages, a linked index, and a log with two timestamped entries. None of it was written by hand.

Step 6. Run a cross-book query. This is where the difference from RAG becomes visible.

The question asked was: what do both books say about the role of intuition in decision making? A RAG system would go back to the raw notes, retrieve fragments, and piece together an answer from scratch. Here the agent navigated the pre-built wiki pages, already synthesized and cross-linked, and returned an answer with citations to the specific pages it drew from:

───────────────────────────────────────────────

Both “The Lean Startup” and “Thinking Fast and Slow” address the role of intuition in decision making, but they approach it from different angles. In “The Lean Startup,” intuition is seen as potentially problematic when it replaces validated data in decision making. Eric Ries argues that decisions made on intuition rather than validated data can be detrimental to startups, as they might lead to building products that nobody wants (source: [The Lean Startup](books/The_Lean_Startup.md)).

On the other hand, “Thinking Fast and Slow” discusses intuition as part of System 1, which is fast and automatic. Daniel Kahneman notes that while System 1 is efficient for routine decisions, it can lead to errors in judgment when it overrides situations that require the more analytical System 2 (source: [Thinking Fast and Slow](books/Thinking_Fast_and_Slow.md) and [System 1 and System 2](concepts/System_1_and_System_2.md)).

Both books highlight the limitations of relying solely on intuition, emphasizing the need for data validation and analytical thinking in decision making.

This comparison of intuition in decision making across both books would be valuable as a wiki page, as it synthesizes insights from multiple sources and highlights a common theme.

───────────────────────────────────────────────

Two things are worth noticing. The answer names its sources precisely, citing which wiki files it drew from rather than guessing. And at the end, it suggests filing this answer back into the wiki as a new page. That is the compounding loop Karpathy describes: a good query result does not disappear into chat history; it becomes a wiki page that the next query can build on.

A second query was run to show that single-topic questions work just as well. Rather than a cross-book synthesis, this one goes deep on a single concept page that the agent built during ingestion:
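The query cell is not reproduced in this text. As a rough sketch, a query prompt along the following lines would encourage that behaviour. `build_query_prompt` and the instruction wording are illustrative assumptions rather than the notebook's actual code.

```python
def build_query_prompt(wiki_text: str, question: str) -> str:
    """Ask for an answer grounded in wiki pages, with citations and a filing suggestion."""
    return (
        f"CURRENT WIKI:\n{wiki_text}\n\n"
        f"QUESTION:\n{question}\n\n"
        "Answer using only the wiki pages above. Cite each page you draw on as a "
        "markdown link to its path, and end by saying whether this answer would "
        "be valuable as a new wiki page."
    )
```

Note that the prompt contains only wiki pages, never the raw notes, which is what distinguishes this from retrieving fragments of the original sources.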

The agent went directly to the System 1 and System 2 concept page it had already written, and synthesized the answer from there. No re-reading of the original raw note, no guessing:

───────────────────────────────────────────────

System 2 thinking is slow, deliberate, and analytical. It is responsible for complex reasoning and is supposed to engage in situations that require careful thought. However, System 2 is described as lazy by nature and often defers back to System 1, which is fast and intuitive. Most errors in judgment occur when System 1 overrides a situation that actually needed the more analytical approach of System 2. This information is drawn from the [System 1 and System 2](concepts/System_1_and_System_2.md) page.

This answer would be valuable as a wiki page, specifically focusing on the failures and limitations of System 2 thinking, as it adds depth to the existing concept of System 1 and System 2.

───────────────────────────────────────────────

Again, the agent suggested saving this answer as its own page. That recommendation is not accidental. It is in the schema. Every query is an opportunity to grow the wiki, not just answer a question.

Step 7. Run the lint check. Lint is where we ask the agent to read the entire wiki and audit it for problems. The function is simple. It passes the full wiki to the LLM with four explicit questions:
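The lint cell is not reproduced here. A minimal sketch, with the four questions inferred from the headings of the lint report in this article, might look like this. The names `LINT_QUESTIONS` and `build_lint_prompt` and the exact phrasing are assumptions.

```python
# The four audit questions, phrased after the headings of the lint report.
LINT_QUESTIONS = (
    "Are there contradictions between pages?",
    "Which pages are orphans, with no inbound links?",
    "Which concepts are mentioned but lack their own page?",
    "Which topics are worth reading next?",
)


def build_lint_prompt(wiki_text: str) -> str:
    """Pass the full wiki to the model along with the numbered audit questions."""
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(LINT_QUESTIONS, start=1))
    return (
        f"CURRENT WIKI:\n{wiki_text}\n\n"
        "Audit the wiki and write a lint report answering each question in order:\n"
        f"{numbered}"
    )
```

Keeping the questions in a tuple makes it easy to add domain-specific checks, such as stale claims, without touching the rest of the function.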

On a two-book wiki, the agent found no contradictions and no orphan pages, which is expected at this scale. What it did surface were two genuine gaps worth acting on:

───────────────────────────────────────────────

**Lint Report:**

1. **Contradictions Between Pages:** No contradictions found between the existing pages.

2. **Orphan Pages:** All pages have inbound links, so there are no orphan pages.

3. **Concepts Mentioned but Lacking Their Own Page:** No concepts mentioned in the current content lack their own page.

4. **Topics Worth Reading Next:**

- **Validated Learning:** This concept is mentioned in the context of the Minimum Viable Product but does not have its own page. Exploring this could provide deeper insights into the learning process within startups.

- **Decision-Making Processes:** Given the focus on decision-making in both “The Lean Startup” and “Thinking Fast and Slow,” a page exploring different decision-making frameworks could be beneficial.

───────────────────────────────────────────────

Validated Learning is referenced inside the MVP page but has no page of its own. The agent caught that without being told to look for it specifically. Feed lint twenty sources and the contradictions, orphaned entries, and gap suggestions become the most valuable output in the whole system. The agent knows the shape of the wiki better than you do after a certain point.

The full notebook is available to run yourself. The only thing that changes between domains is the schema string. The folder structure, the three operations, and the index and log files stay exactly the same whether you are building a book wiki, a research wiki, or an internal team knowledge base.

Step 8. Inspect the wiki on disk. The final step is simply reading back what the agent built. browse_wiki() walks every markdown file in the wiki directory and prints it. This is the moment where the whole pattern becomes tangible and you see actual structured pages that nobody wrote by hand:

The above is just a snapshot of the output. The full output is lengthy and best read directly in the notebook. Refer to the Inspect the Wiki cell in the notebook for the complete output.
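For reference, a browse_wiki() helper of the kind described could be as small as the sketch below. The banner format is an assumption; only the behaviour (walk every markdown file and print it) comes from the description above.

```python
from pathlib import Path


def browse_wiki(wiki_dir: Path) -> None:
    """Print every markdown page under wiki_dir, each preceded by its relative path."""
    for path in sorted(wiki_dir.rglob("*.md")):
        print("=" * 60)
        print(path.relative_to(wiki_dir).as_posix())
        print("=" * 60)
        print(path.read_text(encoding="utf-8"))
        print()
```

Because the wiki is plain markdown on disk, the same pages can also be opened directly in an editor such as Obsidian instead of being printed.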

The output shows exactly what the agent produced across two ingestions: a book page for The Lean Startup with author, summary, key ideas, and a notable quote; a book page for Thinking Fast and Slow in the same structure; three concept pages for System 1 and System 2, Build-Measure-Learn Loop, and Minimum Viable Product, each with cross-links back to the book pages that introduced them; an INDEX.md listing every page with a one-line summary; and a LOG.md with two timestamped entries recording when each book was ingested.

That is a functioning personal knowledge base built from two short notes, two ingest calls, and a schema under 25 lines. Every page is cross-linked. The index is navigable. The log is timestamped. None of it required a single line of manual writing.

Eight steps, a few dozen lines of Python, and the core of what Karpathy described is running. What makes his idea genuinely impressive is not its technical complexity. It is the opposite. He took a problem that every knowledge worker feels and solved it with the simplest possible structure: plain markdown, a system prompt, and three operations. No framework, no vendor, no lock-in. Just a pattern clear enough that anyone can pick it up and make it their own. That clarity is rarer than it looks, and it is what separates ideas that inspire from ideas that actually get built.

The Simplest Ideas Tend to Travel the Furthest

Because it names something real. AI that never retains what it figured out is not a bug people file tickets about. It is a slow friction, the kind you adapt around without realising you have lowered your expectations. Karpathy gives it a shape precise enough to act on.

RAG and the LLM wiki are not competing for the same job. RAG is right for lookups, when the answer lives clearly in a document and you need it fast. The LLM wiki is right for accumulation, when you are spending weeks or months inside a domain, when your team needs shared context that nobody wants to manually maintain, when the value is in the synthesis that builds up across every source you add.

Karpathy closes the Gist with a note that puts the idea in longer perspective. He connects it to Vannevar Bush’s Memex, a 1945 vision of a personal knowledge store where the connections between documents were as valuable as the documents themselves. Bush imagined private, actively curated knowledge with associative trails linking ideas across sources. The part he could not solve was who does the maintenance. Eighty years later, that is the part the LLM handles.

Knowing which situation you are in is most of the work. The Gist gives you a clean way to think about when retrieval is enough and when accumulation is what you actually need.

Read the original: Andrej Karpathy, llm-wiki.md, GitHub Gist, April 4, 2026.

gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

It is a short file, and the density of useful ideas per page is very high.

The link to the notebook in this blog can be accessed here.

Compounding Knowledge With LLMs. Karpathy’s Wiki Pattern in Action was originally published in Towards AI on Medium.