Created a dataset system for training real LLM behaviors (not just prompts)

Most LLM dataset discussions still revolve around size, coverage, or “high-quality text,” but in practice the real failure modes show up later, when you actually plug models into workflows. Things like:

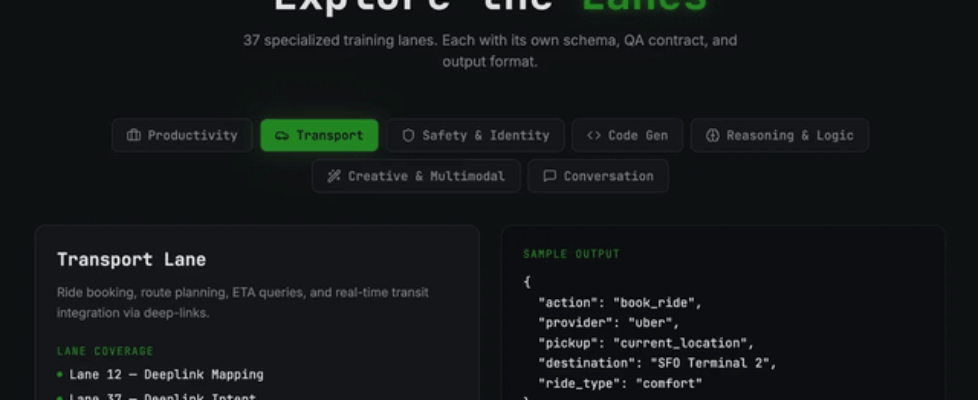

We ran into this repeatedly while building LLM systems, and it became pretty clear that the issue wasn’t just model capability. It was how the data was structured. That’s what led us to build Dino.

Dino is a dataset system designed around training specific LLM behaviors, not just feeding in more text. Instead of one big dataset, it’s broken into modular “lanes” that each target a capability like:

The idea is to train these behaviors in isolation and then combine them, so the model actually holds up in real-world, multi-step pipelines. It’s also built to support multi-domain and multilingual data, and it focuses on real-world ingestion scenarios rather than static prompt-response pairs.

If you want to take a look: http://dinodsai.com

submitted by /u/JayPatel24_