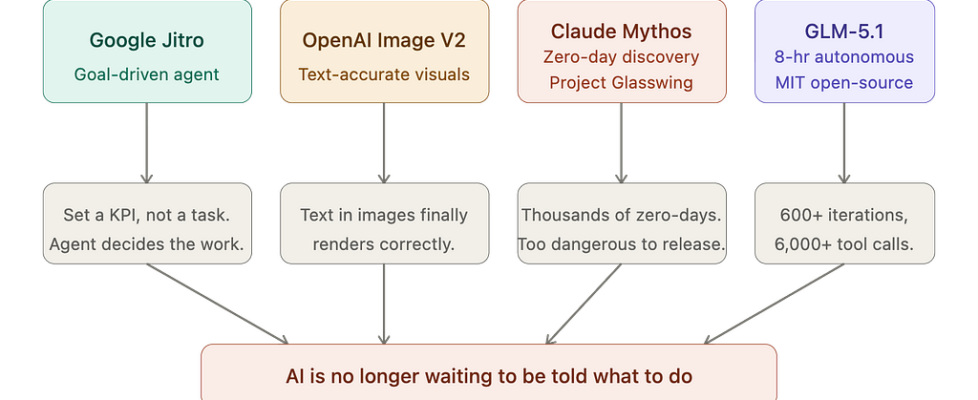

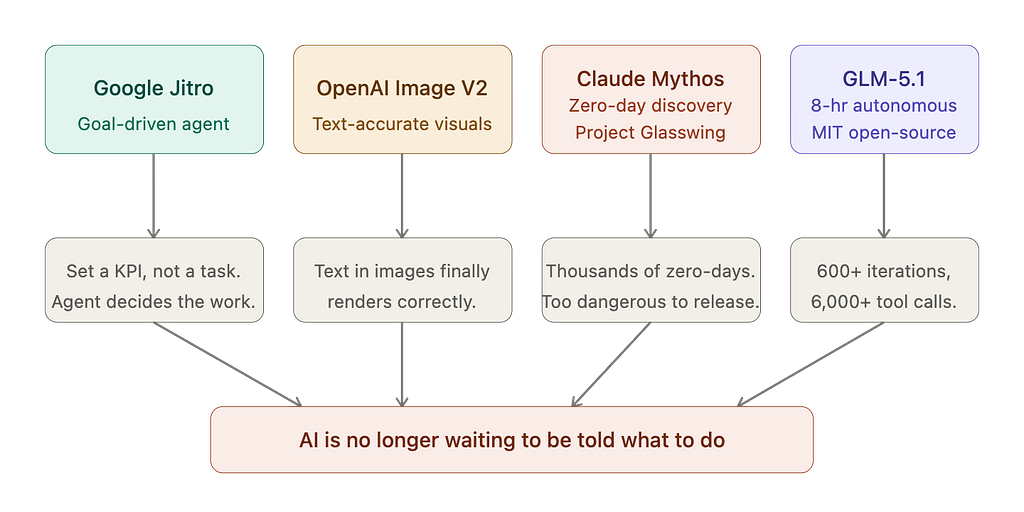

AI Stopped Asking for Instructions This Week

Google wants to give it a KPI. Anthropic built one too dangerous to ship. Z.ai ran one for eight hours straight. OpenAI fixed the one thing that’s embarrassed image generation for years.

Something shifted this week. Not in one lab — in all of them simultaneously.

The story isn’t any single release. The story is the direction every release points: AI that defines its own work, runs without supervision, finds problems you didn’t know existed, and improves long after you’ve stopped watching. Four different companies. Four different bets. All landing in the same week, all pointing at the same thing.

Here’s what actually happened.

Google Jitro: from task-runner to outcome-chaser

The way coding agents work today is fundamentally a manual loop. You spot a problem, describe it, the model executes, and you review. Repeat. You’re still the one identifying the work.

Google is building something that skips that first step.

Jules V2 — internally codenamed Jitro — appears designed around high-level goal-setting. Think KPI-driven development, where the agent autonomously identifies what needs to change in a codebase to move a metric in the right direction.

Instead of “fix this function,” you say “reduce error rate,” and the agent figures out where the errors are, what’s causing them, and what to change.

A dedicated workspace for the agent suggests Google envisions Jitro as a persistent collaborator rather than a one-shot tool — a workspace where developers can list goals, track insights, and configure tool integrations, a layer of continuity that current coding agents don’t offer.

The timing is deliberate. Google I/O 2026 kicks off May 19, and this is exactly the kind of showcase-ready feature Google would want to unveil alongside its broader Gemini ecosystem updates.

But there’s a harder problem underneath the impressive framing. When you give an agent a goal instead of a task, you’re also giving it the authority to decide what work exists. When agents pursue outcomes autonomously across production codebases, understanding what the agent was optimizing for, the reasoning it applied, and the constraints it evaluated becomes the foundation for trust. That’s not a UX problem — it’s an observability and governance problem that the industry doesn’t have clean answers to yet.

The question Jitro forces: how much do you trust an agent’s judgment about what to change in a codebase you’re responsible for? GitHub Copilot completes your sentences. Jitro would write the chapter.

OpenAI Image V2: fixing the embarrassing thing

There’s a problem that has followed AI image generation since the beginning. You ask for a button that says “Submit.” You get a button that says “Sbmuit.” Or “Sbumit.” Or a convincing rectangle with smudged glyphs where letters used to be.

It’s been the most reliable way to identify AI-generated images: look at the text.

OpenAI appears to be quietly testing Image V2, a next-generation image generation model that surfaced on LM Arena under the codenames maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. Early impressions suggest it represents a meaningful step forward — testers highlight its ability to render realistic UI interfaces with correctly spelled button text, along with strong prompt adherence and compositional understanding.

GPT Image 1 had real problems: inconsistent text, hands that still broke in complex scenes. The early Image V2 examples suggest that most of those problems have been solved.

This matters more than the benchmark numbers suggest. Fix text rendering, and you unlock the actual business use cases: UI mockups, product screenshots, marketing visuals with real copy, design prototypes that don’t need a designer to manually repair the labels. These are the things people stopped trying to do with image models because the output kept breaking in the same predictable way.

The competitive context:

Google’s Nano Banana Pro has held the top spot on the LM Arena leaderboard for months, and OpenAI has been under pressure since late 2025, with Sam Altman describing the situation as a “code red” internally. Image V2 is the direct answer.

No official release date. But OpenAI used the same Arena-based blind testing approach in December 2025 when it previewed models before releasing GPT Image 1.5 just weeks later. The playbook is familiar. The launch is probably close.

Claude Mythos: the model Anthropic won’t release

Anthropic shipped its most capable model this week. They also announced they will not be making it generally available.

That’s not hedging. That’s the actual headline.

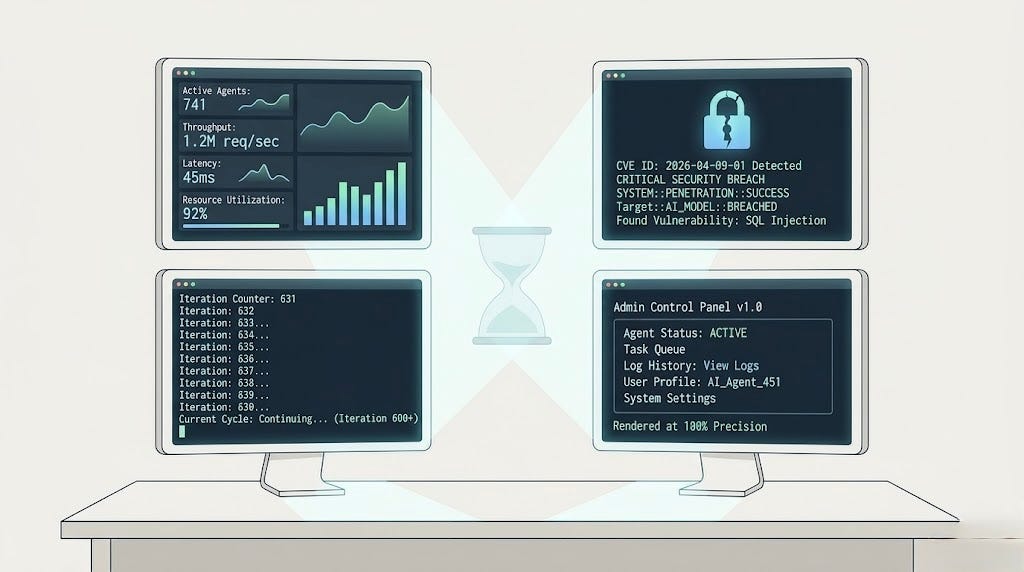

Over the past few weeks, Anthropic used Claude Mythos Preview to identify thousands of zero-day vulnerabilities — flaws previously unknown to software developers — in every major operating system and every major web browser, along with a range of other important pieces of software.

Mythos Preview fully autonomously identified and then exploited a 17-year-old remote code execution vulnerability in FreeBSD that allows anyone to gain root on a machine running NFS — classified as CVE-2026–4747. When Anthropic says “fully autonomously,” they mean no human was involved in either the discovery or exploitation of this vulnerability after the initial request to find the bug.

Read that again.

No human in the loop. Discovery and exploitation, start to finish, by the model.

The decision to restrict access is an important signal here. Anthropic said it did not explicitly train Mythos Preview to have these capabilities. Rather, they emerged as a downstream consequence of general improvements in code, reasoning, and autonomy. The same improvements that make the model substantially more effective at patching vulnerabilities also make it substantially more effective at exploiting them.

That’s the core tension in frontier AI in 2026, stated plainly: the capability you want for defense is the same capability you’re afraid to release. There’s no version of “good at finding vulnerabilities” that isn’t also “good at being a weapon.”

Anthropic’s response is Project Glasswing.

The launch partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. Anthropic is committing up to $100 million in usage credits for Mythos Preview across these efforts, along with $4 million in direct donations to open-source security organizations.

The theory:

Get defenders access to the model before the same capability proliferates to actors who won’t use it defensively.

It’s the right call. It’s also an acknowledgment that the window for that advantage is shrinking.

The 17-year-old FreeBSD bug that Mythos found was not discovered by any human in nearly two decades of trying. It took an AI model a few hours. Multiply that by every major operating system, every browser, and every piece of critical infrastructure — and you start to understand why the announcement is structured the way it is.

GLM-5.1: the marathon runner with open weights

Every other release this week was from a large, well-resourced Western lab. GLM-5.1 from Z.ai is from a Chinese company that listed on the Hong Kong Stock Exchange in January 2026 — and it just took the top spot on SWE-Bench Pro.

In 2023, open source was two years behind the frontier. In 2024, one year. In 2025, six months. On April 7, 2026, an open-source model claimed the top score on one of the most respected coding benchmarks in AI — beating both GPT-5.4 and Claude Opus 4.6.

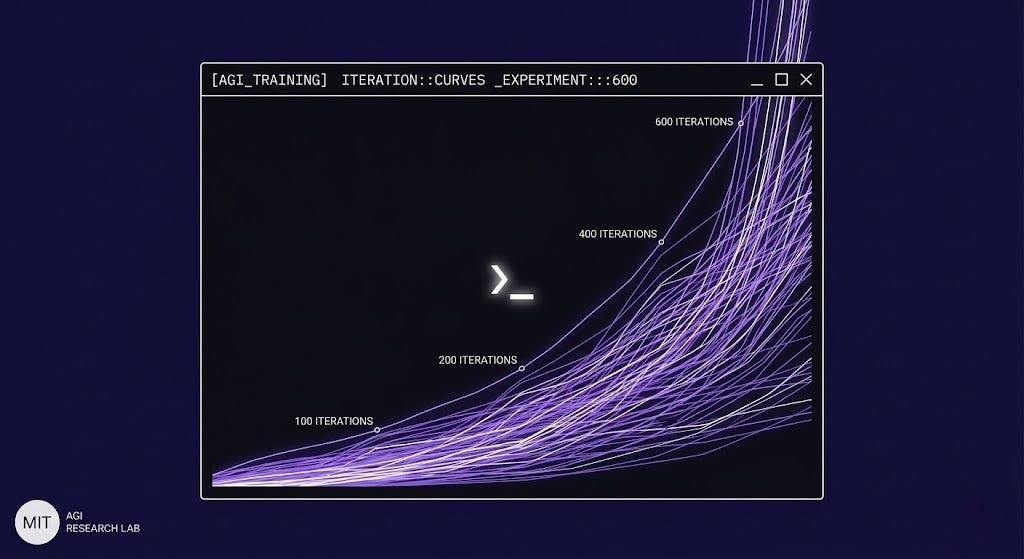

But the benchmark isn’t the real story. The real story is what GLM-5.1 does when you let it run.

In one demonstration, GLM-5.1 improved a vector database optimization task over more than 600 iterations and 6,000 tool calls, reaching 21,500 queries per second — about six times the best result achieved in a single 50-turn session. It didn’t plateau. It kept making structural changes: switching indexing strategies, introducing vector compression, and redesigning parallelism. It analyzed its own benchmark logs, identified what was slowing it down, and changed its approach. Repeatedly.

GLM-5.1 is a 754-billion-parameter Mixture-of-Experts model engineered to maintain goal alignment over extended execution traces that span thousands of tool calls. Z.ai’s leader noted on X:

Agents could do about 20 steps by the end of last year. GLM-5.1 can do 1,700 now.

That’s the number worth holding onto. Not 58.4 on SWE-Bench. 1,700 autonomous steps before the model loses the thread. That’s an 85x improvement in effective agentic capacity over a single year.

And it’s MIT licensed. Weights are available on Hugging Face. You can download, inspect, modify, and commercially use the model without restrictions. For any company with data governance requirements — finance, healthcare, defense — that changes the calculus on what’s deployable.

The throughline

Four releases. Four different categories. One pattern.

Google is building an agent that sets its own goals. Anthropic built one that finds vulnerabilities nobody knew existed — and decided it’s too capable to ship publicly. Z.ai built one that works for eight hours without stopping and gave away the weights. OpenAI fixed the thing that’s been embarrassing image generation for years.

None of these are chatbots getting smarter. They’re systems getting more autonomous, more persistent, and more capable of operating without the human in the loop.

That creates a real question the industry is going to have to answer more explicitly: as AI systems get better at defining their own work, what’s the appropriate scope of authority? Jitro is pursuing a KPI across a production codebase. Mythos chaining vulnerabilities into an exploit. GLM-5.1 running 1,700 steps on a task you handed it six hours ago.

These aren’t hypotheticals anymore. They’re this week.

The design question used to be: “What should AI do when I ask it to?” The question is now:

What should AI do when nobody’s asking?

We don’t have a consensus answer. We probably should.

AI Stopped Asking for Instructions This Week was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.