The L1 Loss Gradient, Explained From Scratch

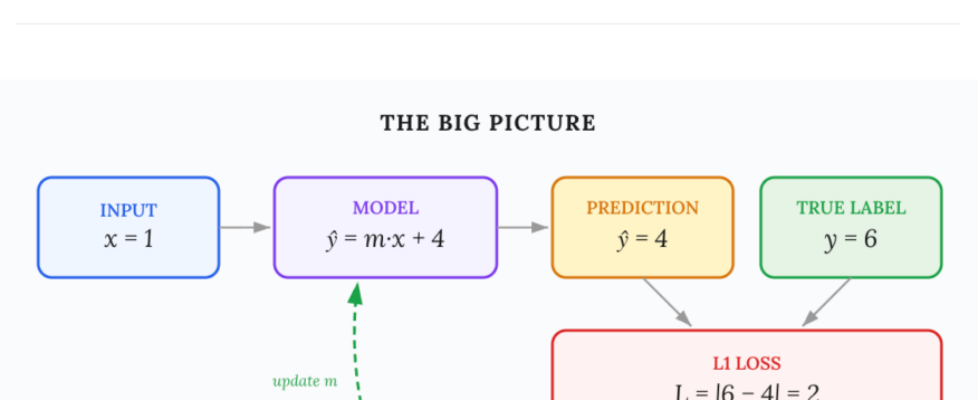

Author(s): Utkarsh Mittal

Originally published on Towards AI.

A complete, step-by-step walkthrough of how gradient descent works with absolute-value loss, with diagrams you can actually follow.

If you’ve ever read a deep learning tutorial and hit a derivative that seems to appear from nowhere, this article is for you. We’re going to break down one of the simplest, yet most instructive, gradient calculations in machine learning: the gradient of L1 (absolute-value) loss with respect to a single weight. The walkthrough is built around one concrete numerical example.

The article starts from a simple regression model, describes its components and the loss function, and then derives the gradient of the loss with respect to a weight. It builds the derivation step by step with the chain rule, using concrete numbers throughout. It closes by contrasting the two losses: the L1 gradient has the same magnitude no matter how large the error is, which makes L1 loss less sensitive to outliers, while the L2 gradient grows in proportion to the error. That contrast guides the choice of loss function.
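The chain-rule derivation and the L1-versus-L2 contrast described above can be sketched in a few lines of Python. This is a minimal illustration, not the article's own code: it assumes a one-feature linear model `y_hat = w * x + b`, and the function names and every numeric value are hypothetical choices made for the example. With residual `r = y_hat - y`, the chain rule gives `dL/dw = sign(r) * x` for L1 loss and `dL/dw = 2 * r * x` for squared (L2) loss.

```python
def l1_loss(w, b, x, y):
    """Absolute-value loss for the assumed model y_hat = w * x + b."""
    return abs(w * x + b - y)


def sign(r):
    """Return -1, 0, or 1. Using 0 at r == 0 is a common subgradient convention."""
    return (r > 0) - (r < 0)


def l1_grad_w(w, b, x, y):
    """Chain rule: dL/dw = dL/dr * dr/dw = sign(r) * x, with r = w * x + b - y."""
    r = w * x + b - y
    return sign(r) * x


def l2_grad_w(w, b, x, y):
    """Squared-error counterpart: dL/dw = 2 * r * x."""
    r = w * x + b - y
    return 2 * r * x


# Hypothetical values, chosen only for illustration.
w, b, x = 0.5, 0.0, 2.0
y_typical, y_outlier = 2.0, 101.0  # residuals of -1 and -100

# The L1 gradient ignores the size of the error; the L2 gradient scales with it.
print("L1:", l1_grad_w(w, b, x, y_typical), l1_grad_w(w, b, x, y_outlier))
print("L2:", l2_grad_w(w, b, x, y_typical), l2_grad_w(w, b, x, y_outlier))

# Sanity check of the analytic L1 gradient with a central finite difference.
eps = 1e-6
numeric = (l1_loss(w + eps, b, x, y_typical) - l1_loss(w - eps, b, x, y_typical)) / (2 * eps)
print("finite difference:", numeric)
```

Under these assumed values, the L1 gradient is the same for the typical target and the outlier, while the L2 gradient is a hundred times larger for the outlier. The finite-difference check is only valid away from `r = 0`, where the absolute value is not differentiable.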