Why System Behaviour Must Be Designed, Not Improvised

The case for decision-driven architecture in an age of feature-first thinking and ungoverned AI

By Muhammad Ejaz Ameer, Product & Decision Architecture Lead

There is a moment in the life of almost every digital product when the team realises something uncomfortable: the system does not actually know what it is supposed to do.

Not because the engineers were careless. Not because the product managers were absent. But because somewhere between the roadmap and the release, nobody formally decided how the system should behave — only what features it should have.

This distinction sounds subtle. It is not. It is the difference between a product that operates consistently at scale and one that generates a permanent backlog of edge cases, manual interventions, and operational debt that no sprint ever quite clears.

This article makes the case for a different approach: decision-driven architecture — designing system behaviour deliberately, before features are built, so that the product operates through logic rather than through people.

The Feature-First Trap

Feature-first design is intuitive. A user need is identified. A feature is scoped. The feature is built. The cycle repeats.

The problem is not that this process is wrong. It is that it is incomplete. Features describe what a system can do. They do not describe how the system should behave when two features interact, when a workflow reaches an ambiguous state, when a user takes an action the team did not anticipate, or when an external service returns an unexpected result.

In feature-first teams, these gaps are filled informally — through Slack messages, manual overrides, support tickets escalated to developers, and judgement calls made by whoever is available. The system does not decide; a person decides, every time. This is not a process. It is organised improvisation.

Over time, organised improvisation becomes operational debt. The manual decisions that were supposed to be temporary become load-bearing. The overrides that were supposed to be exceptions become standard practice. The system grows in capability but not in coherence.

The team is not failing. The architecture is.

What Decision-Driven Architecture Actually Means

Decision-driven architecture starts from a different premise: that the system’s behaviour under real operational conditions must be designed explicitly, not discovered gradually.

In practice, this means making deliberate decisions about:

State — what are the valid states a workflow, record, or entity can exist in? How does it transition between them? What is explicitly not permitted?

Rules — what conditions must be true for an action to proceed? Who has authority to override, and under what circumstances? What happens when a condition is not met?

Routing — when a process branches, what determines which path is taken? Is that decision made by the system or delegated to a human? If delegated, what happens if no human acts?

Edge cases by design — what does the system do when inputs are incomplete, ambiguous, or outside expected parameters? This is not a QA question. It is an architecture question.

Ownership — when a decision must be made, which part of the system is responsible for making it? Ambiguous ownership is the root cause of most manual intervention.

These are not engineering concerns alone. They are product concerns, because they determine how the product actually behaves for real users in real conditions — and that is fundamentally a product responsibility.

The role that sits at this intersection — between product thinking and system design — is what I call product and decision architecture. It is not traditional software engineering. It is not product management in the conventional sense. It is the discipline of ensuring that product intent is encoded into system behaviour, not left to informal execution.
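To make the rules and routing questions above concrete, here is a minimal sketch in Python. It is illustrative only: the refund scenario, the names `RefundRequest` and `decide`, and the thresholds are assumptions of mine, not taken from any real system. The point is that every path, including "no human acted", ends in a named outcome.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Outcome(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"
    PENDING_REVIEW = "pending_review"
    ESCALATED = "escalated"


@dataclass(frozen=True)
class RefundRequest:
    amount: float
    within_refund_window: bool
    requested_at: datetime


# Hypothetical thresholds: the real values are product decisions.
AUTO_APPROVE_LIMIT = 50.0
REVIEW_DEADLINE = timedelta(hours=24)


def decide(
    request: RefundRequest,
    now: datetime,
    reviewer_approved: Optional[bool] = None,
) -> Outcome:
    # Rule: a condition that must hold before anything else proceeds.
    if not request.within_refund_window:
        return Outcome.REJECTED
    # Routing: small refunds are decided by the system.
    if request.amount <= AUTO_APPROVE_LIMIT:
        return Outcome.APPROVED
    # Larger refunds are delegated to a human reviewer.
    if reviewer_approved is not None:
        return Outcome.APPROVED if reviewer_approved else Outcome.REJECTED
    # Nobody has acted: the outcome is still defined, not left open.
    if now - request.requested_at > REVIEW_DEADLINE:
        return Outcome.ESCALATED
    return Outcome.PENDING_REVIEW
```

Whether an overdue review should escalate, auto-approve, or auto-reject is exactly the kind of decision that belongs in the design rather than in a Slack thread. The sketch only shows that the choice can be written down.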

Why Product Logic Must Live in the System, Not in People

Consider what happens when product logic lives in people rather than systems.

A new team member joins. They are not yet familiar with the unwritten rules. They make decisions that are technically permitted by the software but operationally incorrect. Errors propagate before anyone catches them.

A senior person is unavailable. A decision that requires their judgement is delayed. A workflow stalls. A customer is affected. The senior person returns, fixes it manually, and the team moves on — having learned nothing systematically.

The product scales. The volume of decisions increases beyond what any individual or small team can consistently manage. Quality becomes a function of individual attention rather than systemic design. The product does not degrade because people stop caring; it degrades because the architecture was never designed to carry the operational load.

When product logic lives in the system, none of this happens. State transitions are enforced automatically. Routing decisions are made by rules, not by whoever is available. Edge cases produce defined outcomes, not undefined escalations. The system is not dependent on specific individuals maintaining specific knowledge.

This is not about removing human judgement from products. Humans should make judgement calls on genuinely novel situations. The goal is to ensure that routine, repeatable decisions — which constitute the vast majority of operational activity — are made by the system, consistently, without requiring human intervention every time.

The result is a product that is operationally consistent at any scale, not just when the right people are watching.

Workflow Governance: States, Transitions, and Rules

The practical implementation of decision-driven architecture begins with workflow governance — the formal design of how workflows move through a system.

Most digital products have workflows. An order is placed, processed, fulfilled, and completed. A booking is requested, approved, confirmed, and delivered. An application is submitted, reviewed, decided, and communicated. These are sequences of states connected by transitions.

In feature-first design, these workflows are implemented functionally — the transitions happen, but the rules governing them are often implicit, scattered across the codebase, or enforced only at the UI layer. A determined or confused user can sometimes reach states the team never intended.

In decision-driven architecture, workflows are modelled explicitly before implementation. Every state is named and defined. Every transition has explicit preconditions. Every actor — user, system, external service — has defined permissions for what they can and cannot trigger. The model is the specification; the implementation follows from it.
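As a rough illustration of what "the model is the specification" can look like, the sketch below encodes a booking workflow as a transition table. The states follow the booking example above; the actors, the fields `slot_available` and `payment_received`, and the preconditions are hypothetical choices made for the example.

```python
from dataclasses import dataclass, replace
from enum import Enum
from typing import Callable, Dict, FrozenSet, Tuple


class State(Enum):
    REQUESTED = "requested"
    APPROVED = "approved"
    CONFIRMED = "confirmed"
    DELIVERED = "delivered"


class Actor(Enum):
    USER = "user"
    SYSTEM = "system"
    EXTERNAL_SERVICE = "external_service"


@dataclass(frozen=True)
class Booking:
    state: State
    slot_available: bool = True
    payment_received: bool = False


@dataclass(frozen=True)
class Transition:
    allowed_actors: FrozenSet[Actor]
    precondition: Callable[[Booking], bool]


class TransitionRejected(Exception):
    """Raised when the model does not permit a requested transition."""


# The model: any transition not listed here is explicitly not permitted.
TRANSITIONS: Dict[Tuple[State, State], Transition] = {
    (State.REQUESTED, State.APPROVED): Transition(
        frozenset({Actor.SYSTEM}), lambda b: b.slot_available
    ),
    (State.APPROVED, State.CONFIRMED): Transition(
        frozenset({Actor.SYSTEM, Actor.EXTERNAL_SERVICE}),
        lambda b: b.payment_received,
    ),
    (State.CONFIRMED, State.DELIVERED): Transition(
        frozenset({Actor.SYSTEM}), lambda b: True
    ),
}


def transition(booking: Booking, target: State, actor: Actor) -> Booking:
    """Return the booking in its new state, or raise if the model forbids it."""
    rule = TRANSITIONS.get((booking.state, target))
    if rule is None:
        raise TransitionRejected(
            f"{booking.state.value} -> {target.value} is not defined"
        )
    if actor not in rule.allowed_actors:
        raise TransitionRejected(f"{actor.value} may not trigger {target.value}")
    if not rule.precondition(booking):
        raise TransitionRejected(f"precondition for {target.value} is not met")
    return replace(booking, state=target)
```

Because every state change goes through `transition`, the rules cannot be bypassed from the UI layer, and a jump such as requested to delivered fails loudly instead of producing a state the team never intended.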

This has a practical consequence that teams often underestimate: it forces decisions to be made early that would otherwise be deferred until they become problems. When you model a workflow explicitly, you discover immediately that you have not decided what happens when a payment is partially completed, or when two users attempt to modify the same record simultaneously, or when an external API returns a timeout rather than a failure. These are not edge cases. They are operational realities that every production system will encounter.

Discovering them in the model costs little more than a design conversation. Discovering them in production costs significantly more.
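The partial payment and timeout cases can be modelled directly. The sketch below is a hypothetical illustration: `settle`, the exception names, and the stub gateways are assumptions, not a real payment provider's API. What it shows is that a timeout gets its own state instead of being folded into failure.

```python
from enum import Enum
from typing import Callable


class PaymentState(Enum):
    PAID = "paid"
    PARTIALLY_PAID = "partially_paid"
    FAILED = "failed"
    AWAITING_RECONCILIATION = "awaiting_reconciliation"


class GatewayDeclined(Exception):
    """The provider answered and refused the charge."""


class GatewayTimeout(Exception):
    """The provider did not answer; the charge may or may not have gone through."""


def settle(amount_due: int, charge: Callable[[int], int]) -> PaymentState:
    """Map each outcome of a charge attempt to a named state.

    Amounts are integers in minor units (for example pence). `charge`
    returns the amount actually captured.
    """
    try:
        captured = charge(amount_due)
    except GatewayDeclined:
        return PaymentState.FAILED
    except GatewayTimeout:
        # Unknown is not the same as failed: a blind retry could double charge.
        return PaymentState.AWAITING_RECONCILIATION
    if captured >= amount_due:
        return PaymentState.PAID
    if captured > 0:
        return PaymentState.PARTIALLY_PAID
    return PaymentState.FAILED


# Stub gateways standing in for a real provider client.
def captures_half(amount: int) -> int:
    return amount // 2


def times_out(amount: int) -> int:
    raise GatewayTimeout()


print(settle(1000, captures_half).value)  # partially_paid
print(settle(1000, times_out).value)      # awaiting_reconciliation
```

What should happen to an order in `awaiting_reconciliation` is a product decision. The model simply forces someone to make it before the first timeout occurs in production.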

The AI Governance Problem

The emergence of AI-generated outputs inside digital products has made decision-driven architecture more important, not less.

The current pattern in many product teams is to treat AI as a feature — a capability that generates outputs and surfaces them to users. The output is variable, the behaviour is probabilistic, and the integration is often shallow: AI generates something, it is displayed, the user acts on it.

This pattern is operationally unsafe at scale.

AI outputs are not deterministic. They vary across inputs, across time, and across contexts. When AI outputs feed directly into operational workflows without structured governance, the system behaviour becomes partially unpredictable. The product may perform well on average while failing inconsistently at the edges — exactly where production systems are most vulnerable.

The correct architectural response is not to avoid AI integration. It is to govern AI outputs through defined system states rather than allowing them to propagate freely.

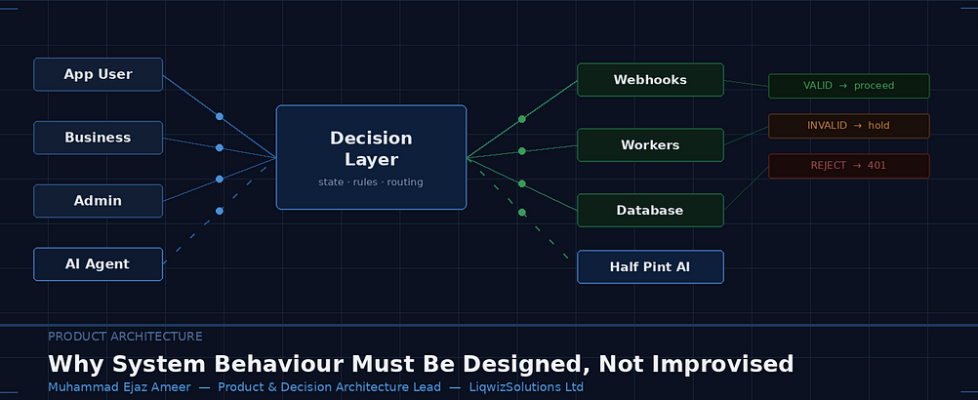

In practice, this means treating AI-generated outputs as inputs to a decision layer, not as decisions themselves. The AI generates a recommendation, a classification, a draft, or an action proposal. The system then evaluates that output against defined rules, validates it against current system state, and either proceeds, flags for review, or rejects — based on logic that has been explicitly designed.

This approach has a specific structural consequence: an AI output cannot trigger a system action unless it passes rules that have been explicitly designed. The system does not trust the AI output blindly. It processes it through the same governance layer that governs all other inputs.
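A decision layer of this kind can be sketched in a few lines. The example below is a generic illustration, not the implementation of any particular product: the action names, the `confidence` field, the `ALWAYS_REVIEW` set, and the 0.8 threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, FrozenSet


class Verdict(Enum):
    PROCEED = "proceed"
    REVIEW = "flag_for_review"
    REJECT = "reject"


@dataclass(frozen=True)
class AIProposal:
    action: str        # what the model proposes to do
    confidence: float  # model-reported score in [0, 1]; an assumption here


# Hypothetical rule set: the workflow states each action is valid from.
ACTION_VALID_FROM: Dict[str, FrozenSet[str]] = {
    "send_reminder": frozenset({"confirmed"}),
    "cancel_booking": frozenset({"requested", "approved"}),
}
# Actions that always need a human, however confident the model is.
ALWAYS_REVIEW: FrozenSet[str] = frozenset({"cancel_booking"})
MIN_CONFIDENCE = 0.8


def evaluate(proposal: AIProposal, workflow_state: str) -> Verdict:
    """Treat the AI output as an input and judge it against designed rules."""
    valid_from = ACTION_VALID_FROM.get(proposal.action)
    if valid_from is None:
        return Verdict.REJECT  # an action the system does not define
    if workflow_state not in valid_from:
        return Verdict.REJECT  # defined, but not valid from the current state
    if proposal.action in ALWAYS_REVIEW:
        return Verdict.REVIEW
    if proposal.confidence < MIN_CONFIDENCE:
        return Verdict.REVIEW
    return Verdict.PROCEED
```

For instance, `evaluate(AIProposal("send_reminder", 0.95), "confirmed")` proceeds, while the same proposal against a booking in the `requested` state is rejected. The AI can propose anything, but only proposals that fit the current state and rules reach the operational system.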

I encountered this design challenge directly while working with BeerGram, a digital platform for the hospitality sector, on the architectural integration of their AI feature, Half Pint AI. The initial approach treated Half Pint AI outputs as direct operational instructions — the AI generated suggestions and the system acted on them. The architectural intervention was to insert a state validation layer between the AI and the operational system. Half Pint AI outputs were evaluated against current workflow state, rule constraints, and permission boundaries before any system action was taken. Outputs that passed validation proceeded. Outputs that did not were held for human review rather than applied or discarded silently.

The result was not a less capable AI integration. It was a production-safe AI integration — one where BeerGram’s operational consistency was maintained regardless of the variability of Half Pint AI outputs. The AI became a governed input to the system rather than an ungoverned instruction source.

What This Looks Like in Practice

Decision-driven architecture is not a methodology with a fixed process. It is a discipline that manifests differently depending on the product context. But there are consistent characteristics in how it is applied.

It starts before implementation. The time to design system behaviour is before the system is built, not after the first production incident. This requires product and architecture stakeholders to work together at the design stage on questions that are often deferred to engineering: What are the states? What are the rules? What happens at the edges?

It produces artefacts that are not just technical. Decision models, state diagrams, transition rules, and permission matrices are architecture outputs. They are also product documentation. They should be legible to non-engineers because the decisions they encode are product decisions, not only engineering decisions.

It actively reduces operational surface area. A well-designed decision architecture does not just make the system behave correctly — it reduces the number of situations that require human intervention. Every manual override that is eliminated by a well-defined rule is a reduction in operational risk and maintenance cost.

It is the foundation for scale. A product with implicit, person-dependent operational logic cannot scale consistently. A product with explicit, system-encoded decision logic can — because the logic does not degrade as volume increases.
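On the point about artefacts being legible to non-engineers: one option is to keep the transition rules as plain data and render them as a table that a product stakeholder can review. The sketch below assumes a hypothetical application workflow; the rule contents and the `render_table` helper are illustrative only.

```python
from typing import List, NamedTuple


class Rule(NamedTuple):
    from_state: str
    to_state: str
    actor: str
    condition: str


# Hypothetical rules for an application workflow.
RULES: List[Rule] = [
    Rule("submitted", "under_review", "system", "all required fields present"),
    Rule("under_review", "decided", "reviewer", "review checklist complete"),
    Rule("decided", "communicated", "system", "decision letter generated"),
]


def render_table(rules: List[Rule]) -> str:
    """Render the rules as a plain-text table with aligned columns."""
    headers = ("From", "To", "Who", "Only when")
    rows = [headers] + [tuple(r) for r in rules]
    widths = [max(len(row[i]) for row in rows) for i in range(len(headers))]
    lines = [
        " | ".join(cell.ljust(widths[i]) for i, cell in enumerate(row)).rstrip()
        for row in rows
    ]
    # Separator line between the header and the rules.
    lines.insert(1, "-+-".join("-" * w for w in widths))
    return "\n".join(lines)


print(render_table(RULES))
```

If the same `RULES` data also drives enforcement in the system, the document a product owner reads and the behaviour the system exhibits cannot drift apart.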

The Emerging Discipline

Product and decision architecture is not yet a widely recognised title or discipline. Most organisations have product managers and engineers, and the space between them — where product intent becomes system behaviour — is occupied informally, if at all.

This is a gap that matters more as digital products become more complex, more automated, and more reliant on AI-generated outputs. The question of how a system behaves is not a question that can be answered by features alone, or by engineering alone, or by product management alone. It requires a discipline that holds all three of these concerns simultaneously and makes explicit decisions at their intersection.

The organisations that build this capability will have products that are operationally consistent, scalable without proportional increases in operational cost, and safe to extend with AI without introducing unpredictable system behaviour.

The organisations that do not will continue filling the gap with improvisation, which works until the product reaches the scale at which it stops working.

Muhammad Ejaz Ameer is a Product & Decision Architecture Lead working at the intersection of product logic, system behaviour design, and workflow governance. He is the founder of LiqwizSolutions Ltd and works with digital platforms to ensure that product intent is encoded into system behaviour rather than left to informal execution.

Why System Behaviour Must Be Designed, Not Improvised was originally published in Towards AI on Medium.