Kimi K2.5: How Moonshot AI’s 100-Agent Swarm Is Redefining Work with Multimodal Intelligence

How Moonshot AI’s Agent Swarm Technology is Redefining What’s Possible in AI Automation

The AI landscape shifted dramatically on January 26, 2026, when Moonshot AI quietly released Kimi K2.5. No grand keynote. No viral marketing campaign. Just a silent rollout that would soon have developers, researchers, and enterprise teams buzzing with excitement.

What makes this release special isn’t just another incremental improvement in model performance. It’s a fundamental reimagining of how AI systems can work. Kimi K2.5 introduces something unprecedented in the open-source world:

Agent Swarm — the ability to coordinate up to 100 specialized AI sub-agents working in parallel, executing complex tasks up to 4.5x faster than traditional single-agent approaches.

This isn’t science fiction. This is happening now. And it’s about to change everything you thought you knew about AI automation.

What Exactly is Kimi K2.5?

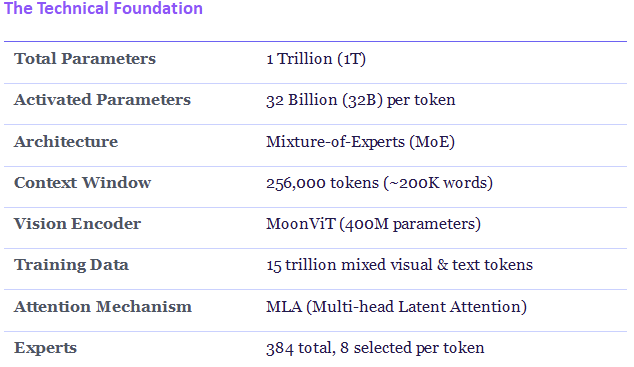

Kimi K2.5 is Moonshot AI’s most powerful open-source model to date. Built on a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters (activatinonly 32 billion per request for efficiency), it represents a quantum leap from its predecessor, Kimi K2.

But raw specs only tell part of the story. What truly sets K2.5 apart is its

Native Multimodal Architecture

Unlike models that bolt vision capabilities onto text-based foundations, K2.5 was trained from the ground up on 15 trillion mixed visual and text tokens. This means it doesn’t just ‘see’ images — it understands them with the same fluency as text. It can:

Convert screenshots and UI designs directly into functional code

Reconstruct entire websites from video recordings

Debug visual layouts by inspecting rendered outputs

Solve complex visual puzzles using algorithmic reasoning

Four Modes, Infinite Possibilities

Kimi K2.5 operates through four distinct modes, each optimized for different types of tasks:

Instant Mode: For quick questions and simple responses. Fast, efficient, perfect for rapid-fire interactions where speed matters more than depth.

Thinking Mode: When problems require deeper reasoning or step-by-step analysis. K2.5 breaks down complex issues, examines them from multiple angles, and delivers well-reasoned solutions.

Agent Mode: For research and content creation tasks that involve generating structured outputs. Documents, slides, spreadsheets, websites, detailed reports — all created through natural conversation.

Agent Swarm Mode: The game-changer. For large-scale or multi-step projects where different parts of the work can run in parallel. This is where up to 100 sub-agents collaborate simultaneously.

Agent Swarm: The 100-Agent Revolution

Imagine you’re building a marketplace application. Traditionally, you’d either code it yourself or guide a single AI agent through the entire process step by step. With Agent Swarm, something remarkable happens:

Agent 1–10: Design frontend components simultaneously

Agent 11–20: Build backend APIs in parallel

Agent 21–30: Write comprehensive test cases

Agent 31–40: Create documentation and user guides

All coordinated by Kimi K2.5, working together seamlessly.

How Parallel-Agent Reinforcement Learning Works

Training an orchestrator for parallel execution presents unique challenges. When 50 agents execute concurrently, how do you attribute success or failure to specific decisions?

Moonshot developed Parallel-Agent Reinforcement Learning (PARL) to solve this. Early training rewards parallel execution to prevent ‘serial collapse’ — where theorchestrator defaults to single-agent execution because it feels safer. The reward function explicitly incentivizes sub-agent instantiation and concurrent execution.

Later training shifts focus to task quality. The final reward balances completion quality (80%) with critical path efficiency (20%). This prevents artificial task splitting without actual performance benefits.

Performance Result:BrowseComp benchmark jumps from 60.6% (standard agent) to 78.4% (Agent Swarm) — a 29% improvement from parallelization alone. Execution time reduced by up to 4.5x.

Benchmarks: How K2.5 Stacks Up

Where K2.5 Leads

0. Agentic Tasks: 50.2% on HLE-Full with tools vs GPT-5.2’s 45.5% and Claude’s 43.2%. When K2.5 gets access to tools, its score jumps by 20 points. GPT-5.2 only gains 11 points with the same tools.

1. Vision Tasks: Wins 8 out of 16 major image understanding tests. Leads on OCR with 92.3% accuracy — critical for document processing workflows.

2. Web Browsing: 74.9% on BrowseComp (78.4% in Swarm mode), significantly ahead of competitors.

3. Cost Efficiency: Complete benchmark suite costs $0.27 vs Claude Opus 4.5 at $1.14 (76% lower) and GPT-5.2 at $0.48 (44% lower).

Where Others Lead

It’s important to be honest about limitations. Claude Opus 4.5 still leads on pure code writing (80.9% vs 76.8% on SWE-Bench) and terminal automation (59.3% vs 50.8% on Terminal-Bench). For maximum single-task performance in these specific areas, Claude remains the top choice.

Why Kimi K2.5 Changes the Game

Beyond benchmarks, K2.5 brings several unique advantages to the table:

1. True Open Source

Kimi K2.5 is released under a Modified MIT License. You can download the weights from Hugging Face, deploy on your own infrastructure, fine-tune for your specific needs, and build products without worrying about API rate limits or vendor lock-in.

2. Vision-to-Code That Actually Works

Show K2.5 a screenshot of a website, and it can recreate it. Show it a video of an interface in action, and it can build the interactive version. This isn’t just generating static HTML — it’s creating fully functional, animated, responsive implementations.

3. Office Productivity at Scale

K2.5 Agent handles high-density, large-scale office work end-to-end. It can create Word documents with annotations, construct financial models with Pivot Tables, write LaTeX equations in PDFs, and scale to 10,000-word papers or 100-page documents. Tasks that took hours now complete in minutes.

4. Massive Context Window

With 256K tokens of context (approximately 200,000 words or 400 pages), K2.5 can process entire books, massive codebases, or long legal documents in a single pass. No more chunking data and losing coherence.

Real-World Use Cases

Who should be using Kimi K2.5? Here’s where it shines:

For Developers

Convert Figma designs to production-ready React components

Reconstruct competitor websites from screenshots for rapid prototyping

Debug UI issues through visual inspection rather than console logs

Generate 3D visualizations in Three.js from reference images

For Researchers

Conduct literature reviews across hundreds of papers simultaneously

Extract and synthesize data from multiple sources in parallel

Generate comprehensive reports with proper citations

For Enterprises

Process thousands of customer support tickets with specialized agents

Generate personalized documentation for different user segments

Automate complex workflows that previously required human coordination

The Pricing Advantage

Let’s talk numbers. If you’re building AI-powered products at scale, costs matter. Here’s the reality:

Real-World Cost Example

If your startup processes 100 million tokens per month:

With ChatGPT API:$9,000/month

With Kimi K2.5 API:$310/month

Annual Savings: $104,280

That’s not a typo. The cost difference is that dramatic. And with cached inputs at just $0.10 per million tokens, repeated queries become even more affordable.

The Bottom Line

Kimi K2.5 represents something we rarely see in AI: a genuine paradigm shift that’s immediately accessible. The Agent Swarm capability isn’t a future promise — it’s available today, and it works.

For developers, it offers a path to dramatically accelerate complex projects. For enterprises, it provides a way to automate workflows that were previously too complex for single-agent systems. For the open-source community, it sets a new standard for what’s possible when models are released with genuine freedom to build upon.

Key Takeaways

• Agent Swarm: Up to 100 parallel sub-agents, 4.5x faster execution

Native multimodal: Vision, text, and video in one unified model

• Open source: Modified MIT License, weights on Hugging Face

• Cost efficient: 76–97% cheaper than proprietary alternatives

• Production ready: 256K context, stable across 200–300+ tool calls

The AI arms race isn’t just about who has the biggest model anymore. It’s about who can deliver real capabilities that solve real problems. With K2.5, Moonshot AI has made a compelling case that the future belongs to coordinated intelligence — many agents working as one.

The question isn’t whether agent swarms will become standard. It’s how quickly the rest of the industry will catch up.

Ready to try Kimi K2.5?

Web: kimi.com | API: platform.moonshot.ai | Code: Kimi Code CLI

Kimi K2.5: How Moonshot AI’s 100-Agent Swarm Is Redefining Work with Multimodal Intelligence was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.