Data Collection Methods

Choosing the Right Approach for Your Research

Every data project starts with a fundamental question: where will the data come from? The answer shapes everything that follows. Choose the wrong collection method, and you might end up with biased samples, incomplete information, or data that simply does not answer your research question. Choose wisely, and you build a foundation that supports valid insights and actionable conclusions.

Data collection is not a one-size-fits-all endeavor. A market researcher surveying customer satisfaction needs different tools than a medical researcher tracking patient outcomes. A UX designer observing user behavior faces different challenges than a political scientist analyzing policy documents. Understanding the full spectrum of collection methods helps you match your approach to your specific needs.

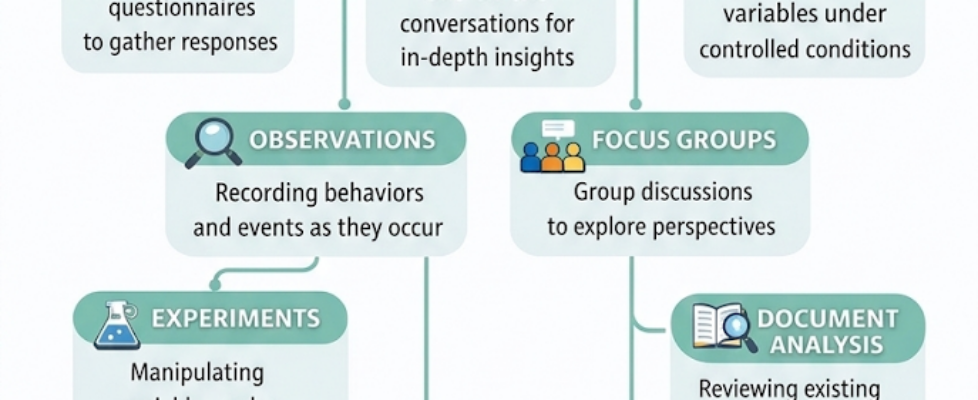

In this article, we’ll explore the main data collection methods: surveys, interviews, observations, experiments, focus groups, document analysis, and case studies. We’ll examine when each method works best, what challenges you might face, and how to implement them effectively. By the end, you’ll have a clear framework for choosing the right approach for your next project.

Understanding Primary vs Secondary Data

Before diving into specific methods, it’s important to distinguish between primary and secondary data.

- Primary data is information you collect directly from original sources. You design the collection process, control the methodology, and gather data tailored to your specific research question. When you conduct a survey, interview participants, or run an experiment, you’re collecting primary data.

- Secondary data is information that already exists, collected by someone else for a different purpose. Census data, published research papers, company financial reports, and government statistics all qualify as secondary data. When you analyze existing documents or datasets, you’re working with secondary data.

Both types have their place. Primary data gives you exactly what you need but requires more time and resources. Secondary data is faster and cheaper but may not perfectly align with your needs. Most robust research projects use both.

1. Surveys: Structured Data Collection at Scale

Surveys use structured questionnaires to gather responses from a sample population. They’re the workhorse of data collection, capable of reaching hundreds or thousands of participants relatively quickly and cost-effectively.

When to Use Surveys

Surveys work best when you need quantifiable data from a large group, when your questions have clear, definable answer options, and when you want to measure attitudes, behaviors, or demographics across a population. They’re ideal for market research, customer satisfaction studies, employee engagement assessments, and public opinion polling.

Survey Design Fundamentals

The quality of your survey data depends heavily on your question design. Good surveys follow several key principles:

- Keep questions clear and specific. Avoid double-barreled questions that ask about two things at once. Instead of “How satisfied are you with our product quality and customer service?” split it into two questions.

- Use appropriate question types. Multiple choice works for predefined categories. Likert scales (strongly disagree to strongly agree) capture attitudes. Open-ended questions gather qualitative insights but are harder to analyze at scale.

- Consider question order. Start with easy, non-threatening questions to build rapport. Place demographic questions at the end. Group related questions together. Watch for order effects where earlier questions influence later responses.

- Test before deployment. Pilot your survey with a small group. Look for confusing wording, technical issues, or questions that don’t yield useful data.

Example: Customer Satisfaction Survey

Imagine you’re launching a new mobile app and need to understand user satisfaction. A survey lets you reach thousands of users efficiently:

1. How often do you use the app?

- Daily

- Weekly

- Monthly

- Rarely

2. Rate your satisfaction with app speed (1-5 scale)

3. Rate your satisfaction with ease of navigation (1-5 scale)

4. What feature do you use most? (multiple choice)

5. What improvement would you most like to see? (open-ended)

This survey provides quantitative metrics (usage frequency, satisfaction scores) and qualitative insights (desired improvements) from a large sample.
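To make the split between quantitative and qualitative answers concrete, here is a minimal Python sketch of how exported responses to the five questions above might be summarized. The field names (`frequency`, `speed`, `navigation`, `top_feature`, `improvement`) and the four sample responses are hypothetical. They are not output from any real survey tool.

```python
from collections import Counter
from statistics import fmean

# Hypothetical responses to the five-question survey above; field names are illustrative.
responses = [
    {"frequency": "Daily", "speed": 4, "navigation": 5, "top_feature": "Search",
     "improvement": "Dark mode"},
    {"frequency": "Weekly", "speed": 3, "navigation": 4, "top_feature": "Messaging",
     "improvement": "Faster loading"},
    {"frequency": "Daily", "speed": 5, "navigation": 4, "top_feature": "Search",
     "improvement": ""},
    {"frequency": "Rarely", "speed": 2, "navigation": 3, "top_feature": "Profile",
     "improvement": "Offline mode"},
]

FREQUENCY_OPTIONS = ("Daily", "Weekly", "Monthly", "Rarely")
RATING_ITEMS = ("speed", "navigation")  # both rated on a 1-5 scale

def summarize(responses):
    """Summarize closed questions numerically and pass open-ended answers through as text."""
    n = len(responses)
    counts = Counter(r["frequency"] for r in responses)
    # Share of respondents per option, including options nobody picked (e.g. "Monthly").
    frequency_share = {option: counts[option] / n for option in FREQUENCY_OPTIONS}
    # Mean 1-5 rating per item; medians or full distributions are a common alternative.
    mean_scores = {item: fmean(r[item] for r in responses) for item in RATING_ITEMS}
    # Features ranked by how many respondents named them as most used.
    top_features = Counter(r["top_feature"] for r in responses).most_common()
    # Open-ended improvement ideas, blanks dropped, kept as text for qualitative review.
    improvements = [r["improvement"] for r in responses if r["improvement"].strip()]
    return frequency_share, mean_scores, top_features, improvements

shares, scores, features, ideas = summarize(responses)
```

The closed questions reduce to shares and averages. The open-ended answers are passed through untouched because they need human reading, not arithmetic. Treating 1-5 ratings as numbers to average is a common convention, but some analysts prefer medians for ordinal scales.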

Limitations and Challenges

Surveys face several challenges. Response rates can be low, especially for online surveys. Self-reported data may not match actual behavior. Question wording can introduce bias. Survey fatigue leads to rushed or incomplete responses. And surveys can’t capture the depth and nuance that other methods provide.

2. Interviews: Deep Insights Through Conversation

Interviews involve one-on-one conversations designed to gather in-depth information, explore complex topics, and understand individual perspectives. Unlike surveys, interviews are flexible, allowing you to probe deeper when interesting themes emerge.

Types of Interviews

- Structured interviews follow a predetermined script with standardized questions. Every participant gets the same questions in the same order. This approach makes interviews more comparable across participants but sacrifices flexibility.

- Semi-structured interviews use a general guide but allow for follow-up questions and tangents. You cover key topics but adapt to what each participant shares. This balances consistency with depth.

- Unstructured interviews are more conversational, with only broad topics rather than specific questions. They’re exploratory, useful when you don’t yet know what questions to ask.

When to Use Interviews

Interviews excel when you need detailed explanations, want to understand motivations and thought processes, need to explore sensitive topics, or require flexibility to follow unexpected but relevant directions. They’re valuable for user research, expert consultations, life history research, and exploratory studies.

Conducting Effective Interviews

Good interviews require preparation and skill. Develop an interview guide covering key topics but remaining flexible. Create a comfortable environment where participants feel safe sharing honestly. Use open-ended questions that encourage elaboration. Practice active listening, showing genuine interest without leading responses. Allow silence after questions, giving participants time to think.

Example: User Experience Research

A software company wants to understand why users abandon its onboarding process. Interviews reveal insights surveys cannot:

“Walk me through your first experience with our platform. What were you trying to accomplish?”

The user might reveal: “I wanted to import my existing data, but I couldn’t find that option. After clicking around for five minutes, I just gave up. I assumed I’d need to contact support, and I didn’t have time for that.”

This level of detail exposes specific friction points. The user’s story provides context surveys miss, showing not just what happened but why and how it felt.

Limitations and Challenges

Interviews are time-intensive. Each interview requires preparation, execution, and analysis. You can’t reach as many participants as with surveys. Interviewer bias can influence responses. Data analysis is subjective and labor-intensive. And scheduling interviews with busy participants can be difficult.
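Much of that analysis effort goes into coding, which means reading transcripts and tagging passages with themes. Once that judgment-heavy step is done, tallying the results is simple. The sketch below assumes excerpts have already been hand-coded as (participant, theme) pairs. The participant IDs and theme codes are hypothetical. It counts distinct participants per theme rather than raw mentions, so a single talkative interviewee cannot inflate a theme.

```python
from collections import defaultdict

# Hypothetical excerpts an analyst has already coded by hand: (participant ID, theme code).
coded_excerpts = [
    ("P1", "cannot_find_import"),
    ("P1", "cannot_find_import"),  # same participant repeating a theme
    ("P1", "avoids_support"),
    ("P2", "cannot_find_import"),
    ("P3", "time_pressure"),
    ("P3", "avoids_support"),
]

def participants_per_theme(excerpts):
    """Return (theme, number of distinct participants) pairs, most widespread first."""
    people_by_theme = defaultdict(set)
    for participant, theme in excerpts:
        people_by_theme[theme].add(participant)
    # Sort by participant count (descending), then theme name for a stable order.
    return sorted(((theme, len(people)) for theme, people in people_by_theme.items()),
                  key=lambda item: (-item[1], item[0]))
```

The counting step does not remove the subjectivity the paragraph above describes. The result is only as sound as the coding decisions that feed it.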

3. Observations: Watching Behavior in Natural Settings

Observational research involves watching and recording behaviors as they naturally occur. Instead of asking people what they do, you watch what they actually do. This method captures authentic behavior that participants might not accurately report.

Types of Observation

- Participant observation means the researcher joins the group being studied. An anthropologist living in a community or an employee observing workplace culture from within uses this approach.

- Non-participant observation keeps the researcher separate. You watch from outside the situation, minimizing your influence on behavior.

- Structured observation uses predetermined categories and coding schemes. You know what behaviors you’re looking for and how to record them systematically.

- Unstructured observation is more exploratory. You watch and take notes without predetermined categories, allowing patterns to emerge organically.

When to Use Observations

Observations work when behavior matters more than attitudes, when you suspect self-reports might be inaccurate, when studying children or others who can’t easily articulate experiences, or when context and environment are crucial to understanding behavior.

Example: Retail Store Layout Optimization

A retailer wants to improve store layout to increase sales. Observational research provides crucial insights:

Researchers position themselves to watch customer movement patterns. They record which aisles customers enter first, where they pause, what displays attract attention, and which areas they skip entirely. Time-stamped notes track how long customers spend in different sections.

The data reveals that customers rarely venture into the back left corner, even though profitable items are stocked there. The observations also show that customers frequently pause at the entrance, looking confused about where to go first.

These insights lead to actionable changes: clear signage at the entrance, high-demand items relocated to draw traffic into underutilized areas, and improved sight lines to the back of the store.
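The time-stamped notes in this example can be turned into dwell times per zone with a few lines of Python. This sketch rests on several assumptions. Each note is a hypothetical (customer ID, seconds since entering the store, zone entered) record. An `"exit"` note marks leaving the store. Time in a zone runs until the customer’s next recorded move.

```python
from collections import defaultdict

# Hypothetical field notes: (customer ID, seconds since entering, zone moved into).
# "exit" marks leaving the store; zone names are illustrative.
observations = [
    ("C1", 0, "entrance"), ("C1", 25, "produce"), ("C1", 140, "dairy"), ("C1", 200, "exit"),
    ("C2", 0, "entrance"), ("C2", 40, "produce"), ("C2", 95, "exit"),
]

def dwell_time_by_zone(records):
    """Total seconds spent in each zone, summed over all observed customers."""
    visits_by_customer = defaultdict(list)
    for customer, seconds, zone in records:
        visits_by_customer[customer].append((seconds, zone))
    totals = defaultdict(int)
    for visits in visits_by_customer.values():
        visits.sort()  # chronological order for this customer
        # Time in a zone = time of the next move minus time of entering this zone.
        for (start, zone), (next_start, _) in zip(visits, visits[1:]):
            totals[zone] += next_start - start
    return dict(totals)
```

On the sample log this yields 65 seconds at the entrance, 170 in produce, and 60 in dairy. Zones missing from the result were never entered, which is the kind of gap the back left corner revealed. If a customer’s exit was not recorded, their last zone contributes nothing, so incomplete notes bias totals downward.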

Limitations and Challenges

Observations are time-consuming and labor-intensive. The observer’s presence can alter behavior (the Hawthorne effect). Recording and coding behavioral data requires training and creates subjective interpretation challenges. You can observe what people do but not always why they do it. And some settings or behaviors are impractical or unethical to observe.
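One common way to check whether trained observers apply a structured coding scheme consistently is to have two observers code the same events and compute Cohen’s kappa. Kappa corrects raw agreement for the agreement expected by chance. The article does not prescribe this statistic. It is swapped in here as a standard illustration, and the behavior labels below are hypothetical.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two observers who coded the same sequence of events.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is
    the agreement expected by chance from each coder's label frequencies.
    """
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same, non-empty set of events")
    n = len(coder_a)
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1:
        # Both coders used one identical label throughout; kappa is undefined,
        # so this sketch reports perfect agreement by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)

# Hypothetical codes two observers assigned to the same ten customer events.
observer_1 = ["pause", "browse", "browse", "skip", "pause",
              "browse", "skip", "pause", "browse", "browse"]
observer_2 = ["pause", "browse", "skip", "skip", "pause",
              "browse", "skip", "browse", "browse", "browse"]
kappa = cohens_kappa(observer_1, observer_2)
```

Here the observers agree on 8 of 10 events (p_o = 0.80), chance agreement is 0.37, and kappa is about 0.68. A low kappa usually signals that category definitions or observer training need work before full data collection.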

4. Experiments: Establishing Cause and Effect

Experiments involve manipulating one or more variables under controlled conditions to establish causal relationships. Unlike observational methods that show correlation, well-designed experiments can demonstrate causation.

Core Elements of Experiments

Experiments require an independent variable (what you manipulate), a dependent variable (what you measure), control groups (who don’t receive the treatment), and experimental groups (who do receive the treatment). Random assignment to groups helps ensure that differences in outcomes result from your manipulation, not pre-existing differences between groups.
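Random assignment is easy to get subtly wrong, for example by putting the earliest sign-ups into one group. The following minimal Python sketch shuffles the full participant list with a seeded generator, then deals participants into groups in turn, which keeps group sizes within one of each other. It assumes participants are identified by arbitrary IDs. The function name and seed are illustrative.

```python
import random

def randomly_assign(participant_ids, groups, seed=None):
    """Randomly split participants into near-equal groups.

    `groups` is a sequence of group labels. A fixed `seed` makes the assignment
    reproducible, so it can be audited or re-run later.
    """
    rng = random.Random(seed)  # independent generator; does not touch global random state
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    assignment = {group: [] for group in groups}
    # Deal shuffled participants round-robin so group sizes differ by at most one.
    for i, participant in enumerate(shuffled):
        assignment[groups[i % len(groups)]].append(participant)
    return assignment
```

For the 10,000 subscribers in the email example, `randomly_assign(range(10000), ["A", "B", "C"], seed=2024)` produces groups of 3,334, 3,333, and 3,333. Recording the seed lets someone else reproduce the exact split.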

When to Use Experiments

Experiments are essential when you need strong evidence of causation, want to test specific hypotheses about cause and effect, compare different interventions or approaches, or make decisions that require evidence of impact. They’re common in medical research, A/B testing in digital products, educational interventions, and psychological research.

Example: Email Marketing Optimization

An e-commerce company wants to increase email open rates. They design an experiment:

Independent variable: Email subject line style

Dependent variable: Open rate

They randomly assign 10,000 subscribers to three groups:

- Group A receives subject lines with emojis

- Group B receives subject lines with personalization (recipient’s name)

- Group C receives standard subject lines (control)

Results show:

- Group A (emoji): 22 percent open rate

- Group B (personalization): 31 percent open rate

- Group C (control): 18 percent open rate

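Because subscribers were randomly assigned, and because a gap this large is statistically significant with roughly 3,300 subscribers per group, the experiment provides strong evidence that personalization causes higher open rates rather than merely correlating with them. The company can implement personalized subject lines with reasonable confidence that they drive results.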
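Whether a difference in open rates reflects the subject line or just chance is usually checked with a significance test. The sketch below implements a standard two-sided, two-proportion z-test using only Python’s standard library. The counts are assumptions: the example reports percentages only, so the sketch assumes about 3,333 subscribers per group and backs out the number of opens.

```python
import math

def two_proportion_z_test(successes_1, n_1, successes_2, n_2):
    """Two-sided z-test for a difference between two proportions, using a pooled standard error."""
    p_1, p_2 = successes_1 / n_1, successes_2 / n_2
    pooled = (successes_1 + successes_2) / (n_1 + n_2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_1 + 1 / n_2))
    z = (p_1 - p_2) / se
    # Two-sided p-value under the standard normal: P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: about 3,333 subscribers per group, opens derived from the reported rates.
n = 3333
z_b, p_b = two_proportion_z_test(round(0.31 * n), n, round(0.18 * n), n)  # personalization vs control
z_a, p_a = two_proportion_z_test(round(0.22 * n), n, round(0.18 * n), n)  # emoji vs control
```

Under those assumptions, personalization versus control gives a z of roughly 12 and emoji versus control roughly 4. Both are far beyond the conventional 1.96 threshold for α = 0.05. The test assumes independent recipients and samples large enough for the normal approximation. When several treatments are compared against one control, analysts sometimes tighten α, for example with a Bonferroni correction, to limit false positives.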

Limitations and Challenges

Experiments can be expensive and time-consuming. Ethical constraints limit what you can manipulate, especially with human subjects. Artificial experimental conditions may not reflect real-world situations. Some important variables cannot be experimentally manipulated. And experiments often require statistical expertise to design and analyze properly.

5. Focus Groups: Collective Perspectives and Discussions

Focus groups bring together a small group of participants (typically 6–12 people) for a guided discussion about specific topics. The group dynamic generates insights that individual interviews might miss, as participants build on each other’s ideas and challenge one another’s perspectives.

When to Use Focus Groups

Focus groups excel at exploring how people think about issues, generating ideas and hypotheses, understanding group norms and shared experiences, testing concepts or messaging before full rollout, and uncovering the language and terminology people naturally use.

Conducting Effective Focus Groups

A skilled moderator is essential. They keep discussion on track while allowing natural conversation flow, ensure all voices are heard without letting anyone dominate, probe interesting comments without leading responses, and manage group dynamics to maintain a comfortable environment.

Questions typically move from general to specific, starting with broad warm-up questions before diving into key topics. The moderator may use projective techniques like “imagine if…” scenarios, or ask participants to react to stimuli such as product prototypes or advertisements.

Example: Healthcare Service Improvement

A hospital wants to improve patient experience in the emergency department. They conduct focus groups with recent patients:

In the discussion, one participant mentions frustration with waiting without updates. Others immediately agree, sharing similar experiences. One participant says, “It’s not even the wait time itself. I just wanted to know what was happening. Was I forgotten?”

Another adds, “Yes, and I felt bad asking the nurse because I could see how busy they were. But not knowing made me so anxious.”

This group dynamic reveals that the core issue is communication, not just wait time. Individual interviews might have surfaced this, but the group’s validation, with participants building on each other’s experiences, makes the theme unmistakable. The hospital implements a system to provide wait time updates, addressing the real patient concern.

Limitations and Challenges

Focus groups present unique challenges. Dominant participants can skew results. Group dynamics may pressure participants toward conformity, suppressing minority opinions. Scheduling is difficult: you run fewer sessions than you would individual interviews, but each session must bring several participants together at the same time. Data analysis requires interpreting group interactions, not just individual comments. And sensitive topics may not be suitable for group discussion.

6. Document Analysis: Mining Existing Records

Document analysis involves reviewing and analyzing existing written, visual, or digital materials. These documents were typically created for purposes other than your research but contain valuable data relevant to your questions.

Types of Documents

Public documents include government records, newspapers, websites, social media posts, and published reports. Private documents include personal letters, diaries, emails, internal company communications, and medical records. Visual materials and physical artifacts, such as photographs, videos, objects, and buildings, can also serve as data sources.

When to Use Document Analysis

Document analysis works when studying historical questions, tracking changes over time, analyzing organizational practices through internal documents, examining public discourse or media coverage, or conducting research where direct data collection is impractical or impossible.

Example: Analyzing Corporate Culture Through Email

A consulting firm wants to understand communication patterns in a company experiencing internal conflict. With permission, they analyze anonymized email data:

They examine email frequency, length, tone, and network patterns. The analysis reveals that different departments rarely communicate across silos. When they do, emails are formal and lengthy, suggesting a lack of casual working relationships. Senior leadership mostly sends one-way announcements rather than engaging in dialogue.

This document analysis provides behavioral evidence of communication problems that interviews might not fully capture, because participants might not recognize, or might not admit, patterns that are visible in the aggregate data.
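The cross-silo pattern in this example can be quantified from message metadata alone. The following minimal sketch assumes the anonymized data has been reduced to hypothetical (sender department, recipient department) pairs. For each department, it computes the share of sent emails addressed to another department. Tone, length, and the one-way nature of leadership announcements would need separate measures.

```python
from collections import Counter

# Hypothetical anonymized metadata: (sender department, recipient department).
# Only department labels are kept; no names or message content.
emails = [
    ("Sales", "Sales"), ("Sales", "Sales"), ("Sales", "Engineering"),
    ("Engineering", "Engineering"), ("Engineering", "Engineering"),
    ("Engineering", "Engineering"), ("Finance", "Finance"), ("Finance", "Sales"),
]

def cross_department_share(messages):
    """Fraction of each department's sent emails that go to a different department."""
    sent = Counter(sender for sender, _ in messages)
    crossing = Counter(sender for sender, recipient in messages if sender != recipient)
    return {dept: crossing[dept] / total for dept, total in sent.items()}
```

In the sample data, Engineering sends none of its email outside the department, Sales sends one third, and Finance sends half. Real data would also need a rule for messages with multiple recipients, which this sketch ignores.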

Limitations and Challenges

Documents were created for other purposes, so they may not address your specific questions. Access can be restricted, especially for private or sensitive documents. Document authenticity and accuracy must be verified. Bias in document creation affects interpretation. And massive document volumes can make analysis overwhelming without proper tools or sampling strategies.

7. Case Studies: Deep Dives into Specific Examples

Case studies involve detailed investigation of a single case or a small number of cases. A case might be an individual, organization, event, community, or any bounded system relevant to your research question.

When to Use Case Studies

Case studies are valuable when you need deep understanding of complex phenomena, want to explore how and why things happen in context, study rare or unique situations, develop theories for later testing, or provide rich examples to illustrate broader patterns.

Conducting Case Studies

Strong case studies use multiple data sources. You might conduct interviews with key stakeholders, observe operations, analyze documents, and review quantitative metrics. This triangulation strengthens conclusions by viewing the case from multiple angles.

Clear boundaries matter. Define what is and is not part of your case. Are you studying one hospital department or the whole hospital? One decision or an entire decision-making process? Explicit boundaries prevent scope creep and focus analysis.

Example: Technology Adoption in Education

A researcher studies how one school successfully integrated tablets into elementary classrooms when many others failed. The case study includes:

- Interviews with teachers, administrators, students, and parents

- Observation of classroom tablet use

- Analysis of lesson plans and student work

- Review of training materials and rollout communications

- Student performance data before and after implementation

The case reveals that success factors included extensive teacher training (not just technical but pedagogical), phased rollout allowing iteration, strong principal support, and alignment with existing curriculum rather than forcing curriculum changes.

While findings from one school don’t prove these factors guarantee success everywhere, the case provides rich insights and hypotheses for other schools to consider and test.

Limitations and Challenges

Case studies are time-intensive and require significant resources for thorough investigation. Findings may not generalize to other contexts. Researcher bias in case selection and interpretation can skew conclusions. Defining appropriate case boundaries requires careful thought. And synthesizing multiple data sources into a coherent narrative requires analytical skill.

Combining Methods: Triangulation for Stronger Insights

The most robust research often combines multiple methods. This triangulation approach uses different methods to examine the same question, with converging evidence strengthening conclusions.

Example: Employee Engagement Study

A company wants to understand and improve employee engagement. They use multiple methods:

- Survey (quantitative data from all employees): Measures overall engagement scores, identifies departments with lower engagement, tracks changes over time.

- Interviews (qualitative depth with 30 employees): Explores why engagement differs across departments, uncovers specific issues like lack of career development opportunities or poor manager relationships.

- Document analysis (internal communications and HR records): Examines turnover patterns, analyzes exit interview themes, reviews past engagement initiatives.

- Focus groups (group perspectives from each department): Validates interview findings, generates improvement ideas, identifies department-specific concerns versus company-wide issues.

Together, these methods provide a comprehensive understanding. Surveys show what and where (which departments struggle). Interviews and focus groups reveal why (underlying causes). Document analysis provides historical context. Each method compensates for the others’ limitations.

Choosing Your Collection Method: A Decision Framework

Selecting the right method requires considering several factors:

- Research question nature: Exploratory questions need flexible methods like interviews or observations. Confirmatory questions testing specific hypotheses need experiments or structured surveys.

- Required data type: Quantitative questions about how many, how much, or how often favor surveys or experiments. Qualitative questions about why, how, or what it means favor interviews, observations, or focus groups.

- Available resources: Surveys and document analysis can be cost-effective. Experiments and case studies typically require more investment.

- Timeline: Secondary data analysis and surveys can be faster. Longitudinal observations or complex experiments take longer.

- Access to participants: Limited access might favor document analysis or case studies. Easy access enables surveys or experiments.

- Ethical considerations: Sensitive topics might preclude observations. Vulnerable populations require special protections affecting method choice.
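As a thinking aid only, the framework above can be written as a set of rules, each favoring certain methods. Candidate methods are then ranked by how many of a project’s traits point to them. The trait names are hypothetical labels for the bullets, and counting votes is a crude simplification. Ethical constraints act as a filter on the result rather than a vote, so they are left out of this sketch.

```python
from collections import Counter

# Each rule restates one bullet of the framework as (project trait, methods it favors).
# Trait names are hypothetical labels, not a standard vocabulary.
RULES = [
    ("exploratory_question", {"interviews", "observations"}),
    ("confirmatory_question", {"experiments", "surveys"}),
    ("quantitative_data", {"surveys", "experiments"}),
    ("qualitative_data", {"interviews", "observations", "focus groups"}),
    ("tight_budget", {"surveys", "document analysis"}),
    ("short_timeline", {"document analysis", "surveys"}),
    ("limited_access", {"document analysis", "case studies"}),
    ("easy_access", {"surveys", "experiments"}),
]

def rank_methods(project_traits):
    """Rank methods by how many of the project's traits favor them (ties broken alphabetically)."""
    votes = Counter()
    for trait, favored in RULES:
        if trait in project_traits:
            votes.update(favored)
    return sorted(votes.items(), key=lambda item: (-item[1], item[0]))
```

For an exploratory, qualitative project with limited participant access, `rank_methods({"exploratory_question", "qualitative_data", "limited_access"})` puts interviews and observations first with two votes each, followed by case studies, document analysis, and focus groups. Because the rules are independent, the ranking can favor a method that another constraint rules out (here, interviews despite limited access). That is why it can only start a decision, not make it.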

Practical Implementation Tips

Regardless of method, some principles apply universally:

- Start with clear objectives. What specific questions do you need answered? What will you do with the data? Clarity upfront prevents collecting interesting but ultimately useless information.

- Pilot test everything. Test surveys, interview guides, observation protocols, and experimental procedures with small samples before full deployment. Fix problems early when they’re cheap to address.

- Document your process. Record decisions about sampling, question wording, coding schemes, and analysis approaches. Documentation enables replication and helps you defend methodological choices.

- Consider ethical implications. Obtain informed consent, protect participant privacy, minimize risks, and ensure voluntary participation. Ethics reviews are not just bureaucratic hurdles but essential protections.

- Plan for analysis upfront. Don’t collect data without knowing how you’ll analyze it. Data analysis methods should inform collection design.

Common Pitfalls to Avoid

- Mismatch between question and method. Trying to answer a “why” question with a survey that only captures “what” wastes resources and fails to provide the insights you need.

- Insufficient sample size. Particularly problematic for surveys and experiments where statistical power matters. Small samples may not detect real effects.

- Leading questions or observer bias. Your beliefs can contaminate data through how you ask questions, what you observe, or how you interpret responses.

- Ignoring context. Data without context loses meaning. A low survey score needs context to interpret. An observed behavior needs environmental context to understand.

- Treating all data as equally valid. Secondary data quality varies. Interview participants may have different levels of knowledge. Critical evaluation of data sources matters.
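To make the sample-size pitfall concrete, the usual normal-approximation formula gives the number of participants needed per group to detect a difference between two proportions. This sketch assumes a two-sided test and equal group sizes. `NormalDist` is part of Python’s standard `statistics` module (Python 3.8 and later). The 18 and 22 percent rates are borrowed from the email experiment for illustration.

```python
import math
from statistics import NormalDist

def sample_size_per_group(p_1, p_2, alpha=0.05, power=0.80):
    """Approximate participants per group needed to detect p_1 vs p_2 with a two-sided test.

    n = (z_{1-alpha/2} + z_{power})^2 * (p_1(1-p_1) + p_2(1-p_2)) / (p_1 - p_2)^2
    """
    if p_1 == p_2:
        raise ValueError("the two proportions must differ")
    standard_normal = NormalDist()  # mean 0, standard deviation 1
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    variance = p_1 * (1 - p_1) + p_2 * (1 - p_2)
    # Round up: a fractional participant means one more is needed.
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p_1 - p_2) ** 2)
```

Detecting the gap between an 18 and a 22 percent open rate with 80 percent power at α = 0.05 needs about 1,570 subscribers per group, while the much larger 18 versus 31 percent gap needs fewer than 200. Because n scales with the inverse square of the difference, halving the effect you want to detect roughly quadruples the required sample.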

Final Thoughts

Data collection is not simply a technical process but a strategic choice that shapes everything downstream. The methods you choose determine what you can learn, what questions you can answer, and ultimately what decisions you can confidently make.

No single method is best for all situations. Surveys provide breadth but lack depth. Interviews provide depth but lack breadth. Experiments establish causation but sacrifice realism. Each method offers distinct advantages while accepting specific limitations.

The key is matching method to purpose. What are you trying to learn? What decisions will the data inform? What resources do you have? Who can you access? Answering these questions points toward appropriate methods.

Remember that data collection is just the beginning. The best-collected data becomes valuable only through thoughtful analysis and interpretation. But poor collection methods poison everything that follows. Invest time in choosing and implementing the right approach, and you build a foundation for insights that drive real impact.

Whether you’re launching a new product, improving a service, testing a theory, or making strategic decisions, your success depends on having the right data. Now you have a comprehensive framework for gathering it.


Data Collection Methods was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.