Operationalizing Agentic AI on AWS: A 2026 Architect’s Guide

Stop building AI toys. Learn the 4 pillars to get AI Agents into production on Amazon Bedrock.

If you sit in an executive meeting today and ask, “Are we investing enough in AI?”, the answer is almost always yes. If you then ask, “Which specific workflows are materially better today because of AI agents, and how do we know?”, the room usually gets very quiet.

The gap between those two answers isn’t a missing foundation model. It isn’t a missing vector database. It’s a missing operating model.

In 2026, the enterprises actually seeing ROI from Generative AI aren’t just deploying models; they are deploying agents — autonomous, tool-using AI systems. And when agentic AI works, it looks less like magic software and more like a well-run team: each agent has a clear job, a supervisor, reliable tools, and a way to improve.

Let’s break down how to bridge the execution gap and operationalize Agentic AI on AWS using Amazon Bedrock AgentCore.

Stop Asking “Where Can We Use an Agent?”

Most organizations start their AI journey backwards. They buy the tool and look for the nail. A much better starting point is: “Where is the work already structured like a job an agent could do?”

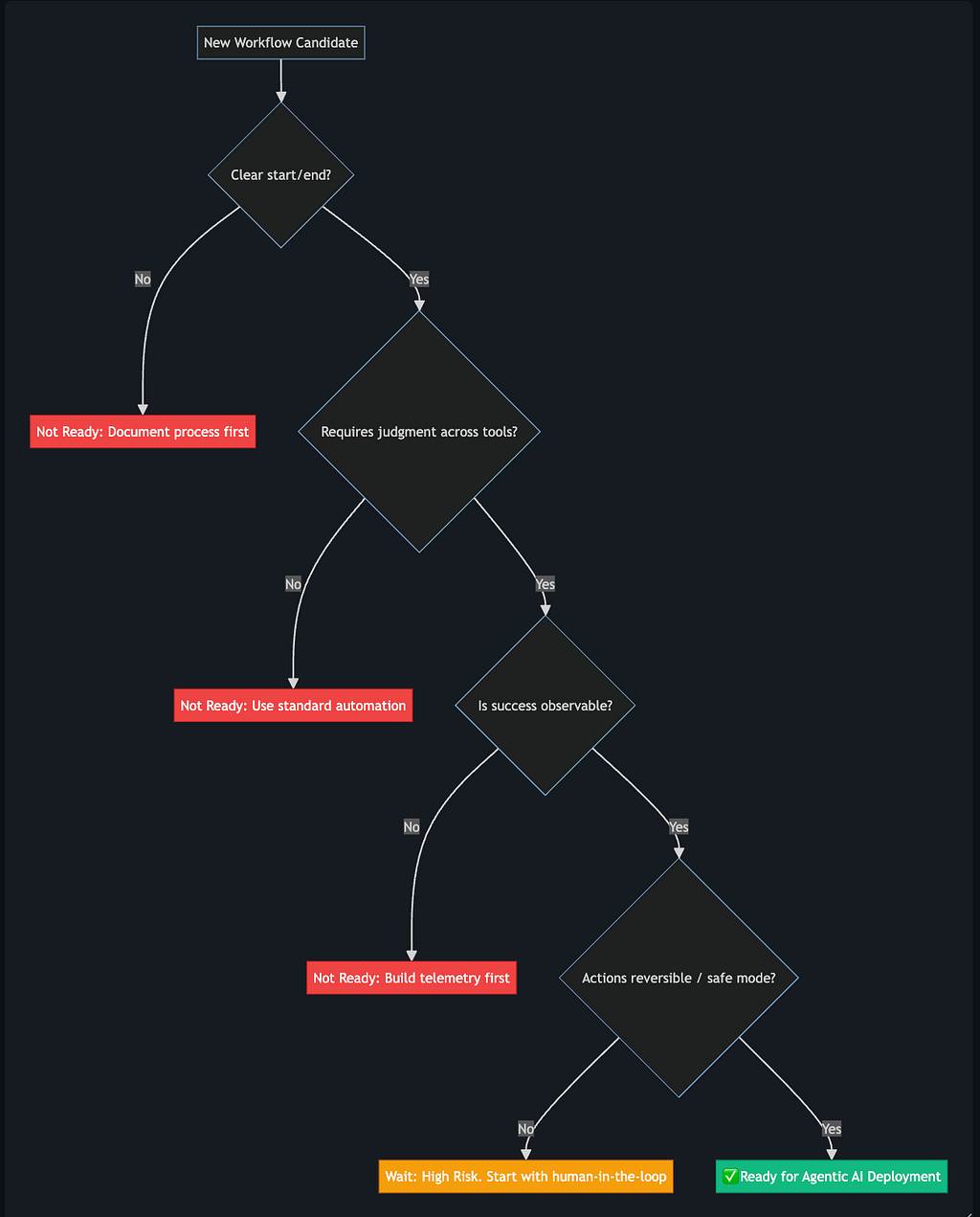

For a workflow to be ready for an AWS Agent, it needs to satisfy the 4 Pillars of Agentic Readiness.

Pillar 1: Clear Start, End, and Purpose

An agent needs to know when to wake up, what constitutes “done,” and how to handle a reasonable number of edge cases without crashing.

Think of an automated invoice processor. The trigger is clear (an emailed invoice lands in an S3 bucket), the purpose is clear (extract data and match it to a purchase order), and the end is clear (submit to an ERP system or flag for human review).

If your human team cannot currently articulate what “done well” looks like for a task — including exceptions — it is not ready for an agent.
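
As a rough illustration of what a written-down definition of “done” can look like, the sketch below encodes the invoice example as a small routing function with exactly two terminal states. The `ExtractedInvoice` type, the field names, and the 1% tolerance are hypothetical placeholders chosen for the example, not part of any AWS API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExtractedInvoice:
    """Hypothetical output of the agent's extraction step."""
    po_number: Optional[str]
    total: Optional[float]


def decide_outcome(invoice: ExtractedInvoice, po_totals: dict[str, float],
                   tolerance: float = 0.01) -> str:
    """Return one of the two terminal states that define "done"."""
    # Exception path: missing fields or an unknown purchase order.
    if invoice.po_number is None or invoice.total is None:
        return "FLAG_FOR_REVIEW"
    expected = po_totals.get(invoice.po_number)
    if expected is None:
        return "FLAG_FOR_REVIEW"
    # Happy path: totals agree within a relative tolerance (assumed 1%).
    if abs(invoice.total - expected) <= tolerance * expected:
        return "SUBMIT_TO_ERP"
    return "FLAG_FOR_REVIEW"
```

If the team cannot agree on what goes into a function like this, that disagreement is the readiness gap.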

Pillar 2: Judgment Across Tools

Traditional automation (like a standard Lambda function) follows a hard-coded script. If X, then Y.

Agents are different. They use ReAct (Reason + Act) loops. They reason about what information they need, decide which APIs to query, interpret the response, and then determine the next step.

To do this on AWS, your systems need well-defined, secure, and reliable interfaces (Tools). Without tools, an LLM is just a chatbot. With tools, it becomes an agent.
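
To make the reason-then-act cycle concrete, here is a minimal, framework-free sketch of a ReAct-style loop. It is not how Bedrock implements orchestration internally; the `decide` callable stands in for the model, and the tool registry and `scripted_policy` function are hypothetical stubs so the example runs without any AWS calls.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python callable.
TOOLS: dict[str, Callable[..., str]] = {
    "check_warehouse_inventory": lambda sku_id: f"{sku_id}: 12 units in stock",
}


def run_react_loop(question: str, decide: Callable[[str, list], dict],
                   max_steps: int = 5) -> str:
    """Alternate between reasoning (decide) and acting (tool call)."""
    history: list[dict] = []
    for _ in range(max_steps):
        step = decide(question, history)      # Reason: pick the next move
        if step["action"] == "finish":
            return step["answer"]
        tool = TOOLS[step["action"]]          # Act: call the chosen tool
        observation = tool(**step["args"])
        history.append({"step": step, "observation": observation})
    return "Stopped: step limit reached without a final answer."


def scripted_policy(question: str, history: list) -> dict:
    """Stand-in for the LLM: look up inventory once, then answer."""
    if not history:
        return {"action": "check_warehouse_inventory",
                "args": {"sku_id": "AB123456"}}
    return {"action": "finish", "answer": history[-1]["observation"]}


print(run_react_loop("How many units of AB123456 do we have?", scripted_policy))
```

The step limit matters as much as the loop itself: without it, a model that never decides to finish would keep calling tools indefinitely.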

Here is how you define a tool schema for an Amazon Bedrock agent using Python and Boto3. This read-only tool lets the agent check inventory levels without being able to change them:

    import json

    import boto3

    # Initialize the Bedrock Agent (build-time) client
    bedrock_agent = boto3.client('bedrock-agent')

    # Define the tool using Bedrock's function-details format. Action groups
    # accept either an OpenAPI schema or a function definition like this one,
    # where each parameter carries its own type, description, and required flag.
    get_inventory_tool_schema = {
        "functions": [
            {
                "name": "check_warehouse_inventory",
                "description": "Checks the current inventory level of a specific product SKU.",
                "parameters": {
                    "sku_id": {
                        "type": "string",
                        "description": "The unique 8-character string ID of the product.",
                        "required": True
                    },
                    "warehouse_location": {
                        "type": "string",
                        "description": "The region code, e.g., 'us-east-1' or 'eu-west-1'.",
                        "required": False
                    }
                }
            }
        ]
    }

    print(json.dumps(get_inventory_tool_schema, indent=2))

    # Next step: attach this schema to your Bedrock Agent as an action group.
    # That means creating the action group (with this function schema or an
    # OpenAPI schema) and associating it with the agent, so Bedrock knows
    # which actions the agent can actually perform.

If your current process involves humans using spreadsheets and emailing attachments, you have API/Data Engineering work to do before you can deploy an agent.
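
A schema only describes the tool; something still has to execute it. A minimal sketch of a Lambda handler for `check_warehouse_inventory` might look like the following. The event and response shapes reflect my understanding of the function-details format for Bedrock action groups and should be checked against the current documentation; `FAKE_STOCK` and the `us-east-1` default are hypothetical stand-ins for a real inventory backend.

```python
import json

# Hypothetical in-memory stock table; a real handler would query a database or API.
FAKE_STOCK = {("AB123456", "us-east-1"): 12}


def lambda_handler(event, context):
    """Handle a check_warehouse_inventory call from a Bedrock agent action group."""
    # Parameters are assumed to arrive as a list of name/type/value dicts.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}
    sku = params.get("sku_id", "")
    location = params.get("warehouse_location", "us-east-1")

    # Validate input before touching any backend system.
    if len(sku) != 8:
        body = {"error": "sku_id must be exactly 8 characters"}
    else:
        body = {
            "sku_id": sku,
            "warehouse_location": location,
            "units": FAKE_STOCK.get((sku, location), 0),
        }

    # Echo the action group and function names back so the agent can
    # match this result to the call it made.
    return {
        "messageVersion": event.get("messageVersion", "1.0"),
        "response": {
            "actionGroup": event["actionGroup"],
            "function": event["function"],
            "functionResponse": {
                "responseBody": {"TEXT": {"body": json.dumps(body)}}
            },
        },
    }
```

Keeping validation inside the handler means a malformed SKU from the model produces a readable error the agent can react to, rather than an exception in a downstream system.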

Pillar 3: Observable and Measurable Success

Someone who didn’t build the agent must be able to look at its output and say, “This is correct” or “This needs fixing.”

But observability goes beyond spotting a wrong answer. You need to see how the agent arrived at its answer. What data did it retrieve? Which tools did it call?

On AWS, enabling tracing for Bedrock Agents is non-negotiable for production. With those traces shipped to CloudWatch Logs, you can inspect the agent’s internal monologue (the Chain of Thought) step by step.

Here is an example of querying a Bedrock Agent’s reasoning trace using Python:

    import time

    import boto3

    cloudwatch = boto3.client('logs')


    def get_agent_reasoning_trace(log_group_name: str, session_id: str):
        """
        Queries CloudWatch Logs to extract the specific reasoning steps (ReAct loop)
        taken by an Amazon Bedrock Agent during a specific session.
        """
        # NOTE: the field names below (sessionId, tracePart.trace.type) depend on
        # how trace events are written to your log group; adjust them to match.
        query = f"""
        fields @timestamp, @message
        | filter sessionId = '{session_id}'
        | filter tracePart.trace.type = 'REASONING'
        | sort @timestamp asc
        | limit 50
        """

        # Start the Logs Insights query
        start_query_response = cloudwatch.start_query(
            logGroupName=log_group_name,
            startTime=int(time.time() - 86400),  # Last 24 hours
            endTime=int(time.time()),
            queryString=query
        )
        query_id = start_query_response['queryId']

        # Poll until the query leaves the Scheduled/Running states
        response = None
        while response is None or response['status'] in ('Scheduled', 'Running'):
            time.sleep(1)
            response = cloudwatch.get_query_results(queryId=query_id)

        # Failed, Cancelled, or Timeout would otherwise look like "no results"
        if response['status'] != 'Complete':
            raise RuntimeError(f"Query ended with status {response['status']}")

        print(f"Found {len(response['results'])} reasoning steps:")
        for result in response['results']:
            for field in result:
                if field['field'] == '@message':
                    print(f"-> {field['value']}\n")


    # Example usage:
    # get_agent_reasoning_trace('/aws/bedrock/agents/my-support-agent', 'session-12345')

If you cannot evaluate the reasoning, you cannot improve the agent, and you cannot defend its decisions to compliance teams when it makes a mistake.
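
Reasoning can also be captured at the source: when an agent is invoked with `enableTrace=True`, trace events arrive in the response stream alongside the answer chunks. The sketch below pulls the rationale text out of such a stream. The nesting it assumes (`trace` → `trace` → `orchestrationTrace` → `rationale`) is my reading of the response format and should be verified against the current API reference; the function name is hypothetical.

```python
from typing import Iterable


def extract_reasoning_steps(events: Iterable[dict]) -> list[str]:
    """Collect rationale text from a stream of agent response events."""
    steps = []
    for event in events:
        # Non-trace events (e.g. answer chunks) simply yield empty dicts here.
        orchestration = (
            event.get("trace", {})
                 .get("trace", {})
                 .get("orchestrationTrace", {})
        )
        rationale = orchestration.get("rationale", {}).get("text")
        if rationale:
            steps.append(rationale)
    return steps


# With real credentials, the events would come from something like:
#   runtime = boto3.client("bedrock-agent-runtime")
#   response = runtime.invoke_agent(
#       agentId="<agent-id>", agentAliasId="<alias-id>",
#       sessionId="session-12345", inputText="...", enableTrace=True)
#   steps = extract_reasoning_steps(response["completion"])
```

Because the function only reads plain dictionaries, it can be unit-tested with recorded events, which makes it a reasonable place to start building an evaluation pipeline.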

Pillar 4: The Safe Mode and Reversibility

The best early candidates for Agentic AI are tasks where mistakes are caught quickly, corrected cheaply, and do not create irreversible harm.

If an agent misclassifies an IT support ticket, a human can simply re-route it. The cost of failure is negligible. But if an agent approves a $50,000 payment to a vendor, the cost of being wrong is unacceptable.

Start with recommendations. Have the agent draft the email, but require a human to click “Send.” As trust, telemetry, and evaluation pipelines mature, you earn the right to move into closed-loop, autonomous execution.
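
One way to sketch this “recommend first, act later” posture is a small approval gate that executes only cheap, reversible actions and queues everything else for a person. The class names, the status strings, and the cost threshold are all hypothetical; a production version would persist the queue and record who approved what.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedAction:
    name: str
    reversible: bool
    cost_usd: float = 0.0


@dataclass
class ApprovalGate:
    """Auto-run only cheap, reversible actions; queue the rest for a human."""
    max_auto_cost_usd: float = 0.0
    pending: list = field(default_factory=list)

    def submit(self, action: ProposedAction) -> str:
        if action.reversible and action.cost_usd <= self.max_auto_cost_usd:
            return "EXECUTED"
        # Anything irreversible or above the threshold waits for a person.
        self.pending.append(action)
        return "AWAITING_HUMAN_APPROVAL"


gate = ApprovalGate()
print(gate.submit(ProposedAction("reroute_ticket", reversible=True)))
print(gate.submit(ProposedAction("approve_payment", reversible=False, cost_usd=50_000)))
```

Raising `max_auto_cost_usd` over time is a simple way to express earned trust in code. Bedrock function definitions also expose a `requireConfirmation` setting that may cover simpler cases; check the current documentation before relying on it.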

Bridging the Gap in 2026

The gap between POC and production isn’t a technology gap anymore. It is an execution and governance gap. With Amazon Bedrock AgentCore, AWS has abstracted away much of the underlying infrastructure complexity (such as managing conversational state and orchestrating tool routing).

Your job as an architect or leader is to provide the boundaries, the tools, and the observability.

Your Action Plan for This Week:

- Audit: Look at your current AI roadmap and run those tasks through the 4-Pillar Agent-Shaped filter.

- Instrument: Ensure every existing agent POC is writing ReAct trace logs to CloudWatch.

- Boundaries: Implement Amazon Bedrock AgentCore policies to set hard guardrails on what your agents are not allowed to do.
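
For the audit step, a lightweight checklist can be enough to start. The sketch below is one possible way to run roadmap items through the four pillars; the pillar keys and the yes/no scoring are assumptions made for illustration, and a real audit would likely capture evidence rather than booleans.

```python
PILLARS = (
    "clear_start_end_purpose",
    "judgment_across_tools",
    "observable_success",
    "safe_and_reversible",
)


def audit_workflow(name: str, answers: dict[str, bool]) -> dict:
    """Report which pillars a candidate workflow fails."""
    # A pillar that was never answered counts as a gap.
    missing = [p for p in PILLARS if not answers.get(p, False)]
    return {"workflow": name, "ready": not missing, "gaps": missing}


print(audit_workflow("invoice_matching", {p: True for p in PILLARS}))
print(audit_workflow("vendor_payments", {"clear_start_end_purpose": True}))
```

Treating unanswered pillars as gaps keeps the audit conservative: a workflow is only marked ready when someone has explicitly confirmed all four.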

Found this guide helpful? Drop a comment below with the craziest thing you’ve seen an AI agent try to do in production!

Operationalizing Agentic AI on AWS: A 2026 Architect’s Guide was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.