LLMOps Guide: The End-to-End Pipeline for Reliable AI Applications

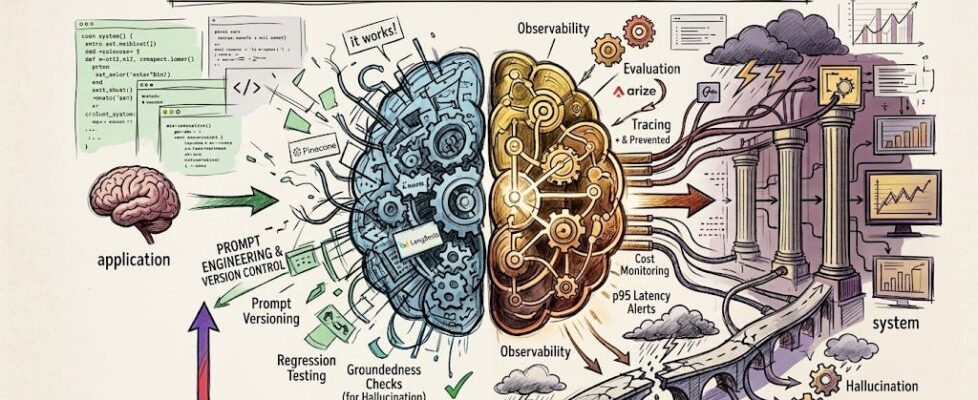

Photo by author

Author(s): Divy Yadav. Originally published on Towards AI.

For developers who have just built an LLM, RAG, or agentic system and are wondering what comes next.

Most teams celebrate when their AI application finally works. The demo looks good, and the feature ships.

This article discusses the challenges teams face when moving from an AI application that merely works to a production system that stays reliable and performant. It emphasizes LLMOps (Large Language Model Operations), the practices and tools that keep AI systems continually evaluated, monitored, and improved after deployment. Major topics include the role of the operational layer, how LLMOps differs from traditional MLOps, and why teams need a continuous improvement loop that feeds real-world performance data back into the application to improve its functionality and user experience.

Read the full blog for free on Medium.
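To make the "continuous improvement loop" concrete, here is a minimal sketch in Python, assuming a setup where every production interaction is logged, scored by some evaluator, and tracked as a rolling quality metric. Low-scoring or negatively rated cases are collected into a regression set that can be replayed against the next release. Everything here (`Interaction`, `ImprovementLoop`, `naive_evaluator`, the `threshold` and `window` values) is hypothetical and not taken from the article; in a real system the evaluator might be a rubric-based LLM judge, a comparison against reference answers, or human review, and the data would live in a logging or observability store rather than in memory.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, List


@dataclass
class Interaction:
    """One logged production request/response pair (hypothetical schema)."""
    prompt: str
    response: str
    user_feedback: int  # assumption: +1 thumbs up, 0 no feedback, -1 thumbs down


@dataclass
class ImprovementLoop:
    """Hypothetical evaluate -> monitor -> collect-failures loop."""
    evaluator: Callable[[Interaction], float]  # returns a score in [0, 1]
    threshold: float = 0.7  # illustrative quality bar, not a recommended value
    window: int = 50        # number of recent scores in the rolling average
    scores: List[float] = field(default_factory=list)
    regression_set: List[Interaction] = field(default_factory=list)

    def observe(self, item: Interaction) -> float:
        """Score one interaction and keep failures for future regression tests."""
        score = self.evaluator(item)
        self.scores.append(score)
        if score < self.threshold or item.user_feedback < 0:
            self.regression_set.append(item)
        return score

    def rolling_score(self) -> float:
        """Mean score over the most recent `window` interactions."""
        recent = self.scores[-self.window:]
        if not recent:
            raise ValueError("no interactions observed yet")
        return mean(recent)

    def needs_attention(self) -> bool:
        """True when recent quality has dropped below the threshold."""
        return bool(self.scores) and self.rolling_score() < self.threshold


def naive_evaluator(item: Interaction) -> float:
    """Placeholder heuristic: empty or evasive answers score 0, others 1."""
    if not item.response.strip():
        return 0.0
    return 0.0 if "i don't know" in item.response.lower() else 1.0


# Usage: feed a few logged interactions through the loop.
loop = ImprovementLoop(evaluator=naive_evaluator, threshold=0.7, window=3)
for it in [
    Interaction("What is LLMOps?", "Operations practices for LLM apps.", 1),
    Interaction("How do I reset my password?", "", 0),
    Interaction("What does the Pro plan cost?", "I don't know.", -1),
]:
    loop.observe(it)

print(loop.rolling_score(), loop.needs_attention(), len(loop.regression_set))
```

The design choice worth noting is that monitoring and improvement share one data path: the same scores that trigger an alert also populate the regression set, so each production failure can become a test case before the next prompt, retrieval, or model change ships.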