What Are AI Hallucinations? A Guide to Causes and Prevention

Discover what AI hallucinations are, their types, why they occur, potential negative impact, and strategies to reduce them.

When people see something that is not actually there, we often call it a hallucination, a moment when their sensory perception doesn’t match an external stimulus.

Artificial intelligence (AI) based systems tend to hallucinate, too. When an AI gives outputs that are factually incorrect, logically inconsistent, or completely fabricated, all while maintaining a tone of absolute confidence, that’s called an AI hallucination.

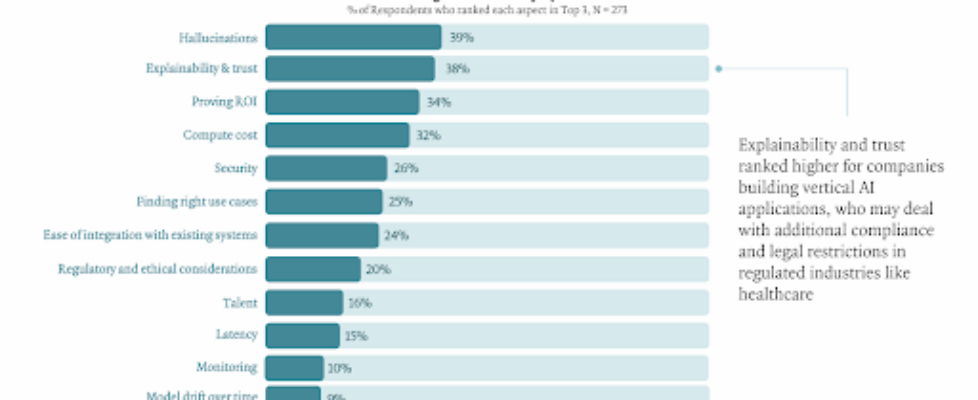

Hallucinations are among the most serious obstacles to AI adoption: enterprises frequently cite them as a top challenge, and by one widely cited estimate they cost companies about $67.4 billion globally in 2024.

Since hallucination behaviors and their effects depend on the type of AI system, from chatbots such as ChatGPT to image generators and autonomous vehicles to cybersecurity systems, we need a disciplined process for managing them.

In this article, we will explain what AI hallucinations are, explore their causes and their impact, and outline a practical framework to reduce them for building trustworthy AI systems.

What is an AI hallucination?

An AI hallucination occurs when a generative AI model produces output that reads as fluent and authoritative but is false or made up.

Fundamentally, these AI models are not designed to provide fact-based results. Instead, they compose statistically likely outputs (such as the next word in a sentence or the next pixel in an image) based on patterns in their training data.
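To make this concrete, here is a minimal sketch of how a model might choose a next word. The candidate words and their scores are invented for illustration and do not come from any real model. The point is only that the choice is driven by probability, and nothing in the process checks the result against facts.

```python
import math
import random

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for the word following "The capital of Australia is".
# The numbers are made up: a frequently seen but wrong answer can outscore the right one.
scores = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.7}

probs = softmax(scores)
greedy_choice = max(probs, key=probs.get)  # most likely word, whether true or not

rng = random.Random(0)  # fixed seed so the sketch is repeatable
sampled_choice = rng.choices(list(probs), weights=list(probs.values()), k=1)[0]

print(probs)
print("greedy:", greedy_choice)
print("sampled:", sampled_choice)
```

In this toy setup the most probable word is the wrong one, which is exactly the situation in which a fluent, confident hallucination appears.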

For example, with large language models (LLMs), often used as generative AI chatbots, hallucinations are convincing but incorrect, fabricated, or irrelevant pieces of information that appear in well-formed, confident sentences.

What Are The Types of AI Hallucinations?

Understanding the different types of AI hallucinations is the first step toward building better validation and testing pipelines.

Factual Hallucinations

Factual hallucinations occur when the AI model lacks correct knowledge and fills the gaps with made-up details that are verifiably false or contradict real-world facts.

The model presents these fabrications with confidence, ranging from minor inaccuracies, like misstating a date, to inventing entire events or sources.

For example, a factual hallucination occurred during Google Bard’s initial public demo. The AI incorrectly stated that the James Webb Space Telescope (JWST) “took the very first pictures of a planet outside of our own solar system,” when in fact the first such image was taken in 2004, long before JWST launched in 2021.

Contextual Hallucinations

Contextual hallucinations occur when the AI’s output doesn’t match the user’s provided context. The information may be correct in general, but it is either irrelevant or only tangentially related to the question asked.

For instance, you ask a chatbot to summarize a financial report, and it provides a correct but completely unrelated history of the company’s founding.

A related real-world example: the day after Bard’s debut, Microsoft’s Bing Chat AI held a public demo that also included hallucinations. Bing Chat provided incorrect figures when summarizing Gap’s earnings and Lululemon’s financial data.

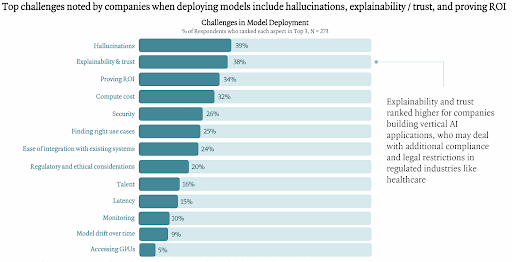

Logical Hallucinations

Logical hallucinations are reasoning errors where an AI’s output contains faulty logic or self-contradictory statements. Even if the individual facts within the response are correct, the model fails to apply sound logic and reaches an incorrect or nonsensical conclusion.

For instance, consider a language model tasked with solving the equation 2x + 3 = 11 step by step. The model might correctly perform the first operation, “Step 1: Subtract 3 from both sides to get: 2x=8.” However, it then makes a critical reasoning error in the next step, “Step 2: Divide both sides by 2 to get: x=3.”

The final conclusion is wrong, not because of a faulty external fact, but because of a fundamental breakdown in its own reasoning chain.
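One way to catch this kind of error is to check each claimed step against the original equation. The sketch below is an illustration only: the `steps` list is a hand-written stand-in for model output, not a real transcript, and the helper names are hypothetical. It tests whether the value of x implied by each step actually solves 2x + 3 = 11.

```python
from fractions import Fraction

def satisfies(x, a=2, b=3, c=11):
    """Return True if x solves a*x + b = c."""
    return a * x + b == c

# Hand-written stand-in for the model's reasoning chain (hypothetical).
# Each step is an equation of the form coeff * x = rhs.
steps = [
    {"text": "Subtract 3 from both sides: 2x = 8", "coeff": 2, "rhs": 8},
    {"text": "Divide both sides by 2: x = 3", "coeff": 1, "rhs": 3},
]

def check_steps(steps):
    """Flag every step whose implied value of x does not solve the original equation."""
    report = []
    for step in steps:
        implied_x = Fraction(step["rhs"], step["coeff"])
        report.append((step["text"], satisfies(implied_x)))
    return report

for text, ok in check_steps(steps):
    print("OK  " if ok else "FAIL", text)
```

The first step passes because 2x = 8 implies x = 4, which solves the equation. The second step fails because x = 3 gives 2(3) + 3 = 9, not 11.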

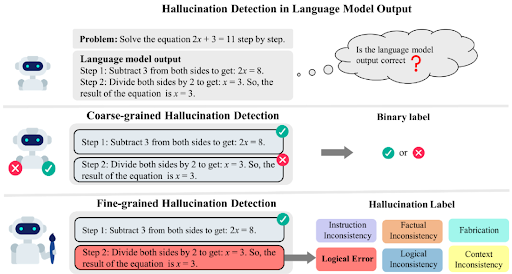

Multimodal Hallucinations

These occur in models dealing with multiple data types (text, images, audio). The model fabricates or misaligns content across modalities.

For instance, a generative model might create a photorealistic scene that includes objects not mentioned in the prompt, or a video captioning model might misinterpret video frames and describe features that do not exist.

Likewise, large audio models (LAMs) are prone to hallucinations. These errors can appear as unrealistic audio snippets, fabricated quotes or facts, and inaccuracies in capturing audio features like timbre, pitch, or background noise.

What Causes AI to Hallucinate?

AI hallucinations result from three core issues: limitations in training data (including biased data), constraints in model architecture, and the probabilistic nature of how AI models generate responses. Let’s explore them in detail.

AI systems are built by collecting large amounts of data and feeding it into a computational system that learns to identify patterns within it.

When the data is inaccurate, biased, or incomplete, the AI model develops blind spots. If the model then faces an input outside its knowledge scope (due to domain shift or out-of-distribution inputs), it tries to fill the gap with a plausible-sounding but incorrect output, because it never learned to say “I don’t know” (a response that is rare in training data).

Flawed training data is just one reason. The model’s architecture also influences this behavior. Some designs are more prone to overgeneralization, struggle with ambiguous prompts, or lack mechanisms to reconcile conflicting information, which makes them more likely to “hallucinate” under uncertainty.

Finally, because AI models predict the most statistically likely patterns in their data rather than verifying facts, they can produce false responses whenever a likely pattern merely resembles the truth. Unlike humans, AI has no real understanding or internal sense of what is real or true.

What Are The Consequences of AI Hallucinations?

The effects of AI hallucinations can vary depending on the use of a specific model. Let’s explore how these errors undermine trust in AI technologies and pose significant challenges to ensuring the safety, reliability, and integrity of decisions based on AI-generated data.

- Spreading of Misinformation: AI-generated false information can spread rapidly and cause problems in areas where accuracy is crucial, such as news, education, and science. Believable but false content can mislead people, affect public opinion, and even influence elections. In one well-known example, a Microsoft AI-generated travel article recommended the Ottawa Food Bank as a “tourist hotspot,” causing embarrassment.

- Economic and Reputational Costs: False narratives and misleading information generated by AI can cause reputational and financial harm to individuals and institutions. For instance, Deloitte agreed to refund part of its fee to Australia’s Albanese government after using generative AI in a $440,000 report on the country’s welfare system. The report contained many errors, false references, and incorrect footnotes, and it drew criticism from officials and academics over the erosion of professional standards. Similarly, the factual error in Google’s Bard demo wiped roughly $100 billion off Alphabet’s market value in a single day.

- Safety and Reliability Concerns in High-Stakes Applications: In sensitive domains like healthcare, an AI hallucination can lead to serious harm to patients. These high risks are a main reason why many healthcare organizations have postponed full-scale AI deployment, citing concerns about reliability and trust.

How to Prevent AI Hallucinations and Build Trust in AI Systems

Given these risks, the main question for any organization is: can AI hallucinations be fixed? OpenAI’s own research argues that some level of hallucination follows from the statistics of how language models are trained and evaluated, so better engineering alone cannot eliminate it.

Therefore, AI hallucinations can’t be stopped entirely, but their frequency can be greatly reduced and their damage limited through the following techniques and strategies.

Mitigating Hallucinations at the Data and Model Level

Creating more reliable AI begins with designing the AI system itself to be less prone to fabrication through improved data and training strategies. It is not about having a single solution, but about choosing the right tradeoffs.

In practice, that means using high-quality, domain-specific, and narrowly scoped training data, such as company policies and product information.

Restricting the model’s knowledge domain and fine-tuning it exclusively on vetted, authoritative sources can dramatically lower the chances of it “making things up.” As GDIT notes, training on domain-specific data cuts hallucinations because the model no longer has to guess outside its knowledge.

Since generative AI models tend to guess, one strategy is to train them to say “I don’t know” when they are unsure. This might involve changing prompts or training signals to help models express uncertainty instead of guessing.

OpenAI research suggests adding explicit confidence targets, which teach models to recognize when they lack enough evidence and to say “I don’t know” rather than generating a false answer.
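A minimal sketch of how a confidence target can work, assuming a scoring rule in which a correct answer earns 1 point, “I don’t know” earns 0, and a wrong answer costs t / (1 - t) points for a chosen target t. The function names and the probability values are hypothetical, and real models do not expose a calibrated probability this directly.

```python
def expected_score(p_correct, t):
    """Expected score of answering when a wrong answer costs t / (1 - t) points."""
    penalty = t / (1 - t)
    return p_correct * 1.0 - (1 - p_correct) * penalty

def answer_or_abstain(candidate, p_correct, t=0.75):
    """Answer only when the expected score beats abstaining, which scores 0."""
    if expected_score(p_correct, t) > 0:
        return candidate
    return "I don't know"

# Hypothetical confidence estimates for two candidate answers.
print(answer_or_abstain("Canberra", p_correct=0.9))
print(answer_or_abstain("Sydney", p_correct=0.6))
```

Under this rule, answering pays off only when the model’s chance of being right exceeds t, so guessing stops being the best strategy for uncertain questions.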

Furthermore, architectural options such as Retrieval-Augmented Generation (RAG) or hybrid systems that combine generative models with symbolic AI or rule-based logic can help minimize hallucinations. RAG grounds the model’s responses by linking it to external knowledge sources, which significantly improves factual accuracy and provides traceability to source material.
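The retrieval half of RAG can be sketched in a few lines. This is a simplified illustration, not a production design: the `DOCUMENTS` store is hypothetical, and plain word overlap stands in for the embedding search a real system would typically use. The resulting prompt would then be sent to whichever model you use.

```python
import re

# Hypothetical knowledge base; in practice this would be a document store or vector index.
DOCUMENTS = {
    "refund-policy": "Refunds are available within 30 days of purchase with a receipt.",
    "shipping": "Standard shipping takes 5 to 7 business days.",
    "warranty": "All devices include a one year limited warranty.",
}

def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (a stand-in for embedding search)."""
    q = tokenize(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(q & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Assemble a prompt that tells the model to answer only from the cited sources."""
    sources = retrieve(question, documents)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in sources)
    return (
        "Answer using only the sources below and cite the source id. "
        "If the sources do not contain the answer, say \"I don't know\".\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

print(build_prompt("Are refunds available without a receipt?", DOCUMENTS))
```

Because each source carries an id, a reviewer can trace any claim in the answer back to the passage it came from.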

Rigorously Testing and Evaluating for Hallucinations

All of the above-mentioned technical approaches can manage the hallucination rate, but none can eliminate it. Each mitigation changes the model’s error profile without erasing it.

Therefore, you need thorough testing before people can trust your AI system. The goal of testing is not to reach an impossible standard of perfection but to establish quantifiable, measurable trust: for example, knowing that the AI system performs correctly 96% of the time and that the remaining 4% of failures occur in predictable situations.

This insight turns an unpredictable AI into a manageable tool with a well-understood error profile. It provides the clarity needed to roll out systems with confidence, create a data-based plan for improvements, and innovate quickly without unknown risks.
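An error profile of this kind can be computed from a simple evaluation log. The sketch below uses invented categories and results purely for illustration. It reports overall accuracy alongside the failure rate per category, which shows where failures concentrate.

```python
from collections import defaultdict

# Hypothetical evaluation log: (category of the test case, whether the output was correct).
results = [
    ("product_faq", True), ("product_faq", True), ("product_faq", True),
    ("pricing", True), ("pricing", False),
    ("legal", False), ("legal", False), ("legal", True),
]

def error_profile(results):
    """Return overall accuracy and the failure rate for each category."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for category, correct in results:
        totals[category] += 1
        if not correct:
            failures[category] += 1
    overall = sum(correct for _, correct in results) / len(results)
    by_category = {c: failures[c] / totals[c] for c in totals}
    return overall, by_category

overall, by_category = error_profile(results)
print(f"overall accuracy: {overall:.1%}")
for category, rate in sorted(by_category.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{category}: {rate:.1%} failures")
```

In this made-up log, most failures fall in the legal category, which is the kind of predictable pattern that tells a team where to add safeguards first.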

Here are some practical strategies for evaluating your generative AI systems:

- A/B Testing (Across Models/Versions): Compare different models or versions to determine which one yields fewer errors. Test the model with challenging or misleading inputs to assess its stability. Re-run the model on the same inputs (or slight variants) to see if it flips on facts. A trustworthy model should be stable.

- Gold-Standard Comparisons: Compare the AI’s outputs against a verified dataset curated by human experts. This is the ultimate benchmark for factual accuracy in any domain. Also, explore established benchmarks for factuality. Benchmarks like TruthfulQA, HaluEval, and FActScore, or domain-specific quizzes, can measure how often the model makes true versus false statements.

- Quantitative metrics: Track domain-specific metrics over time. Simple ones include precision and recall on fact-checks, BLEU/ROUGE scores against reference answers (in QA tasks), and the hallucination rate, which is the fraction of outputs containing any false claim. Maintain these metrics as part of your model evaluation pipeline. Other metrics include the Truthfulness Score, Consistency Score, Citation Accuracy, and user trust surveys.

- Qualitative evaluation: Periodically have domain experts review AI outputs. Collect examples of subtle or critical hallucinations, and analyze patterns. Create a set of criteria for human reviewers (hallucination checklist), like checking every statistic or date against a source, or verifying each reference link.
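Two of the measurements above can be sketched as follows. The hallucination rate follows the definition given in the list. The consistency score is one possible definition, assumed here for illustration: the share of repeated runs that agree with the most common answer. All data below is invented.

```python
from collections import Counter

def hallucination_rate(outputs):
    """Fraction of outputs that contain at least one claim marked false."""
    flagged = sum(1 for claims in outputs if any(not ok for ok in claims))
    return flagged / len(outputs)

def consistency_score(answers):
    """Share of repeated runs that agree with the most common answer."""
    normalized = [a.strip().lower() for a in answers]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)

# Hypothetical fact-check results: one list per output, one boolean per claim.
checked_outputs = [
    [True, True],
    [True, False],
    [True],
    [False, False, True],
]

# Hypothetical answers from re-running the same prompt five times.
reruns = ["2004", "2004", "2021", "2004 ", "2004"]

print(hallucination_rate(checked_outputs))
print(consistency_score(reruns))
```

Tracking both numbers across model versions gives the A/B comparison described above a concrete basis.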

User and Oversight Strategies

The most reliable safeguard against AI hallucinations is human judgment. Trustworthy AI is not about replacing humans but augmenting them.

- Embed Human Oversight: Organizations must build human verification into AI workflows, as no technical solution can replace critical thinking.

- Foster AI Literacy: Educating users on AI’s limitations enables them to write more specific prompts and critically question outputs.
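As a rough illustration of embedding human oversight, the sketch below routes each AI draft to one of two review paths. Everything here is an assumption made for the example: the `Draft` fields, the threshold, and the route names are hypothetical, and a real workflow would define its own criteria. Note that no path skips humans entirely. The lighter path still relies on periodic spot checks.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    text: str
    confidence: float    # hypothetical score from the model or a separate checker
    has_citations: bool  # whether every claim links to a source

def route(draft, min_confidence=0.8):
    """Require full human review unless the draft is both confident and sourced."""
    if draft.confidence >= min_confidence and draft.has_citations:
        return "spot_check"    # sampled periodically by a reviewer
    return "human_review"      # must be verified before use

drafts = [
    Draft("Refunds are available within 30 days.", 0.95, True),
    Draft("The policy changed last year.", 0.95, False),
    Draft("Shipping is always free.", 0.40, True),
]

for d in drafts:
    print(route(d), "|", d.text)
```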

What Are AI Hallucinations? A Guide to Causes and Prevention was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.