Agentic AI in Action — Part 11 — Building a Fully Autonomous Multi-Agent Analytics System in…

Building a Fully Autonomous Multi-Agent Analytics System in Snowflake

Enterprise analytics has historically been a human-driven process. A business stakeholder asks a question, an analyst interprets the intent, retrieves data, analyzes trends, and presents insights. Even as data platforms have become more powerful, the responsibility for reasoning has remained external to the system. The database stores information, but it does not understand questions, determine analytical steps, or generate conclusions.

Snowflake can now host autonomous analytics agents that interpret intent, retrieve enterprise context, reason over operational data, validate their conclusions, and persist interactions. These agents operate directly where governed enterprise data resides, eliminating architectural fragmentation and improving reliability.

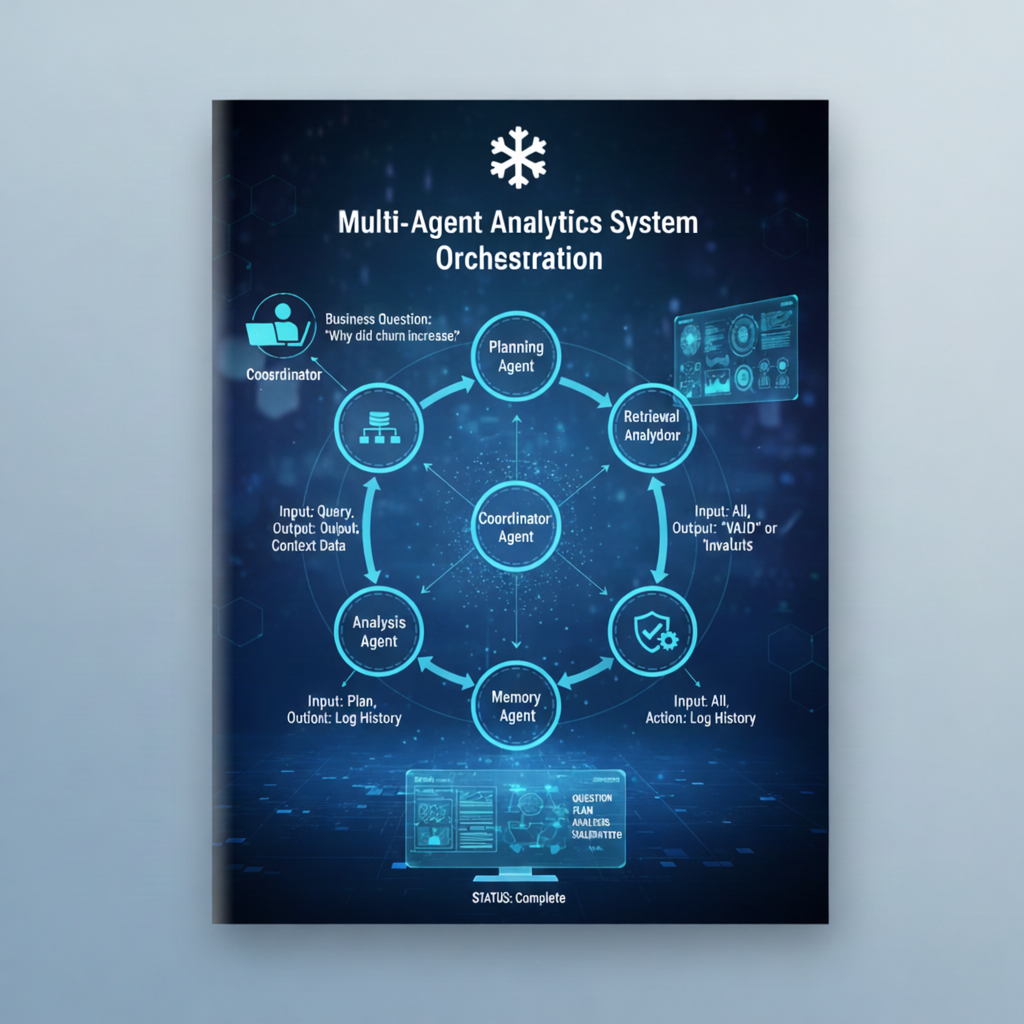

The most effective way to build such systems is through a multi-agent architecture, where specialized agents collaborate through a controlled execution workflow. Each agent handles a specific responsibility (planning, retrieval, analysis, validation, or memory), and all of them are coordinated through a central orchestration layer.

The Dataset:

To demonstrate this architecture, we use a simple SaaS customer retention dataset containing quarterly operational and customer experience metrics from 2021 through 2025. This dataset captures churn rate, customer growth, support performance, satisfaction scores, pricing changes, and product release events. These variables reflect the interconnected nature of customer retention, making it possible for autonomous agents to identify causal relationships and generate meaningful insights.

Step 1: Establishing the Data Foundation

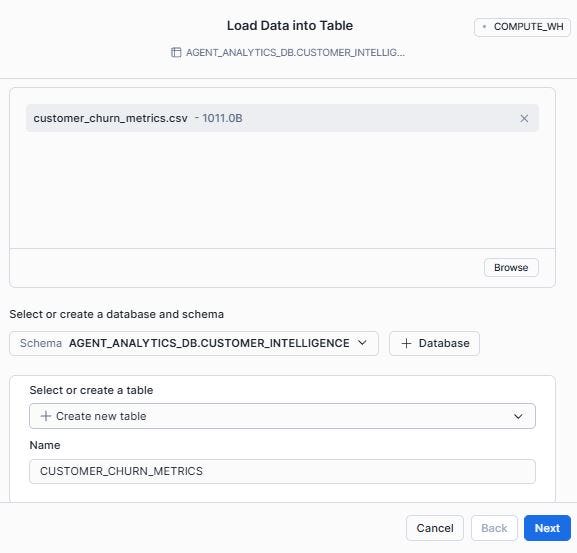

Before an autonomous agent can reason, it must have access to governed enterprise data. Snowflake enables agents to operate directly within the data platform, ensuring that reasoning occurs on trusted, consistent data without requiring external movement or duplication.

The first step is to create a dedicated database and schema that will host both the operational data and the agents themselves.
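The setup statements themselves are not reproduced in this post. As a minimal sketch, the DDL could be generated as below, assuming that CUSTOMER_INTELLIGENCE is the schema name (the two-part object names used in the procedures suggest this) and that the database name is up to the reader. `ANALYTICS_DEMO` is a hypothetical placeholder, and `setup_statements` is an illustrative helper, not part of any Snowflake library.

```python
def setup_statements(database: str, schema: str = "CUSTOMER_INTELLIGENCE") -> list[str]:
    """Return the DDL to run before loading the CSV.

    `database` is a placeholder chosen by the reader; the schema name is an
    assumption based on the object names used in the procedures.
    """
    return [
        f"CREATE DATABASE IF NOT EXISTS {database}",
        f"CREATE SCHEMA IF NOT EXISTS {database}.{schema}",
        f"USE SCHEMA {database}.{schema}",
    ]


if __name__ == "__main__":
    # ANALYTICS_DEMO is a hypothetical database name used only for illustration.
    for statement in setup_statements("ANALYTICS_DEMO"):
        print(statement + ";")
```

The printed statements can be pasted into a worksheet, or each one can be passed to `session.sql(...).collect()` from a Snowpark session.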

Next, import the provided data file (customer_churn_metrics.csv) using the Snowsight UI and create the CUSTOMER_CHURN_METRICS table.

Step 2: The Planning Agent: Translating Business Intent into an Execution Plan

The first responsibility of an autonomous analytics system is to understand intent. When a business user asks a question, the system must determine what needs to be analyzed, which data is relevant, and how the analysis should proceed.

This is fundamentally different from traditional dashboards, where the user must already know where to look. Here, the system itself becomes responsible for translating intent into execution.

This responsibility belongs to the Planning Agent.

In Snowflake, Cortex COMPLETE provides the reasoning capability required for this step. Because the Cortex call has to be wrapped in procedural logic that builds the prompt and returns the result, the Planning Agent is implemented as a Python stored procedure.

CREATE OR REPLACE PROCEDURE CUSTOMER_INTELLIGENCE.AGENT_PLAN(input_query STRING)
RETURNS STRING
LANGUAGE PYTHON
RUNTIME_VERSION = '3.10'
PACKAGES = ('snowflake-snowpark-python')
HANDLER = 'run'
AS
$$
def run(session, input_query):
    # Build the planning prompt around the user's business question
    prompt = (
        "You are an enterprise analytics planning agent.\n\n"
        "Your task is to convert the business question into a structured analytical plan.\n\n"
        f"Business Question:\n{input_query}\n\n"
        "Return a clear step-by-step plan describing:\n"
        "- What data should be examined\n"
        "- What trends should be analyzed\n"
        "- What factors should be evaluated\n"
    )

    # Escape backslashes and single quotes so the prompt is a valid SQL string literal
    escaped_prompt = prompt.replace("\\", "\\\\").replace("'", "''")

    query = f"""
        SELECT SNOWFLAKE.CORTEX.COMPLETE(
            'snowflake-arctic',
            '{escaped_prompt}'
        )
    """

    result = session.sql(query).collect()
    return result[0][0]
$$;

This procedure accepts a natural language query and converts it into structured reasoning steps. This ensures that subsequent agents operate with a clear analytical objective rather than attempting to infer intent independently.

This agent does not perform analysis itself. Instead, it determines how analysis should be performed. This separation between planning and execution is a key architectural principle in agent systems and improves reliability, traceability, and control.
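One detail worth isolating is how the prompt ends up inside the SQL text. The sketch below pulls the prompt construction and quoting out into plain functions so they can be checked outside Snowflake. The helper names (`build_planning_prompt`, `to_sql_literal`, `build_complete_sql`) are hypothetical and not part of Snowpark; the prompt wording and model name are taken from the procedure above.

```python
def build_planning_prompt(input_query: str) -> str:
    """Assemble the planning prompt in the same shape the procedure uses."""
    return (
        "You are an enterprise analytics planning agent.\n\n"
        "Your task is to convert the business question into a structured analytical plan.\n\n"
        f"Business Question:\n{input_query}\n\n"
        "Return a clear step-by-step plan describing:\n"
        "- What data should be examined\n"
        "- What trends should be analyzed\n"
        "- What factors should be evaluated\n"
    )


def to_sql_literal(text: str) -> str:
    """Quote text as a single-quoted SQL string constant.

    Backslashes are doubled first because Snowflake treats them as escape
    characters inside single-quoted constants; single quotes are then doubled.
    """
    return "'" + text.replace("\\", "\\\\").replace("'", "''") + "'"


def build_complete_sql(prompt: str, model: str = "snowflake-arctic") -> str:
    """Build the SELECT statement that sends the prompt to Cortex COMPLETE."""
    return (
        "SELECT SNOWFLAKE.CORTEX.COMPLETE("
        f"{to_sql_literal(model)}, {to_sql_literal(prompt)})"
    )


if __name__ == "__main__":
    question = "Why did churn increase in 2023 Q4?"
    print(build_complete_sql(build_planning_prompt(question)))
```

An alternative that avoids manual quoting altogether is to pass the prompt as a bind parameter through `session.sql(query, params=[...])`, which is the approach the Coordinator uses for its INSERT statement.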

Step 3: The Retrieval Agent: Providing Grounded Enterprise Context

Once intent has been established, the system must retrieve relevant enterprise data. Reasoning without context leads to hallucination. Autonomous analytics systems must reason over governed operational state rather than relying on generalized knowledge.

The Retrieval Agent gathers the relevant operational metrics from Snowflake tables and prepares them as structured reasoning context.

Because this step does not require LLM reasoning, it makes no Cortex call. It is a stored procedure that queries the metrics table and serializes the rows as JSON.

CREATE OR REPLACE PROCEDURE CUSTOMER_INTELLIGENCE.AGENT_RETRIEVE_CONTEXT()
RETURNS STRING
LANGUAGE PYTHON
RUNTIME_VERSION = '3.10'
PACKAGES = ('snowflake-snowpark-python')
HANDLER = 'run'
AS
$$
import json

def run(session):
    # Read every quarter of metrics in chronological order
    df = session.sql("""
        SELECT *
        FROM CUSTOMER_INTELLIGENCE.CUSTOMER_CHURN_METRICS
        ORDER BY quarter
    """)
    rows = df.collect()

    # Convert each row into a plain dictionary the model can read as JSON
    context = []
    for row in rows:
        context.append({
            "quarter": row["QUARTER"],
            "churn_rate": float(row["CHURN_RATE"]),
            "support_tickets": int(row["SUPPORT_TICKETS"]),
            "resolution_time": float(row["AVG_RESOLUTION_HOURS"]),
            "nps_score": int(row["NPS_SCORE"]),
            "price_change": bool(row["PRICE_CHANGE_FLAG"]),
            "product_release": bool(row["MAJOR_RELEASE_FLAG"])
        })

    return json.dumps(context)
$$;

This procedure transforms relational data into structured contextual input suitable for reasoning. This grounding step ensures that analysis reflects actual enterprise conditions.
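The row-to-JSON mapping can be exercised locally with plain dictionaries. The sketch below mirrors the procedure's field mapping and adds one hedge: depending on how the CSV columns were typed at load time, the flag columns may arrive as BOOLEAN, numeric, or text, and Python's `bool("FALSE")` evaluates to `True`. The `to_flag` helper is a hypothetical addition to guard against that case, and the sample values are made up for illustration.

```python
import json


def to_flag(value) -> bool:
    """Coerce a flag column value to bool regardless of how it was loaded."""
    if isinstance(value, str):
        return value.strip().lower() in {"1", "true", "t", "yes", "y"}
    return bool(value)


def row_to_context(row) -> dict:
    """Map one metrics row (any mapping keyed by column name) to the context shape."""
    return {
        "quarter": row["QUARTER"],
        "churn_rate": float(row["CHURN_RATE"]),
        "support_tickets": int(row["SUPPORT_TICKETS"]),
        "resolution_time": float(row["AVG_RESOLUTION_HOURS"]),
        "nps_score": int(row["NPS_SCORE"]),
        "price_change": to_flag(row["PRICE_CHANGE_FLAG"]),
        "product_release": to_flag(row["MAJOR_RELEASE_FLAG"]),
    }


# Made-up values for illustration only; they are not taken from the dataset.
sample_row = {
    "QUARTER": "2023-Q4",
    "CHURN_RATE": "5.5",
    "SUPPORT_TICKETS": 100,
    "AVG_RESOLUTION_HOURS": 12,
    "NPS_SCORE": 30,
    "PRICE_CHANGE_FLAG": "FALSE",
    "MAJOR_RELEASE_FLAG": 1,
}

if __name__ == "__main__":
    print(json.dumps([row_to_context(sample_row)], indent=2))
```

If the flag columns are already BOOLEAN in the table, `to_flag` behaves exactly like `bool`, so the extra check costs nothing.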

Step 4: The Analysis Agent: Generating Insights Through Reasoning

With intent defined and context retrieved, the Analysis Agent performs the core reasoning task. This agent interprets operational metrics, identifies patterns, correlates events, and generates grounded insights.

This is where Snowflake transitions from executing queries to executing reasoning workflows.

CREATE OR REPLACE PROCEDURE CUSTOMER_INTELLIGENCE.AGENT_ANALYZE(
    input_query STRING,
    input_plan STRING,
    input_context STRING
)
RETURNS STRING
LANGUAGE PYTHON
RUNTIME_VERSION = '3.10'
PACKAGES = ('snowflake-snowpark-python')
HANDLER = 'run'
AS
$$
def run(session, input_query, input_plan, input_context):
    # Combine the question, the plan, and the retrieved context into one prompt
    prompt = (
        "You are an enterprise analytics agent.\n\n"
        "Analyze enterprise data and explain the root causes of the observed behavior.\n\n"
        f"Business Question:\n{input_query}\n\n"
        f"Execution Plan:\n{input_plan}\n\n"
        f"Enterprise Data Context:\n{input_context}\n\n"
        "Your task:\n"
        "- Identify key trends\n"
        "- Correlate operational factors\n"
        "- Explain root causes\n"
        "- Provide actionable insights\n"
    )

    # Escape backslashes and single quotes so the prompt is a valid SQL string literal
    escaped_prompt = prompt.replace("\\", "\\\\").replace("'", "''")

    query = f"""
        SELECT SNOWFLAKE.CORTEX.COMPLETE(
            'snowflake-arctic',
            '{escaped_prompt}'
        )
    """

    result = session.sql(query).collect()
    return result[0][0]
$$;

This agent combines intent and enterprise context into a reasoning prompt. Cortex COMPLETE then generates insights grounded in operational data.

This is the step where Snowflake acts as a reasoning engine rather than only a query engine: the agent synthesizes operational state into meaningful business insight.

Step 5: The Validation Agent: Ensuring Reliability and Grounding

Enterprise analytics systems must produce reliable and explainable outputs. Autonomous agents must verify their conclusions before presenting results. The Validation Agent evaluates whether the generated analysis is logically sound and grounded in enterprise context.

This introduces a verification layer that improves trust and reduces unsupported conclusions.

CREATE OR REPLACE PROCEDURE CUSTOMER_INTELLIGENCE.AGENT_VALIDATE(
    input_query STRING,
    input_plan STRING,
    input_context STRING,
    input_analysis STRING
)
RETURNS STRING
LANGUAGE PYTHON
RUNTIME_VERSION = '3.10'
PACKAGES = ('snowflake-snowpark-python')
HANDLER = 'run'
AS
$$
def run(session, input_query, input_plan, input_context, input_analysis):
    # Give the model everything it needs to check the analysis against the data
    prompt = (
        "You are a validation agent in an enterprise analytics system.\n\n"
        f"Question:\n{input_query}\n\n"
        f"Execution Plan:\n{input_plan}\n\n"
        f"Enterprise Context:\n{input_context}\n\n"
        f"Generated Analysis:\n{input_analysis}\n\n"
        "Determine whether the analysis is grounded, logically correct, "
        "and supported by the context.\n\n"
        "Return VALID: <reason> or INVALID: <reason>"
    )

    # Escape backslashes and single quotes so the prompt is a valid SQL string literal
    escaped_prompt = prompt.replace("\\", "\\\\").replace("'", "''")

    query = f"""
        SELECT SNOWFLAKE.CORTEX.COMPLETE(
            'snowflake-arctic',
            '{escaped_prompt}'
        )
    """

    result = session.sql(query).collect()
    return result[0][0]
$$;

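The agent returns a verdict prefixed with VALID or INVALID followed by a short reason, giving downstream steps an explicit signal about whether the analysis is supported by the context.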
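Because the verdict comes back as free text, a caller that wants to act on it needs to parse it. The following is a minimal sketch, assuming the model follows the `VALID: <reason>` / `INVALID: <reason>` format requested in the prompt. `parse_validation` is a hypothetical helper, and treating any unrecognized reply as not valid is a conservative design choice rather than something the original procedure does.

```python
import re

# Matches "VALID: reason" or "INVALID: reason", ignoring case and leading whitespace.
_VERDICT = re.compile(r"^\s*(VALID|INVALID)\s*:\s*(.*)$", re.IGNORECASE | re.DOTALL)


def parse_validation(result: str) -> tuple[bool, str]:
    """Split the validation reply into (is_valid, reason).

    Replies that do not follow the requested format are treated as not valid,
    since model output is not guaranteed to respect the instruction.
    """
    match = _VERDICT.match(result)
    if match is None:
        return False, result.strip()
    label, reason = match.groups()
    return label.upper() == "VALID", reason.strip()


if __name__ == "__main__":
    print(parse_validation("VALID: the trends cited appear in the context"))
    print(parse_validation("The analysis looks fine to me."))
```

Requiring the colon keeps a reply such as "Validation passed" from being misread as a VALID verdict.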

Step 6: The Memory Agent: Persisting Interaction History

Autonomous systems benefit from persistence. Each interaction becomes part of the system’s operational awareness, enabling auditability and continuity.

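Snowflake tables serve as persistent memory. Each run is written to the CUSTOMER_INTELLIGENCE.AGENT_MEMORY_LOG table, which records the user query, execution plan, enterprise context, generated response, and validation result.

This allows the system to retain historical reasoning workflows.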
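The table definition is not shown in this post. A minimal sketch of what it could look like follows, with the five column names taken from the Coordinator's INSERT statement. The STRING types and the extra `created_at` column are assumptions, and `create_memory_table` is a hypothetical helper that works with any object exposing Snowpark's `session.sql(...).collect()` pattern.

```python
MEMORY_TABLE_DDL = """
CREATE TABLE IF NOT EXISTS CUSTOMER_INTELLIGENCE.AGENT_MEMORY_LOG (
    user_query         STRING,
    execution_plan     STRING,
    enterprise_context STRING,
    generated_response STRING,
    validation_result  STRING,
    -- Assumed extra column: filled by its default, so the INSERT can omit it
    created_at         TIMESTAMP_LTZ DEFAULT CURRENT_TIMESTAMP()
)
"""


def create_memory_table(session) -> None:
    """Create the memory table if it does not exist yet."""
    session.sql(MEMORY_TABLE_DDL).collect()
```

Because the Coordinator names its target columns explicitly, an additional defaulted column like `created_at` does not require any change to the INSERT.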

Step 7: The Coordinator Agent: Orchestrating Multi-Agent Execution

The Coordinator Agent manages the execution of all other agents. It ensures that planning, retrieval, analysis, validation, and persistence occur in sequence.

This orchestration layer transforms independent agents into a cohesive autonomous system.

CREATE OR REPLACE PROCEDURE CUSTOMER_INTELLIGENCE.RUN_AUTONOMOUS_ANALYTICS(input_query STRING)
RETURNS STRING
LANGUAGE PYTHON
RUNTIME_VERSION = '3.10'
PACKAGES = ('snowflake-snowpark-python')
HANDLER = 'run'
AS
$$
def run(session, input_query):
    # Step 1: Planning Agent
    plan = session.call(
        "CUSTOMER_INTELLIGENCE.AGENT_PLAN",
        input_query
    )

    # Step 2: Retrieval Agent
    context = session.call(
        "CUSTOMER_INTELLIGENCE.AGENT_RETRIEVE_CONTEXT"
    )

    # Step 3: Analysis Agent
    analysis = session.call(
        "CUSTOMER_INTELLIGENCE.AGENT_ANALYZE",
        input_query,
        plan,
        context
    )

    # Step 4: Validation Agent
    validation = session.call(
        "CUSTOMER_INTELLIGENCE.AGENT_VALIDATE",
        input_query,
        plan,
        context,
        analysis
    )

    # Step 5: Store agent memory
    insert_sql = """
        INSERT INTO CUSTOMER_INTELLIGENCE.AGENT_MEMORY_LOG
        (
            user_query,
            execution_plan,
            enterprise_context,
            generated_response,
            validation_result
        )
        VALUES (?, ?, ?, ?, ?)
    """
    session.sql(
        insert_sql,
        params=[input_query, plan, context, analysis, validation]
    ).collect()

    # Step 6: Return structured response
    final_response = f"""
    AUTONOMOUS ANALYTICS RESULT
    ===========================
    QUESTION:
    {input_query}
    EXECUTION PLAN:
    {plan}
    ANALYSIS:
    {analysis}
    VALIDATION:
    {validation}
    STATUS:
    Complete
    """

    return final_response
$$;
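The sequencing logic can also be expressed without a Snowflake session, which makes it easy to test with stubs. The sketch below is an assumption-laden variant rather than the procedure above: `run_pipeline` is a hypothetical function that takes each agent as a plain callable, and it sets the status from the validation verdict instead of always reporting "Complete".

```python
from typing import Callable, Dict


def run_pipeline(
    question: str,
    plan_fn: Callable[[str], str],
    retrieve_fn: Callable[[], str],
    analyze_fn: Callable[[str, str, str], str],
    validate_fn: Callable[[str, str, str, str], str],
    store_fn: Callable[[Dict[str, str]], None],
) -> Dict[str, str]:
    """Run plan -> retrieve -> analyze -> validate -> store, in that order."""
    plan = plan_fn(question)
    context = retrieve_fn()
    analysis = analyze_fn(question, plan, context)
    validation = validate_fn(question, plan, context, analysis)

    # Same fields the Coordinator writes to AGENT_MEMORY_LOG
    record = {
        "user_query": question,
        "execution_plan": plan,
        "enterprise_context": context,
        "generated_response": analysis,
        "validation_result": validation,
    }
    store_fn(record)

    # Assumed policy: only a reply starting with "VALID:" counts as complete
    is_valid = validation.strip().upper().startswith("VALID:")
    return {**record, "status": "Complete" if is_valid else "Needs review"}
```

Inside Snowflake, each callable would wrap the corresponding `session.call(...)`, and `store_fn` would wrap the parameterized INSERT.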

Step 8: Executing the Autonomous Analytics Agent

Once deployed, the entire system can be invoked using a single call.

CALL CUSTOMER_INTELLIGENCE.RUN_AUTONOMOUS_ANALYTICS(
    'Why did churn increase in 2023 Q4?'
);

Reviewing the output

The output consists of the following key sections:

(The file Output.txt is part of the code files and contains the output of the procedure call for easy reference)

1. Execution Plan: How the System Approaches the Problem

The Execution Plan is generated by the Planning Agent. It translates the business question into structured analytical steps such as examining churn trends, comparing historical performance, and identifying contributing factors.

As observed, the EXECUTION PLAN consists of the following planning steps:

1. Data Collection

2. Data Cleaning and Preparation

3. Exploratory Data Analysis

4. Customer Segmentation

5. Cohort Analysis

6. Event Analysis

7. Predictive Modeling

8. Root Cause Analysis

9. Recommendations and Action Plan

10. Monitoring and Evaluation

This shows that the system first determines how to analyze the problem, rather than immediately producing conclusions.

2. Analysis: What the System Learned from Enterprise Data

The Analysis section is produced by the Analysis Agent after examining the actual data stored in Snowflake. It expands on the key trends, correlations, root causes, and actionable insights.

It identified key operational signals in 2023 Q4:

- Increase in support tickets

- Increase in resolution time

- Decrease in NPS score

- Increase in churn rate

These observations indicate that declining customer experience and slower issue resolution were strongly associated with higher churn. The model linked the rise in support tickets and longer resolution times to lower customer satisfaction, as reflected in the NPS scores, and concluded that deteriorating support performance was a primary operational driver of churn.

This demonstrates reasoning grounded in enterprise data, not generic assumptions.

Based on its analysis, the agent recommended improvements such as:

- Strengthening customer support capacity

- Reducing resolution time

- Improving customer satisfaction

This transforms the system from a reporting tool into a decision-support system.

3. Validation: Ensuring the Results Are Reliable

The Validation Agent independently evaluated the analysis and returned a VALID verdict (the full text is in Output.txt).

This confirms that the conclusions are logically consistent and supported by the enterprise data.

Putting it all Together:

This architecture shows how specialized agents collaborate sequentially to autonomously answer a business question: the Coordinator Agent initiates the process, the Planning Agent defines the analytical approach, the Retrieval Agent gathers enterprise data, the Analysis Agent generates insights, the Validation Agent verifies correctness, and the Memory Agent stores the results before the Coordinator delivers the final conclusion.

In summary, what we have built is more than workflow automation. It is a shift in where intelligence resides.

Each agent plays a distinct and necessary role:

- The Planning Agent translates intent into analytical structure.
- The Retrieval Agent grounds the system in governed enterprise context.
- The Analysis Agent performs reasoning and identifies causal relationships.
- The Validation Agent ensures correctness and reliability.
- The Memory layer provides continuity and auditability.
- The Coordinator orchestrates these agents into a cohesive autonomous loop.

Together, they form an active intelligence execution layer.

This architecture has profound implications. Enterprise systems no longer need to wait for analysts to interpret operational signals. The platform itself can continuously interpret its own state, explain changes, and surface insights. Analytics shifts from being reactive and human-mediated to proactive and autonomous. Importantly, this intelligence remains fully governed. The agents operate directly on Snowflake data, respecting access controls, lineage, and security boundaries. This preserves trust while enabling autonomy.

This is the foundation of a new class of enterprise systems: systems that do not merely store and serve data, but understand it.

The code and data files for this blog can be accessed here.

I share hands-on, implementation-focused perspectives on Generative & Agentic AI, LLMs, Snowflake and Cortex AI, translating advanced capabilities into practical, real-world analytics use cases. Do follow me on LinkedIn and Medium for more such insights.

Agentic AI in Action — Part 11 — Building a Fully Autonomous Multi-Agent Analytics System in… was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.