Tokens and Context are a Modern Shopping Cart in an ‘AI Supermarket’

A modern exploration of how AI reads, remembers, and reasons with a full breakdown of today’s leading models.

There is a supermarket unlike any other. Its shelves stretch beyond what the eye can see, stocked with every word ever written, every pattern ever formed, every idea ever expressed. This is the AI supermarket and when you step inside, you need two things to make sense of it all: tokens and context. One is your currency. The other is your cart.

“Tokens are what you pay; Context is what you can carry.”

Most people interact with AI models daily without ever thinking about the machinery beneath. They type a message, get a response, and move on. But understanding how tokens and context work is not just a technical curiosity, it is the key to unlocking why AI behaves the way it does, “why it sometimes forgets, why it sometimes costs more, and why some models feel sharper than others”.

The Currency of Intelligence — What Is a Token?

Before an AI model reads a single word you write, it runs your text through a process called tokenization. Rather than processing text letter-by-letter or word-by-word, the model breaks language into chunks called tokens, that sit somewhere between characters and full words. Common short words become single tokens. Longer or rarer words are split into multiple pieces.

“A token is just a small chunk of text — the basic unit an AI model reads and writes in”.

As a practical rule of thumb, one token is roughly three-quarters of a word. One hundred tokens is approximately seventy-five words. A typical paragraph like this one contains somewhere between fifty and eighty tokens.

“Every word you type, every sentence the AI generates — it all flows through the economy of tokens.”

This matters for two reasons. First, AI models have a maximum number of tokens they can process at once then the context window. Second, most AI APIs charge based on the number of tokens consumed. Input tokens, meaning what you send, and output tokens, meaning what the model generates, both contribute to the bill. Load more into your cart, and you pay more at checkout.

Why Not Just Use Words?

Tokenization is a practical middle ground. Processing text character-by-character would create sequences too long to manage. Processing full words would require a vocabulary so enormous it would become computationally unmanageable. Tokens solve this by breaking text into sub-word units, for e.g. the word “tokenization” might become “token” and “ization,” the word “unbelievable” might split three ways. This flexibility is what makes modern language models robust against new words, typos, and multilingual text.

Think of it like this: instead of reading letter-by-letter or word-by-word, AI models break text into pieces that are roughly syllable-to-word sized. For example:

- “cat” → 1 token

- “unbelievable” → might be 3 tokens: “un” + “believ” + “able”

- “Artificial intelligence is cool” → roughly 6–8 tokens

The model converts these text chunks into numbers, does its math, and converts the numbers back into text when responding. It never actually “reads” words the way humans do but it processes streams of these numeric token IDs.

The practical reason you’d care about tokens is that AI models have a token limit (how much text they can handle at once), and APIs typically charge per token used. So a longer conversation or document = more tokens = higher cost.

A simple rule of thumb: 1 token ≈ ¾ of a word, or about 100 tokens ≈ 75 words.

If the cart is the limit, tokens are the items and their price tags.

- Granularity: In a supermarket, you don’t just buy “a meal”; you buy the ingredients. In AI, you don’t process “sentences”; you process tokens (roughly 4 characters or 0.75 words).

- Efficiency: A “Modern Shopping Cart” user is savvy. They look for “concentrated” items. In AI terms, this is Prompt Engineering — finding the most efficient way to pack information into the cart so you don’t waste “money” (tokens) on fluff.

The Cart That Carries Everything — the “Context Window”

If tokens are the currency, the context window is the shopping cart. It is the bounded space within which the AI model operates which is the total amount of text it can see, process, and reason over at any given moment.

Everything competes for space inside that cart: your current message, the entire conversation history, any documents or code you have pasted in, system-level instructions, and the model’s own previous replies. The moment content exceeds the cart’s capacity, something gets left behind and typically the oldest material, which quietly falls out of the model’s awareness.

“The AI supermarket has infinite shelves. But your cart can only carry so much before something gets left behind.”

Size Is Not Everything

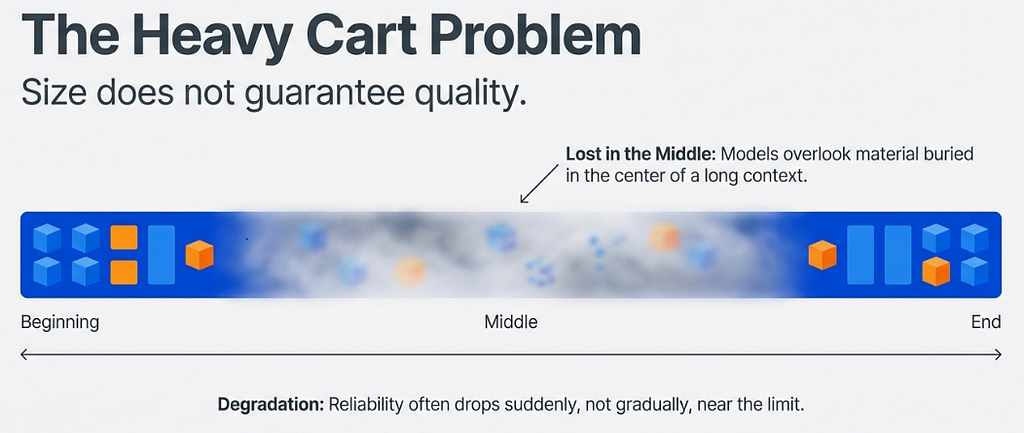

A large context window is genuinely powerful, hence it means you can paste in an entire codebase, a lengthy legal document, or hours of transcripts and ask the model to reason across all of it. But raw size does not guarantee quality.

Research has revealed two persistent limitations. The first is the lost-in-the-middle problem: models pay more attention to content at the beginning and end of their context, overlooking material buried in the middle. The second is performance degradation near capacity, where most models become less reliable as they approach their token limit, often dropping suddenly rather than gradually.

So Then — What is an AI Context Window?

The context window is the maximum amount of text an AI model can “see” and work with at one time, think of it as the model’s short-term memory.

Everything within that window is what the model uses to understand and respond: your instructions, the conversation history, any documents you paste in, and its own previous replies. Once something falls outside the window, the model simply can’t access it anymore.

Why does size matter?

A small context window (e.g. 4K–8K tokens) means the model forgets earlier parts of a long conversation quickly. A large context window (e.g. 200K tokens) lets you paste in entire books, codebases, or legal documents and have the model reason across all of it.

Context Size — What it fits roughly

The key limitation

A large context window doesn’t guarantee the model actually uses all the information well. Two known problems:

- Lost in the middle — models tend to pay more attention to content at the beginning and end of the context, and can miss details buried in the middle.

- Degraded performance — most models become less reliable as you approach their maximum token limit, even if they technically “accept” the input.

Context window vs. memory

These are often confused:

- Context window = temporary, resets every conversation, everything is visible to the model at once

- Memory = a separate feature (like Claude’s memory or ChatGPT’s memory) that summarizes and stores key facts across conversations, then injects them into the context window when relevant

So memory is essentially a workaround for the context window’s temporary nature.

Here’s a breakdown of token limits for the major AI models as of early 2026:

Anthropic (Claude)

- Claude Sonnet 4 / Opus 4: 200,000 tokens (up to 1 million in extended API access)

OpenAI (GPT)

- GPT-4o: 128,000 tokens

- GPT-5: 400,000 tokens

Google (Gemini)

- Gemini 2.5 Flash: ~200,000 tokens

- Gemini 2.5 Pro: 1,000,000 tokens

- Gemini 1.5 Pro: up to 2,000,000 tokens (the largest among mainstream models)

- Gemini 3 Pro: 1,000,000 tokens

Meta (Llama)

- Llama 3.1: up to 128,000 tokens

- Llama 4: up to 10,000,000 tokens (experimental)

DeepSeek

- DeepSeek models: competitive context windows with very low cost (~$0.48/million tokens)

Magic LTM-2-Mini (specialized)

- 100,000,000 tokens — purpose-built for software dev, not a general-purpose model

A few important caveats

Real-world performance often degrades well before the advertised limit. A model claiming 200K tokens typically becomes unreliable around 130K, with sudden performance drops rather than gradual degradation.

Also, models struggle with information placed in the middle of long contexts which is known as the “lost in the middle” problem in situations where accuracy drops dramatically for content in the middle, even if it fits within the token limit.

So bigger isn’t always better, the quality of how a model uses its context matters just as much as the raw number.

Tokens as a Real-World Yardstick

The context window numbers only become meaningful when you translate them into real-world content. One hundred thousand tokens is roughly a full-length novel. Two hundred thousand tokens is a standard legal contract plus all its exhibits, appendices, and correspondence. One million tokens is a small library. Ten million tokens is a large codebase. You do not think in tokens. But you do know what fits in a cart, and now you know roughly what fits in each model’s cart. Choose accordingly.

Conclusion Shop Wisely

Understanding tokens and context does not require a computer science degree. It requires the same intuition you bring to any resource-constrained environment. You have a cart of fixed size. Every word costs something. Some items belong in that cart more than others.

When you write a rambling prompt that meanders before reaching the point, you are filling your cart with items you do not need. When you paste an entire document to ask about one paragraph, you are hoping the model finds what matters in a pile. If you Experienced while using the AI Models, the AI forgets something from early in a long conversation, now you know why. It fell out of the cart.

“Tokens are your currency, context is your cart — welcome to the AI supermarket.”

The AI supermarket is extraordinary. Its shelves hold the distilled knowledge of generations, and its models grow more capable by the month. But the cart is yours to manage. Use it with intention, choose the right model for the right task, and the intelligence waiting on those shelves becomes something genuinely remarkable. Waste it, and you will leave the store with less than you came for.

References

[1] Anthropic. (2025). Claude Models Overview — Context Windows & Pricing. Anthropic Developer Documentation.

[2] OpenAI. (2025). OpenAI API Pricing. OpenAI.

[3] Google. (2025). Gemini API Pricing. Google AI Developer.

[4] Meta AI. (2025). Llama 4: Multimodal Models with Native Long Context. Meta AI Research.

[5] DeepSeek. (2025). DeepSeek API Pricing. DeepSeek Platform.

[6] Mistral AI. (2025). Mistral Large: Model Overview & Pricing. Mistral AI.

[7] Artificial Analysis. (2026). AI Model Comparison: Context Windows & Pricing.

[8] Liu, N. F., et al. (2023). Lost in the Middle: How Language Models Use Long Contexts.

Tokens and Context are a Modern Shopping Cart in an ‘AI Supermarket’ was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.