Agentic AI in Action - Part 10 - Beyond Frameworks: Building the Core Loop From First Principles

Beyond Frameworks: Building the Agent Core Loop From First Principles

Earlier posts in this Agentic AI series walked through practical examples built with frameworks. We also examined guardrails, governance, and why many impressive agent demos struggle when they encounter real operational complexity. At this point, it is useful to pause and strip everything back to first principles.

Frameworks play an important role in accelerating the development of agentic systems and in turning ideas into working solutions quickly. At the same time, their real value becomes clearer when we understand the underlying mechanics they build upon, particularly how decisions evolve over time. When we momentarily look beneath these layers, agentic behavior does not disappear; instead, its foundation becomes visible as a simple but powerful loop that observes state, decides what to do next, acts on that decision, and updates itself until a stable outcome is reached.

This blog focuses on that loop. We will simulate it in a simple scenario, intentionally avoiding any framework, orchestration library, or planner. The objective is clarity, not convenience. Every decision the agent makes is visible, every transition is explainable, and every outcome can be traced back to a concrete rule.

What Makes a System Truly Agentic

A system becomes agentic not because it uses a language model or orchestration library, but because it progresses through decisions over time. Agentic systems do not simply respond. They move.

This movement is defined by the ability to reason about the current state, incorporate past actions, choose an appropriate next step, and then change state in response to that action. If any of these elements is missing, the system either becomes purely reactive or enters infinite loops that look intelligent but never converge.

Understanding this distinction is critical, especially when building systems that must operate reliably outside of demos.

By removing frameworks and even LLM calls from the equation, this example makes the agent’s reasoning loop explicit, allowing us to study agentic behavior without abstraction.

The Use Case: Support Ticket Processing

Support ticket processing provides a clear and realistic context for agentic behavior. Tickets are rarely resolved in a single step. Some require classification before action, others demand escalation, and some must pause until additional information is available.

To keep behavior predictable and auditable, the agent is constrained to five actions.

Classify the issue

Ask for clarification

Propose a resolution

Escalate to a human

Close the ticket

By limiting the action space, the agent is forced to reason deliberately rather than improvising behavior.

The above illustration will become clearer as we walk through the ten steps below.

Step 1: The Source File (ticket_data.csv)

The agent begins with no assumptions and no internal knowledge. Its entire view of the world comes from incoming data of open tickets stored in a CSV file (ticket_data.csv). A sample extract from the file is displayed below.

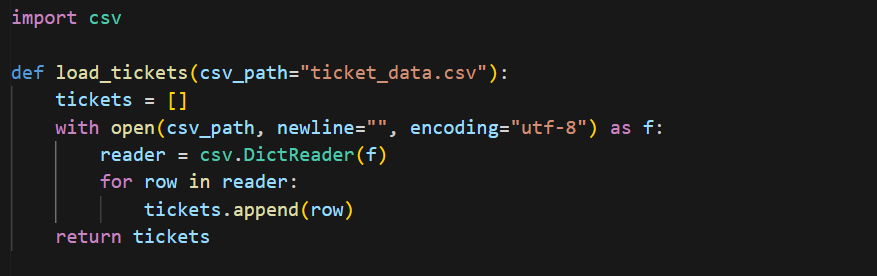

Step 2: Loading Tickets Into the System

This step exists purely to ingest state. No reasoning happens here. Separating ingestion from decision-making ensures that the agent logic remains clean, testable, and extensible.

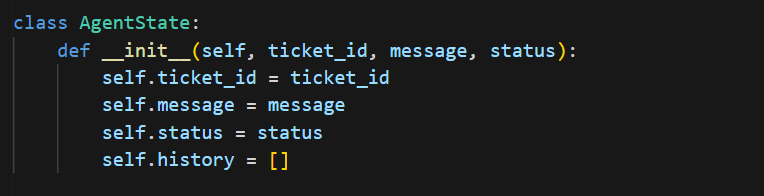
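Since the loading code appears only as an image, here is a minimal sketch of what this ingestion step might look like. It assumes the CSV has ticket_id, message, and status columns (names borrowed from the AgentState fields in Step 3). The load_tickets name and the sample rows are hypothetical and only there to make the sketch runnable.

```python
import csv
import io
from typing import Dict, List


def load_tickets(source) -> List[Dict[str, str]]:
    """Read ticket rows from an open file-like object. No reasoning happens here."""
    return [dict(row) for row in csv.DictReader(source)]


# Illustrative rows only; the real ticket_data.csv may look different.
SAMPLE_CSV = """ticket_id,message,status
T-001,I was charged twice on my invoice,open
T-002,The app crashes whenever I log in,open
"""

tickets = load_tickets(io.StringIO(SAMPLE_CSV))
print(len(tickets), tickets[0]["ticket_id"])
```

With the real file, the same function could be called as `with open("ticket_data.csv", newline="") as f: tickets = load_tickets(f)`. Keeping the function free of any decision logic is what makes it easy to test on its own.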

Step 3: Defining Agent State

Agent state captures everything the agent knows about a ticket at a given moment.

This step defines the agent’s working memory through the AgentState object, which captures the ticket context in ticket_id, message, and status, along with a running history of actions taken. By making state explicit and persistent across steps, the agent can reason about what has already happened and avoid repeating decisions.

The history field is especially important, as agentic behavior is defined by sequences of actions rather than final answers. Without explicit state and history, agents lose awareness of their own behavior and repeat decisions endlessly.
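One way to express this state is a small dataclass. The field names come from the description above; the default of "open" for status and the choice to store history as a list of action names are assumptions of this sketch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"  # assumed default for a newly loaded ticket
    # Ordered record of actions taken; each state gets its own list.
    history: List[str] = field(default_factory=list)


state = AgentState(ticket_id="T-001", message="I was charged twice")
state.history.append("classify")
print(state)
```

Using default_factory matters here: a plain `history=[]` default would be shared across tickets, and the agent would then "remember" actions it took on a different ticket.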

Step 4: Reasoning About the Next Action

This function represents the agent’s reasoning step. Its responsibility is not to solve tickets, but to decide what should happen next. Crucially, it uses both the ticket content and the agent’s past actions. This prevents repetition and ensures forward progress.

In this step, the call_llm(state) function decides the agent’s next action by evaluating both state.message and state.history. The ticket text provides the external context, while state.history ensures the agent does not repeat actions. Early checks on state.status prevent further reasoning once a ticket is no longer open, and keywords in msg trigger immediate escalation for high-risk issues. For billing-related tickets, the function enforces an explicit sequence by returning classify before propose_resolution, while sensitive requests return ask_clarification instead of proceeding unsafely. For all other cases, a single propose_resolution is allowed before returning close. By centralizing these decisions in one function, the agent guarantees forward progress, convergence, and transparent behavior.
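The rules described above could be sketched roughly as follows. The keyword lists are illustrative guesses rather than the notebook's actual triggers, history is assumed to hold plain action names, and returning None for a ticket that is no longer open is also an assumption of this sketch.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Illustrative keyword lists; the notebook's actual triggers may differ.
ESCALATION_KEYWORDS = ("crash", "outage")
SENSITIVE_KEYWORDS = ("password", "delete my account")
BILLING_KEYWORDS = ("billing", "invoice", "charged")


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)  # action names, in order


def call_llm(state: AgentState) -> Optional[str]:
    """Rule-based stand-in for a model call: returns the next action name."""
    # Stop reasoning once the ticket has left the open state.
    if state.status != "open":
        return None
    msg = state.message.lower()
    # High-risk issues go straight to a human.
    if any(word in msg for word in ESCALATION_KEYWORDS):
        return "escalate"
    # Sensitive requests pause for more information.
    if any(word in msg for word in SENSITIVE_KEYWORDS):
        return "ask_clarification"
    # Billing tickets must be classified before a resolution is proposed.
    if any(word in msg for word in BILLING_KEYWORDS) and "classify" not in state.history:
        return "classify"
    # Only one resolution proposal is allowed; after that, close.
    if "propose_resolution" not in state.history:
        return "propose_resolution"
    return "close"


billing = AgentState("T-001", "I was charged twice on my invoice")
first = call_llm(billing)
billing.history.append(first)
second = call_llm(billing)
print(first, second)
```

Notice that the same ticket text produces a different answer on the second call. The only thing that changed is state.history, which is exactly what makes the function a step in a loop rather than a one-shot classifier.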

Step 5: Selecting the Next Action

This step isolates decision logic from execution. It allows decision-making to evolve independently, whether that means introducing a real language model, adding policy checks, or layering governance controls.
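A thin wrapper is one way to get this separation. In the sketch below, decide_next_action delegates to a reasoner and checks the result against the five allowed actions. The reasoner parameter, the ALLOWED_ACTIONS set, and the fallback to escalate are assumptions added for illustration; call_llm here is a trivial stand-in for the Step 4 function.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

ALLOWED_ACTIONS = {
    "classify", "ask_clarification", "propose_resolution", "escalate", "close",
}


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)


def call_llm(state: AgentState) -> Optional[str]:
    """Trivial stand-in for the Step 4 reasoning function."""
    if "propose_resolution" not in state.history:
        return "propose_resolution"
    return "close"


def decide_next_action(
    state: AgentState,
    reasoner: Callable[[AgentState], Optional[str]] = call_llm,
) -> str:
    """Ask the reasoner for an action and refuse anything outside the action space."""
    action = reasoner(state)
    if action not in ALLOWED_ACTIONS:
        # Assumed policy: hand unknown or missing actions to a human.
        return "escalate"
    return action


state = AgentState("T-001", "How do I export my data?")
print(decide_next_action(state))
print(decide_next_action(state, reasoner=lambda s: "drop_database"))
```

Because the wrapper owns the validation, swapping the rule-based reasoner for a real model later would not require touching the execution code.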

Step 6: Executing Actions and Updating State

This step turns decisions into outcomes. Every action must change state in a meaningful way. If actions do not move the agent closer to a terminal state, the system will loop indefinitely while appearing confident.

In this step, the execute_action(action, state) function applies the chosen action by updating both state.history and state.status.

Each supported action produces a deliberate state transition:

1. classify: appends context to state.history without closing the ticket,

2. ask_clarification: records the request and sets state.status to waiting,

3. propose_resolution: marks the issue as addressed and transitions the ticket to closed,

4. escalate: moves the ticket to an escalated terminal state.

These updates ensure that every action has a tangible effect on the agent’s state, preventing repeated decisions and guaranteeing that the agent moves toward a stable endpoint. By making state changes explicit in this function, the system ensures that action execution and state progression remain tightly coupled and fully explainable.
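The transitions listed above can be captured in a small lookup table. This is a sketch under a few assumptions: history stores the action name, the close action is assumed to leave the ticket in the same closed status as propose_resolution, and unsupported actions raise an error rather than being ignored.

```python
from dataclasses import dataclass, field
from typing import List

# Status each action leaves the ticket in.
# "close" is not described in the list above; mapping it to "closed" is an assumption.
NEXT_STATUS = {
    "classify": "open",
    "ask_clarification": "waiting",
    "propose_resolution": "closed",
    "escalate": "escalated",
    "close": "closed",
}


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)


def execute_action(action: str, state: AgentState) -> AgentState:
    """Record the action and apply its state transition."""
    if action not in NEXT_STATUS:
        raise ValueError(f"Unsupported action: {action}")
    state.history.append(action)
    state.status = NEXT_STATUS[action]
    return state


state = AgentState("T-001", "I was charged twice")
execute_action("classify", state)
execute_action("propose_resolution", state)
print(state.status, state.history)
```

Putting the transitions in one table makes it easy to audit which actions are terminal: every entry except classify moves the ticket out of the open status.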

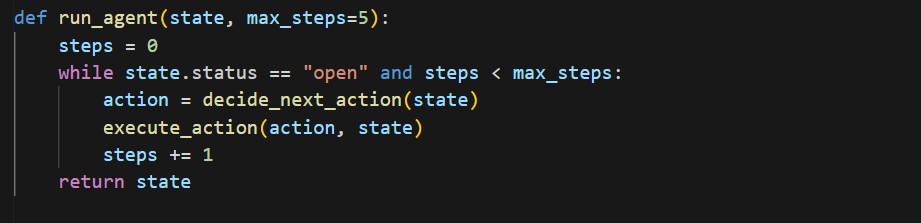

Step 7: The Agentic Loop

This loop is the agent. It is where reasoning, action, and state updates come together. Without this loop, the system would be reactive rather than agentic.

The run_agent(state, max_steps=5) function forms the agentic loop by repeatedly calling decide_next_action(state) and applying the result through execute_action(action, state) while the ticket remains open. The loop naturally terminates as actions update state.status to a terminal value such as closed, waiting, or escalated, ensuring the agent progresses and converges rather than repeating decisions.
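Here is a minimal sketch of such a loop. The run_agent signature follows the text; decide_next_action and execute_action are reduced to small stand-ins so the block runs on its own, and they are not the full versions from Steps 5 and 6.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)


def decide_next_action(state: AgentState) -> str:
    # Stand-in policy: classify once, then propose a resolution.
    return "classify" if "classify" not in state.history else "propose_resolution"


def execute_action(action: str, state: AgentState) -> None:
    # Stand-in executor: record the action; a proposed resolution closes the ticket.
    state.history.append(action)
    if action == "propose_resolution":
        state.status = "closed"


def run_agent(state: AgentState, max_steps: int = 5) -> AgentState:
    """Observe, decide, act, update; stop on a non-open status or the step budget."""
    steps = 0
    while state.status == "open" and steps < max_steps:
        action = decide_next_action(state)
        execute_action(action, state)
        steps += 1
    return state


done = run_agent(AgentState("T-001", "I was charged twice"))
print(done.status, done.history)
```

The max_steps bound is a safety net. If a rule ever fails to move the ticket out of the open status, the loop still stops instead of spinning forever.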

Step 8: Running the Agent Across All Tickets

Each ticket progresses independently through the same logic, much as tickets would be handled side by side in a real deployment.

The agent is applied uniformly across all incoming tickets by iterating over the loaded records and initializing a new AgentState for each one. Calling run_agent(state) ensures that every ticket follows the same decision logic while progressing independently through its own reasoning trajectory, mirroring how agentic systems operate in real environments.
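The iteration described above might look like the sketch below. The process_tickets helper and its run parameter are hypothetical names, and the run_agent shown is a deliberately tiny stand-in for the loop from Step 7.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)


def run_agent(state: AgentState, max_steps: int = 5) -> AgentState:
    # Tiny stand-in for the Step 7 loop: escalate crashes, resolve everything else.
    if "crash" in state.message.lower():
        state.history.append("escalate")
        state.status = "escalated"
    else:
        state.history.append("propose_resolution")
        state.status = "closed"
    return state


def process_tickets(
    tickets: List[Dict[str, str]],
    run: Callable[[AgentState], AgentState] = run_agent,
) -> List[AgentState]:
    """Create a fresh AgentState per ticket and run the same agent on each."""
    results = []
    for row in tickets:
        state = AgentState(ticket_id=row["ticket_id"], message=row["message"])
        results.append(run(state))
    return results


# Illustrative records, shaped like the rows loaded in Step 2.
tickets = [
    {"ticket_id": "T-001", "message": "How do I export my data?"},
    {"ticket_id": "T-002", "message": "The app crashes on login"},
]
results = process_tickets(tickets)
print([(s.ticket_id, s.status) for s in results])
```

Because each ticket gets its own AgentState, nothing learned or recorded for one ticket can leak into the trajectory of another.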

Step 9: Inspecting Agent Trajectories

The code block shown below surfaces the agent’s behavior by printing each ticket’s final state.status alongside the ordered entries in state.history, making the full decision trajectory visible and explainable rather than just the final outcome.

This output is not a log, but an explanation of the agent’s behavior.
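A small formatter is enough to surface these trajectories. The format_trajectory name and the output layout are assumptions of this sketch, and the two states are hand-built examples rather than results from the notebook.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    ticket_id: str
    message: str
    status: str = "open"
    history: List[str] = field(default_factory=list)


def format_trajectory(state: AgentState) -> str:
    """Render the final status together with the ordered actions that led to it."""
    path = " -> ".join(state.history) if state.history else "(no actions)"
    return f"{state.ticket_id} [{state.status}]: {path}"


# Hand-built example states for illustration only.
states = [
    AgentState("T-001", "I was charged twice", "closed", ["classify", "propose_resolution"]),
    AgentState("T-002", "The app crashes on login", "escalated", ["escalate"]),
]

for state in states:
    print(format_trajectory(state))
```

Printing the path next to the status is what turns the output into an explanation: two tickets that end in the same status can still be told apart by how they got there.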

Step 10: Analyzing the Trajectories

To understand how the agent behaves in practice, it is important to look beyond final outcomes and examine the sequence of decisions the agent makes as it moves each ticket toward resolution, escalation, or an intentional pause.

- The Multi-Step Path: For billing issues, notice the agent didn’t just “guess.” It followed a logical sequence: Classify → Propose Resolution → Close. This ordered progression is the hallmark of a system that “reasons” through state.

- The Safety Valve: When the agent encountered “system crashes,” it recognized its own limitations. It didn’t hallucinate a fix; it Escalated immediately. In an agentic system, knowing when not to act is as important as the action itself.

- The Intentional Pause: For sensitive or ambiguous requests, the agent moved to a Waiting state. This isn’t a failure or an error; it’s a deliberate terminal state that prevents unsafe autonomous action.

Let us examine a few rows.

Consider the ticket:

This ticket is related to a billing issue. The agent first classified the ticket to establish context, then proposed a resolution, and closed the ticket. The key detail is not the closure, but the ordered progression.

Now compare it with:

This ticket referenced system crashes. The agent recognized that automated handling was inappropriate and escalated immediately. Escalation here is an intentional outcome, not a failure.

Straightforward requests show a simpler path, like:

The agent did not over-process these tickets. It moved directly to resolution.

Some tickets require restraint:

These involve sensitive or ambiguous requests. The agent paused rather than guessing, treating waiting as a valid terminal state.

Across all examples, the agent never repeats itself and never skips steps. Each decision moves it toward resolution, escalation, or an intentional pause.

This design makes agentic behavior explicit, auditable, and trustworthy. Decisions unfold step by step, state transitions are visible, and outcomes are intentional rather than accidental. Instead of asking a model for answers, the system repeatedly asks what should happen next and moves itself toward a stable outcome.

Agentic AI frameworks remain central to building scalable, reliable, and production-ready systems, offering capabilities such as orchestration, observability, governance, and integration that simple implementations cannot provide on their own. Their real value becomes even clearer when the underlying decision loop is well understood. When you build from first principles, “Agentic AI” stops being a black box and starts being a predictable, auditable tool for real-world operations. With that foundation in place, frameworks stop feeling abstract and instead become deliberate design choices.

The notebook and data associated with this blog can be accessed here.


Agentic AI in Action - Part 10 - Beyond Frameworks: Building the Core Loop From First Principles was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.