LAI #115: The Hidden Cost of “Agent-First” Thinking

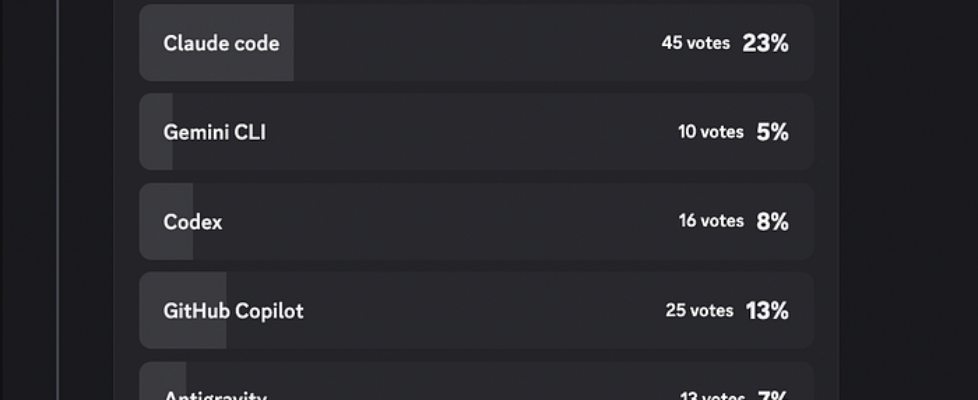

Author(s): Towards AI Editorial Team

Originally published on Towards AI.

Good morning, AI enthusiasts!

AI is getting embedded into real workflows: repos, data platforms, enterprise search, and production infrastructure. And as that happens, a pattern is showing up everywhere: the biggest failures aren’t model failures. They’re systems failures: brittle architectures, missing reproducibility, unclear trust boundaries, and performance bottlenecks nobody budgeted for. That’s what this week’s issue is about.

We cover why reproducibility is pushing teams from data lakes to lakehouses, how local-first agents are evolving (and why security and drift matter more when you run them on your own machine), and what it takes to build RAG systems that stay grounded with citations in real enterprise stacks. We also go down a level into the fundamentals with the geometry that explains linear regression clearly, and a practical breakdown of TPU architecture so you can reason about performance without treating hardware as a black box. Let’s get into it.

What’s AI Weekly

Most teams don’t get “agent systems” wrong because they picked the wrong framework or model. They get them wrong because they make the architecture decision too early, before they’ve asked what kind of work the system actually needs to do. And this week, in What’s AI, that’s the problem the video tackles: if you don’t match the system design to the task’s shape, you end up with something that looks sophisticated but is fragile, expensive, and constantly begging for rework. Watch the full video on YouTube.

Louis-François Bouchard, Towards AI Co-founder & Head of Community

Learn AI Together Community Section!

Featured Community post from the Discord

Psbigbig_71676 has built WFGY, an open source (MIT) text-only reasoning core. It is a single PDF you paste into the system prompt, then you just use your model normally.
The goal is to reduce hallucinations, have more stable multi-step reasoning, and reduce drift across follow-ups. You can check it out on GitHub and support a fellow community member. If you have any feedback, share it in the thread.

AI poll of the week!

This poll makes one thing pretty clear: a lot of you are actively using coding agents now (Claude Code is the most common pick), but plain chat is still a close default for many, and the rest is a pretty even spread across Cursor, Copilot, and a long tail of other tools, which tells me people are still experimenting rather than settling on one workflow. The simplest explanation is that chat is great when you want to talk through a problem or sanity-check an approach, while agents earn their keep when there’s real repo work to do across files and you don’t want to keep copying context back and forth.

Where’s your trust boundary with coding agents right now, and what are you comfortable letting them do unsupervised (edits, refactors, dependency bumps, running tests, opening PRs)? Let’s discuss in the thread!

Collaboration Opportunities

The Learn AI Together Discord community is flooding with collaboration opportunities. If you are excited to dive into applied AI, want a study partner, or even want to find a partner for your passion project, join the collaboration channel! Keep an eye on this section, too. We share cool opportunities every week!

1. Awixor has built Sunder, a Chrome extension that acts as a local privacy firewall between the user and any AI chat interface. They are looking for a contributor who can help with Rust, privacy engineering, or browser extensions. If this sounds interesting to you, connect with them in the thread.
2. Nigs01 is learning AI/ML/DL and is looking to create a group to study together. If you have a group or want to join one, reach out to them in the thread.
3. Stofboy is starting to explore ways to monetize some of the agents they’ve been creating, and wants to connect with others interested in doing it. If this sounds relevant, connect with them in the thread.

Meme of the week!

Meme shared by bin4ry_d3struct0r

TAI Curated Section

Article of the week

From Data Lake to Data Lakehouse: Why AI Changes The Rules For Data Platforms By Sarah Lea

This article examines the shift from traditional data lakes to data lakehouses, driven by AI’s reproducibility demands. While data lakes offer flexibility through schema-on-read, they often lack consistent versioning, which can turn them into data swamps, where model results change because underlying data shifts go untracked. The piece illustrates this with a churn-model simulation, comparing a mutable data lake approach with a versioned lakehouse setup. Using tools like DuckDB and SHA-256 hashing, it shows how table-like abstractions and transaction logs help developers determine whether performance improvements stem from the model or from data modifications.

Our must-read articles

1. OpenClaw + Ollama + Security Guide: The ULTIMATE LOCAL AI Assistant Agent has ARRIVED! By Gao Dalie (高達烈)

   Following the viral surge of Clawdbot, this article explores OpenClaw, an open-source personal AI agent that runs locally on your hardware. Unlike traditional chat assistants, OpenClaw is positioned as an autonomous partner that can execute tasks across platforms like Slack and WhatsApp. It highlights a self-evolving architecture where agents discover skills through ClawHub and manage learning via Git-backed persistent memory. By integrating with Ollama, users can run LLMs locally without API fees. Alongside a practical setup guide, the piece also flags security risks and stresses the need for sandboxed environments when running high-privilege local agents.

2. Build a RAG-Powered AI Agent with Microsoft Foundry and Foundry IQ via the Azure Portal By Laura Verghote

   This guide walks through building a production-ready RAG chatbot using Microsoft Foundry and Foundry IQ through the Azure Portal. By integrating Azure AI Search and Blob Storage, it shows how to move beyond a model’s static knowledge and build an agent that returns grounded answers with source citations. The tutorial covers the core architecture, including deploying GPT-4o and embedding models, creating vector indices, and configuring retrieval, then closes with testing and publishing.

3. The Geometry of Linear Equations and Linear […]