Trying to clarify something about the Bellman equation


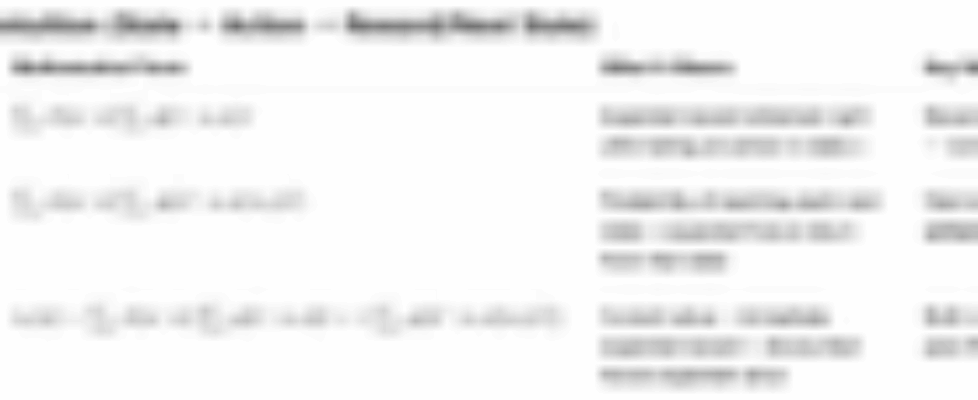

I’m checking whether my understanding is correct. In an MDP, is it accurate to say that a state does NOT directly produce a reward or a next state? Instead, the structure is always: State → Action → (Reward, Next State). So:

Meaning both the reward and the transition depend on (s, a), not on s alone. Is this the correct way to think about it?

submitted by /u/New-Yogurtcloset1818
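To make the question concrete, here is a minimal sketch of how I picture it, assuming a made-up two-state MDP. The state names, actions, probabilities, rewards, and the discount `GAMMA` are all hypothetical and only for illustration. The dynamics table `P` is keyed by the pair (s, a), and each entry lists possible (probability, next state, reward) outcomes, so nothing is defined for a state on its own. The backup inside `value_iteration` is the usual Bellman optimality form, V(s) = max_a Σ p(s', r | s, a) [r + γ V(s')].

```python
# Hypothetical two-state MDP; every name and number here is an illustrative assumption.
# P[(s, a)] lists the (probability, next_state, reward) outcomes of taking action a in state s.
P = {
    ("s0", "stay"): [(1.0, "s0", 0.0)],
    ("s0", "go"):   [(0.8, "s1", 1.0), (0.2, "s0", 0.0)],
    ("s1", "stay"): [(1.0, "s1", 2.0)],
    ("s1", "go"):   [(1.0, "s0", 0.0)],
}
STATES = ["s0", "s1"]
ACTIONS = ["stay", "go"]
GAMMA = 0.9  # assumed discount factor


def q_value(V, s, a):
    """Expected return of taking a in s, then following the values in V."""
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in P[(s, a)])


def value_iteration(tol=1e-10, max_iters=10_000):
    """Repeat the Bellman optimality backup until the values stop changing."""
    V = {s: 0.0 for s in STATES}
    for _ in range(max_iters):
        new_V = {s: max(q_value(V, s, a) for a in ACTIONS) for s in STATES}
        if max(abs(new_V[s] - V[s]) for s in STATES) < tol:
            return new_V
        V = new_V
    return V


V = value_iteration()
print(V)
```

Note that the lookup is always `P[(s, a)]`; there is no `P[s]`. In this sketch the reward is attached to each outcome, so it can also vary with the next state, which is a slightly more general setup than a reward that depends on (s, a) only.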
