What Are LLM Parameters? A Simple Explanation of Weights, Biases, and Scale

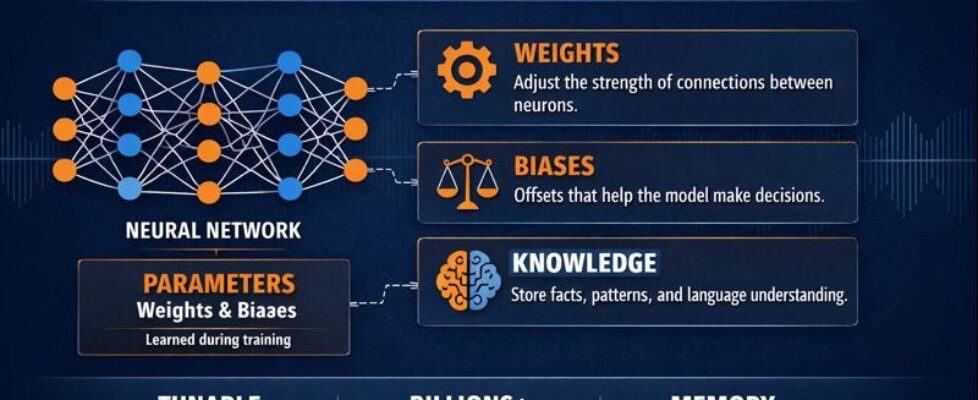

Author(s): Mandar Panse

Originally published on Towards AI.

No complicated words. Just real talk about how this stuff works.

Large Language Models (LLMs) like GPT, LLaMA, and Mistral contain billions of parameters, primarily weights and biases, that define how the model understands language.

Everyone talks about “GPT-4 has 1.76 trillion parameters” like it’s supposed to mean something. (That figure is an unofficial estimate; OpenAI has never published GPT-4’s size.) But most people just smile and pretend they understand.

Let’s actually explain what this means. No confusing tech words. No showing off. Just clear answers.

What Exactly Is a Parameter?

A parameter is just a number. That’s all it is.

Think about baking cookies. The recipe says “2 cups of flour” and “1 cup of sugar.” Those numbers (2 and 1) control how the cookies turn out. Change those numbers and you get different cookies.

Parameters work exactly the same way. They’re numbers that control how the AI behaves. But instead of the handful of numbers in a recipe, an AI model has billions of them.

These numbers are usually decimals like 0.543 or -0.891. They sit inside the model in huge lists. When someone types a question, these numbers get used in calculations to figure out the answer.

The model takes your words, turns them into numbers, does a ton of math with all these parameters, and turns the result back into words. That’s your answer.

Every single parameter plays a tiny role in that process. Change one parameter and the answer usually changes only slightly, if at all. Change a million parameters and the answer can change completely.

What Do These Numbers Actually Mean?

Each parameter value determines part of how the model thinks.

Imagine tuning a guitar. Each string needs to be set to exactly the right tightness. Too loose and it sounds wrong. Too tight and it sounds wrong. Get all six strings just right and it sounds beautiful.

Parameters are like that, except there are billions of “strings” instead of six. Each one needs to be set to the right value for the model to work properly.
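To make “a parameter is just a number” concrete, here is a minimal Python sketch of the kind of small calculation a model repeats enormous numbers of times: multiply each input by a weight, add the results up, then add a bias. The function name `weighted_sum` and every number in it are made up for illustration. A real LLM stacks huge numbers of these calculations and mixes in other steps, but the weights and the bias here are parameters in exactly the sense described above.

```python
def weighted_sum(inputs, weights, bias):
    """One tiny calculation: each input times its weight, summed, plus a bias.

    The weights and the bias are the parameters (4 numbers in this example)."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias


inputs = [1.0, 0.5, 2.0]          # hypothetical numbers standing in for some words
weights = [0.543, -0.891, 0.25]   # three weight parameters (made up)
bias = 0.1                        # one bias parameter (made up)

before = weighted_sum(inputs, weights, bias)

# Nudge a single parameter and run the same calculation again.
weights[0] = 0.6
after = weighted_sum(inputs, weights, bias)

print(f"before: {before:.4f}, after: {after:.4f}")  # prints: before: 0.6975, after: 0.7545
```

Changing one weight from 0.543 to 0.6 moves the result only a little, which is the “change one parameter and the answer changes slightly” idea in miniature.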
Say parameter number 5,482 is set to 0.732, and that helps the model recognize certain patterns in language. Change it to 0.941 and those patterns look different. Get all those billions of parameters set correctly and the model can write essays, answer questions, and have conversations.

Nobody knows exactly what each individual parameter does. They all work together in complex ways. But we know that the right combination of all these numbers makes the model smart.

How Does Training Set These Numbers?

This part is actually pretty amazing.

At the start of training, all the parameters are random numbers. The model is basically useless. It gives garbage answers to everything.

Then training begins. The model sees examples of text and tries to learn patterns. Here’s how it works:

- The model sees: “The cat sat on the”
- It tries to guess the next word: “banana”
- The training system says: “Wrong. The answer is ‘mat’”

Now here’s the magic: every single parameter in the entire model gets adjusted, just a tiny amount. Maybe parameter 1 goes from 0.5430 to 0.5431. That’s a tiny change. Each nudge goes in whichever direction would have made “mat” a slightly more likely guess.

Then it tries again:

- The model sees: “The dog ran through the”
- Model guesses: “window”
- Training system: “Wrong. Answer is ‘park’”

Again, every parameter adjusts slightly.

Over a full training run, the model makes trillions of these guesses. Guess, check, adjust. Guess, check, adjust. Over and over and over. (In practice, guesses are handled in large batches, and the parameters are adjusted once per batch rather than after every single guess.)

After weeks of this on massive computers, those parameters have learned patterns of language. They’re no longer random. They’re tuned to recognize how words fit together, what questions mean, and how to respond.

Nobody manually sets parameter number 8,291,047 to 0.6234. The training process figures out the right value through repetition. Show enough examples and the model learns on its own.

It’s like learning to ride a bike. At first, everything is wobbly and wrong. But after falling down a hundred times, your body figures out the balance. Training does that, but with billions of numbers instead of your body.
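The guess, check, adjust loop can be sketched in a few lines of Python. This is a toy under loudly stated assumptions: one parameter instead of billions, and a made-up pattern (the output should be twice the input) instead of language. It uses plain gradient descent on squared error, the basic idea behind how LLMs are trained; real training scores next-word guesses, computes an adjustment for every parameter at once (backpropagation), and usually relies on more sophisticated optimizers such as Adam. The function `train` and its settings are hypothetical.

```python
import random


def train(examples, epochs=1000, learning_rate=0.01, seed=0):
    """Toy training loop: learn one parameter w so that w * x matches the target."""
    rng = random.Random(seed)
    w = rng.uniform(-1.0, 1.0)  # start from a random number, like an untrained model
    for _ in range(epochs):
        for x, target in examples:
            guess = w * x                  # guess
            error = guess - target         # check: how far off was it?
            gradient = 2 * error * x       # slope of error**2 with respect to w
            w -= learning_rate * gradient  # adjust: a small step in the helpful direction
    return w


# The hidden pattern is "target = 2 * input"; the loop is never told this directly.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w = train(examples)
print(round(w, 4))  # prints 2.0
```

Each update is small (scaled by `learning_rate`), so no single example drags the parameter far; the right value emerges only from many repetitions, just as the text describes.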
How Size Relates to Parameter Count

More parameters means a bigger model. Simple as that.

Think about different phones:

- Basic phone: 32 GB storage, holds some apps and photos
- Medium phone: 128 GB storage, holds lots more
- Big phone: 512 GB storage, holds everything

AI models work the same way. At 2 bytes per parameter (the standard 16-bit format explained below):

- 7 billion parameters needs about 14 GB of space. It fits on a regular hard drive, and a decent computer with enough memory can run it. Responses come back fast.
- 70 billion parameters needs about 140 GB of space. Now you need serious computer equipment. Most regular computers can’t handle this. Responses take longer.
- 700 billion parameters needs about 1,400 GB of space. That’s massive. Only big companies with server rooms can run this, and responses are slower still.

The name tells you the size. When you see “Llama 3 70B,” that means 70 billion parameters. When you see “Mistral 7B,” that’s 7 billion parameters.

Bigger number means bigger file size, which means a more powerful computer, slower responses, and higher costs to run.

This is why ChatGPT runs in the cloud instead of on your phone. The model is too big to fit on a phone. Even if it did fit, running it would drain your phone battery very quickly.

What Is Quantization? The Clever Compression Trick

Here’s where things get really practical and interesting.

Remember how a 70 billion parameter model needs 140 GB of space? That’s because each parameter normally takes up 2 bytes of storage. This is called “16-bit” or “fp16” format.

But here’s a clever trick: what if we could squeeze those numbers down to take less space? That’s exactly what quantization does.

It’s like compressing a photo. A high-quality photo might be 10 MB. Compress it and it becomes 2 MB. It loses a tiny bit of quality, but most people can’t tell the difference.

Quantization does the same thing to model parameters.
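The size figures above are simple arithmetic: number of parameters times bytes per parameter. Here is a minimal Python sketch, assuming 2 bytes per parameter for 16-bit, 1 byte for 8-bit, and half a byte for 4-bit storage. It counts the stored weights only. Actually running a model needs extra memory on top of this (for example, for intermediate results while it generates text), and real quantized files are usually a little larger because they also store scaling factors. Treat the results as rough lower bounds. `model_size_gb` is a hypothetical helper name.

```python
# Assumed bytes needed to store one parameter in each format.
BYTES_PER_PARAM = {"fp16": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def model_size_gb(num_params, fmt="fp16"):
    """Approximate storage for a model's weights, in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


for billions in (7, 70, 700):
    sizes = ", ".join(
        f"{fmt}: {model_size_gb(billions * 10**9, fmt):,.1f} GB"
        for fmt in BYTES_PER_PARAM
    )
    print(f"{billions}B parameters -> {sizes}")
```

For a 70B model this gives 140 GB at 16-bit, 70 GB at 8-bit, and 35 GB at 4-bit, which is why quantization matters so much for running big models on ordinary hardware.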
- Normal parameters (16-bit): very precise numbers like 0.5474
- Quantized parameters (8-bit): less precise numbers like 0.547
- Super quantized (4-bit): even less precise, like 0.5

The numbers are rounded and simplified. […]
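The rounding can be sketched with one simple scheme, symmetric “absmax” quantization: pick a single scale factor so the biggest weight maps onto the biggest small integer (127 for 8-bit, 7 for 4-bit), store every weight as a rounded integer, and multiply by the scale to get an approximate value back. This scheme is an illustrative assumption, not the exact method any particular tool uses; real quantization tools typically refine it, for example by keeping a separate scale for each small block of weights. The function names and weight values below are made up.

```python
def quantize(weights, bits):
    """Symmetric absmax quantization: map each weight to a small integer.

    One shared scale is chosen so the largest |weight| lands on the largest
    allowed integer (127 for 8-bit, 7 for 4-bit)."""
    qmax = 2 ** (bits - 1) - 1
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0  # avoid dividing by zero
    return [round(w / scale) for w in weights], scale


def dequantize(q, scale):
    """Turn the stored integers back into approximate decimal numbers."""
    return [v * scale for v in q]


weights = [0.5474, -0.891, 0.1234, 0.003]  # hypothetical parameter values

for bits in (8, 4):
    q, scale = quantize(weights, bits)
    restored = dequantize(q, scale)
    print(f"{bits}-bit integers: {q}")
    print(f"{bits}-bit restored: {[round(v, 4) for v in restored]}")
```

With these made-up weights, 0.5474 comes back as about 0.5472 at 8 bits but about 0.5091 at 4 bits, and the tiny 0.003 rounds to 0 in both cases: fewer bits means coarser steps between the values that can be stored.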