Word Embeddings in NLP: From Bag-of-Words to Transformers (Part 1)

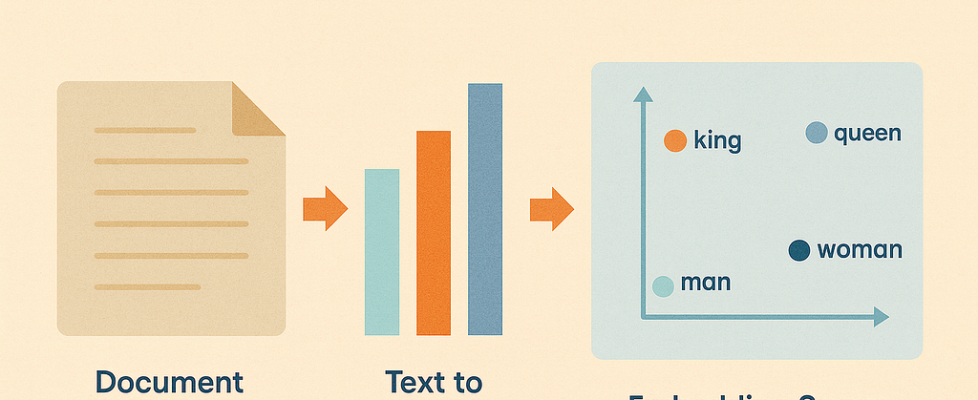

Author(s): Sivasai Yadav Mudugandla

Originally published on Towards AI.

Image generated with Microsoft Copilot

· 1. Introduction: Why Computers Struggle with Language
· 2. What Are Word Embeddings and Why Do We Need Them?
  ∘ The Map Analogy
  ∘ Why We Need Them
  ∘ Word embeddings fix this by encoding meaning:
  ∘ The Core Idea
  ∘ Why This Revolutionized NLP
· 3. Types of Word Embeddings: From Simple to Sophisticated
  ∘ The Three Generations
  ∘ Why This Progression Matters
· 3.1 Frequency-Based Embeddings (The Starting Point)
  ∘ 3.1.1. Bag-of-Words (BoW)
  ∘ 3.1.2. TF-IDF (Term Frequency-Inverse Document Frequency)
  ∘ 3.1.3. N-grams
· 4. Choosing Between Frequency-Based Methods: Quick Guide
  ∘ The Decision Tree
  ∘ Quick Comparison Table
  ∘ Decision Guide by Task Type
  ∘ Common Mistakes to Avoid
  ∘ Quick Checklist: Have You Chosen Right?
· 5. Final Thoughts
  ∘ What I’ve Learned
  ∘ What’s Next
· 6. Resources & Further Learning

1. Introduction: Why Computers Struggle with Language

I’ll never forget my first attempt at building a sentiment analysis model. I had a dataset of product reviews, some Python knowledge, and a lot of confidence. The plan seemed simple: count the words, feed them to a model, and boom: instant sentiment classifier.

It failed miserably.

The model couldn’t understand the relationships between words. It had no idea that “awful” and “terrible” express similar negativity, or that “awesome” and “excellent” both mean strong positivity. To the model, every word was just an isolated feature with no connection to any other word. And when someone wrote “This product isn’t bad,” the model saw “bad” and classified the review as negative, ignoring the “isn’t.”

That’s when I realised: computers don’t understand language the way we do.

We humans get meaning instantly. We know “king” relates to “queen,” that “huge” and “gigantic” mean similar things, and that “bank” means something different in “river bank” versus “bank account.” We understand context, nuance, and relationships between words.
But computers? They only understand numbers. And the obvious way to convert words to numbers, simply assigning each word a unique number, doesn’t capture any of this meaning. To a computer, “cat” as number 5 and “dog” as number 10 have no meaningful relationship, even though we know they’re both animals, both pets, and both commonly discussed together.

This is the fundamental problem that word embeddings solve: they turn words into numbers in a way that preserves meaning and relationships.

During my Gen AI course, learning about word embeddings was one of those “everything clicks” moments. Suddenly, I understood why my early NLP attempts failed and how modern systems like ChatGPT can actually understand language. Word embeddings bridge the gap between how computers process information (numbers) and how language actually works (meaning and relationships).

In this article, I’ll walk you through:

· What word embeddings actually are and why they’re crucial
· The evolution from simple frequency-based methods to sophisticated contextual embeddings
· Every major type of embedding: when to use it, its strengths, and its limitations
· How to choose the right embedding for your specific task
· Common mistakes I’ve seen (and made) and how to avoid them

Whether you’re building your first NLP model or just trying to understand how modern AI handles language, this guide will give you the foundation you need. Let’s start with the basics.

2. What Are Word Embeddings and Why Do We Need Them?

Here’s the simplest way I can explain word embeddings: they represent words as points in a multi-dimensional space, where the distance and direction between points capture meaning. That probably sounds abstract, so let me use an analogy.

The Map Analogy

Think about a map of your city. Every location has coordinates: two numbers that tell you exactly where it is.
For example:

· Coffee Shop: (40.7580, -73.9855)
· Bakery next door: (40.7582, -73.9853)
· Your home: (44.7128, -77.0060)

Here’s the interesting part: the distance between coordinates tells you something meaningful. The coffee shop at (40.7580, -73.9855) and the bakery at (40.7582, -73.9853) have very similar coordinates because they’re actually next to each other. Your home at (44.7128, -77.0060) has quite different coordinates because it’s miles away. The coordinates themselves might look like random numbers, but the relationships between them capture real-world proximity.

Word embeddings work the same way, but instead of mapping physical locations, we’re mapping words in a “meaning space”. Words with similar meanings end up close to each other. Words that appear in similar contexts are nearby. And the direction between words can even capture relationships.

Here’s a real example that blew my mind when I first saw it:

vector(“king”) - vector(“man”) + vector(“woman”) ≈ vector(“queen”)

The mathematical relationship between these word vectors actually captures the conceptual relationship between the words. The difference between “king” and “man” is similar to the difference between “queen” and “woman”: both capture the idea of royalty. Equivalently, the step from “man” to “woman” mirrors the step from “king” to “queen”, which is the gender relationship.

Why We Need Them

Let’s go back to my sentiment analysis failure. When I first tried it, I was essentially doing this:

· “good” → 1
· “bad” → 2
· “awesome” → 3
· “terrible” → 4

Just arbitrary numbers. The computer had no idea that “good” and “awesome” are similar, or that “bad” and “terrible” are similar. As far as meaning goes, every word was equally different from every other word.

Word embeddings fix this by encoding meaning:

· “good” → [0.2, 0.8, 0.1, …]
· “awesome” → [0.3, 0.9, 0.1, …]
· “bad” → [-0.2, -0.7, 0.1, …]
· “terrible” → [-0.3, -0.8, 0.0, …]

These aren’t random numbers: each dimension captures some aspect of meaning.
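To make “close” concrete, here is a minimal Python sketch that compares the toy vectors above using cosine similarity, one common way to measure how closely two vectors point in the same direction. It uses only the three components printed above; the elided components are left out rather than guessed, so treat the numbers as illustrative. The names `toy_embeddings` and `cosine_similarity` are mine, not from any library.

```python
import math

# Toy vectors: only the three components shown in the text.
# The elided components ("…") are NOT filled in, so this is illustrative only.
toy_embeddings = {
    "good": [0.2, 0.8, 0.1],
    "awesome": [0.3, 0.9, 0.1],
    "bad": [-0.2, -0.7, 0.1],
    "terrible": [-0.3, -0.8, 0.0],
}


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: +1 same direction, -1 opposite."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Compare a positive pair, a negative pair, and a mixed pair.
for first, second in [("good", "awesome"), ("bad", "terrible"), ("good", "bad")]:
    score = cosine_similarity(toy_embeddings[first], toy_embeddings[second])
    print(f"{first:>8} vs {second:<8} {score:+.3f}")
```

With these toy values, the two positive words score close to +1 with each other, as do the two negative words, while “good” versus “bad” comes out close to -1. Integer IDs like 1, 2, 3, 4 offer no comparable signal.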
Now, when the computer processes these vectors, it can see that “good” and “awesome” are similar (their vectors are close), and both are opposite to “bad” and “terrible” (notice the negative values).

The Core Idea

Word embeddings capture three crucial things:

· Semantic Similarity: Words with similar meanings have similar embeddings. “huge,” “gigantic,” and “enormous” all cluster together in the embedding space.
· Contextual Relationships: Words that appear in similar contexts are represented similarly. If “coffee” and “tea” often appear in similar sentences (“I’ll have a ___”), their embeddings will be close.
· Analogical Reasoning: The relationships between words are captured in the geometry of the space. […]
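The king and queen arithmetic from earlier can be sketched in a few lines of Python. The two-dimensional vectors below are hand-made and hypothetical (one axis loosely standing for royalty, the other for gender); real embeddings are learned from text, have far more dimensions, and are rarely this neatly interpretable. `solve_analogy` is an illustrative helper I made up for this sketch, not a library function.

```python
import math

# Hypothetical 2-D vectors invented for illustration:
# axis 0 loosely means "royalty", axis 1 loosely means "gender".
toy_vectors = {
    "king": [0.9, 0.8],
    "queen": [0.9, -0.8],
    "man": [0.1, 0.8],
    "woman": [0.1, -0.8],
    "apple": [-0.5, 0.0],
}


def cosine_similarity(u, v):
    """Cosine of the angle between u and v."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def solve_analogy(a, b, c, vectors):
    """Return the word nearest to vector(a) - vector(b) + vector(c).

    The three input words are excluded from the candidates,
    otherwise one of them would often be the nearest match.
    """
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [word for word in vectors if word not in {a, b, c}]
    return max(candidates, key=lambda word: cosine_similarity(target, vectors[word]))


print(solve_analogy("king", "man", "woman", toy_vectors))  # prints: queen
```

Subtracting “man” from “king” removes the gender component and keeps the royalty component; adding “woman” puts the opposite gender component back, which lands on the toy vector for “queen”.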