No Libraries No Shortcuts: Reasoning Models from Scratch with PyTorch — Part 1

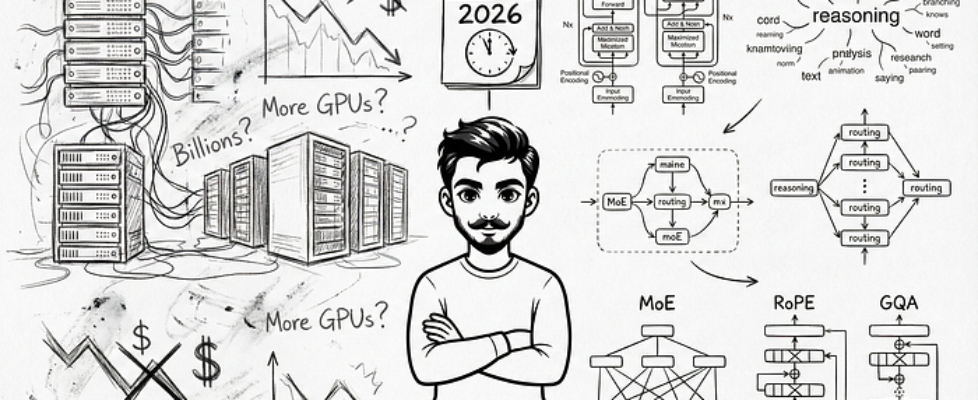

Author(s): Ashish Abraham

Originally published on Towards AI.

The no-BS guide to implementing LLMs with Mixture of Experts, RoPE, and Grouped Query Attention from scratch

The term “moment” has been both spooking and exciting AI investors this decade. For some, it meant printing money, as after the “ChatGPT moment” in late 2022, which eventually led to a stock market that survives on the Magnificent 7 AI stocks to this day. For others, 2025 opened with a colder wake-up call, one about throwing money down the drain: the “DeepSeek moment” of late January 2025, when an underdog Chinese startup built a world-class LLM in their basement, which outperformed all existing ones, and that too on a suspiciously small electricity bill. This made a joke of the billions going into mega data centers, sending the Nasdaq down 3.1%, the S&P 500 down 1.5%, and the AI darling Nvidia down 16.9%.

Image by Author

This article delves into the advancements behind reasoning models, explaining the transition from standard language modeling to complex reasoning behaviors in modern transformer architectures. It highlights the architectural changes and training methods that equip models with multi-step thinking capabilities. The piece goes on to cover essential components such as Mixture of Experts (MoE), Rotary Position Embedding (RoPE), and Grouped Query Attention (GQA), while addressing the limitations of existing models and exploring the theoretical frameworks behind these advancements.

Read the full blog for free on Medium.