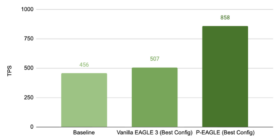

P-EAGLE: Faster LLM inference with Parallel Speculative Decoding in vLLM

EAGLE is the state-of-the-art method for speculative decoding in large language model (LLM) inference, but its autoregressive drafting creates a hidden bottleneck: the more tokens you speculate, the more sequential forward passes the drafter needs. Eventually that overhead eats into your gains. P-EAGLE removes this ceiling by generating all K draft tokens in a single forward pass, delivering up to a 1.69x speedup over vanilla EAGLE-3 on real workloads on NVIDIA B200. You can unlock this performance gain […]
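To make the bottleneck concrete, here is a toy cost model (not vLLM or P-EAGLE code) that counts drafter forward passes per speculation step. The function names and the `ms_per_pass` value are hypothetical, and the model assumes every drafter pass costs the same, which a real parallel drafter may not satisfy.

```python
def drafter_passes_autoregressive(k: int) -> int:
    """Autoregressive drafting: one sequential drafter pass per draft token."""
    return k


def drafter_passes_parallel(k: int) -> int:
    """Parallel drafting: all k draft tokens come from a single drafter pass."""
    return 1 if k > 0 else 0


def drafting_latency_ms(passes: int, ms_per_pass: float) -> float:
    """Drafting latency under the simplifying assumption of a fixed per-pass cost."""
    return passes * ms_per_pass


if __name__ == "__main__":
    ms_per_pass = 2.0  # hypothetical per-pass cost, chosen only for illustration
    for k in (1, 3, 5, 7):
        autoregressive = drafting_latency_ms(drafter_passes_autoregressive(k), ms_per_pass)
        parallel = drafting_latency_ms(drafter_passes_parallel(k), ms_per_pass)
        print(f"K={k}: autoregressive={autoregressive} ms, parallel={parallel} ms")
```

Under these assumptions, autoregressive drafting cost grows linearly with K while parallel drafting cost stays flat, which is why deeper speculation stops paying off for the former.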