Optimizing LoRA target module selection for efficient fine tuning

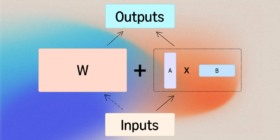

Fine-tuning a large language model (LLM) on a specific task can require updating billions of parameters, with the attendant costs in GPU resources and time. Low-rank adaptation (LoRA) is a more efficient alternative that freezes the original model weights and introduces lightweight low-rank matrices into specific model sublayers, or “modules”. These matrices (commonly referred to as “adapters”) add a low-rank update to the modules’ weights, enabling not only efficient fine tuning but also on-demand model serving, which dramatically lowers […]
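
To make the efficiency argument concrete, the following is a minimal sketch of how the number of trainable parameters depends on which modules receive adapters. It assumes the standard LoRA formulation, in which a frozen weight of shape `d_out x d_in` gains two trainable matrices of shapes `d_out x r` and `r x d_in`. The module names, layer shapes, rank, and layer count are hypothetical values chosen for illustration, not figures from this article.

```python
# Hypothetical per-layer weight shapes (d_out, d_in) for illustration only;
# they are not taken from any specific model discussed in this article.
MODULE_SHAPES = {
    "q_proj": (4096, 4096),
    "k_proj": (4096, 4096),
    "v_proj": (4096, 4096),
    "o_proj": (4096, 4096),
}


def full_params(shape):
    """Parameters updated when the whole weight matrix is fine-tuned."""
    d_out, d_in = shape
    return d_out * d_in


def lora_params(shape, rank):
    """Parameters in one adapter: B is (d_out x rank), A is (rank x d_in).

    The original weight stays frozen, so only A and B are trainable.
    """
    d_out, d_in = shape
    return rank * (d_out + d_in)


def trainable_params(target_modules, rank, num_layers):
    """Total adapter parameters when every layer adapts the same modules."""
    per_layer = sum(lora_params(MODULE_SHAPES[m], rank) for m in target_modules)
    return per_layer * num_layers


def full_finetune_params(target_modules, num_layers):
    """Parameters updated if the same modules were fully fine-tuned."""
    per_layer = sum(full_params(MODULE_SHAPES[m]) for m in target_modules)
    return per_layer * num_layers


if __name__ == "__main__":
    rank, num_layers = 8, 32  # assumed values, for illustration only
    for targets in (["q_proj", "v_proj"], list(MODULE_SHAPES)):
        lora = trainable_params(targets, rank, num_layers)
        full = full_finetune_params(targets, num_layers)
        print(f"{targets}: {lora:,} adapter params vs {full:,} full "
              f"({100 * lora / full:.2f}%)")
```

Under these assumptions, adding a module to the target list grows the adapter size linearly, which is why the choice of target modules is a direct lever on training and serving cost.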