How AI Is Changing the Needs and Values of Finance Leaders and Their Teams – SPONSOR CONTENT FROM DELOITTE

Sponsor content from Deloitte.

arXiv:2602.23408v1 Announce Type: new Abstract: The specification of the action space plays a pivotal role in imitation-based robotic manipulation policy learning, fundamentally shaping the optimization landscape of policy learning. While recent advances have focused heavily on scaling training data and model capacity, the choice of action space remains guided by ad-hoc heuristics or legacy designs, leading to an ambiguous understanding of robotic policy design philosophies. To address this ambiguity, we conducted a large-scale and systematic empirical study, confirming […]

arXiv:2602.18583v1 Announce Type: new Abstract: Real-time guardrails require evaluation that is accurate, cheap, and fast – yet today’s default, LLM-as-a-judge (LLMAJ), is slow, expensive, and operationally non-deterministic due to multi-token generation. We present Luna-2, a novel architecture that leverages decoder-only small language models (SLMs) as a deterministic evaluation model to reliably compute complex task-specific LLMAJ metrics (e.g. toxicity, hallucination, tool selection quality) with accuracy on par with or higher than LLMAJ using frontier LLMs while drastically reducing […]

arXiv:2601.12222v1 Announce Type: new Abstract: Music generative artificial intelligence (AI) is rapidly expanding music content, necessitating automated song aesthetics evaluation. However, existing studies largely focus on speech, audio or singing quality, leaving song aesthetics underexplored. Moreover, conventional approaches often predict a precise Mean Opinion Score (MOS) value directly, which struggles to capture the nuances of human perception in song aesthetics evaluation. This paper proposes a song-oriented aesthetics evaluation framework, featuring two novel modules: 1) Multi-Stem Attention Fusion (MSAF) […]

arXiv:2602.02623v1 Announce Type: new Abstract: Causal artificial intelligence aims to enhance explainability, trustworthiness, and robustness in AI by leveraging structural causal models (SCMs). In this pursuit, recent advances formalize network sheaves and cosheaves of causal knowledge. Pushing in the same direction, we tackle the learning of consistent causal abstraction network (CAN), a sheaf-theoretic framework where (i) SCMs are Gaussian, (ii) restriction maps are transposes of constructive linear causal abstractions (CAs) adhering to the semantic embedding principle, and (iii) […]

arXiv:2304.04724v3 Announce Type: replace-cross Abstract: We analyze the mixing time of Metropolized Hamiltonian Monte Carlo (HMC) with the leapfrog integrator to sample from a distribution on $\mathbb{R}^d$ whose log-density is smooth, has Lipschitz Hessian in Frobenius norm and satisfies isoperimetry. We bound the gradient complexity to reach $\epsilon$ error in total variation distance from a warm start by $\tilde O(d^{1/4}\text{polylog}(1/\epsilon))$ and demonstrate the benefit of choosing the number of leapfrog steps to be larger than 1. To surpass […]

Why the seeds of the next civilization are already growing, and how to see them. What if the chaos around us isn’t collapse, but transformation? Michel Bauwens has spent decades mapping the edges of change. From peer-to-peer networks to the commons, from medieval guilds to distributed autonomous organizations, he’s been tracking something most people miss: the seeds of a new civilization, already growing underneath the noise. Some people might call Bauwens a unicorn, because he does not fit […]

Reinforcement learning (RL) is increasingly used to personalize instruction in intelligent tutoring systems, yet the field lacks a formal framework for defining and evaluating pedagogical safety. We introduce a four-layer model of pedagogical safety for educational RL comprising structural, progress, behavioral, and alignment safety and propose the Reward Hacking Severity Index (RHSI) to quantify misalignment between proxy rewards and genuine learning. We evaluate the framework in a controlled simulation of an AI tutoring environment with 120 sessions across […]

For ethical and safe AI, machine unlearning rises as a critical topic aiming to protect sensitive, private, and copyrighted knowledge from misuse. To achieve this goal, it is common to conduct gradient ascent (GA) to reverse the training on undesired data. However, such a reversal is prone to catastrophic collapse, which leads to serious performance degradation in general tasks. As a solution, we propose model extrapolation as an alternative to GA, which reaches the counterpart direction in the […]

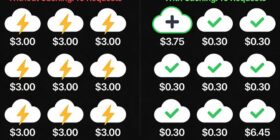

Author(s): Nikhil. Originally published on Towards AI. LLM API Token Caching: The 90% Cost Reduction Feature When Building AI Applications. If you’ve used Claude, GPT-4, or any modern LLM API, you’ve been spending far more than necessary on token processing if you are not caching the system prompt or any other prompt that is static and doesn’t change between API calls. Cost comparison: 10x savings on cached token reads. Token caching provides substantial cost benefits by allowing reuse of […]