Beyond Gradient Descent: A Practical Guide to SGD, Momentum, RMSProp, and Adam (with Worked…

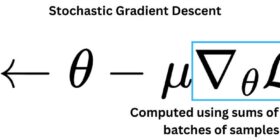

Beyond Gradient Descent: A Practical Guide to SGD, Momentum, RMSProp, and Adam (with Worked Examples) When we train machine learning models, we rarely use “vanilla” gradient descent. In practice, we almost always reach for improved variants that converge faster, behave better with noisy gradients, and handle tricky loss landscapes more reliably. Modern training commonly relies on stochastic gradient descent (mini-batch SGD), plus optimizers like Momentum, RMSProp, and Adam. 1) The baseline: Gradient Descent vs. Stochastic (Mini-batch) Gradient Descent 1.1 What […]