Exciting Changes Are Coming to the TDS Author Payment Program

Authors can now benefit from updated earning tiers and a higher article cap. The post Exciting Changes Are Coming to the TDS Author Payment Program appeared first on Towards Data Science.

Master path operations, Pydantic models, dependency injection, and automatic documentation. The post Code Less, Ship Faster: Building APIs with FastAPI appeared first on Towards Data Science.

February 2026: exchange with others, documentation, and MLOps. The post The Machine Learning Lessons I’ve Learned This Month appeared first on Towards Data Science.

A PyTorch implementation of the YOLOv3 architecture from scratch. The post YOLOv3 Paper Walkthrough: Even Better, But Not That Much appeared first on Towards Data Science.

As OpenAI transitions from a wildly successful consumer startup into a piece of national security infrastructure, the company seems unequipped to manage its new responsibilities.

The idea of “Privacy-Preserving AI” usually stops at local inference. You run a model on a phone, and the data stays there. But things get complicated when you need to prove to a third party that the output was actually generated by a specific, untampered model without revealing the input data. I’ve been looking into the recently open-sourced Remainder prover (the system Tools for Humanity uses for World). From an ML engineering perspective, the choice of a GKR […]

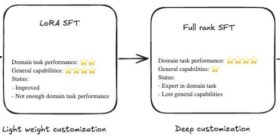

Large language models (LLMs) perform well on general tasks but struggle with specialized work that requires understanding proprietary data, internal processes, and industry-specific terminology. Supervised fine-tuning (SFT) adapts LLMs to these organizational contexts. SFT can be implemented through two distinct methodologies: Parameter-Efficient Fine-Tuning (PEFT), which updates only a subset of model parameters, offering faster training and lower computational costs while maintaining reasonable performance improvements; and full-rank SFT, which updates all model parameters rather than a subset and incorporates more […]

Customer service teams face a persistent challenge. Existing chat-based assistants frustrate users with rigid responses, while direct large language model (LLM) implementations lack the structure needed for reliable business operations. When customers need help with order inquiries, cancellations, or status updates, traditional approaches either fail to understand natural language or can’t maintain context across multistep conversations. This post explores how to build an intelligent conversational agent using Amazon Bedrock, LangGraph, and managed MLflow on Amazon SageMaker AI. Solution […]

Are you struggling to balance generative AI safety with accuracy, performance, and costs? Many organizations face this challenge when deploying generative AI applications to production. A guardrail that’s too strict blocks legitimate user requests, which frustrates customers. One that’s too lenient exposes your application to harmful content, prompt attacks, or unintended data exposure. Finding the right balance requires more than just enabling features; it demands thoughtful configuration and nearly continuous refinement. Amazon Bedrock Guardrails gives you powerful tools for […]

Following controversies surrounding ChatGPT, many users are ditching the AI chatbot for Claude instead. Here’s how to make the switch.